[Guest Diary] New Malware Libraries means New Signatures

Introduction

The SHA-256 a8460f446be540410004b1a8db4083773fa46f7fe76fa84219c93daa1669f8f2 is one of the most-observed Outlaw / Shellbot artifacts on the public internet. VirusTotal first ingested it on 5 July 2018 [2]. It is the SHA-256 of the authorized_keys file written by the campaign whose persistence comment string is mdrfckr, a campaign documented in handler diaries, vendor reports, and independent honeypot research for nearly seven years.

This diary does not announce a new campaign. The file hash, the public key, the mdrfckr comment string, the chattr -ia .ssh defensive disarm, the chpasswd account hijack, and the /tmp/secure.sh competitor cleanup are all well-described in prior reporting [3][4][5][6][7]. What this diary does add is one new data point in an existing lineage: between 14 and 21 April 2026, my DShield sensor [8] observed the mdrfckr campaign using a third libssh client version that has not, to my knowledge, been published as part of this campaign’s hassh chronology. The botnet’s authorized_keys file is unchanged across four years. Its SSH client library is on its third documented major version. Detection rules pinned to the older hasshes will miss the current generation.

The point of this diary is to put the prior reports side by side with my April 2026 observation, document the new hassh, and offer detection-engineering guidance for handlers maintaining mdrfckr-aware rules.

What is already known

I want to be careful to credit the prior work this diary builds on, because the new contribution is small relative to it.

The mdrfckr persistence key was first associated with the Outlaw / Dota family by Trend Micro in 2018 [3], with subsequent updates in 2019 and follow-up reporting from Anomali, Yoroi [9], Juniper [10], CounterCraft [11], Cybereason, and Kaspersky. The recon command sequence and the competitor-cleanup playbook are described across that body of work. None of the file or behaviour signatures discussed in this diary are novel.

In late 2022 and early 2023, the port22.dk blog [4][7] published a two-part deep dive on the campaign. Part one (data from October–November 2022) observed 12,913 unique IPs writing the mdrfckr key from a network of 10 honeypots. Crucially, the post introduced hassh-based clustering as a defender’s tool: 99.1% of the observed mdrfckr-key writes shared the hassh 51cba57125523ce4b9db67714a90bf6e, which corresponds to the SSH client banner SSH-2.0-libssh-0.6.0 / SSH-2.0-libssh-0.6.3. Part two (data from December 2022 onward) documented the campaign migrating to a second hassh f555226df1963d1d3c09daf865abdc9a, corresponding to SSH-2.0-libssh_0.9.5 / SSH-2.0-libssh_0.9.6, with ~30,000 unique IPs across the new fingerprint and a 94.5% confidence link. Part two also documented two new related command variants: chattr -ia .ssh; lockr -ia .ssh as a separate command, and lockr -ia .ssh run on its own, executed alongside the original key-write command.

In May 2023, a SANS ISC diary by Jesse La Grew [5] presented two example sessions writing the same SHA-256, captured via a cowrie-log enrichment script. One session originated from a DigitalOcean datacentre IP; the other from a VPN-fronted Tencent IP. Both sessions executed the post-December-2022 split-command variant.

In May / June 2023, Guy Bruneau’s monthly DShield diary [6] noted the same key-write playbook in honeypot data and attributed it explicitly to the Outlaw group via the original Trend Micro reporting.

That is the public chronology this observation extends.

What the April 2026 sensor saw

Between 2026-04-14 01:23:41 UTC and 2026-04-21 02:22:56 UTC, my DShield sensor logged 24 unique source IPs writing the SHA-256 a8460f446be540410004b1a8db4083773fa46f7fe76fa84219c93daa1669f8f2 to /root/.ssh/authorized_keys (and to other compromised account paths). The cluster wrote 229 authorized_keys modifications across 1,230 SSH sessions and executed 4,133 post-authentication commands.

The peak burst occurred on 19 April 2026: 20 of the 24 IPs first connected to the sensor between 06:05:19 UTC and 06:07:30 UTC, a 131-second window. The remaining four IPs appeared on neighbouring days but executed the same playbook with the same key.

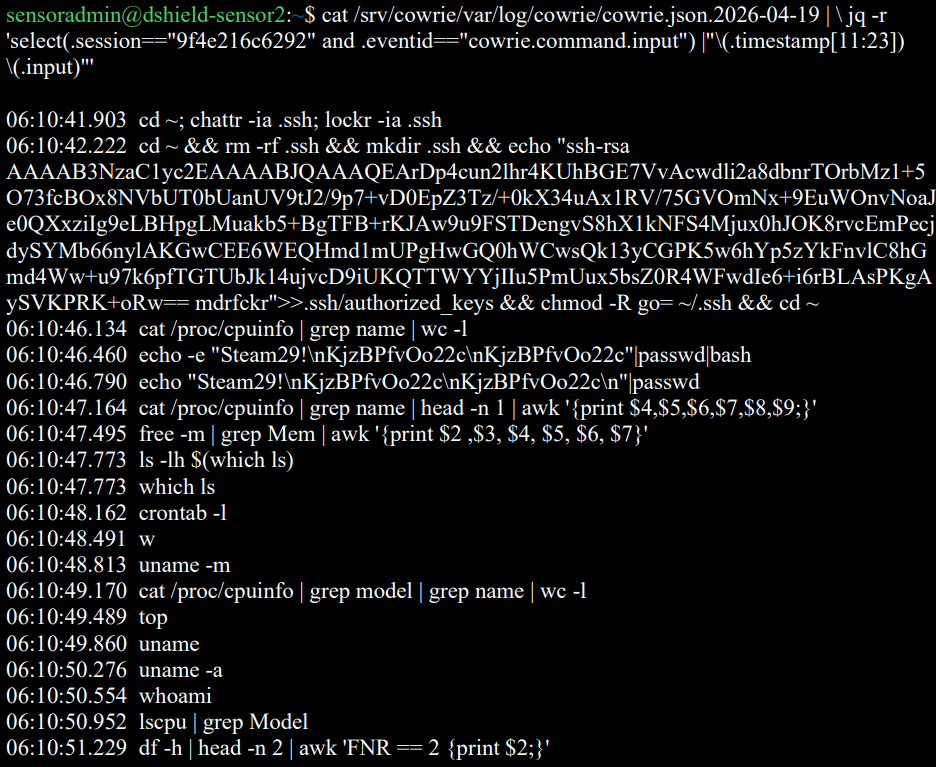

The defensive-disarm and key-write command observed across every successful session is the post-December-2022 split variant documented by port22 part two:

Figure 1: Cowrie session capture of one cluster IP executing the post-December-2022 split variant (defensive disarm, key write, recon).

The new data point is the SSH client.

The new hassh: libssh 0.11.x

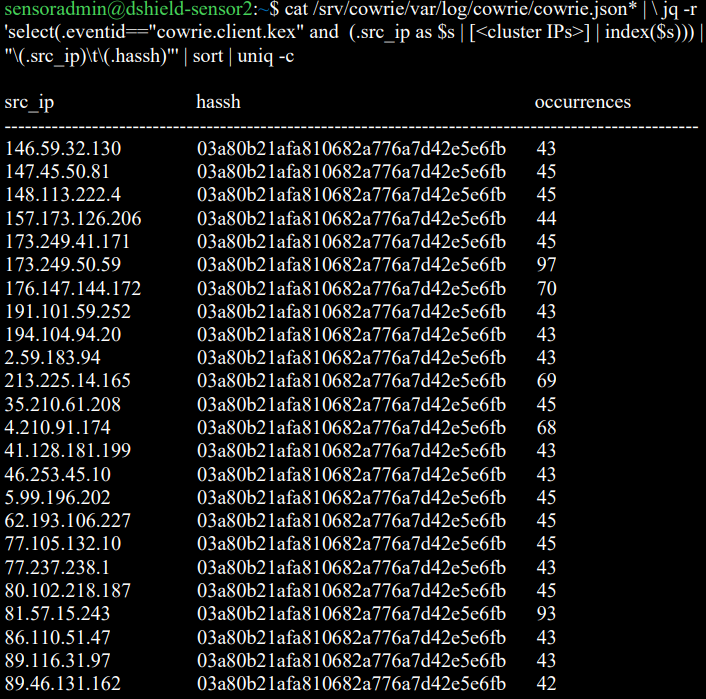

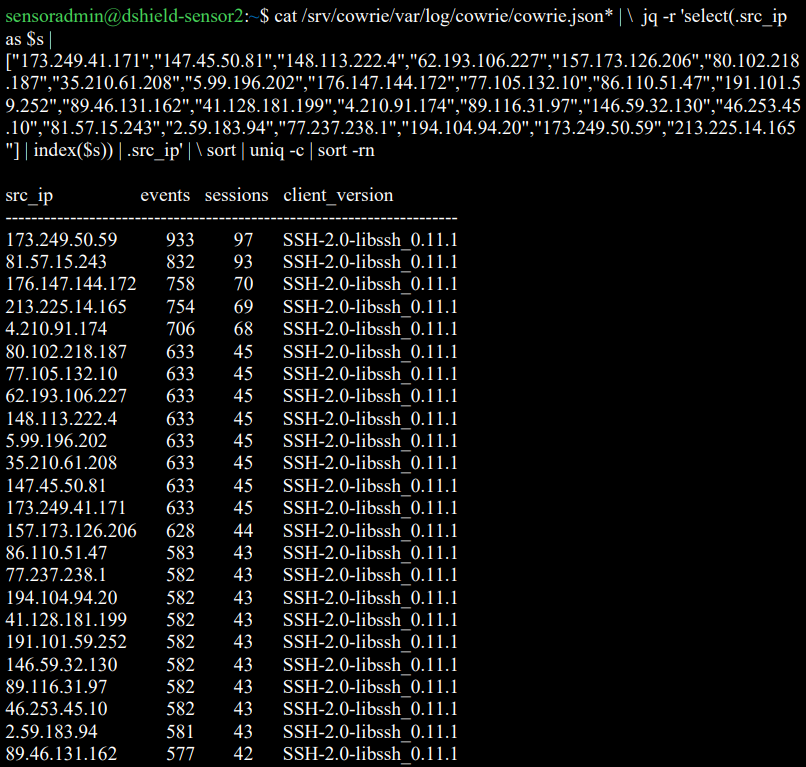

Every one of the 24 IPs in the April 2026 cluster advertised the SSH client banner SSH-2.0-libssh_0.11.1 and produced the hassh fingerprint 03a80b21afa810682a776a7d42e5e6fb.

Figure 2: Per-IP hassh fingerprint listing - all 24 cluster IPs share 03a80b21afa810682a776a7d42e5e6fb.


Figure 3: Per-IP SSH client banner listing - SSH-2.0-libssh_0.11.1 across the cluster.

This hassh does not match the hashes documented in port22 parts one and two, nor in the May 2023 ISC diary.

| Reporting period | Hassh fingerprint | Client banner | Source |
|---|---|---|---|
| Oct–Nov 2022 | 51cba57125523ce4b9db67714a90bf6e | libssh-0.6.0 / libssh-0.6.3 | port22 part one [4] |
| Dec 2022 → 2023 | f555226df1963d1d3c09daf865abdc9a | libssh_0.9.5 / libssh_0.9.6 | port22 part two [7] |
| Apr 2026 | 03a80b21afa810682a776a7d42e5e6fb | libssh_0.11.1 | This sensor |

A hassh is an MD5 hash of the SSH client’s advertised key-exchange, encryption, MAC, and compression algorithm lists [12]. Different libssh release series ship with different default algorithm preferences, so each new libssh version a campaign adopts can produce a new hassh. The 2026 hassh 03a80b21afa810682a776a7d42e5e6fb is the third documented entry in this campaign’s libssh version walk, separated from port22’s last published value by approximately three years and by the jump from the 0.9 series to the 0.11 series (0.9 → 0.10 → 0.11).
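To make the mechanics concrete, here is a minimal sketch of how a hassh is derived, assuming the commonly published construction (MD5 over the key-exchange, encryption, MAC, and compression lists joined with semicolons). The algorithm lists below are hypothetical and are not the real defaults of any libssh release; they only show that a change in the advertised lists changes the fingerprint.

```python
import hashlib


def hassh(kex, enc, mac, comp):
    """Return the hassh for four client-advertised algorithm lists.

    Each list is comma-joined in the order the client sent it, the four
    strings are joined with semicolons, and the result is MD5-hashed.
    """
    material = ";".join(",".join(algs) for algs in (kex, enc, mac, comp))
    return hashlib.md5(material.encode("ascii")).hexdigest()


# Hypothetical algorithm lists for illustration only; these are NOT the
# actual defaults of any libssh release.
older_client = {
    "kex": ["curve25519-sha256", "diffie-hellman-group14-sha1"],
    "enc": ["aes256-ctr", "aes128-ctr"],
    "mac": ["hmac-sha2-256", "hmac-sha1"],
    "comp": ["none", "zlib"],
}
# Same hypothetical client after a library upgrade that drops SHA-1 options.
newer_client = {
    "kex": ["curve25519-sha256"],
    "enc": ["aes256-ctr", "aes128-ctr"],
    "mac": ["hmac-sha2-256"],
    "comp": ["none", "zlib"],
}

old_fp = hassh(**older_client)
new_fp = hassh(**newer_client)
print(old_fp)
print(new_fp)
```

The two printed values differ even though the tooling behind the client is otherwise identical, which is why a rule pinned to one value goes quiet after a library upgrade.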

I do not have a baseline of how prevalent this hassh is across the full DShield sensor population - that is the question I would most like other handlers and DShield operators to help answer. On my single sensor, this hassh accounted for 3,473 SSH log lines across the eight-day window, making it the most active SSH attacker-tooling fingerprint observed during the period.

The 24-IP burst: small confirmation of an existing observation

Twenty of the 24 cluster IPs first connected within a 131-second window. This is consistent with the coordination behaviour documented at much larger scale by port22, and does not represent a new claim. I mention it only for completeness, and because it has one practical implication for detection: per-source-IP rate limits (fail2ban, sshguard) will not trigger on this pattern because each IP performs only ~10 login attempts. Detection rules useful against this campaign should aggregate by target account rather than by source IP - ten distinct IPs attempting steam:Steam29! against the same host within five minutes is a stronger signature than any individual IP’s behaviour.
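The aggregation idea can be sketched as follows. This is a rough illustration and not a production rule: the input format (a time-sorted list of tuples), the function name, and the thresholds are my own assumptions, and the sample source addresses come from the 192.0.2.0/24 documentation range rather than from the sensor.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

WINDOW = timedelta(minutes=5)   # assumed look-back window
MIN_DISTINCT_IPS = 10           # assumed alert threshold


def distributed_guessing(attempts, window=WINDOW, min_ips=MIN_DISTINCT_IPS):
    """Flag credentials tried by many distinct IPs within a sliding window.

    attempts: iterable of (timestamp, src_ip, username, password) tuples for
    one target host, sorted by timestamp.
    Returns a list of ((username, password), timestamp, distinct_ip_count).
    """
    recent = defaultdict(deque)  # credential -> deque of (timestamp, src_ip)
    alerts = []
    for ts, ip, user, password in attempts:
        queue = recent[(user, password)]
        queue.append((ts, ip))
        # Drop attempts that have fallen out of the window.
        while ts - queue[0][0] > window:
            queue.popleft()
        distinct = {src for _, src in queue}
        if len(distinct) >= min_ips:
            alerts.append(((user, password), ts, len(distinct)))
    return alerts


start = datetime(2026, 4, 19, 6, 5, 19)
# Ten hypothetical sources, one attempt each, ten seconds apart.
burst = [
    (start + timedelta(seconds=10 * i), f"192.0.2.{i + 1}", "steam", "Steam29!")
    for i in range(10)
]
# One hypothetical source hammering the same credential ten times.
single = [
    (start + timedelta(seconds=10 * i), "192.0.2.50", "steam", "Steam29!")
    for i in range(10)
]

burst_alerts = distributed_guessing(burst)
single_alerts = distributed_guessing(single)
print(burst_alerts)
print(single_alerts)
```

The distributed burst raises an alert once the tenth distinct source appears, while the single noisy source never does. That is the opposite of what a per-source-IP rate limit sees.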

The cluster IPs and the credential dictionary are listed in the indicators section. None of the credential pairs are new: steam:Steam29!, postgres:q1, dev:dev5, sammy:sammy26, root:AAAaaa111, root:root000@, sysadmin:test123, test1:passwd, tester:testerpass, sammy:12345. This is the existing Outlaw target list.

Why this matters for defenders

The detection-engineering implication of the libssh version walk is straightforward: hassh-based detection rules written in 2022 or 2023 against 51cba57125523ce4b9db67714a90bf6e or f555226df1963d1d3c09daf865abdc9a will silently miss the 2026 generation of the same campaign. The SHA-256 of the authorized_keys file remains the most reliable single indicator (it has not changed in four years), but operators relying on hassh enrichment as a leading indicator - for example, alerting on hassh values before a successful authentication occurs - should add 03a80b21afa810682a776a7d42e5e6fb to their watch lists.

More broadly, the four-year libssh version walk suggests the campaign operator (or operators - the persistence model has always been consistent with shared infrastructure rather than self-propagation in the strict sense) keeps the targeting infrastructure stable while letting the underlying client library age forward. A defender writing a detection rule against this campaign should expect the hassh to change again on a roughly multi-year cadence as libssh ships new defaults, and should pin alerting to the SHA-256, the public key blob, the mdrfckr comment string, and the recon command sequence - none of which have changed since 2018 - rather than to any single hassh value.
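One way to express that priority in code is sketched below, under stated assumptions: the session record is a plain dictionary whose field names (file_sha256, commands, hassh) are hypothetical and would need to be mapped to whatever your honeypot or log pipeline actually emits. The indicator values are the ones listed in this diary.

```python
KEY_SHA256 = "a8460f446be540410004b1a8db4083773fa46f7fe76fa84219c93daa1669f8f2"
KEY_COMMENT = "mdrfckr"
# Hassh values documented so far; expected to grow over time.
KNOWN_HASSHES = {
    "51cba57125523ce4b9db67714a90bf6e": "libssh 0.6.x",
    "f555226df1963d1d3c09daf865abdc9a": "libssh 0.9.x",
    "03a80b21afa810682a776a7d42e5e6fb": "libssh 0.11.x",
}


def match_indicators(session):
    """Return the names of the indicators a session record matches.

    session: dict with hypothetical keys 'file_sha256', 'commands', 'hassh'.
    """
    hits = []
    if session.get("file_sha256") == KEY_SHA256:
        hits.append("authorized_keys sha256")
    if any(KEY_COMMENT in cmd for cmd in session.get("commands", [])):
        hits.append("key comment string")
    if session.get("hassh") in KNOWN_HASSHES:
        hits.append("hassh " + KNOWN_HASSHES[session["hassh"]])
    return hits


def is_confirmed(session):
    """Treat the stable indicators as decisive and the hassh as supporting."""
    return any(not hit.startswith("hassh") for hit in match_indicators(session))


# A future client generation: unknown hassh, but the same key file.
future_session = {
    "hassh": "00000000000000000000000000000000",
    "file_sha256": KEY_SHA256,
    "commands": ["chattr -ia .ssh"],
}
# A pre-authentication sighting: known hassh only, nothing written yet.
early_session = {"hassh": "03a80b21afa810682a776a7d42e5e6fb", "commands": []}

print(match_indicators(future_session), is_confirmed(future_session))
print(match_indicators(early_session), is_confirmed(early_session))
```

With this split, a new hassh does not blind the rule, and a known hassh on its own still surfaces as an early, lower-confidence lead.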

What I am not claiming

The 24-IP April 2026 cluster is much smaller than the populations port22 worked with. I cannot meaningfully extend port22’s hassh-confidence statistics from one sensor’s eight-day window. The 99.1% / 94.5% figures published in 2022 and 2023 should not be extrapolated to the 2026 hassh from this data alone - that calculation requires a multi-sensor population study, which is exactly the kind of analysis ISC handlers and the DShield operator community are positioned to do better than any of my sensors.

Indicators

- authorized_keys SHA-256 (unchanged since 2018): a8460f446be540410004b1a8db4083773fa46f7fe76fa84219c93daa1669f8f2

- Public key comment string: mdrfckr

- April 2026 hassh: 03a80b21afa810682a776a7d42e5e6fb

- April 2026 SSH client banner: SSH-2.0-libssh_0.11.1

- Burst window: 19 April 2026, 06:05:19 → 06:07:30 UTC

- Credential dictionary: steam:Steam29!, postgres:q1, dev:dev5, sammy:sammy26, root:AAAaaa111, root:root000@, sysadmin:test123, test1:passwd, tester:testerpass, sammy:12345

- 24 source IPs from the April 2026 cluster (Appendix A)

Conclusion

The mdrfckr campaign is older than many of the SSH honeypots currently watching it. Its authorized_keys file is approaching its eighth anniversary on VirusTotal and has not been rotated. Its target dictionary, recon sequence, and competitor-cleanup playbook have all remained stable across the four years that public researchers have been tracking the libssh version walk. What changes is the client.

The April 2026 hassh 03a80b21afa810682a776a7d42e5e6fb joins 51cba57125523ce4b9db67714a90bf6e and f555226df1963d1d3c09daf865abdc9a as the third documented entry in this campaign’s lineage. Detection rules pinned to either earlier hassh will miss it. I would be very interested to hear from any other DShield operator or ISC handler who has independently observed the 0.11.x hassh writing the SHA-256 above - particularly with population data that would let the community update the hassh-to-mdrfckr confidence figures published by port22 in 2022 and 2023.

Acknowledgments

Drafting assistance from Claude (Anthropic) [13]. All log review, the hassh and SHA-256 verification, the credential and IP enumeration, and the comparison against prior reporting were done from the sensor’s own logs and the cited public sources.

References

[1] https://www.sans.edu/cyber-security-programs/bachelors-degree/

[3] Trend Micro, https://www.trendmicro.com/en/research/20/b/outlaw-updates-kit-to-kill-older-miner-versions-targets-more-systems.html

[4] port22.dk, “mdrfckrs – part one,” March 2023. https://blog.port22.dk/mdrfckrs-part-one/

[5] Jesse La Grew, “More Data Enrichment for Cowrie Logs,” SANS Internet Storm Center, 24 May 2023. https://isc.sans.edu/diary/29878

[6] Guy Bruneau, “DShield Honeypot Activity for May 2023,” SANS Internet Storm Center, 11 June 2023. https://isc.sans.edu/diary/29932

[7] port22.dk, “mdrfckrs – part two,” July 2023. https://blog.port22.dk/mdrfckrs-part-two/

[8] https://isc.sans.edu/honeypot.html

[9] Yoroi, “Outlaw is Back: A New Crypto-Botnet Targets European Organizations.” https://yoroi.company/research/outlaw-is-back-a-new-crypto-botnet-targets-european-organizations/

[10] Juniper Threat Research, “Dota3: Is your Internet of Things device moonlighting?” https://blogs.juniper.net/en-us/threat-research/dota3-is-your-internet-of-things-device-moonlighting

[11] CounterCraft, “Dota3 malware again and again.” https://www.countercraftsec.com/blog/dota3-malware-again-and-again/

Appendix A: Source IPs (24)

| 2.59.183.94 | 4.210.91.174 | 5.99.196.202 | 35.210.61.208 |

| 41.128.181.199 | 46.253.45.10 | 62.193.106.227 | 77.105.132.10 |

| 77.237.238.1 | 80.102.218.187 | 81.57.15.243 | 86.110.51.47 |

| 89.46.131.162 | 89.116.31.97 | 146.59.32.130 | 147.45.50.81 |

| 148.113.222.4 | 157.173.126.206 | 173.249.41.171 | 173.249.50.59 |

| 176.147.144.172 | 191.101.59.252 | 194.104.94.20 | 213.225.14.165 |

Simple bypass of the link preview function in Outlook Junk folder

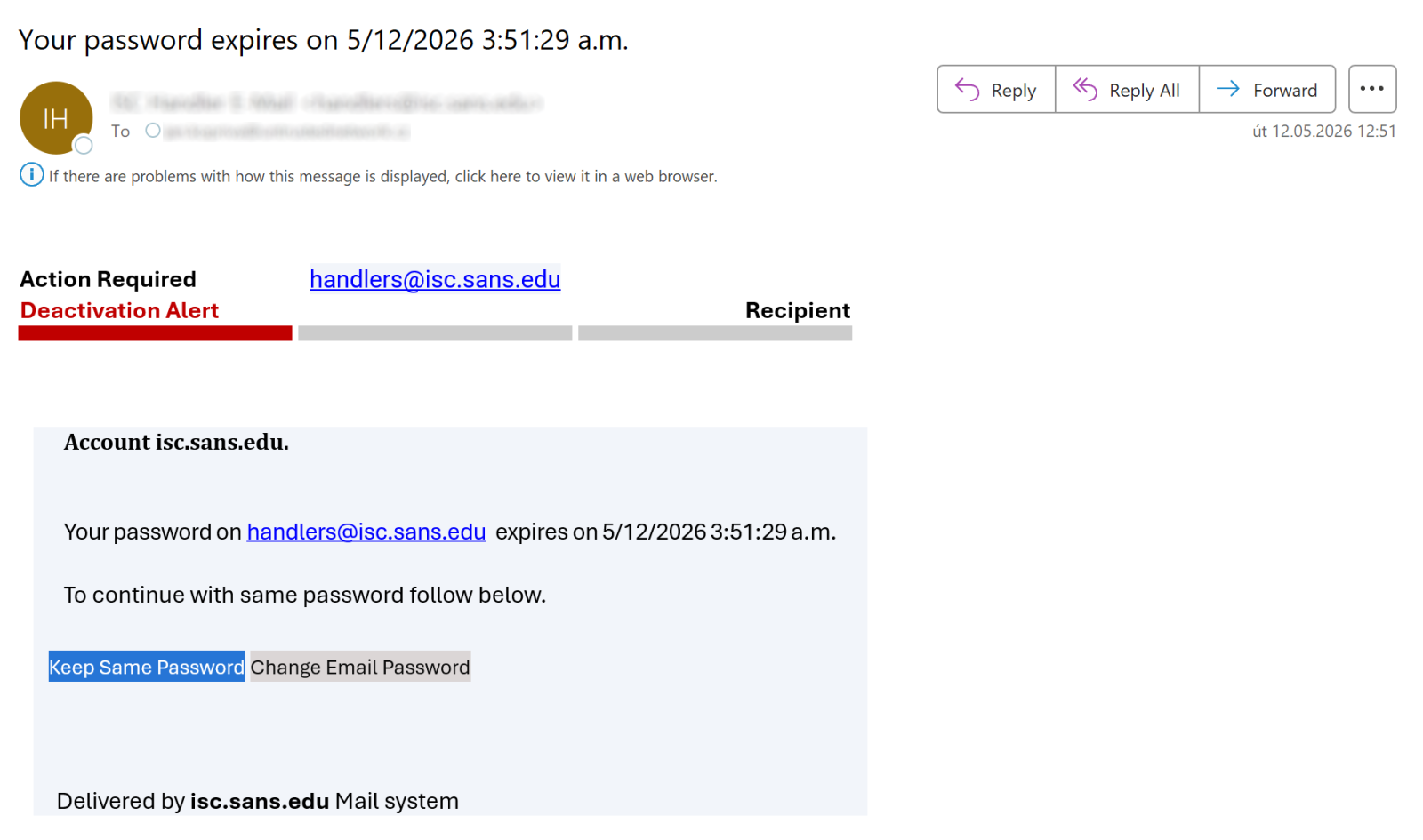

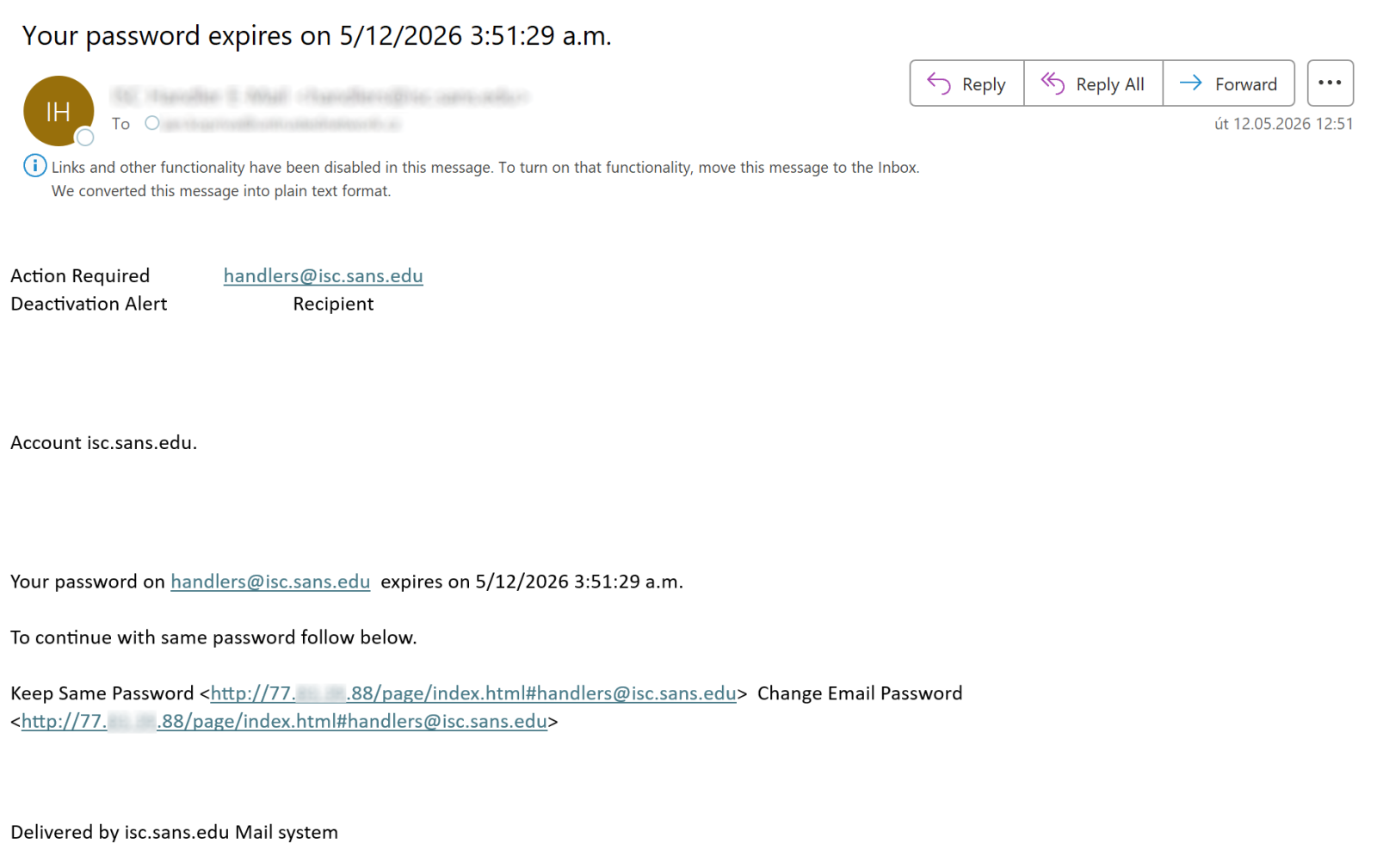

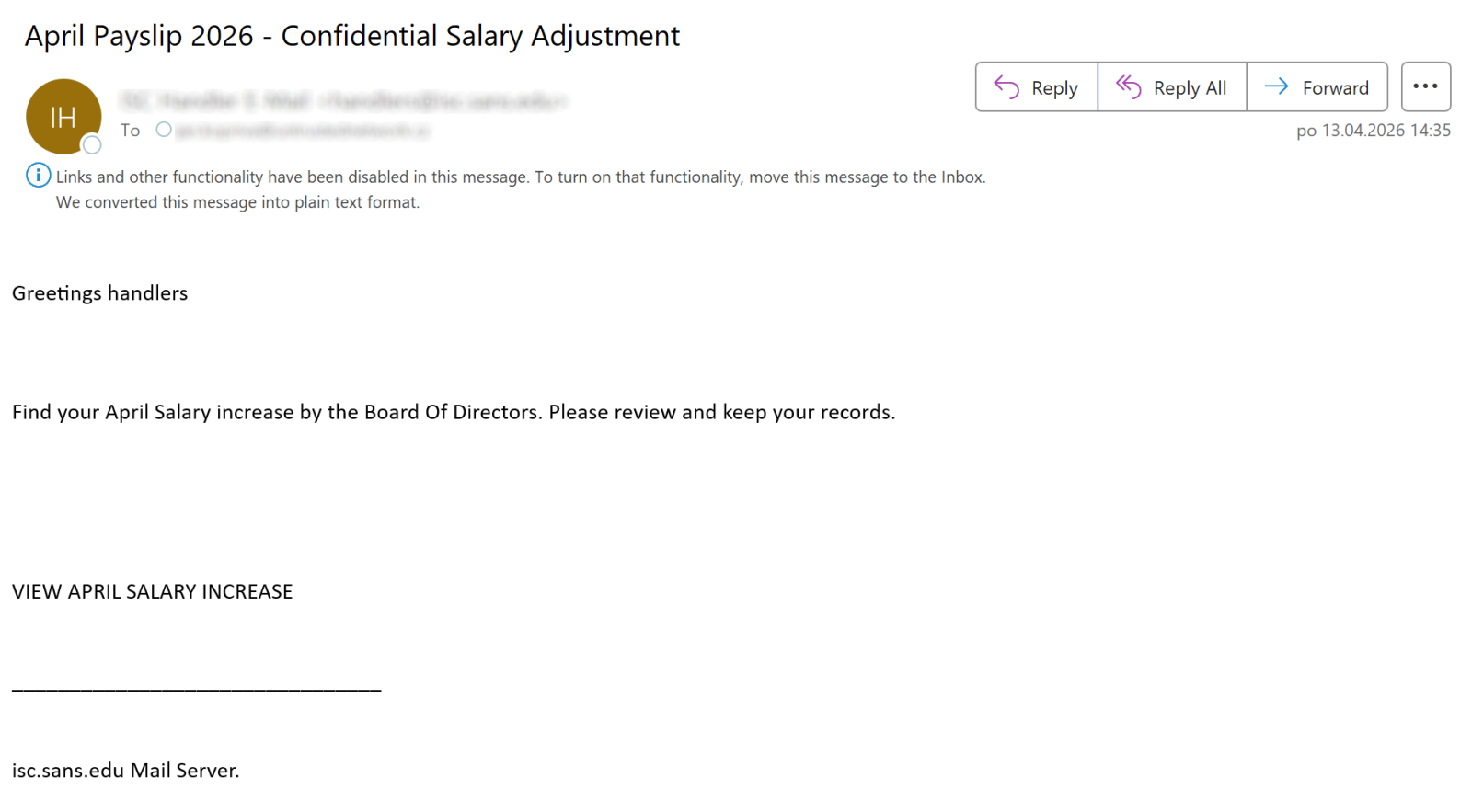

Besides serving as a place where Microsoft Outlook places suspected spam, the Outlook Junk folder has one additional function that can be quite helpful when it comes to identifying malicious messages. Any e-mail placed in this folder is stripped of all formatting, and destinations of all links included in the message become visible to the user, as you can see in the following images which show the same e-mail when it is placed in the inbox, and when it is placed in the Junk folder.

Having access to this functionality is quite advantageous, since it helps easily and safely inspect where a link included in an e-mail might lead. Moving suspicious messages to the Junk folder and viewing them there is correspondingly one of the tips I often give during security awareness training sessions…

Although I will continue to do so, I will now have to add a caveat based on an experience with a phishing message I found in my Junk folder in April.

Before I opened the message in question, I was under the impression that the link preview mechanism works without issues for any HREF included in an e-mail, and that it always shows the corresponding URL. That is why I was surprised when the Outlook preview pane showed me no links for the following message, even though the “VIEW APRIL SALARY INCREASE” text is obviously supposed to represent a link to some URL.

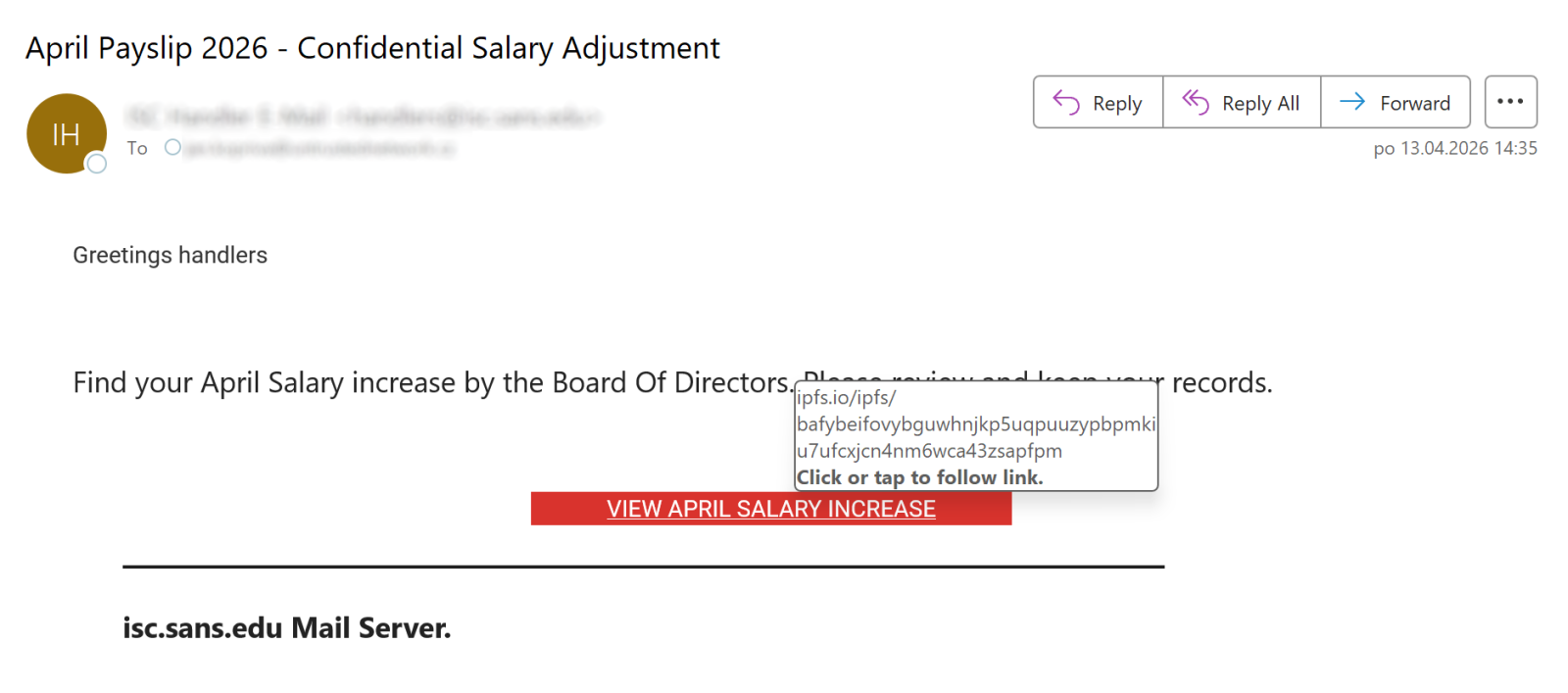

Once I moved the message to another folder, it turned out my assumption was correct, as the text really was associated with a link, as you can see…

So, how did this link manage to “bypass” the Junk folder preview mechanism?

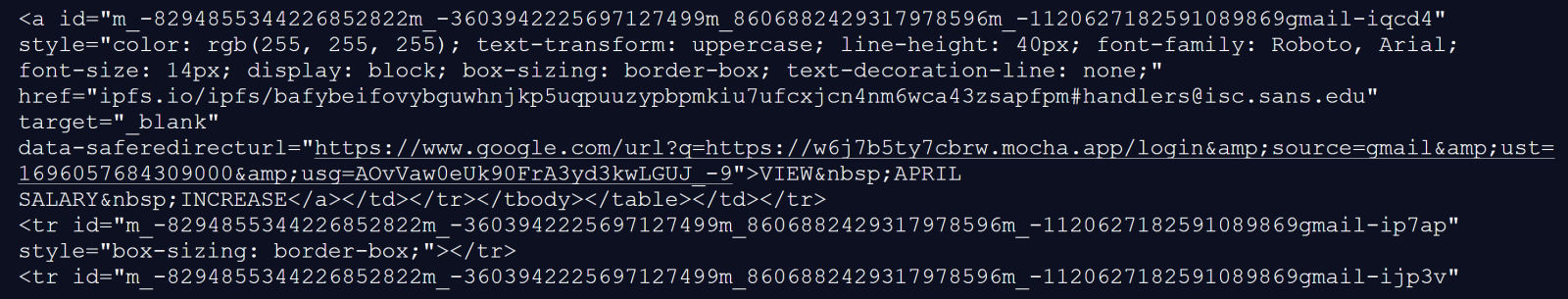

At first, I thought that the behavior might be caused by the relevant A tag containing another embedded tag “inside it”, which can lead to quite unexpected results in Outlook, such as it modifying where an HREF points to without any input from the user.[1]

Nevertheless, after looking at the HTML code – which seems reasonably normal, as you may see – and a little testing, it became obvious that the truth was much more straightforward.

The reason the link was not displayed by Outlook when the message was placed in the Junk folder was that the HREF target didn’t contain a valid URI: the scheme (protocol) part was missing, with only the path segment present. The link preview mechanism therefore didn’t parse it as a valid link and didn’t show it.

On one hand, this is understandable, since the HREF really didn’t contain a valid URL/URI as per RFC 3986[2]. However, since the link is clickable (and works) when the message is opened normally, I would consider this behavior of the link preview mechanism to be somewhat unfortunate…
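For anyone who wants to check messages for this pattern outside of Outlook, the following is a minimal sketch that lists anchor targets lacking a scheme. It only approximates the condition described above using Python's standard URL parser, it makes no claim about how Outlook itself parses links, and the sample HTML (including the example.net target) is hypothetical since the original message is not reproduced here.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit


class HrefCollector(HTMLParser):
    """Collect the href value of every <a> tag in an HTML body."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                self.hrefs.append(value.strip())


def schemeless_hrefs(html):
    """Return href values that have no URI scheme (no 'https:', 'mailto:', ...)."""
    collector = HrefCollector()
    collector.feed(html)
    return [href for href in collector.hrefs if not urlsplit(href).scheme]


# Hypothetical message body for illustration only.
sample_html = (
    '<p><a href="https://example.com/portal">Portal</a></p>'
    '<p><a href="example.net/login"><b>VIEW APRIL SALARY INCREASE</b></a></p>'
)

suspicious = schemeless_hrefs(sample_html)
print(suspicious)
```

Note that legitimate relative links and fragment-only targets such as "#top" are also scheme-less, so in practice this is a triage hint rather than a verdict.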

In any case, it is certainly good to know about it, especially if – like me – you commonly recommend that non-specialists use the link preview mechanism that Outlook Junk folder provides to look at suspicious messages. As it turns out, it is not as dependable a mechanism as I had believed it to be.

[1] https://isc.sans.edu/diary/Broken+phishing+accidentally+exploiting+Outlook+zeroday/26254

[2] https://www.rfc-editor.org/rfc/rfc3986.html

-----------

Jan Kopriva

LinkedIn

Nettles Consulting


Proxying the Unproxyable? Sending EXE traffic to a Proxy

.. if “unproxyable” is a word that is ..

I had a recent engagement where I had to look at the network traffic generated by a Windows executable. Unfortunately, it was all TLS, and all TLS1.3 to boot. So from a PCAP all I got was a whole lot of “yup, that’s encrypted”, and since it was TLSv1.3 all I really had to work with was the IP addresses, not even server names in the server hello packets to help out. And the IP addresses involved were those “500 DNS names AWS” shotgun addresses, so no help there.

What I really needed was something to take specific traffic, say traffic from an executable, and redirect that to a proxy. If that proxy is then burp suite, then Bob’s yer Uncle, now I can look at the traffic!! If you’d rather use fiddler or some other proxy, go for it, anything will work.

A few minutes of Googling, and I found Proxifier (https://www.proxifier.com/)

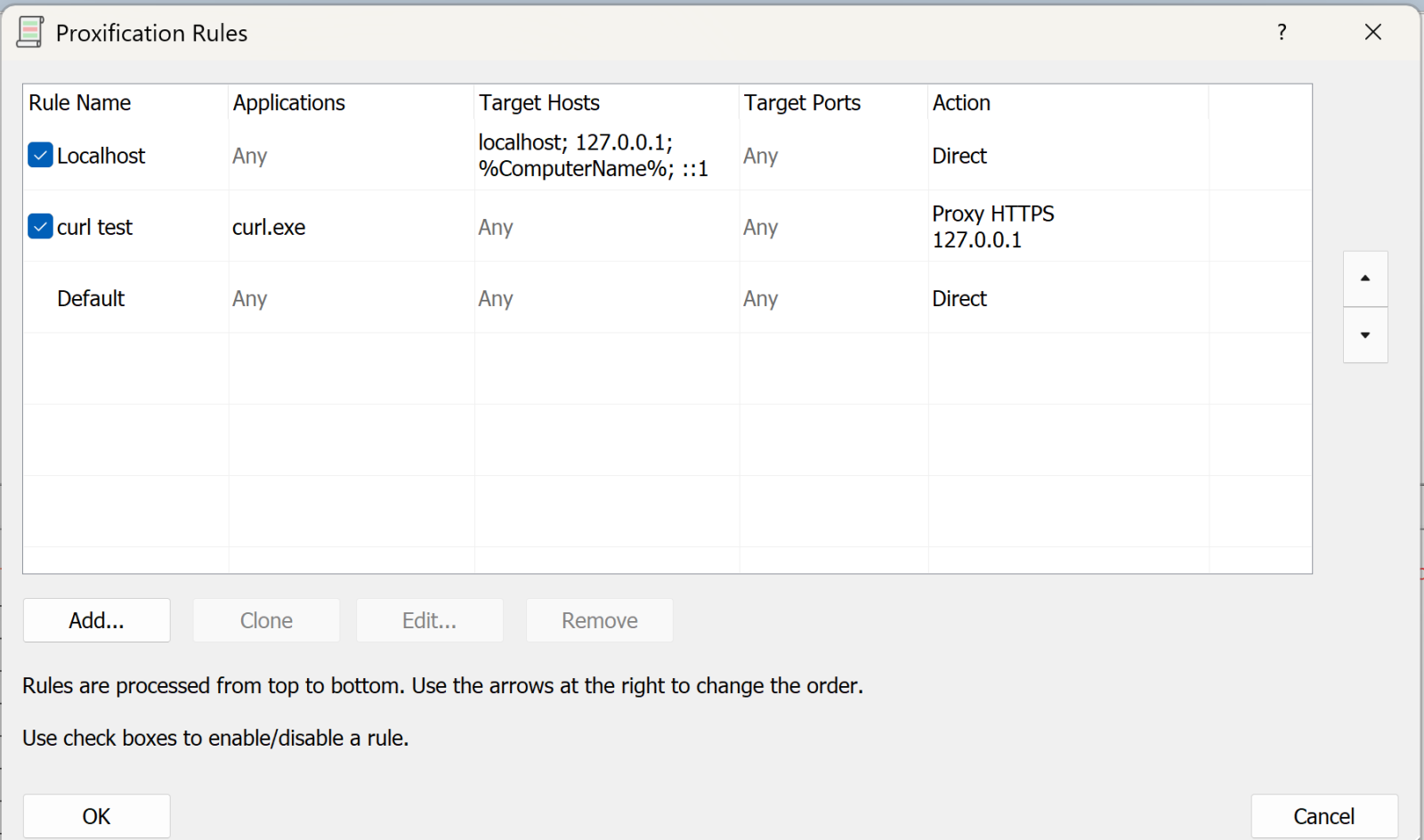

Proxifier allows you to set up rules, for instance “send traffic from abc.exe to proxy A”, “send traffic from def.exe to proxy B”, or “send everything else direct”, or any combination. Proxies can be direct or Socks5.

In my case, I was looking at a client executable, and was able to follow all the API calls and data transferred, it was EXACTLY what I needed that day.

I can’t show you the client output - watching the APIs roll by was as cool as it gets though, and the proxy intercept in burp lets you “play” with individual calls if that’s what you need. But I can certainly show you how this works, let’s use curl as our example exe.

Let's start in proxifier. First you need to set up your proxy(s). In this case I'm using Burp Suite Pro running locally, so the proxy is:


Next, we’ll set up the rules:

The first rule says “anything to my own machine, send direct”. Given how much loopback cruft happens on a typical Windows box, this rule is gold (unless that’s what you are looking for that is).

The second rule is “anything from curl.exe, send to the proxy we just defined” (or whatever your executable is).

You can have multiple of these rules doing different things.

The final rule is “everything else, send direct”

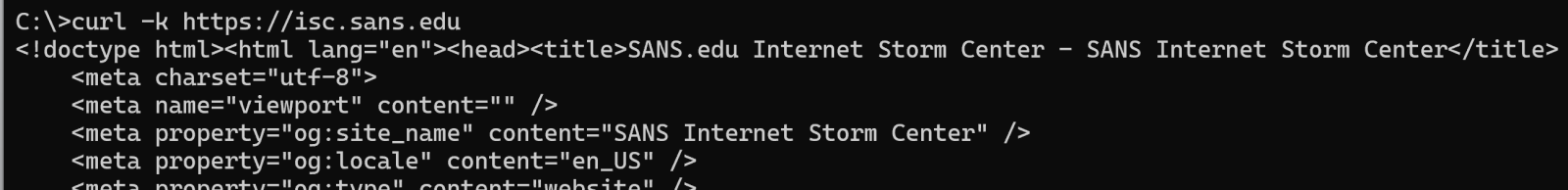

Now, let’s run a test with curl:

(and so on)

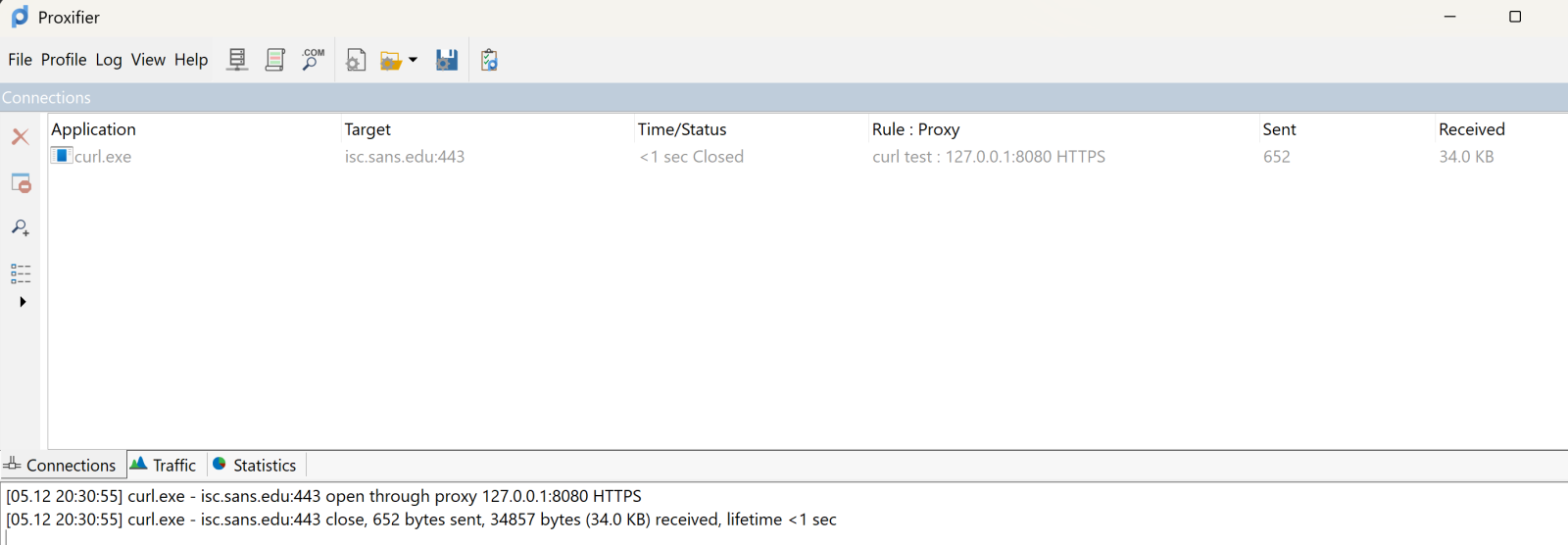

On proxifier, you see the transaction happen in real time:

The top pane shows the executable, target and so on. It’s somewhat ephemeral: it’ll show the live view, then go grey after the transaction completes, and after a few seconds it disappears. The bottom pane scrolls in a more “log like” manner.

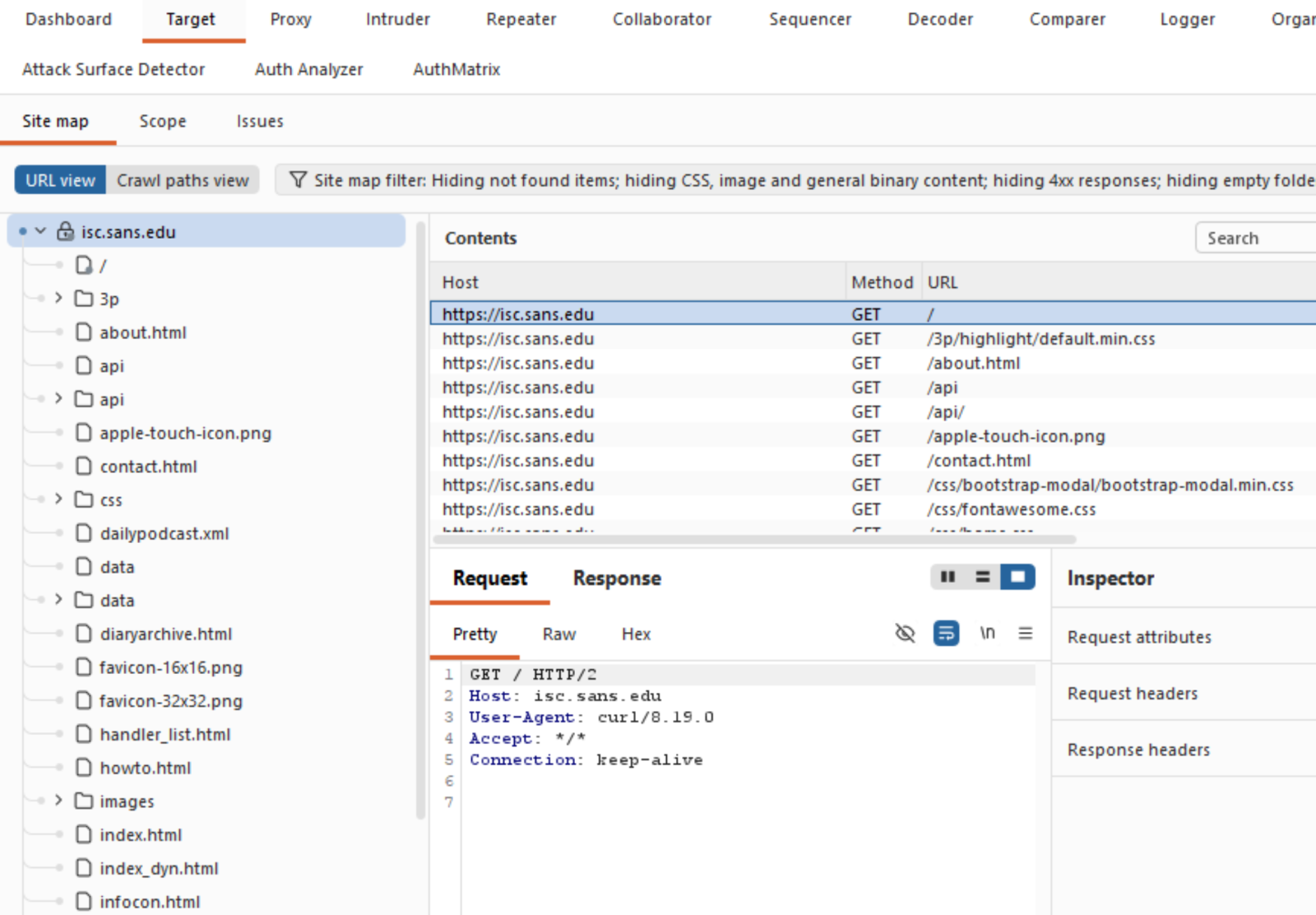

Over in Burp, you see all the business that most sites have as their lead page:

Which is exactly what you need, and can't get these days from a packet capture!

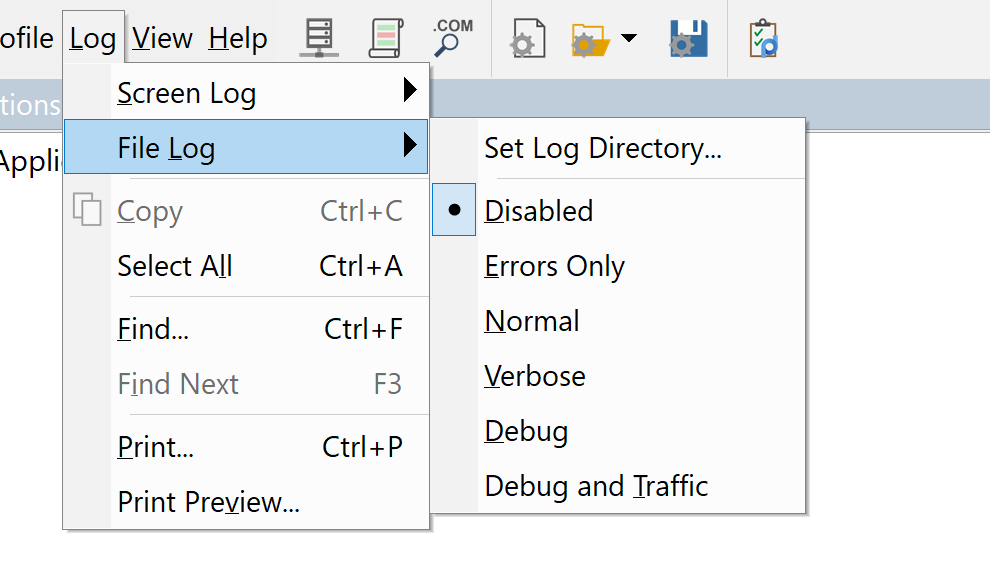

What else does Proxifier do? It also spits out a configurable log file, you can configure what’s in the logs and where to send it:

You can set similar sensitivity on the live on-screen log.

All in all, this tool was a life-saver for me, I’ve used it for a few years now and keep coming up with things that it can bail me out of!

Got a cool use for a tool like this? Give it a try and share your experiences in our comment form below (please keep any NDA’s in mind).

Do you have a similar or better tool for this, again, by all means share in our comment form!

===============

Rob VandenBrink

[email protected]


[GUEST DIARY] Tearing apart website fraud to see how it works.

[This is a Guest Diary by Joshua Nikolson, an ISC Intern and part of the SANS.edu Bachelor's degree in Applied Cybersecurity (BACS) program.]

Introduction

One day at work, a friend messaged me, “How do you check a website to see if it’s legit?” This friend recently received a phishing text message from a “bank”, and I figured he wanted to be careful and double-check. I told him to put the URL into VirusTotal but said that just because it may say it’s clean, that doesn’t mean it’s not malicious.

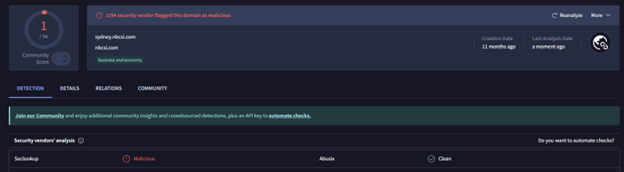

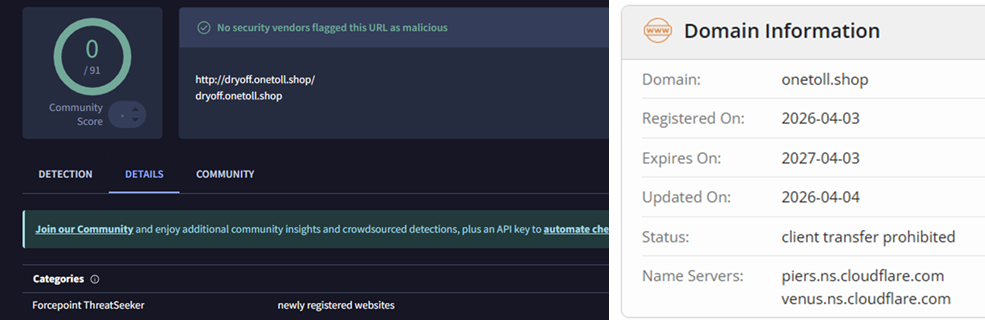

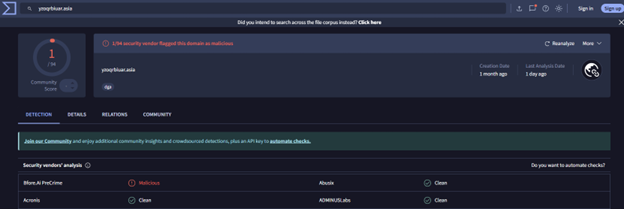

He sent me a screenshot of the VirusTotal page for the URL, with no detections and everything showing green. I took a moment to look at it a little more closely. The domain name was unusual, and right off the bat I could see it had been created in the last few months. As of now, it has one detection from a vendor. All domains mentioned in this blogpost will be listed in the Indicators of Compromise section at the end.

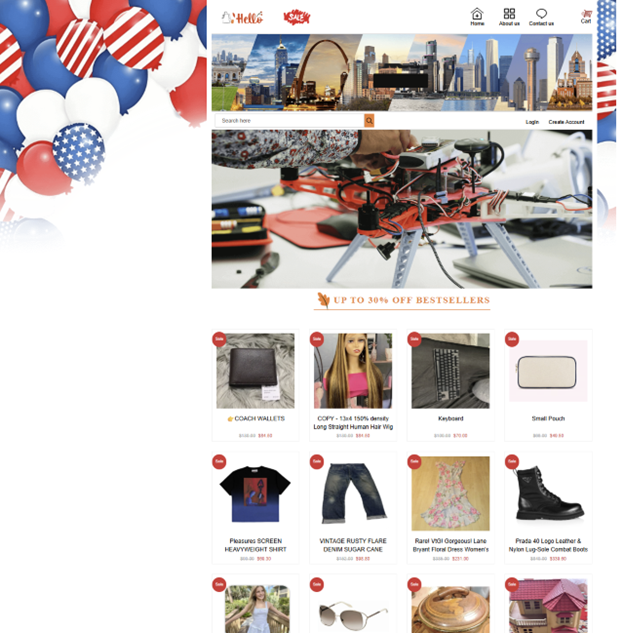

Going to the site, I could immediately tell that something was off about it. It was a secondhand marketplace that seemed to sell just about everything under the sun, with tons of listings in each category and items priced too good to be true. While the site had that “AI vibecoded feeling”, I wanted to give my friend something more concrete than “don’t trust this site”.

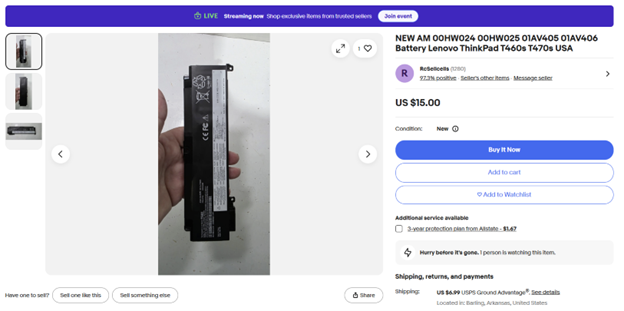

I decided to reverse image search one of the product images, a Lenovo ThinkPad battery replacement, and after some digging, I found an eBay listing with all the same product images and item descriptions. I did this for a few more of the site’s listings and came to the same result. I let my friend know, and he said, “Yeah, it looked too good to be true”.

Finding a Marketplace

I found this interesting and wanted to see if I could find something similar again. Today, it is trivial to use AI to mass-deploy these scams, and I wanted to see what would happen if I tried to buy something.

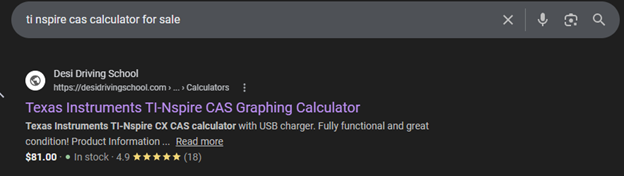

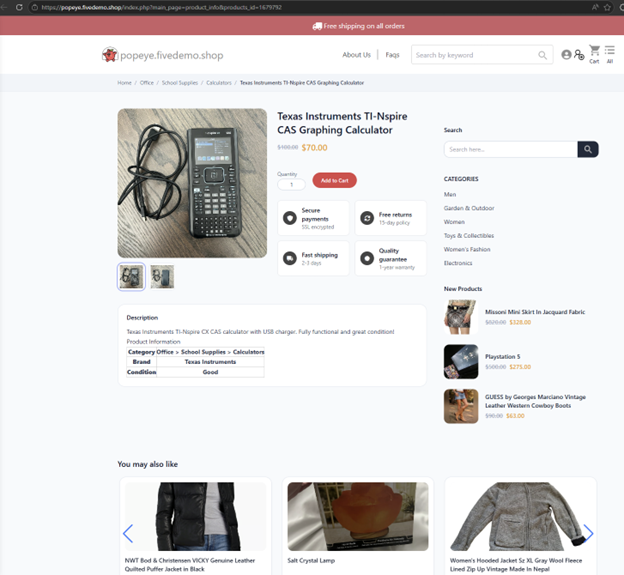

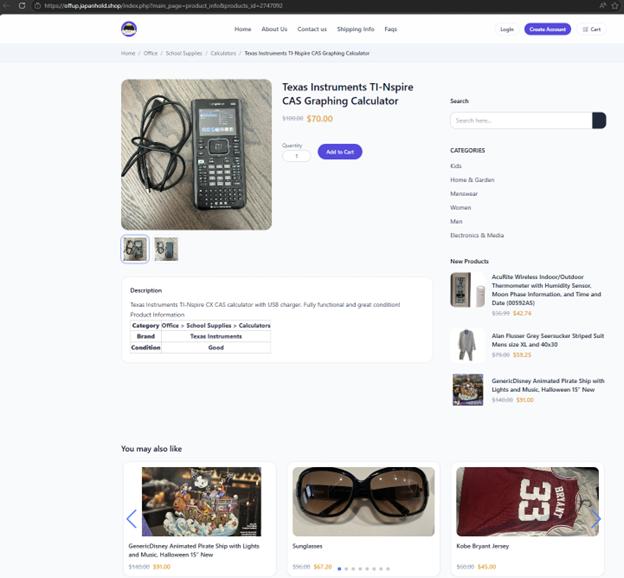

Let’s look up what my friend was originally looking for: a Texas Instruments TI-Nspire CAS calculator. Simply searching on Google and going to the second page, something pops out to me. Why is a driving school selling a calculator?

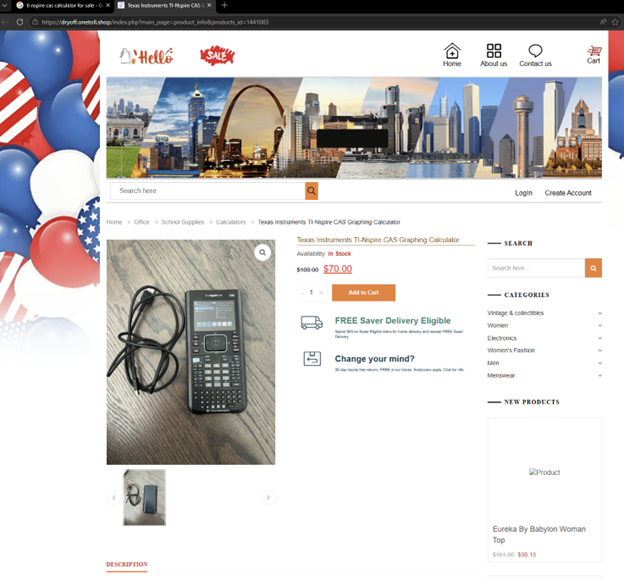

The search result link, hxxps://desidrivingschool[.]com/listing/164903741/ redirects to a marketplace where it is for sale:

This domain looks suspicious on its own, and to add insult to injury, it was registered ~12 days ago on April 3rd, 2026:

What's happening here?

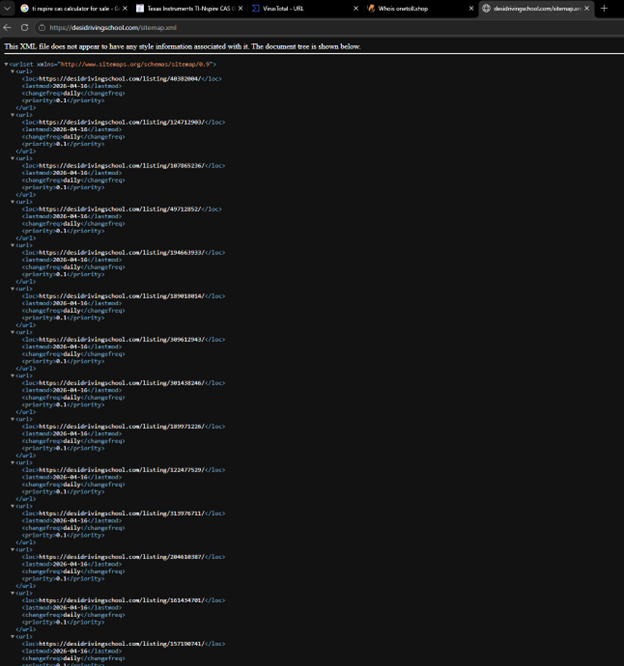

You may be asking why this Desi Driving School is showing up in the search results for this calculator? Good question. If you append “/sitemap.xml” to the URL, you can see tons of these listings that are meant to infiltrate the search results. This is a prime example of SEO poisoning, in which potential victims are lured through their shopping searches to these fake marketplaces.
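If you want to quantify this for a suspected redirector, a small sketch along these lines could count the injected listing pages in a sitemap you have already downloaded. The function name, the "/listing/" marker (taken from the search result path above), and the sample XML with example.com URLs are assumptions for illustration; the namespace is the one defined by the sitemaps.org protocol. The code deliberately takes the XML as text and performs no network requests.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def listing_urls(sitemap_xml, marker="/listing/"):
    """Return sitemap <loc> URLs whose path contains the given marker."""
    root = ET.fromstring(sitemap_xml)
    locs = [el.text.strip() for el in root.findall(".//sm:loc", NS) if el.text]
    return [url for url in locs if marker in urlsplit(url).path]


# Hypothetical sitemap content for illustration only.
sample_sitemap = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/contact/</loc></url>
  <url><loc>https://example.com/listing/1001/</loc></url>
  <url><loc>https://example.com/listing/1002/</loc></url>
</urlset>
"""

injected = listing_urls(sample_sitemap)
print(len(injected), "of 4 sitemap entries look like injected listings")
```

A driving school whose sitemap is dominated by product listing paths is a fairly strong hint that the site has been repurposed for SEO poisoning.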

Threat actors have previously used compromised WordPress sites as command-and-control infrastructure or to stage payloads, but this is being used as a distinct attack vector. Unfortunately, this website was likely compromised, whether through something like a malicious WordPress plugin or stolen credentials, and is now being used to drive traffic to this malicious marketplace:

You can see the range of products that this site offers. They must have a good distribution network.

While reverse-image searching for images of the calculator, I found another similar posting on a different fake marketplace. Same images, same description and title, same exact setup with a redirect…

And another… with what looks like loads of other compromised sites being used.

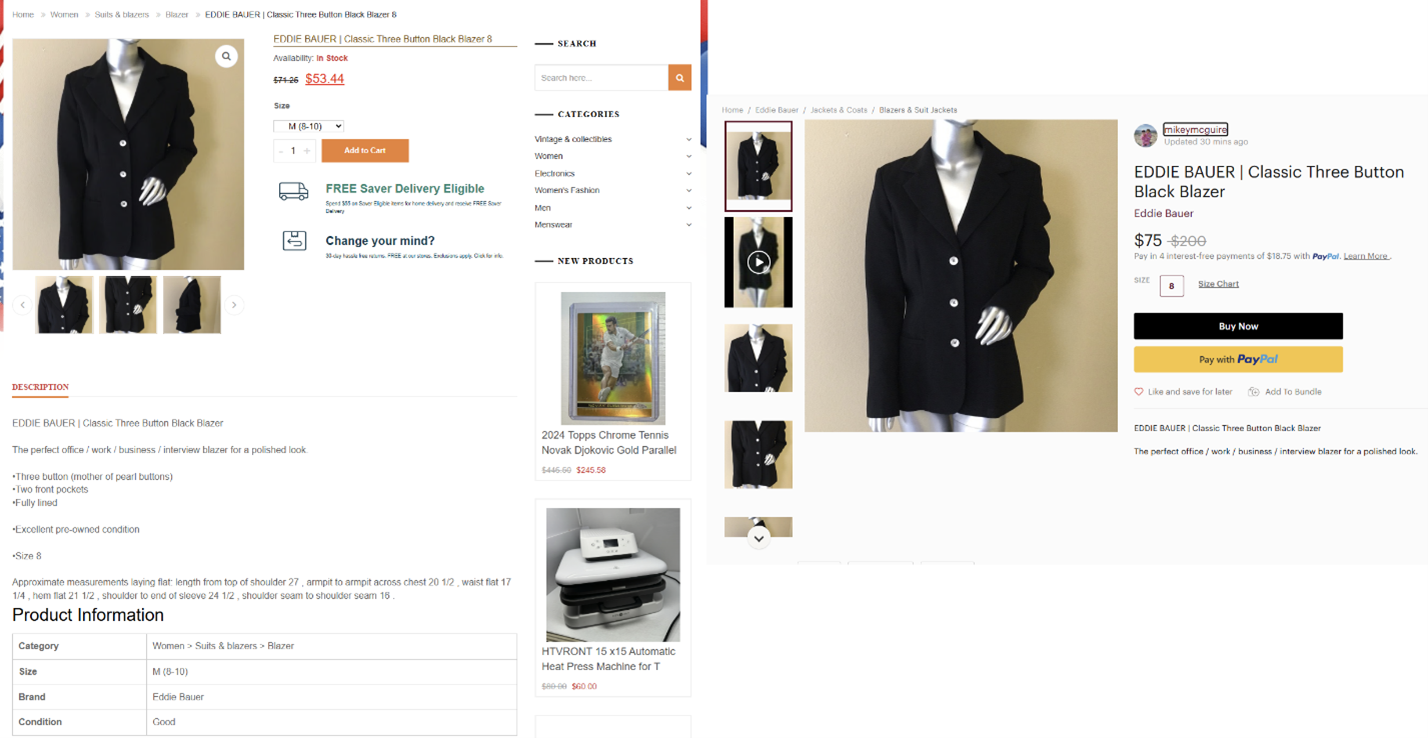

You can endlessly find these sites by searching any description of a product listed with quotes in Google to find the real item that is being sold and copied from, such as this blazer:

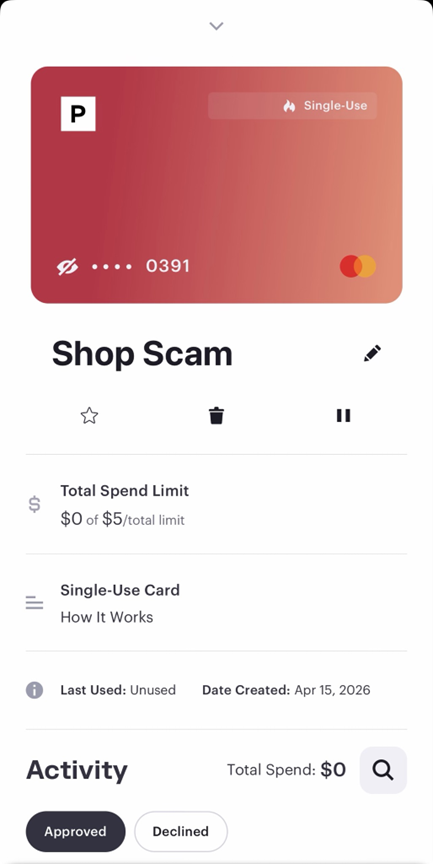

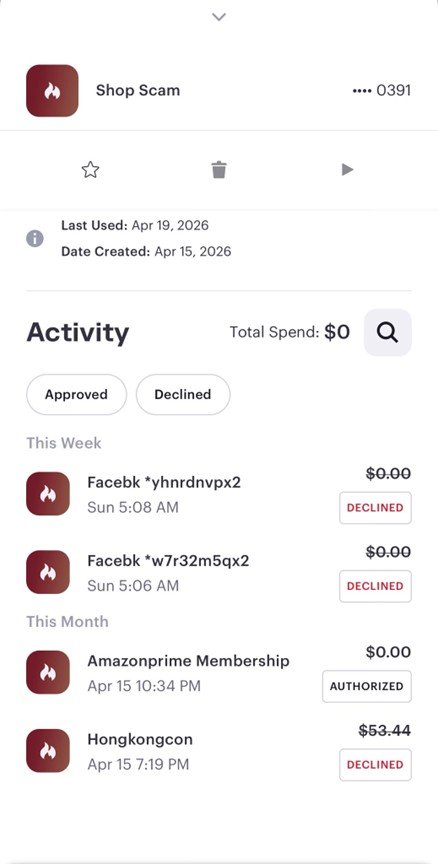

If the price was higher than the actual listing, you might think the scheme is just scraping listings, posting them at a higher price, then purchasing the cheaper item and shipping it. However, I do not think that is the case. Let’s try to buy this Eddie Bauer blazer with a fake identity and a fake debit card from Privacy.com and see what happens.

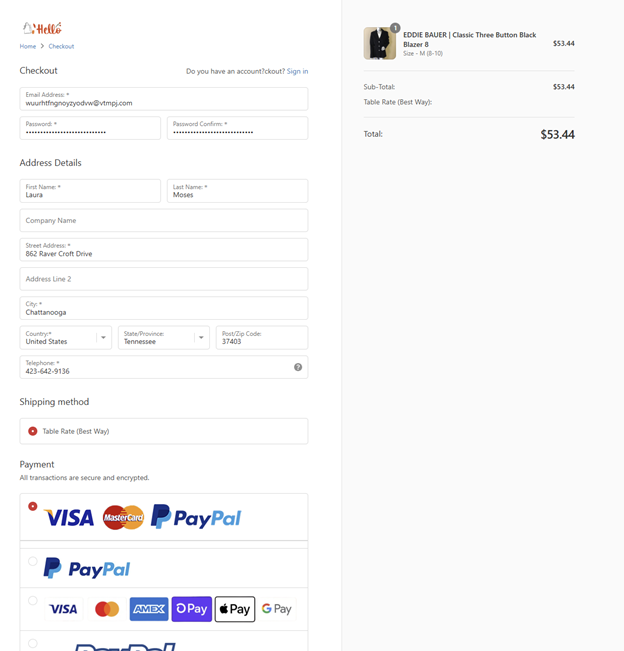

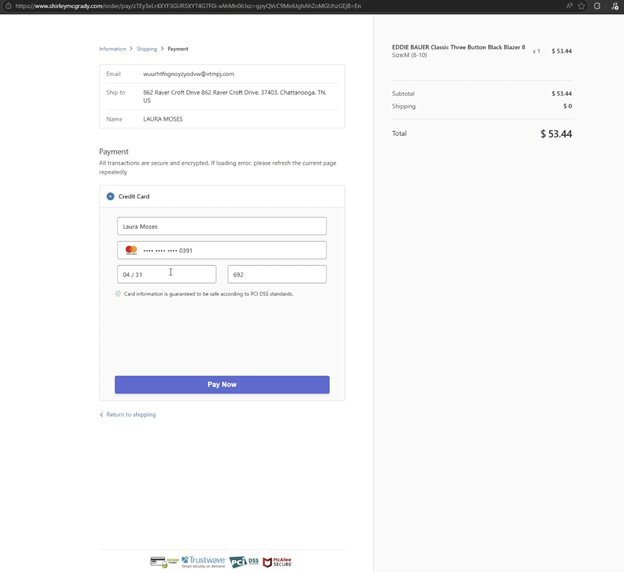

Right away, I can tell this checkout page is a replica of the Shopify checkout page and looks almost identical.

Choosing Mastercard, we’ll check out and pay now. Let’s prepare my fake card. Creating a temporary debit card with a $5 spending limit is extremely easy with Privacy.com, and I have my Mastercard ready.

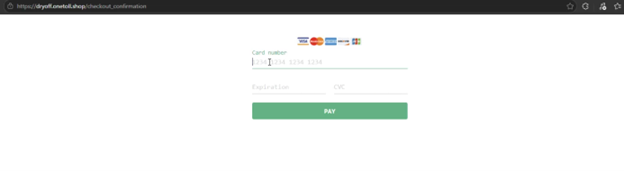

First, I am instructed to enter my card here:

Then I get redirected to this page and must enter my information again:

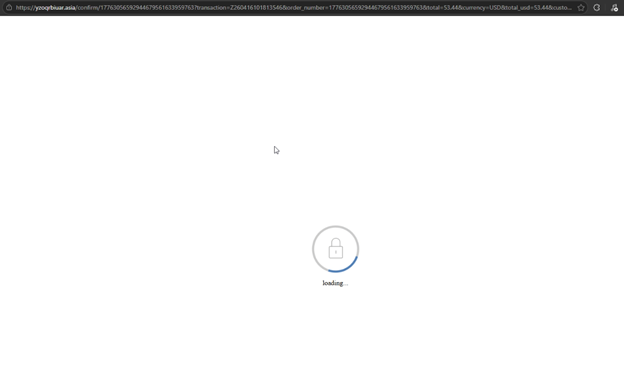

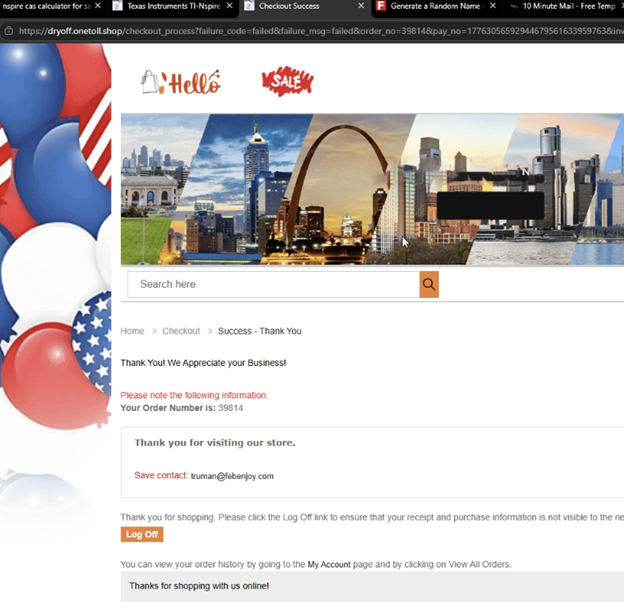

After hitting pay now, a loading screen with a different URL is shown that reminds me of the PayPal loading screen:

And then I am redirected to a thank-you page for my order, despite the card being declined, which they know because it shows failure_code=failed in the URL.

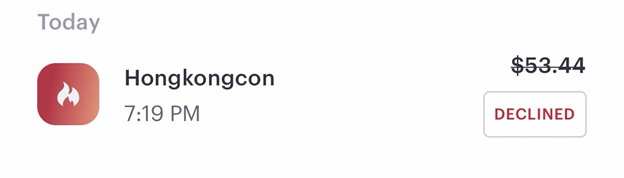

Only one declined charge was seen on my card. However, in other testing instances, I have seen an additional charge at a different price than the “advertised product”.

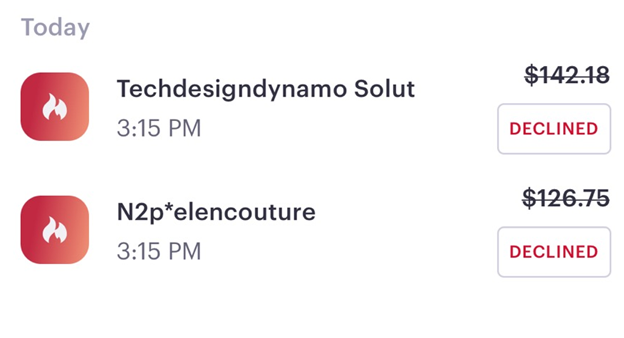

The above screenshot is from the order we just made, and this next screenshot is from a different test where two charges were attempted right away on order:

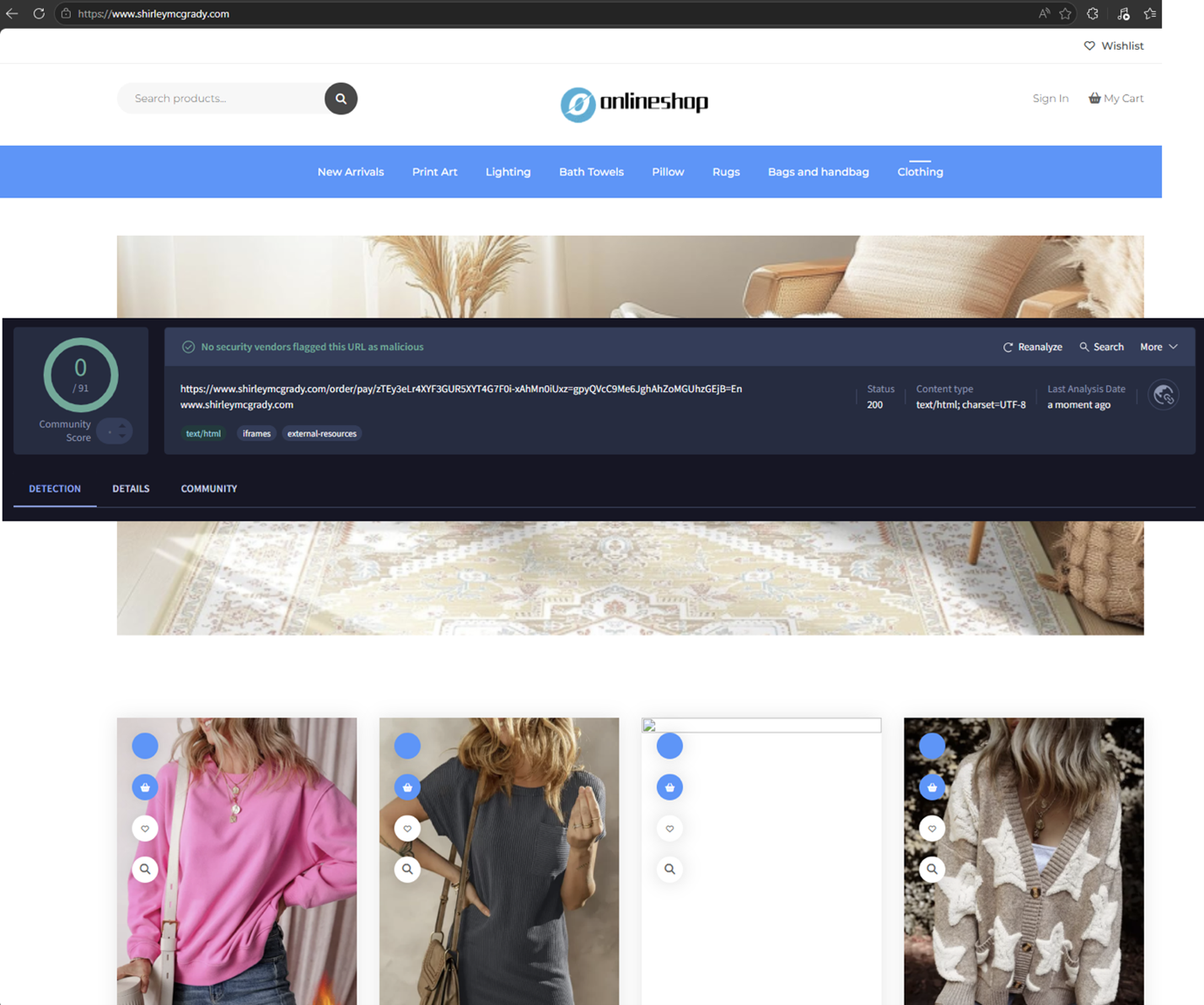

It seems that shirleymcgrady is another similar site, but probably used as the main checkout for the other smaller sites.

The loading page domain from the payment was also created recently, 1 month ago, with one detection on VirusTotal.

Aftermath

A few days after I tried to check out, multiple other charges were attempted on the card:

The clear objective of the scam here is to have the victim make a payment for an item that will never be shipped, resulting in their personal and payment information being stolen. I can only imagine how many people were duped by these marketplaces, considering their popularity, and it must be paying off for the attackers.

With the evolving expertise of AI tools and technology in general, attackers can create these campaigns in a matter of seconds. This takes advantage of the average person’s trust by having a high-ranking site in the search results, images that look real (because they are real, and stolen), and a site that seems trustworthy enough for someone to enter their card details.

I would love to take this a step further and use a card with more funds to purchase a lower-cost item to see how different, if at all, the result would be. It is also fun to hunt down these different marketplaces and see how many you can find. Annoyingly, some of them use registrars that make it harder, not easier, to report abuse, but if anyone reading this would like to investigate this further, I would love to offer my help and see what we can do about it.

Indicators of Compromise

Marketplace Domains:

sydney.nbcsi[.]com

dryoff.onetoll[.]shop

popeye.fivedemo[.]shop

offup.japanhold[.]shop

Redirector Domains:

desidrivingschool[.]com

curencares[.]in

abralipedema.com[.]br

Payment Page Domains:

shirleymcgrady[.]com

yzoqrbiuar[.]asia


Microsoft May 2026 Patch Tuesday

Today's Microsoft patch Tuesday fixes 137 different vulnerabilities. In addition, the update addresses 137 Chromium-related issues affecting Microsoft Edge.

There are no already disclosed or already exploited vulnerabilities included in today's patches. I removed the Chromium issues from the table below and included only the 137 Microsoft issues to make it more readable.

Note that issues related to Microsoft Azure are labeled as "no customer action required."

Significant Vulnerabilities of interest:

CVE-2026-41103: This vulnerability affects the Microsoft SSO Plugin for Jira & Confluence. Exploitation could lead to an elevation of privileges. With ongoing supply chain attacks, development and CI/CD tools like Jira and Confluence are popular targets.

CVE-2026-41089: A preauthentication remote code execution vulnerability in the Netlogon service will always be a juicy target, worth some AI tokens to write an exploit for.

Other critical vulnerabilities include the usual Word and Microsoft Office issues.

| Description | |||||||

|---|---|---|---|---|---|---|---|

| CVE | Disclosed | Exploited | Exploitability (old versions) | current version | Severity | CVSS Base (AVG) | CVSS Temporal (AVG) |

| .NET Core Tampering Vulnerability | |||||||

| %%cve:2026-32175%% | No | No | - | - | Important | 4.3 | 3.8 |

| .NET Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-32177%% | No | No | - | - | Important | 7.3 | 6.4 |

| %%cve:2026-35433%% | No | No | - | - | Important | 7.3 | 6.4 |

| ASP.NET Core Denial of Service Vulnerability | |||||||

| %%cve:2026-42899%% | No | No | - | - | Important | 7.5 | 6.5 |

| Azure AI Foundry Elevation of Privilege Vulnerability (no customer action required) | |||||||

| %%cve:2026-35435%% | No | No | - | - | Critical | 8.6 | 7.5 |

| Azure Cloud Shell Spoofing Vulnerability (no customer action required) | |||||||

| %%cve:2026-35428%% | No | No | - | - | Critical | 9.6 | 8.3 |

| Azure Connected Machine Agent Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40381%% | No | No | - | - | Important | 7.8 | 6.8 |

| Azure DevOps Information Disclosure Vulnerability (no customer action required) | |||||||

| %%cve:2026-42826%% | No | No | - | - | Critical | 10.0 | 8.7 |

| Azure Logic Apps Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-42823%% | No | No | - | - | Important | 9.9 | 8.6 |

| Azure Machine Learning Notebook Spoofing Vulnerability (no customer action required) | |||||||

| %%cve:2026-32207%% | No | No | - | - | Critical | 8.8 | 7.7 |

| %%cve:2026-33833%% | No | No | - | - | Important | 8.2 | 7.1 |

| Azure Managed Instance for Apache Cassandra Remote Code Execution Vulnerability (no customer action required) | |||||||

| %%cve:2026-33109%% | No | No | - | - | Critical | 9.9 | 8.6 |

| %%cve:2026-33844%% | No | No | - | - | Critical | 9.0 | 7.8 |

| Azure Monitor Action Group Notification System Elevation of Privilege Vulnerability (no customer action required) | |||||||

| %%cve:2026-41105%% | No | No | - | - | Critical | 8.1 | 7.1 |

| Azure Monitor Agent Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-32204%% | No | No | - | - | Important | 7.8 | 6.8 |

| Azure Monitor Agent Metrics Extension Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-42830%% | No | No | - | - | Important | 6.5 | 5.7 |

| Azure SDK for Java Security Feature Bypass Vulnerability | |||||||

| %%cve:2026-33117%% | No | No | - | - | Important | 9.1 | 7.9 |

| Copilot Chat (Microsoft Edge) Information Disclosure Vulnerability (no customer action required) | |||||||

| %%cve:2026-33111%% | No | No | - | - | Critical | 7.5 | 6.5 |

| Data Deduplication Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-41095%% | No | No | - | - | Important | 7.8 | 6.8 |

| GitHub Copilot and Visual Studio Code Security Feature Bypass Vulnerability | |||||||

| %%cve:2026-41109%% | No | No | - | - | Important | 8.8 | 7.7 |

| Internet Key Exchange (IKE) Protocol Denial of Service Vulnerability | |||||||

| %%cve:2026-35424%% | No | No | - | - | Important | 7.5 | 6.5 |

| M365 Copilot Information Disclosure Vulnerability (no customer action required) | |||||||

| %%cve:2026-26129%% | No | No | - | - | Critical | 7.5 | 6.5 |

| %%cve:2026-26164%% | No | No | - | - | Critical | 7.5 | 6.5 |

| M365 Copilot for Desktop Spoofing Vulnerability | |||||||

| %%cve:2026-41614%% | No | No | - | - | Important | 6.2 | 5.4 |

| Microsoft 365 Copilot for Android Spoofing Vulnerability | |||||||

| %%cve:2026-41100%% | No | No | - | - | Important | 4.4 | 3.9 |

| Microsoft Cryptographic Services Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40377%% | No | No | - | - | Important | 7.8 | 6.8 |

| Microsoft Data Formulator Remote Code Execution Vulnerability | |||||||

| %%cve:2026-41094%% | No | No | - | - | Important | 8.8 | 7.7 |

| Microsoft Dynamics 365 Business Central Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40417%% | No | No | - | - | Important | 7.8 | 6.8 |

| Microsoft Dynamics 365 Customer Insights Elevation of Privilege Vulnerability (no customer action required) | |||||||

| %%cve:2026-33821%% | No | No | - | - | Critical | 7.7 | 6.7 |

| Microsoft Dynamics 365 On-Premises Remote Code Execution Vulnerability | |||||||

| %%cve:2026-42898%% | No | No | - | - | Critical | 9.9 | 8.6 |

| %%cve:2026-42833%% | No | No | - | - | Important | 9.1 | 7.9 |

| Microsoft Edge (Chromium-based) Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-42838%% | No | No | - | - | Important | 5.4 | 4.7 |

| Microsoft Edge (Chromium-based) Information Disclosure Vulnerability | |||||||

| %%cve:2026-41107%% | No | No | - | - | Moderate | 7.4 | 6.4 |

| Microsoft Edge (Chromium-based) for Android Spoofing Vulnerability | |||||||

| %%cve:2026-42891%% | No | No | - | - | Moderate | 6.5 | 5.7 |

| %%cve:2026-35429%% | No | No | - | - | Moderate | 4.3 | 3.9 |

| %%cve:2026-40416%% | No | No | - | - | Low | 4.3 | 3.8 |

| Microsoft Enterprise Security Token Service (ESTS) Spoofing Vulnerability (no customer action required) | |||||||

| %%cve:2026-40379%% | No | No | - | - | Critical | 9.3 | 8.1 |

| Microsoft Excel Information Disclosure Vulnerability | |||||||

| %%cve:2026-40360%% | No | No | - | - | Important | 7.8 | 6.8 |

| Microsoft Excel Remote Code Execution Vulnerability | |||||||

| %%cve:2026-40359%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-40362%% | No | No | - | - | Important | 7.8 | 6.8 |

| Microsoft Message Queuing (MSMQ) Remote Code Execution Vulnerability | |||||||

| %%cve:2026-34329%% | No | No | - | - | Important | 8.8 | 7.7 |

| Microsoft Office Click-To-Run Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40419%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-40418%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-35436%% | No | No | - | - | Important | 8.8 | 7.7 |

| %%cve:2026-40420%% | No | No | - | - | Important | 8.8 | 7.7 |

| Microsoft Office Remote Code Execution Vulnerability | |||||||

| %%cve:2026-40363%% | No | No | - | - | Critical | 8.4 | 7.3 |

| %%cve:2026-42831%% | No | No | - | - | Critical | 7.8 | 6.8 |

| %%cve:2026-40358%% | No | No | - | - | Critical | 8.4 | 7.3 |

| Microsoft Office Spoofing Vulnerability | |||||||

| %%cve:2026-42832%% | No | No | - | - | Important | 7.7 | 6.7 |

| Microsoft Outlook for iOS Tampering Vulnerability | |||||||

| %%cve:2026-42893%% | No | No | - | - | Important | 7.4 | 6.4 |

| Microsoft Partner Center Spoofing Vulnerability (no customer action required) | |||||||

| %%cve:2026-34327%% | No | No | - | - | Critical | 8.2 | 7.1 |

| Microsoft Power Automate Desktop Information Disclosure Vulnerability | |||||||

| %%cve:2026-40374%% | No | No | - | - | Important | 6.5 | 5.7 |

| Microsoft PowerPoint for Android Spoofing Vulnerability | |||||||

| %%cve:2026-41102%% | No | No | - | - | Important | 7.1 | 6.2 |

| Microsoft SSO Plugin for Jira & Confluence Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-41103%% | No | No | - | - | Critical | 9.1 | 7.9 |

| Microsoft SharePoint Server Remote Code Execution Vulnerability | |||||||

| %%cve:2026-35439%% | No | No | - | - | Important | 8.8 | 7.7 |

| %%cve:2026-40368%% | No | No | - | - | Important | 8.0 | 7.0 |

| %%cve:2026-33110%% | No | No | - | - | Important | 8.8 | 7.7 |

| %%cve:2026-33112%% | No | No | - | - | Important | 8.8 | 7.7 |

| %%cve:2026-40357%% | No | No | - | - | Important | 8.8 | 7.7 |

| %%cve:2026-40365%% | No | No | - | - | Critical | 8.8 | 7.7 |

| Microsoft Team Events Portal Information Disclosure Vulnerability (no customer action required) | |||||||

| %%cve:2026-33823%% | No | No | - | - | Critical | 9.6 | 8.3 |

| Microsoft Teams Spoofing Vulnerability | |||||||

| %%cve:2026-32185%% | No | No | - | - | Important | 5.5 | 4.8 |

| Microsoft Word Information Disclosure Vulnerability | |||||||

| %%cve:2026-35440%% | No | No | - | - | Important | 5.5 | 4.8 |

| %%cve:2026-40421%% | No | No | - | - | Important | 4.3 | 3.8 |

| Microsoft Word Remote Code Execution Vulnerability | |||||||

| %%cve:2026-40364%% | No | No | - | - | Critical | 8.4 | 7.3 |

| %%cve:2026-40366%% | No | No | - | - | Critical | 8.4 | 7.3 |

| %%cve:2026-40361%% | No | No | - | - | Critical | 8.4 | 7.3 |

| %%cve:2026-40367%% | No | No | - | - | Critical | 8.4 | 7.3 |

| Microsoft Word for Android Spoofing Vulnerability | |||||||

| %%cve:2026-41101%% | No | No | - | - | Important | 7.1 | 6.2 |

| SQL Server Remote Code Execution Vulnerability | |||||||

| %%cve:2026-40370%% | No | No | - | - | Important | 8.8 | 7.7 |

| Secure Boot Security Feature Bypass Vulnerability | |||||||

| %%cve:2026-41097%% | No | No | - | - | Important | 6.7 | 5.8 |

| Visual Studio Code Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-41613%% | No | No | - | - | Important | 8.8 | 7.7 |

| Visual Studio Code Information Disclosure Vulnerability | |||||||

| %%cve:2026-41612%% | No | No | - | - | Important | 5.5 | 4.8 |

| Visual Studio Code Remote Code Execution Vulnerability | |||||||

| %%cve:2026-41611%% | No | No | - | - | Important | 7.8 | 6.8 |

| Visual Studio Code Security Feature Bypass Vulnerability | |||||||

| %%cve:2026-41610%% | No | No | - | - | Important | 6.3 | 5.5 |

| Win32k Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-33839%% | No | No | - | - | Important | 7.0 | 6.1 |

| %%cve:2026-33840%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-34330%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-34331%% | No | No | - | - | Important | 7.0 | 6.1 |

| Windows 11 Telnet Client Information Disclosure Vulnerability | |||||||

| %%cve:2026-35423%% | No | No | - | - | Important | 5.4 | 4.7 |

| Windows Admin Center Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-35438%% | No | No | - | - | Important | 8.3 | 7.2 |

| Windows Admin Center in Azure Portal Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-41086%% | No | No | - | - | Important | 8.8 | 7.7 |

| Windows Ancillary Function Driver for WinSock Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-34344%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-34345%% | No | No | - | - | Important | 7.0 | 6.1 |

| %%cve:2026-35416%% | No | No | - | - | Important | 7.0 | 6.1 |

| %%cve:2026-41088%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Application Identity (AppID) Subsystem Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-34343%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Cloud Files Mini Filter Driver Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-35418%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-33835%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-34337%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Common Log File System Driver Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40407%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-40397%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows DNS Client Remote Code Execution Vulnerability | |||||||

| %%cve:2026-41096%% | No | No | - | - | Critical | 9.8 | 8.5 |

| Windows DWM Core Library Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-42896%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows DWM Core Library Information Disclosure Vulnerability | |||||||

| %%cve:2026-35419%% | No | No | - | - | Important | 5.5 | 4.8 |

| %%cve:2026-34336%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Event Logging Service Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-33834%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Filtering Platform (WFP) Security Feature Bypass Vulnerability | |||||||

| %%cve:2026-32209%% | No | No | - | - | Important | 4.4 | 3.9 |

| Windows GDI Remote Code Execution Vulnerability | |||||||

| %%cve:2026-35421%% | No | No | - | - | Critical | 7.8 | 6.8 |

| Windows Graphics Component Remote Code Execution Vulnerability | |||||||

| %%cve:2026-40403%% | No | No | - | - | Critical | 8.8 | 7.7 |

| Windows Hyper-V Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40402%% | No | No | - | - | Critical | 9.3 | 8.1 |

| Windows Kernel Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-33841%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-35420%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-40369%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Kernel-Mode Driver Remote Code Execution Vulnerability | |||||||

| %%cve:2026-34332%% | No | No | - | - | Important | 8.0 | 7.0 |

| Windows Lightweight Directory Access Protocol (LDAP) Denial of Service Vulnerability | |||||||

| %%cve:2026-34339%% | No | No | - | - | Important | 5.5 | 4.8 |

| Windows Link-Layer Discovery Protocol (LLDP) Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-34341%% | No | No | - | - | Important | 7.0 | 6.1 |

| Windows Message Queuing (MSMQ) Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-33838%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Native WiFi Miniport Driver Remote Code Execution Vulnerability | |||||||

| %%cve:2026-32161%% | No | No | - | - | Critical | 7.5 | 6.5 |

| Windows Netlogon Remote Code Execution Vulnerability | |||||||

| %%cve:2026-41089%% | No | No | - | - | Critical | 9.8 | 8.5 |

| Windows Print Spooler Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-34342%% | No | No | - | - | Important | 7.0 | 6.1 |

| Windows Projected File System Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-34340%% | No | No | - | - | Important | 7.0 | 6.1 |

| Windows Remote Desktop Services Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40398%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Rich Text Edit Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-21530%% | No | No | - | - | Important | 6.7 | 5.8 |

| %%cve:2026-32170%% | No | No | - | - | Important | 6.7 | 5.8 |

| Windows SMB Client Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40410%% | No | No | - | - | Important | 7.0 | 6.1 |

| Windows Storage Spaces Controller Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-35415%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Storport Miniport Driver Denial of Service Vulnerability | |||||||

| %%cve:2026-34350%% | No | No | - | - | Important | 6.5 | 5.7 |

| Windows TCP/IP Denial of Service Vulnerability | |||||||

| %%cve:2026-40405%% | No | No | - | - | Important | 7.5 | 6.5 |

| %%cve:2026-40414%% | No | No | - | - | Important | 7.4 | 6.4 |

| %%cve:2026-40401%% | No | No | - | - | Important | 7.1 | 6.2 |

| %%cve:2026-40413%% | No | No | - | - | Important | 7.4 | 6.4 |

| Windows TCP/IP Driver Security Feature Bypass Vulnerability | |||||||

| %%cve:2026-35422%% | No | No | - | - | Important | 6.5 | 5.7 |

| Windows TCP/IP Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-34351%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-40399%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-34334%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows TCP/IP Information Disclosure Vulnerability | |||||||

| %%cve:2026-40406%% | No | No | - | - | Important | 7.5 | 6.5 |

| Windows TCP/IP Local Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-33837%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows TCP/IP Remote Code Execution Vulnerability | |||||||

| %%cve:2026-40415%% | No | No | - | - | Important | 8.1 | 7.1 |

| Windows Telephony Service Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-42825%% | No | No | - | - | Important | 7.0 | 6.1 |

| %%cve:2026-34338%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-40382%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Volume Manager Extension Driver Remote Code Execution Vulnerability | |||||||

| %%cve:2026-40380%% | No | No | - | - | Important | 6.2 | 5.4 |

| Windows WAN ARP Driver Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-40408%% | No | No | - | - | Important | 7.8 | 6.8 |

| Windows Win32k Elevation of Privilege Vulnerability | |||||||

| %%cve:2026-34333%% | No | No | - | - | Important | 7.8 | 6.8 |

| %%cve:2026-34347%% | No | No | - | - | Important | 7.0 | 6.1 |

| %%cve:2026-35417%% | No | No | - | - | Important | 7.8 | 6.8 |

--

Johannes B. Ullrich, Ph.D. , Dean of Research, SANS.edu


Apple Patches Everything

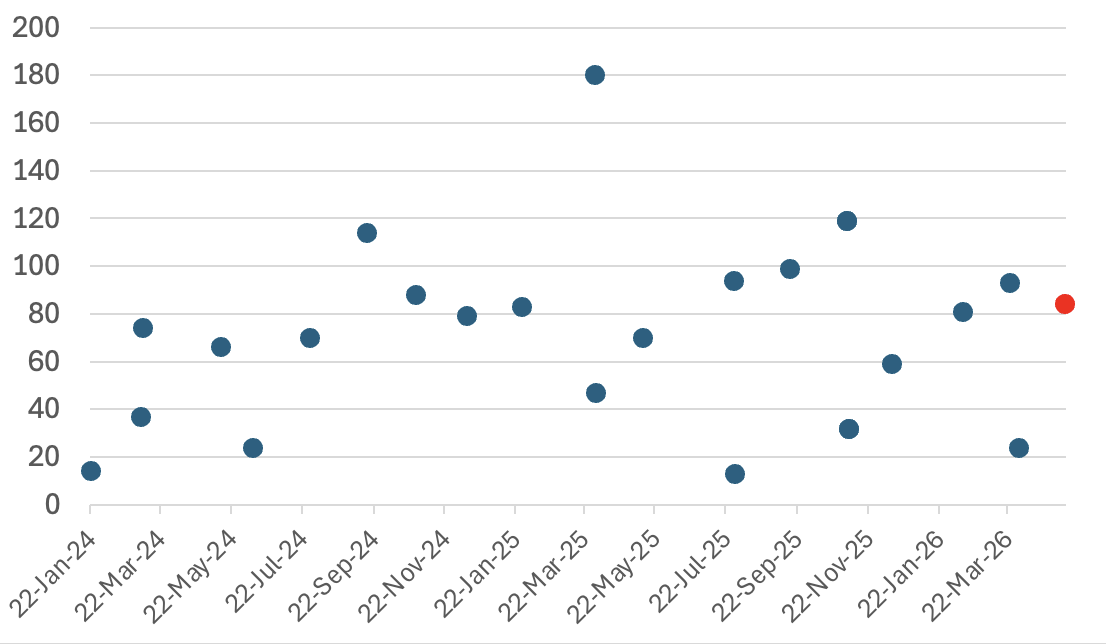

Apple today released its typical feature update across its operating systems (iOS, iPadOS, macOS, tvOS, watchOS, visionOS). With this update, Apple patched 84 different vulnerabilities. Updates are available for the "26" series of operating systems, as well as for the previous "18" version of iOS/iPadOS and for the two previous macOS versions (14 and 15).

None of the vulnerabilities has been exploited. The number of addressed vulnerabilities is about average compared to similar Apple updates.

Figure: Number of Vulnerabilities patched for each security update. Last one in red at the end.

| iOS 26.5 and iPadOS 26.5 | iOS 18.7.9 and iPadOS 18.7.9 | macOS Tahoe 26.5 | macOS Sequoia 15.7.7 | macOS Sonoma 14.8.7 | tvOS 26.5 | watchOS 26.5 | visionOS 26.5 |

|---|---|---|---|---|---|---|---|

| CVE-2025-43524: An app may be able to break out of its sandbox. Affects Icons |

|||||||

| x | x | ||||||

| CVE-2026-28819: An app may be able to execute arbitrary code with kernel privileges. Affects Wi-Fi |

|||||||

| x | x | x | x | ||||

| CVE-2026-28840: An app may be able to gain root privileges. Affects PackageKit |

|||||||

| x | x | ||||||

| CVE-2026-28846: A remote attacker may be able to cause unexpected app termination. Affects SceneKit |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-28848: A remote attacker may be able to cause unexpected system termination. Affects SMB |

|||||||

| x | x | ||||||

| CVE-2026-28870: An app may be able to access sensitive user data. Affects GeoServices |

|||||||

| x | |||||||

| CVE-2026-28872: A remote attacker may be able to cause a denial-of-service. Affects Calendar |

|||||||

| x | |||||||

| CVE-2026-28873: An app may be able to circumvent App Privacy Report logging. Affects Privacy |

|||||||

| x | |||||||

| CVE-2026-28877: An app may be able to access sensitive user data. Affects Accounts |

|||||||

| x | |||||||

| CVE-2026-28878: An app may be able to enumerate a user's installed apps. Affects Crash Reporter |

|||||||

| x | |||||||

| CVE-2026-28882: An app may be able to enumerate a user's installed apps. Affects libxpc |

|||||||

| x | |||||||

| CVE-2026-28883: Processing maliciously crafted web content may lead to an unexpected process crash. Affects WebKit |

|||||||

| x | x | x | x | x | |||

| CVE-2026-28894: A remote attacker may be able to cause a denial-of-service. Affects Calling Framework |

|||||||

| x | |||||||

| CVE-2026-28897: A local user may be able to cause unexpected system termination or read kernel memory. Affects Kernel |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-28901: Processing maliciously crafted web content may lead to an unexpected process crash. Affects WebKit |

|||||||

| x | |||||||

| CVE-2026-28906: An attacker may be able to track users through their IP address. Affects Networking |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-28907: Processing maliciously crafted web content may prevent Content Security Policy from being enforced. Affects WebKit |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-28908: An app may be able to modify protected parts of the file system. Affects Kernel |

|||||||

| x | x | x | |||||

| CVE-2026-28913: Processing maliciously crafted web content may lead to an unexpected process crash. Affects WebKit |

|||||||

| x | x | x | x | ||||

| CVE-2026-28914: A maliciously crafted ZIP archive may bypass Gatekeeper checks. Affects zip |

|||||||

| x | |||||||

| CVE-2026-28915: An app may be able to gain root privileges. Affects CUPS |

|||||||

| x | x | x | |||||

| CVE-2026-28917: Processing maliciously crafted web content may lead to an unexpected process crash. Affects WebKit |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-28918: Parsing a maliciously crafted file may lead to an unexpected app termination. Affects CoreSymbolication |

|||||||

| x | x | x | x | x | |||

| CVE-2026-28919: An app may be able to gain root privileges. Affects StorageKit |

|||||||

| x | x | x | |||||

| CVE-2026-28920: Visiting a maliciously crafted website may leak sensitive data. Affects zlib |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-28922: An app may be able to access private information. Affects CoreMedia |

|||||||

| x | x | x | |||||

| CVE-2026-28923: A malicious app may be able to break out of its sandbox. Affects GPU Drivers |

|||||||

| x | x | x | |||||

| CVE-2026-28924: An app may be able to access Contacts without user consent. Affects Sync Services |

|||||||

| x | x | x | |||||

| CVE-2026-28925: An app may be able to cause unexpected system termination or write kernel memory. Affects HFS |

|||||||

| x | x | x | |||||

| CVE-2026-28929: Replying to an email could display remote images in Mail in Lockdown Mode. Affects Mail Drafts |

|||||||

| x | x | x | x | ||||

| CVE-2026-28930: An app may be able to access protected user data. Affects Spotlight |

|||||||

| x | |||||||

| CVE-2026-28936: Processing a maliciously crafted file may lead to unexpected app termination. Affects CoreServices |

|||||||

| x | x | x | x | x | |||

| CVE-2026-28940: Processing a maliciously crafted image may corrupt process memory. Affects Model I/O |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-28941: Processing a maliciously crafted file may lead to a denial-of-service or potentially disclose memory contents. Affects Model I/O |

|||||||

| x | x | x | |||||

| CVE-2026-28942: Processing maliciously crafted web content may lead to an unexpected Safari crash. Affects WebKit |

|||||||

| x | x | x | x | ||||

| CVE-2026-28943: An app may be able to determine kernel memory layout. Affects IOHIDFamily |

|||||||

| x | x | x | x | x | x | x | |

| CVE-2026-28944: Processing maliciously crafted web content may lead to an unexpected process crash. Affects WebRTC |

|||||||

| x | x | x | |||||

| CVE-2026-28947: Processing maliciously crafted web content may lead to an unexpected Safari crash. Affects WebKit |

|||||||

| x | |||||||

| CVE-2026-28951: An app may be able to gain root privileges. Affects Kernel |

|||||||

| x | x | x | x | x | |||

| CVE-2026-28952: An app may be able to cause unexpected system termination. Affects Kernel |

|||||||

| x | x | x | x | ||||

| CVE-2026-28953: Processing maliciously crafted web content may lead to an unexpected process crash. Affects WebKit |

|||||||

| x | |||||||

| CVE-2026-28954: A maliciously crafted disk image may bypass Gatekeeper checks. Affects Kernel |

|||||||

| x | x | x | x | ||||

| CVE-2026-28956: Processing a maliciously crafted media file may lead to unexpected app termination or corrupt process memory. Affects AppleJPEG |

|||||||

| x | x | x | x | x | x | x | |

| CVE-2026-28957: An app may be able to capture a user's screen. Affects Status Bar |

|||||||

| x | x | x | |||||

| CVE-2026-28958: An app may be able to access sensitive user data. Affects WebKit |

|||||||

| x | x | x | |||||

| CVE-2026-28959: An app may be able to cause unexpected system termination. Affects APFS |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-28961: An attacker with physical access to a locked device may be able to view sensitive user information. Affects Network Extensions |

|||||||

| x | |||||||

| CVE-2026-28962: Processing maliciously crafted web content may disclose sensitive user information. Affects WebKit |

|||||||

| x | x | x | x | ||||

| CVE-2026-28963: An attacker with physical access may be able to use Visual Intelligence to access sensitive user data during iPhone Mirroring. Affects Screenshots |

|||||||

| x | |||||||

| CVE-2026-28964: An app may be able to access sensitive user data. Affects CoreAnimation |

|||||||

| x | x | ||||||

| CVE-2026-28965: A user may be able to view restricted content from the lock screen. Affects WidgetKit |

|||||||

| x | |||||||

| CVE-2026-28969: An app may be able to cause unexpected system termination. Affects IOKit |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-28971: A malicious iframe may use another website's download settings. Affects WebKit |

|||||||

| x | x | x | |||||

| CVE-2026-28972: An app may be able to cause unexpected system termination or write kernel memory. Affects Kernel |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-28974: An app may be able to cause a denial-of-service. Affects Spotlight |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-28976: An app may be able to gain root privileges. Affects UserAccountUpdater |

|||||||

| x | |||||||

| CVE-2026-28977: Processing a maliciously crafted file may lead to unexpected app termination. Affects ImageIO |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-28978: A malicious app may be able to break out of its sandbox. Affects Installer |

|||||||

| x | x | x | |||||

| CVE-2026-28983: A remote attacker may be able to cause a denial of service. Affects LaunchServices |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-28985: An attacker on the local network may be able to cause a denial-of-service. Affects mDNSResponder |

|||||||

| x | x | x | |||||

| CVE-2026-28986: An app may be able to cause unexpected system termination. Affects Kernel |

|||||||

| x | x | x | x | x | x | x | |

| CVE-2026-28987: An app may be able to leak sensitive kernel state. Affects Kernel |

|||||||

| x | x | x | x | x | x | x | |

| CVE-2026-28988: An app may be able to bypass certain Privacy preferences. Affects Accounts |

|||||||

| x | x | x | x | ||||

| CVE-2026-28990: Processing a maliciously crafted image may corrupt process memory. Affects ImageIO |

|||||||

| x | x | x | x | x | x | x | |

| CVE-2026-28991: An app may be able to cause a denial-of-service. Affects Accelerate |

|||||||

| x | x | x | x | x | |||

| CVE-2026-28992: An attacker may be able to cause unexpected app termination. Affects IOHIDFamily |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-28993: An app may be able to access user-sensitive data. Affects Shortcuts |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-28994: An attacker in a privileged network position may be able to perform denial-of-service attack using crafted Wi-Fi packets. Affects Wi-Fi |

|||||||

| x | x | x | x | x | x | x | |

| CVE-2026-28995: A malicious app may be able to break out of its sandbox. Affects App Intents |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-28996: An app may be able to access sensitive user data. Affects Storage |

|||||||

| x | x | x | x | x | x | x | |

| CVE-2026-39869: Processing an audio stream in a maliciously crafted media file may terminate the process. Affects Audio |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-39870: Processing a maliciously crafted image may corrupt process memory. Affects SceneKit |

|||||||

| x | x | x | |||||

| CVE-2026-39871: An app may be able to observe unprotected user data. Affects TV App |

|||||||

| x | x | x | |||||

| CVE-2026-43652: An app may be able to access protected user data. Affects Sandbox |

|||||||

| x | |||||||

| CVE-2026-43653: An attacker on the local network may be able to cause a denial-of-service. Affects mDNSResponder |

|||||||

| x | x | x | x | x | |||

| CVE-2026-43654: An app may be able to disclose kernel memory. Affects Kernel |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-43655: An app may be able to cause unexpected system termination or read kernel memory. Affects IOSurfaceAccelerator |

|||||||

| x | x | x | x | ||||

| CVE-2026-43656: Parsing a maliciously crafted file may lead to an unexpected app termination. Affects Quick Look |

|||||||

| x | x | x | x | x | |||

| CVE-2026-43658: Processing maliciously crafted web content may lead to an unexpected Safari crash. Affects WebKit |

|||||||

| x | x | x | x | x | |||

| CVE-2026-43659: An app may be able to access sensitive user data. Affects FileProvider |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-43660: Processing maliciously crafted web content may prevent Content Security Policy from being enforced. Affects WebKit |

|||||||

| x | x | x | x | x | x | ||

| CVE-2026-43661: Processing a maliciously crafted image may corrupt process memory. Affects ImageIO |

|||||||

| x | x | x | x | ||||

| CVE-2026-43666: An attacker on the local network may be able to cause a denial-of-service. Affects mDNSResponder |

|||||||

| x | x | x | x | x | x | x | x |

| CVE-2026-43668: A remote attacker may be able to cause unexpected system termination or corrupt kernel memory. Affects mDNSResponder |

|||||||

| x | x | x | x | x | x | x | x |

--

Johannes B. Ullrich, Ph.D. , Dean of Research, SANS.edu


Why we use CAPTCHAs

A few months ago, I implemented Cloudflare's Turnstile CAPTCHA on some pages. The reason for implementing these CAPTCHAs is obvious: Bots make up a large percentage of traffic and affect site performance.

So I figured it was a good time to look back and see how effective these CAPTCHAs are. The quick number: out of about 300 requests, only 1 passed the test, so roughly 99.7% of requests came from bots. And this is after we have been running this for a few months; some bots may have stopped scanning the page in the meantime.

But what about false positives? One false positive I noted from the login page was people clicking "Submit" on the login form before the CAPTCHA test was completed. This was easily fixed with a bit of JavaScript, which enabled the button only after a test was completed.

Some of the top offenders:

- 219.117.237.208 - resolves to 219.117.237.208.static.zoot.jp and appears to be some kind of spider

- 18.229.88.75 - an AWS host, also attempting to download our IP data

- 164.52.120.0/24 - Cloud provider in HK

- 2a03:2880:f806::/48 - Facebook Ireland

So far, I have received only a few complaints about false positives (aside from the now fixed login page issue).

Why I selected "Turnstile" over other CAPTCHA options:

- Cloudflare's Turnstile implementation appears to have fewer privacy issues than others, like Google reCAPTCHA

- They are, in my opinion, low impact for the user

- Implementing them on the site wasn't too difficult

- We already use Cloudflare as a CDN.

- They work well enough

CAPTCHAs can often be bypassed. The right CAPTCHA solution makes a bypass hard enough that the value of the data an attacker would get is not worth the effort.

--

Johannes B. Ullrich, Ph.D. , Dean of Research, SANS.edu


YARA-X 1.16.0 Release

YARA-X's 1.16.0 release brings 4 improvements and 4 bugfixes.

Didier Stevens

Senior handler

blog.DidierStevens.com


Another Universal Linux Local Privilege Escalation (LPE) Vulnerability: Dirty Frag

Less than two weeks after the public disclosure of the Copy Fail vulnerability (CVE-2026-31431), another local privilege escalation (LPE) vulnerability in the Linux kernel has been revealed. Referred to as "Dirty Frag," this vulnerability was discovered and reported by Hyunwoo Kim (@v4bel) [1]. In this diary, I will provide a brief background on Dirty Frag, and discuss its relationship to Copy Fail. I will then discuss how to mitigate Dirty Frag and outline recommended next steps for system owners.

The existence of Dirty Frag was revealed after the coordinated disclosure embargo was broken by an unrelated third party [1]. Just like Copy Fail [2], Dirty Frag allows an unprivileged local user to escalate to root on most major Linux distributions. Due to the premature disclosure of Dirty Frag, no CVE IDs were assigned [3].

Dirty Frag chains two distinct vulnerabilities:

- xfrm-ESP Page-Cache Write - residing in the IPsec ESP decryption fast paths (esp4, esp6)

- RxRPC Page-Cache Write - residing in the RxRPC module

Both sub-vulnerabilities share a common root cause: on a zero-copy send path where splice() plants a reference to a page cache page that an attacker only has read access to into the frag slot of the sender-side skb, the receiver-side kernel code performs in-place crypto on top of that frag. As a result, the page cache of files that an unprivileged user only has read access to (such as /etc/passwd or /usr/bin/su) is modified in RAM, and every subsequent read sees the modified copy [1].

While both Dirty Frag and Copy Fail belong to the same broad vulnerability class (page-cache corruption via kernel crypto in-place operations), they were discovered by different researchers and reside in different kernel subsystems. Copy Fail (CVE-2026-31431) was discovered by researchers at Theori and abuses the algif_aead module in the AF_ALG crypto interface. Dirty Frag, on the other hand, exploits the ESP and RxRPC in-place decryption fast paths directly.

Table 1 summarizes the key differences between the two vulnerabilities.

| Factors | Copy Fail (CVE-2026-31431) | Dirty Frag |
|---|---|---|
| Kernel Subsystem | AF_ALG / algif_aead | xfrm ESP (esp4, esp6) and RxRPC |
| CVE Assigned | Yes (CVE-2026-31431) | No (embargo broken before allocation) |
| Controlled Bytes Written | 4 bytes | 4 bytes (per sub-vulnerability) |
| Chaining Required | No (single vulnerability) | Yes (two sub-vulnerabilities chained) |
| Discoverer | Theori (Research Team) | Hyunwoo Kim (@v4bel) |
| Public Disclosure Date | 29 April 2026 | 7 May 2026 |

An interesting aspect of Dirty Frag is that the two chained sub-vulnerabilities cover each other's blind spots. As described in the write-up, neither the xfrm-ESP Page-Cache Write nor the RxRPC Page-Cache Write alone provides a sufficiently reliable primitive for full root escalation. However, when combined, the chained exploit achieves immediate root on most distributions.

The Dirty Frag vulnerability is significant (beyond its possible utility in Capture-the-Flag challenges). Firstly, the vulnerability affects many major Linux distributions with kernels dating back to approximately 2017, similar to Copy Fail. Secondly, due to the unfortunate embargo breach, working exploit code is publicly available. Thirdly, since no CVE identifier was assigned, automated workflows and systems that track vulnerabilities by CVE identifier will not flag Dirty Frag. Finally, in containerized environments, an adversary may be able to leverage Dirty Frag to overwrite relevant binaries in the base layer and escape to the host.

Currently, patched kernels and live patches are in active build and testing for several distributions [4, 5]. Until a patched kernel or live patch is installed, the following mitigations could be applied:

1. Denylist and unload vulnerable kernel modules

This is the most immediate mitigation available. The vulnerable modules (esp4, esp6, rxrpc) can be denylisted to prevent them from being loaded:

    # Unload the modules if they are currently loaded
    modprobe -r esp4 esp6 rxrpc

    # Denylist the modules to prevent them from being loaded automatically
    # (the modprobe.d directive for this is "blacklist")
    echo "blacklist esp4" >> /etc/modprobe.d/dirtyfrag-mitigation.conf
    echo "blacklist esp6" >> /etc/modprobe.d/dirtyfrag-mitigation.conf
    echo "blacklist rxrpc" >> /etc/modprobe.d/dirtyfrag-mitigation.conf

Important caveat: Denylisting esp4 and esp6 will disable IPsec ESP functionality. If your environment relies on IPsec VPN tunnels or IPsec-encrypted communication, this mitigation will cause disruption. Similarly, unloading rxrpc will affect services that depend on RxRPC (such as AFS filesystems). System administrators should assess the impact before applying this mitigation.
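To support that impact assessment, it can help to see which of the three modules are actually loaded and whether anything holds a reference to them. The sketch below reads `/proc/modules`, where the third field is the module's use count. The function name `dirtyfrag_loaded_modules` and its optional file argument are illustrative assumptions, not part of any advisory.

```shell
#!/bin/sh
# Print each of esp4, esp6 and rxrpc that appears in a /proc/modules-style
# listing, together with its reference count (third field).
# The function name and the optional file argument are hypothetical helpers.
dirtyfrag_loaded_modules() {
    modules_file="${1:-/proc/modules}"
    awk '$1 == "esp4" || $1 == "esp6" || $1 == "rxrpc" {
        print $1, "refcount=" $3
    }' "$modules_file"
}
```

Calling `dirtyfrag_loaded_modules` with no argument reads the live `/proc/modules`. No output means none of the three modules is loaded. A non-zero count for `esp4` or `esp6` may indicate active IPsec use, in which case the caveat above deserves a closer look before unloading.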

2. Apply live patches where available

CloudLinux KernelCare live patches are being made available for affected CloudLinux versions [4]. This allows patching without rebooting, which may be preferable for production environments where downtime windows are limited.

3. Install patched kernels from testing repositories

AlmaLinux has published patched kernels in their testing repository [5]. Once stable kernels are released for your distribution, apply them promptly and reboot.

4. Revert denylists after patching

After a patched kernel is installed and the system has been rebooted, the denylists can be removed:

rm /etc/modprobe.d/dirtyfrag-mitigation.conf

Dirty Frag joins a growing list of universal Linux LPE vulnerabilities that exploit kernel page-cache handling or memory management primitives. Notable related vulnerabilities include:

- Dirty COW (CVE-2016-5195): Exploited a race condition in copy-on-write memory handling to modify read-only file mappings

- Dirty Pipe (CVE-2022-0847): Exploited uninitialized flags in pipe_buffer to overwrite page cache pages of read-only files

- Copy Fail (CVE-2026-31431): Exploited AF_ALG crypto interface to write controlled bytes into page cache

It appears that kernel optimizations which perform in-place operations on shared page-cache-backed pages without verifying exclusive ownership introduce exploitable primitives (the ability to corrupt the global page cache by tricking the kernel into treating a shared/read-only page as a private, writable buffer). This suggests that closer attention to zero-copy and in-place operation paths in the kernel is required.

As Dirty Frag does not have a CVE ID yet, defenders have to rely on distribution advisories and direct monitoring of security mailing lists rather than automated CVE-based alerting. If you have not yet addressed Copy Fail (CVE-2026-31431), now would be a good time to treat both vulnerabilities as a combined remediation effort, given their similarity and overlapping mitigation steps.

Update - Dirty Frag has now been assigned CVE IDs: CVE-2026-43284 for the first vulnerability [6], and CVE-2026-43500 for the second one [7].

References:

[1] https://github.com/V4bel/dirtyfrag/blob/master/assets/write-up.md

[2] https://xint.io/blog/copy-fail-linux-distributions

[3] https://www.openwall.com/lists/oss-security/2026/05/07/8

[4] https://blog.cloudlinux.com/dirty-frag-mitigation-and-kernel-update

[5] https://almalinux.org/blog/2026-05-07-dirty-frag/

[6] https://www.openwall.com/lists/oss-security/2026/05/08/7

[7] https://www.openwall.com/lists/oss-security/2026/05/08/8

-----------

Yee Ching Tok, Ph.D., ISC Handler


An Adaptive Cyber Analytics UI for Web Honeypot Logs [Guest Diary]

[This is a Guest Diary by Eric Roldan, an ISC intern as part of the SANS.edu BACS program]

Through the expansion of Large Language Models (LLMs), cybersecurity has exploded with a variety of tools for both offensive and defensive purposes. A majority of software and cyber tools are integrating Artificial Intelligence (AI) solutions into their applications, largely in the form of chatbots, automation tools through Model Context Protocol (MCP), or ingestion to prompt response type interfaces.

An overlooked and underestimated aspect of AI that is slowly emerging is the creation of bespoke user interfaces (UI). Simply put, that is a UI custom-fit on the fly to the specific needs and data the user provides. Because these models can ingest large amounts of data, they can orchestrate the UI elements best suited to the ingested dataset.

Rather than the user having to adjust their queries or layouts for the logs that they are analyzing, the LLM will determine the proper UI elements to give to the user. This allows the user to focus on analyzing rather than tool setup.

Across days of web traffic on the DShield web honeypot [2], the intent behind the interactions varies widely. Some days, actors may focus only on scanning and recon. Other days may be heavy on stealing credentials or trying to land a web shell exploit.

Just as an analyst would try to identify patterns and then use their discretion to pick the next appropriate 'grep', 'jq', or similar pattern-matching command-line tool, the LLM does the same.

Before this type of bespoke UI in cyber analytics, analysts had to spend extensive time and energy working out what to look for and how to use the appropriate tools. With LLMs able to do this heavy lifting, more people will be able to recognize attacks on their web servers with little to no cyber experience.

When developers have to manage feature implementations, documentation updates, meetings (which are always productive of course...), and dreaded bugs - security and active monitoring become an afterthought. To make the internet a safer place, we have to lower the barrier to entry for recognizing web attacks.

Okay, enough selling you on how much potential this has. Let's talk about how it actually works.

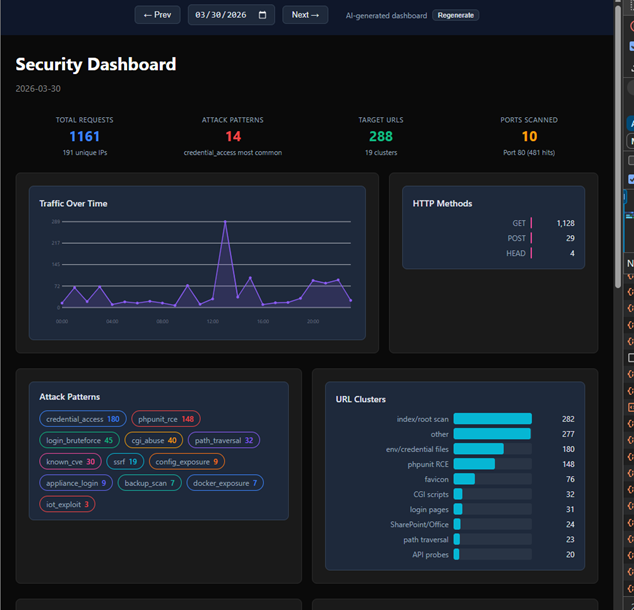

It works like this: the system reads your DShield web honeypot log file, then a Python analyzer goes through the entries and turns them into a clean summary of what happened instead of dumping raw attacker text into the AI. That summary includes things like top IPs, top URLs, time patterns, and tags for probe/attack types such as WordPress probes, SSRF, path traversal, CGI abuse, and other recognizable patterns. Then Claude looks at the cleaned summary and writes a React dashboard component that fits the shape of the attack activity for that day, so the UI can change depending on whether the logs are mostly one big campaign or a mix of background internet noise.
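The summarization step described above might look roughly like the following Python sketch. This is not the author's analyzer: the JSON-lines input, the field names sip and url, and the three tag patterns are all assumptions chosen for illustration.

```python
import json
import re
from collections import Counter

# Illustrative tag patterns only; a real analyzer would need a broader set.
TAG_PATTERNS = {
    "wordpress_probe": re.compile(r"/wp-(login|admin|content|includes)", re.I),
    "path_traversal": re.compile(r"\.\./|%2e%2e", re.I),
    "cgi_abuse": re.compile(r"/cgi-bin/", re.I),
}


def summarize(log_lines, top_n=5):
    """Reduce honeypot entries to counts and tags for the model to consume."""
    ips, urls, tags = Counter(), Counter(), Counter()
    for line in log_lines:
        try:
            entry = json.loads(line)
        except json.JSONDecodeError:
            continue  # skip malformed lines rather than failing the whole run
        if not isinstance(entry, dict):
            continue
        url = str(entry.get("url", ""))
        ips[str(entry.get("sip", "unknown"))] += 1
        urls[url] += 1
        for tag, pattern in TAG_PATTERNS.items():
            if pattern.search(url):
                tags[tag] += 1
    return {
        "total": sum(ips.values()),
        "top_ips": ips.most_common(top_n),
        "top_urls": urls.most_common(top_n),
        "tags": dict(tags),
    }
```

The point of returning only counts and tags is that the downstream prompt stays small and its shape stays predictable, whatever the attackers sent that day.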

The safe part is that the LLM never gets the raw malicious strings directly, and the generated UI never gets to run loose in the main page. Instead, the app serves the generated dashboard through a backend API, caches it so it does not constantly change, and renders it inside a sandboxed iframe. The system validates the generated code and, if it is broken, falls back to a static dashboard. So the whole flow is basically: logs come in -> analyzer summarizes them -> Claude generates a matching UI -> frontend loads it safely and pulls chart data from the backend.
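The cache, validate, and fall-back flow in the paragraph above can be sketched as follows. Everything here is hypothetical: the generate callable stands in for the LLM call, the checks in looks_valid are placeholder heuristics rather than the project's real validation, and STATIC_DASHBOARD stands in for the static fallback.

```python
import hashlib
import json

# Stand-in for the static dashboard served when generation fails.
STATIC_DASHBOARD = "export default function Dashboard() { return null; }"
_cache = {}


def looks_valid(component_source):
    """Placeholder sanity checks on generated component source (assumed rules)."""
    if not component_source or "export default" not in component_source:
        return False
    # Balanced braces is a cheap proxy for "not obviously truncated".
    return component_source.count("{") == component_source.count("}")


def dashboard_for(summary, generate):
    """Return the dashboard for this summary, generating it at most once."""
    key = hashlib.sha256(
        json.dumps(summary, sort_keys=True).encode("utf-8")
    ).hexdigest()
    if key not in _cache:
        try:
            source = generate(summary)
        except Exception:
            source = ""  # treat a failed generation like invalid output
        _cache[key] = source if looks_valid(source) else STATIC_DASHBOARD
    return _cache[key]
```

One design choice worth noting: this sketch caches the fallback too, so a failed generation is not retried for the same summary. Whether that is desirable depends on how expensive and how flaky the generation step is.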

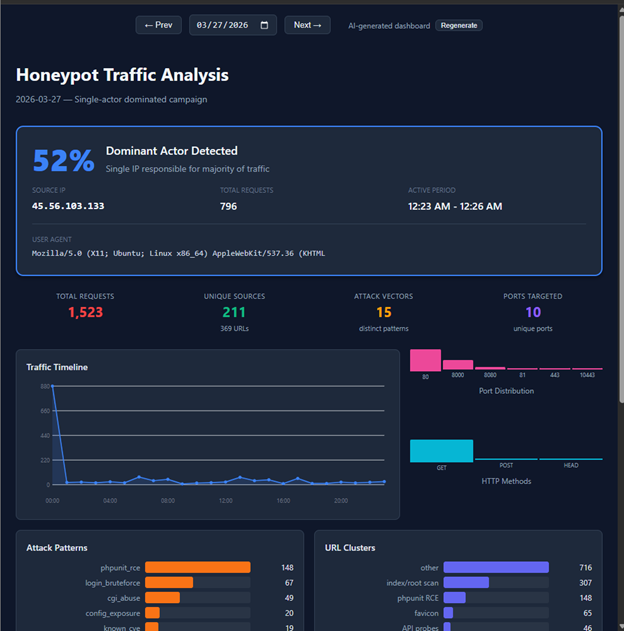

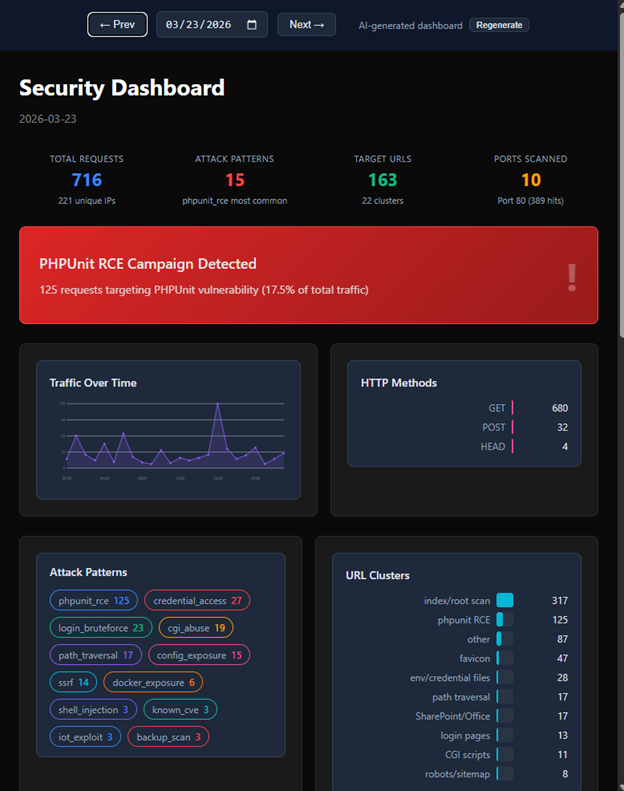

Let's take a look at some examples now! On days where there is more noise, we do not see any dominant patterns highlighted in the UI.

However, on days where there is a clear pattern from certain actors we see an immediate highlight…

Furthermore, it is able to recognize the most obvious attack signatures (or those that would be obvious to an experienced analyst) and highlight them at the very top of the dashboard.

There are sometimes some interesting quirks like the LLM creating a dashboard with light mode instead of dark mode.

Nonetheless, it is interesting to see how the LLM adapts to each day’s attack logs. I imagine that if I could “vibe code” this idea in a few hours, it could become a full-blown platform and toolkit for major organizations and analysts. So yea…I didn’t write the code for all this madness. I simply took a problem that I constantly face when looking at attack logs (what is it that I’m actually looking for?) and created a unique bespoke UI for each day’s scenario.

Shout out to Claude Code for agentically writing the repo, which can be found here [1].

Shout out to ChatGPT for helping me write the ‘how it works’ section of this blog.

And a special shout out to my Internship mentor Guy Bruneau for helping me think bigger in terms of recognizing interesting attacks on my webhoneypot.

Be sure to subscribe to my YouTube channel for more edgy tech content and cyber insights.

youtube.com/@gnarcoding

[1] https://github.com/gnarcoding/bespoke-ui-cyber-analytics/

[2] https://isc.sans.edu/tools/honeypot/

[3] https://www.sans.edu/cyber-security-programs/bachelors-degree/

-----------

Guy Bruneau IPSS Inc.

My GitHub Page

Twitter: GuyBruneau

gbruneau at isc dot sans dot edu


SSL.com rotates their root certificate today

I got an email from SSL.com last night: they are rotating out their root certificate today (May 5, 2026). This is normal, business-as-usual stuff for a CA, but certificates get used for all kinds of things, and sometimes they aren't used like they should be, so sometimes hiccups happen.

If you are using them for basic cert+website stuff, there's no need to worry. But if you go past that basic implementation, you should read their note to make sure that this change won't affect any of your services. Even if you don't use SSL.com, it's a good read, as every certificate expires, which means that everyone's root cert rotates out eventually - so forewarned is forearmed and all that.

In particular (from the email):

- If you have pinned trust anchors, custom trust stores, or certificate validation logic tied to the 2016 roots, please audit those configurations promptly to avoid disruptions.

- Use cross-certificates. If you need backward compatibility with the 2016 root hierarchy during the transition, cross-certificates can bridge the gap.

- Migrate to dedicated Client Certificates. These are purpose-built for client authentication and are unaffected by Google Chrome's upcoming server authentication requirements, which impact SSL/TLS certificates with the ClientAuth EKU.
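For the first point, one way to start an audit is to compare the fingerprints you have pinned against the fingerprints of the roots being retired. The Python sketch below is only an illustration and does not come from the SSL.com notice: it assumes your pins are SHA-256 fingerprints of the whole certificate (many deployments pin the public key instead, which needs a different calculation), and the function names are hypothetical.

```python
import hashlib
import ssl


def sha256_fingerprint(pem_cert):
    """SHA-256 fingerprint (lowercase hex) of a PEM certificate's DER bytes."""
    der = ssl.PEM_cert_to_DER_cert(pem_cert)
    return hashlib.sha256(der).hexdigest()


def pins_needing_review(pinned_fingerprints, retiring_root_pems):
    """Return pinned fingerprints that match a root being rotated out."""
    retiring = {sha256_fingerprint(pem) for pem in retiring_root_pems}
    # Accept both "AB:CD:..." and "abcd..." styles of fingerprint.
    normalized = {fp.replace(":", "").lower() for fp in pinned_fingerprints}
    return sorted(normalized & retiring)
```

Any fingerprint this returns is a pin tied to an outgoing root and is a candidate for the configuration review the notice recommends.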

Their full post is here:

https://www.ssl.com/article/what-ssls-root-migration-means-for-you

===============

Rob VandenBrink


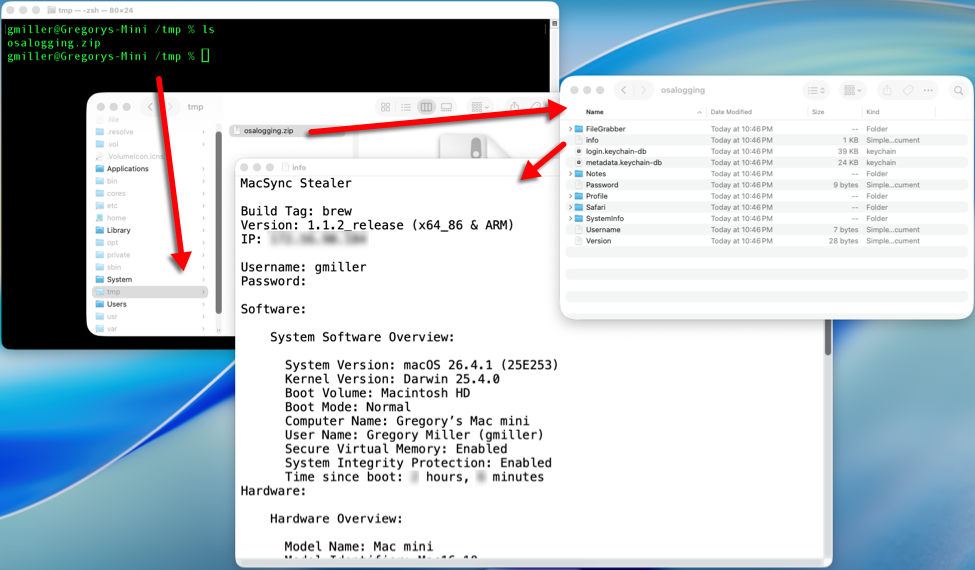

Cleartext Passwords in MS Edge? In 2026?

Yup, that is for real.

For me, this started with a post on X at hxxps://x.com/intcyberdigest/status/2051406295828250963?s=61 , which highlighted research by @L1v1ng0ffTh3L4N that found exactly this issue. Edge keeps all of your saved browser passwords in clear text in memory, even if you haven't used them in this session, y'know, just in case.

I figured, it couldn't be that easy, right? But like so many things, yes, yes it was.

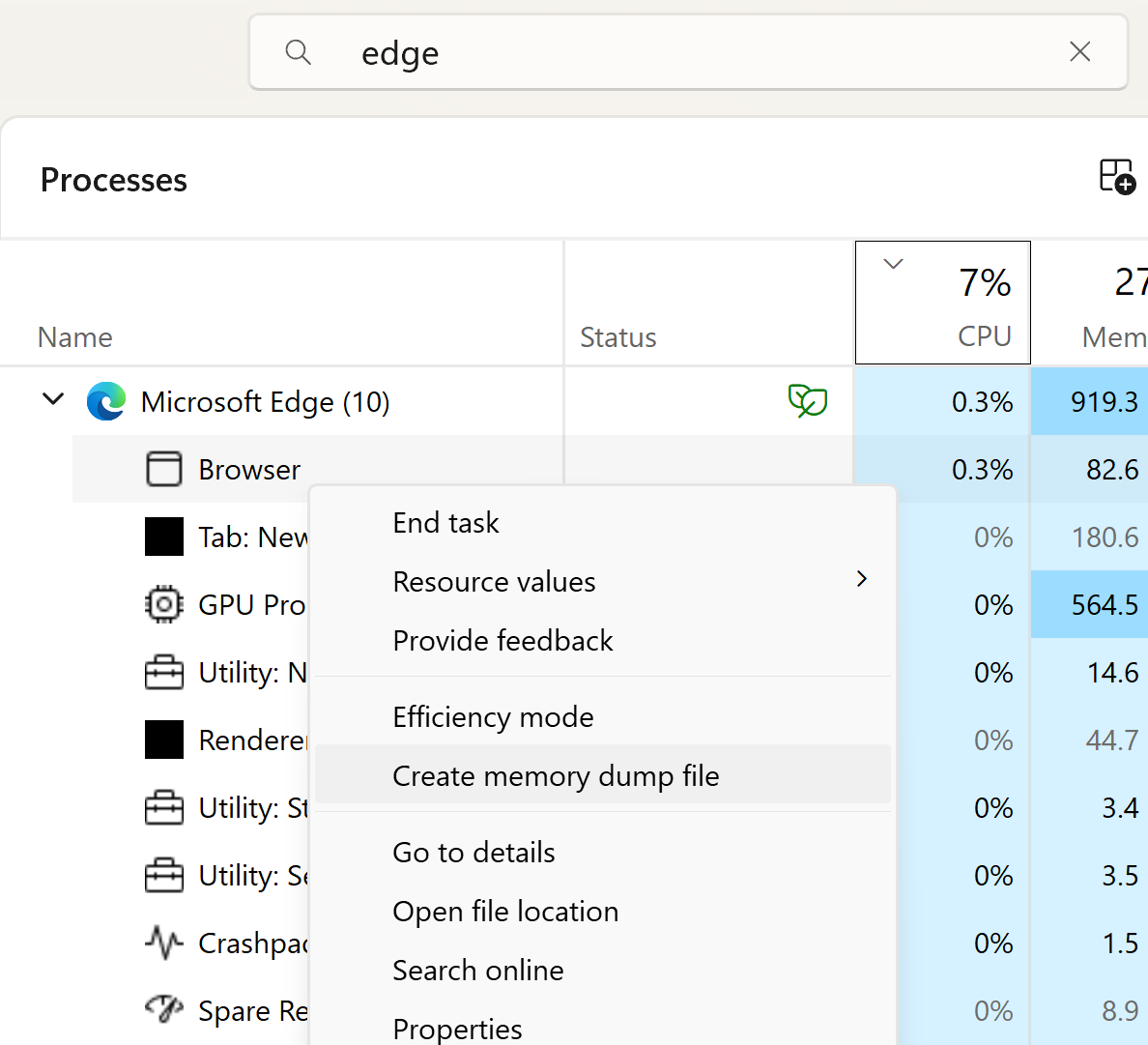

To reproduce this:

- Open Edge. Don't browse anywhere, just open it

- Flip out to Task Manager, search for Edge, then expand that task

- Highlight the "browser" sub-task, right click, and choose "Create Memory Dump"

- Navigate to where the DMP file is stored

If you haven't used strings before, you're in for a treat. Strings is of course just part of most Linux distros, but you can easily get a copy for Windows as part of MS Sysinternals, at https://learn.microsoft.com/en-us/sysinternals/downloads/strings

Now let's look for passwords! You could use strings and look for known credentials, just search for a known password and you will certainly find it. Or you can take advantage of the format of the saved data:

<url of the site><protocol>< ><userid>< ><password>

So, searching for "<tld><protocol>", which in most cases is "comhttps" (no spaces) will find most of them, and they'll all be in one nicely formatted group no less. The command for that will be:

strings -n 8 msedge.DMP | find "comhttps"
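For readers without strings to hand, the same extract-and-filter idea can be sketched in a few lines of Python. This is an approximation rather than a replacement for the tool: it only finds runs of printable ASCII (it does not look for UTF-16 text), and the function names are made up for this example.

```python
import re


def printable_strings(data, min_len=8):
    """Yield runs of printable ASCII at least min_len bytes long."""
    pattern = re.compile(rb"[\x20-\x7e]{%d,}" % min_len)
    for match in pattern.finditer(data):
        yield match.group().decode("ascii")


def strings_containing(data, marker, min_len=8):
    """Keep only the extracted strings that contain the marker text."""
    return [s for s in printable_strings(data, min_len) if marker in s]


# Typical use (assumption: the dump fits comfortably in memory):
#   with open("msedge.DMP", "rb") as handle:
#       hits = strings_containing(handle.read(), "comhttps")
```

Memory dumps can be large, so for anything beyond a quick check it would be worth reading the file in chunks instead of all at once.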

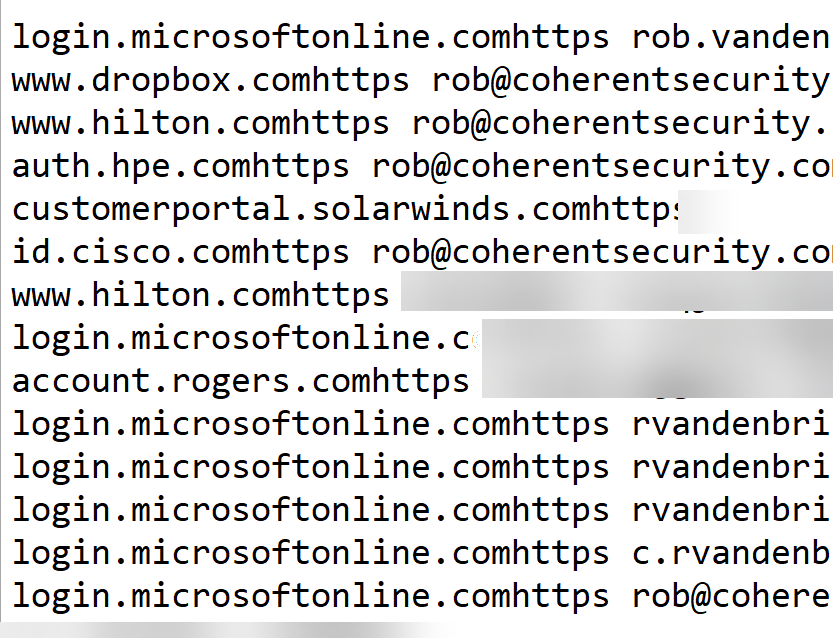

Looking a bit further down in the output (since comhttps matches more in the memory dump than just the credential list), I see:

As you can see, Edge isn't my primary browser, but I do use it a fair bit for Azure work. And yes, this is a real session, so I cropped/blurred out sensitive accounts and of course passwords.

It really is that easy.

And the ironic thing? To view these same credentials in the browser, there's a whole security theatre process where Edge wants your biometrics as proof before disclosing even the userid and site names - you know, "for security". All the while, the whole shot is in clear text, free for the looking ..

Also as noted in the X post, Microsoft classifies this as "intended behaviour". I'm not sure what manager or lawyer decided that, hopefully it wasn't anyone in their security team.

Anyway, if the intent of this is to get me to use Firefox or Chrome, it's working!!

If you have seen a similar "strong front door / open window" security example in your forensics, please share in the comments (keeping any NDAs etc. in mind, of course).

=================

Update:

Tom Jøran Sønstebyseter Rønning (@L1v1ng0ffTh3L4N) just posted with more detail on his research at: x.com/l1v1ng0ffth3l4n/status/2051308329880719730 (follow the comment thread for all the info)

The main thrust of it remains the same. The logged-in Windows user can dump all of their stored Edge credentials with no additional rights, which means that any malware that user executes also has those credentials for the asking.

===============