Investigating Gaps in your Windows Event Logs

I recently TA'd the SANS SEC 504 class (Hacker Tools, Techniques, Exploits, and Incident Handling), and one of the topics we covered was attackers "editing" Windows event logs to cover their tracks, especially the Windows Security Event Log.

The method to do this is:

- Set the Windows Event Log service state to "disabled"

- Stop the service

- Copy the evtx log file to a temporary location

- Edit the copy

- Copy that edited log file back to the original location

- Set the service back to autostart

This method was brought into popular use by the "Shadow Brokers" leak back in 2017, and has been refined nicely for us pentesters since then. The attack is easily scripted, so the whole sequence takes just seconds.

Often an attacker or malware will create a new user with admin rights, create a service, or perform other tasks that give them elevated rights or persistence, and these things generally show up in the Security Event Log. Editing the Security Event Log covers their tracks nicely, so it is part of the attacker's toolkit these days.

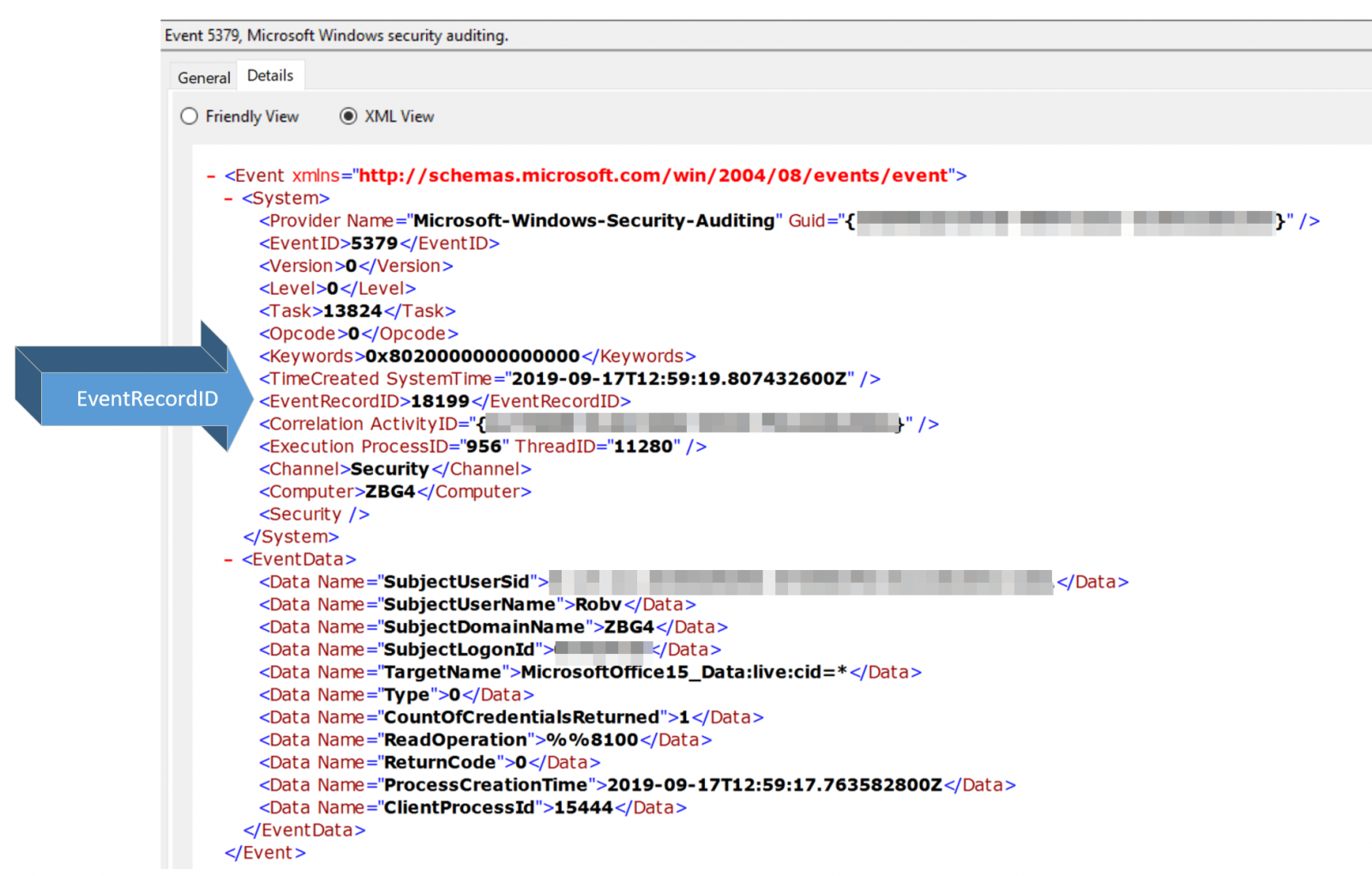

Since event log entries are numbered and sequential, any deletions show up pretty nicely as "holes" in that sequence. Of course, seeing that "Event RecordID" number for a single event involves 3 mouse clicks (see below), so finding such a hole in your event log manually is pretty much impossible.

So it's a neat attack, combined with a complex detection method, which of course got me thinking about using PowerShell for detection and automating it to within an inch of its life...

To automate this task, I wrote a quick-and-dirty PowerShell script to collect the security event log, then rip through the events looking for Event Record IDs that are not sequential. As always, feel free to edit this to work better for your environment. The majority of the execution time for this is reading the actual log, so I didn't try to optimize the sequence checking at all (that part takes seconds, even for a hefty sized log).

There are two caveats:

- Be sure that you have sufficient rights to read the log that you're targeting with this method - there are no sequence errors if you can't read any events :-)

- Reading an entire event log file into a list can be a lengthy and CPU-intensive process. You should budget for that load when deciding when to run scripts like this.

Also as usual, I'll park this in my GitHub at https://github.com/robvandenbrink. Look for updates there over the next few days - I'll try to get a check for log "wrapping" into this code.

    # Declare the array to hold "boundary" events on either side of a gap in the log
    $BoundaryEvents = @()
    $SecEvents = Get-WinEvent -LogName "Security"
    # Get-WinEvent returns the newest event first, so RecordIDs should count down by 1
    $RecID0 = $SecEvents[0].RecordID

    foreach ($evt in $SecEvents) {
        # check - is this the first event?
        if ($RecID0 -ne $evt.RecordID) {
            if (($RecID0 - $evt.RecordID) -eq 1) {
                $RecID0 = $evt.RecordID
            }
            else {
                # save the event record IDs that bound the hole in the event log
                # also save them to the "list of holes"
                $BEvents = New-Object -TypeName psobject
                $BEvents | Add-Member -NotePropertyName before -NotePropertyValue $RecID0
                $BEvents | Add-Member -NotePropertyName after -NotePropertyValue $evt.RecordID

                $BEvents | ft
                $BoundaryEvents += $BEvents
                $RecID0 = $evt.RecordID
            }
        }
    }

    # this kicks the output to STDOUT
    # edit this code to handle the output however it best suits your needs

    if ($BoundaryEvents.Count -eq 0) {
        Write-Host "No gaps in log"
    }
    else {
        Write-Host "Log Gap Summary:`r"
        $BoundaryEvents | ft
    }
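If holding the whole log in a list is a concern (the second caveat above), one option is to stream events through the pipeline and only remember the previous Record ID. The block below is a minimal sketch of that idea, not the script posted above: the function name `Find-RecordIdGap` and the sample Record IDs are made up for illustration, and it only assumes that each input object has a `RecordId` property.

```powershell
# Hypothetical helper (not part of the original script): reports gaps in a
# stream of objects carrying a RecordId property, without storing the stream.
function Find-RecordIdGap {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory, ValueFromPipeline)]
        [psobject]$InputObject
    )
    begin {
        $previous = $null   # RecordId of the last event seen
    }
    process {
        $current = [long]($InputObject.RecordId)
        # Neighbouring events should differ by exactly 1. Using the absolute
        # difference tolerates both newest-first and oldest-first input.
        if ($null -ne $previous -and [math]::Abs($previous - $current) -ne 1) {
            [pscustomobject]@{
                Before = $previous
                After  = $current
            }
        }
        $previous = $current
    }
}

# Simulated events with made-up Record IDs: 1004 through 1002 are "missing".
$sample = 1006, 1005, 1001, 1000 | ForEach-Object { [pscustomobject]@{ RecordId = $_ } }
$gaps = @($sample | Find-RecordIdGap)
$gaps
```

Against a real log the same function should be usable as `Get-WinEvent -LogName Security | Find-RecordIdGap`. Reading the log will still dominate the run time, so this mainly trades memory, not speed.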

This can certainly be run against a remote computer, by changing one line:

    $SecEvents = Get-WinEvent -LogName "Security" -ComputerName $RemoteHostNameorIP

However, if you want to run this against a list of computers or all computers in a domain, consider the time involved - just on my laptop, reading the Security Event Log into a variable takes a solid 3 minutes. While a busy domain controller or member server will have more CPU, it'll also have much larger logs. In many domains, checking all the hosts in sequence simply doesn't make sense. If you plan to run this daily, do the math first - it could easily take more than 24 hours to run domain-wide. It's smarter to run this on all the target hosts at a scheduled time, and write all of the output to a central location - that gets the job done quickly and neatly, usually in a short after-hours window.
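To make "do the math" concrete, here is a rough back-of-the-envelope sketch. The helper name `Get-SerialScanHours` and the host count of 600 are made up for illustration. The 3 minutes per host is just the laptop timing quoted above, and real servers will differ.

```powershell
# Hypothetical helper: rough wall-clock estimate for scanning hosts one after another.
function Get-SerialScanHours {
    param(
        [int]$HostCount,
        [double]$MinutesPerHost
    )
    # total minutes, converted to hours
    ($HostCount * $MinutesPerHost) / 60
}

# 600 hosts is an invented figure; 3 minutes is the laptop timing quoted above.
$hours = Get-SerialScanHours -HostCount 600 -MinutesPerHost 3
"Sequential run: $hours hours"
```

At 3 minutes per host, a sequential run crosses the 24 hour mark at 480 hosts, which is why running the check locally on each host in parallel scales better.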

So for instance, change the summary "write-host" section in the script above to include:

    $fname = "\\sharename\log-gaps\" + $env:COMPUTERNAME + "-log-gaps.csv"
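One way the rest of that summary section might look is sketched below. This is an assumption, not the original code: the function name `Export-LogGap`, its parameters, and the temp directory used for the demo are all hypothetical. It also uses `[Environment]::MachineName` in place of `$env:COMPUTERNAME` so the sketch runs on any platform. The file is written only when gaps exist, so an empty collection directory still means "nothing found".

```powershell
# Hypothetical helper: write gap records to a per-host CSV, but only when gaps exist.
function Export-LogGap {
    param(
        [object[]]$BoundaryEvents,                   # gap objects with before/after properties
        [Parameter(Mandatory)][string]$Directory,    # e.g. the \\sharename\log-gaps share
        [string]$HostName = [Environment]::MachineName
    )
    # No gaps: write nothing, return nothing
    if ($null -eq $BoundaryEvents -or $BoundaryEvents.Count -eq 0) { return }

    $fname = Join-Path $Directory "${HostName}-log-gaps.csv"
    $BoundaryEvents | Export-Csv -Path $fname -NoTypeInformation
    $fname   # return the path that was written
}

# Demo against a temp directory with a made-up gap record
$demoDir = Join-Path ([IO.Path]::GetTempPath()) "log-gaps-demo"
New-Item -ItemType Directory -Path $demoDir -Force | Out-Null
$gap = [pscustomobject]@{ before = 1005; after = 1001 }
$written = Export-LogGap -BoundaryEvents @($gap) -Directory $demoDir
$nothing = Export-LogGap -BoundaryEvents @() -Directory $demoDir
```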

You'll also likely want to:

- keep the script in a central location as well, so that if you update it there's no need to do any version checking.

- set the permissions on that collection directory appropriately - you don't want to give your attacker read or delete rights to this directory! It needs to be globally writable, but only admins need to be able to view or read from it.

- note that using this approach, the \\sharename\log-gaps directory should always be empty. If you ever see a file, you have some investigating to do!

- be sure that local log files actually have a "lifetime" greater than 24 hours - if they overwrite or wrap in less than a day, you'll want to fix that

- finally, automate the collection of any files that show up here. You don't want tomorrow night's script to either fail or overwrite the results from yesterday

Using this approach, each affected host will create its own summary file. If you wanted to combine them:

- update the original script to include a $hostname field

- after the collection, "cat" all the files together into one consolidated file, then run that through "sort | uniq"

- Or, if you want to get fancy, you could send log "gaps" directly to your SIEM as they occur (the $BEvents variable)
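The "cat, then sort | uniq" step can be sketched in PowerShell as well. The block below is an illustration under a few assumptions: the function name `Merge-LogGapFile` is hypothetical, the per-host files follow the `<host>-log-gaps.csv` naming shown earlier with `before` and `after` columns, and the host name is recovered from the file name instead of being added by the collection script.

```powershell
# Hypothetical helper: merge every per-host CSV in a directory into one
# de-duplicated list, tagging each row with the host name from the file name.
function Merge-LogGapFile {
    param([Parameter(Mandatory)][string]$Directory)

    Get-ChildItem -Path $Directory -Filter '*-log-gaps.csv' | ForEach-Object {
        $hostName = $_.BaseName -replace '-log-gaps$', ''
        Import-Csv -Path $_.FullName | ForEach-Object {
            [pscustomobject]@{ hostname = $hostName; before = $_.before; after = $_.after }
        }
    } | Sort-Object -Property hostname, before, after -Unique   # CSV values sort as strings
}

# Demo with made-up data: HOSTA has a duplicated row, HOSTB has one row
$mergeDir = Join-Path ([IO.Path]::GetTempPath()) "log-gaps-merge-demo"
New-Item -ItemType Directory -Path $mergeDir -Force | Out-Null
Get-ChildItem -Path $mergeDir -Filter '*.csv' | Remove-Item

$rowA = [pscustomobject]@{ before = 1005; after = 1001 }
@($rowA, $rowA) | Export-Csv -Path (Join-Path $mergeDir 'HOSTA-log-gaps.csv') -NoTypeInformation
[pscustomobject]@{ before = 220; after = 210 } |
    Export-Csv -Path (Join-Path $mergeDir 'HOSTB-log-gaps.csv') -NoTypeInformation

$merged = @(Merge-LogGapFile -Directory $mergeDir)
$merged
```

The merged objects can then be piped to `Export-Csv` for a single consolidated file, or handed to whatever forwards events to your SIEM.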

If you ever have a file in the data collection directory, either you have a wrapped logfile, or you have an incident to deal with - as discussed you can easily integrate this into your SIEM / IR process.

Also, if you are using this method to automate parsing the Security Event log, keep in mind that there are other logs that might be of interest as well.

In using methods like this, have you found any unexplained "gaps" in your logs that turned into incidents (or just remained unexplained)? Please share any war stories that aren't protected by NDA using our comment form!

===============

Rob VandenBrink

rob <at> coherentsecurity.com
