What's My (File)Name?

Modern malware implements a lot of anti-debugging and anti-analysis features. Today, when a malware sample is spread in the wild, chances are that it will be automatically sent into an analysis pipeline and a sandbox. To be analyzed in a sandbox, a sample must be "copied" into it and executed, either manually or automatically. When people start analyzing a suspicious file, they usually call it "sample.exe", "malware.exe" or "suspicious.exe". That's not always a good idea, because the malware can detect it and realize "I'm being analyzed".

From a malware point of view, it's easy to detect this situation. Microsoft offers developers thousands of API calls that can be used for "malicious purposes". Let's have a look at GetModuleFileName()[1]. This API call retrieves the fully qualified path of the file that contains the specified module, which must have been loaded by the current process. Usually, a "module" refers to a DLL, but in the Microsoft ecosystem the main program is also a "module" (a DLL is also a PE file, just one that exports functions).

If you read the API description carefully, it expects 3 parameters, but the first one (hModule) can be set to NULL:

"If this parameter is NULL, GetModuleFileName retrieves the path of the executable file of the current process."
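For comparison, here is a minimal sketch, assuming .NET 6 or later, of how managed code can obtain the same path without P/Invoke. Environment.ProcessPath returns the full path of the executable that started the current process (or null if it is unavailable), and Process.MainModule exposes the executable as a "module", which matches the point made above. The class and method names (SelfPath, GetExecutablePath) are hypothetical. One caveat: if the program is launched through the `dotnet` host (e.g. `dotnet app.dll`), ProcessPath returns the host's path, so this only reveals the sample's own name when it runs as a standalone executable.

```csharp
#nullable enable
using System;
using System.Diagnostics;
using System.IO;

// Hypothetical helper: managed equivalent of GetModuleFileName(NULL, ...)
static class SelfPath
{
    // Full path of the executable that started the current process, or null.
    public static string? GetExecutablePath()
    {
        // Environment.ProcessPath is available in .NET 6 and later.
        string? path = Environment.ProcessPath;
        if (path is null)
        {
            // Fallback: the main module of the current process,
            // i.e. the executable itself seen as a "module".
            using Process current = Process.GetCurrentProcess();
            path = current.MainModule?.FileName;
        }
        return path;
    }

    static void Main()
    {
        string? path = GetExecutablePath();
        Console.WriteLine($"Full path: {path}");
        Console.WriteLine($"Basename : {Path.GetFileName(path)}");
    }
}
```

The basename obtained this way can be fed into the same name comparison as the P/Invoke version below.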

Let's write a small C# program:

```csharp
using System;
using System.IO;
using System.Runtime.InteropServices;

class Program
{
    // P/Invoke declaration for GetModuleFileName (resolves to GetModuleFileNameW)
    [DllImport("kernel32.dll", CharSet = CharSet.Unicode, SetLastError = true)]
    static extern uint GetModuleFileName(IntPtr hModule, [Out] char[] lpFilename, uint nSize);

    static void Main(string[] args)
    {
        const int maxPath = 260;
        char[] buffer = new char[maxPath];

        // hModule = NULL: get the path of the current process executable
        uint length = GetModuleFileName(IntPtr.Zero, buffer, (uint)buffer.Length);
        if (length == 0 || length >= buffer.Length)
        {
            // The call failed, or the path was truncated (longer than MAX_PATH)
            return;
        }

        // Get the executable's basename
        string fullPath = new string(buffer, 0, (int)length);
        string exeName = Path.GetFileName(fullPath);

        // List of names typically given to samples under analysis
        string[] suspiciousNames = {
            "sample.exe",
            "malware.exe",
            "malicious.exe",
            "suspicious.exe",
            "test.exe",
            "submitted_sample.exe",
            "file.bin",
            "file.exe",
            "virus.exe",
            "program.exe"
        };

        foreach (var name in suspiciousNames)
        {
            if (string.Equals(exeName, name, StringComparison.OrdinalIgnoreCase))
            {
                // Executable name matched, silently exit!
                return;
            }
        }

        Console.WriteLine($"I'm {exeName}, looks good! Let's infect this host! }}:->");
    }
}
```

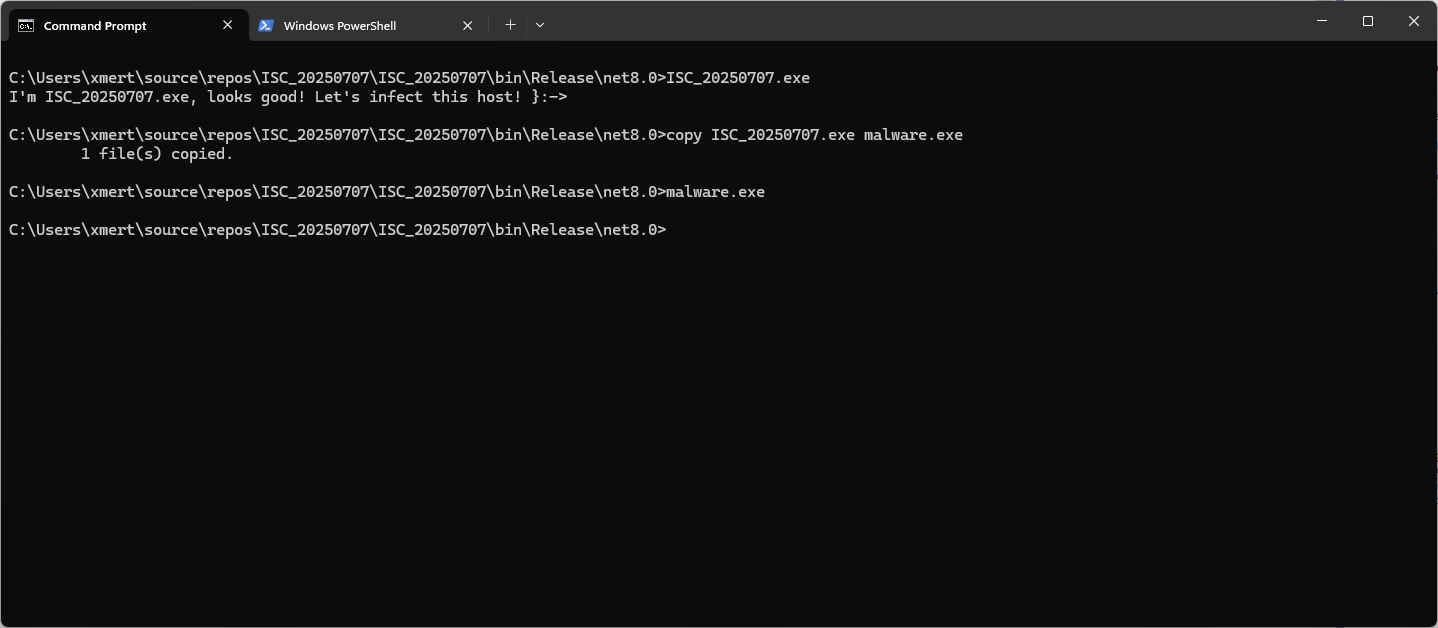

Let's compile and execute this file named "ISC_20250707.exe":

Once renamed to "malware.exe", the program just silently exits! Simple but effective!

Of course, this is a simple proof-of-concept. Real malware would implement more tests (the comparison above already ignores the case, but partial matches could be checked as well), and the list of suspicious filenames would be obfuscated (or a dynamic list would be loaded from a third-party website).
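To illustrate what an "obfuscated" list could look like, here is a minimal sketch. It is an assumption, not code taken from a real sample. The suspicious names are kept only as SHA-256 digests of their lower-cased form, so the plaintext strings would not appear in the binary. A second, hypothetical test flags basenames that look like an MD5, SHA-1 or SHA-256 digest, assuming an analysis pipeline that renames submissions after their hash. The names NameCheck, HashName, LooksLikeHash and IsSuspicious are illustrative, and the code assumes .NET 6 or later (SHA256.HashData, Convert.ToHexString, Environment.ProcessPath).

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Security.Cryptography;
using System.Text;

// Hypothetical helper class: hashed name list plus a "looks like a hash" check.
static class NameCheck
{
    // SHA-256 of the lower-cased file name, as an uppercase hex string.
    public static string HashName(string fileName)
    {
        byte[] digest = SHA256.HashData(Encoding.UTF8.GetBytes(fileName.ToLowerInvariant()));
        return Convert.ToHexString(digest);
    }

    // A real sample would ship only the precomputed hex digests here.
    // They are computed at startup so this sketch stays self-checking.
    static readonly HashSet<string> SuspiciousHashes = new(StringComparer.OrdinalIgnoreCase)
    {
        HashName("sample.exe"),
        HashName("malware.exe"),
        HashName("suspicious.exe"),
    };

    // Assumption: some pipelines rename samples to their MD5/SHA-1/SHA-256.
    // True if the name without extension is 32, 40 or 64 hex characters.
    public static bool LooksLikeHash(string fileName)
    {
        string stem = Path.GetFileNameWithoutExtension(fileName);
        if (stem.Length != 32 && stem.Length != 40 && stem.Length != 64)
            return false;
        foreach (char c in stem)
        {
            if (!Uri.IsHexDigit(c))
                return false;
        }
        return true;
    }

    // Combines both tests on the basename of a full path.
    public static bool IsSuspicious(string fullPath)
    {
        string name = Path.GetFileName(fullPath);
        return SuspiciousHashes.Contains(HashName(name)) || LooksLikeHash(name);
    }

    static void Main()
    {
        // Environment.ProcessPath requires .NET 6 or later.
        string self = Environment.ProcessPath ?? string.Empty;
        if (IsSuspicious(self))
            return; // Looks like an analysis copy: silently exit
        Console.WriteLine($"I'm {Path.GetFileName(self)}, looks good!");
    }
}
```

With this sketch, "MALWARE.EXE" and "d41d8cd98f00b204e9800998ecf8427e.exe" are both flagged, while "ISC_20250707.exe" is not. Hashing the lower-cased name keeps the comparison case-insensitive without storing the plaintext list.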

[1] https://learn.microsoft.com/en-us/windows/win32/api/libloaderapi/nf-libloaderapi-getmodulefilenamea

Xavier Mertens (@xme)

Xameco

Senior ISC Handler - Freelance Cyber Security Consultant
